Beyond OCR: How we get RAG-ready markdown from broken PDFs without burning tokens

How Mojar turns messy technical PDFs into page-level Markdown for trustworthy RAG, with benchmark results across native extraction, OCR, and selective VLM parsing.


When we started building Mojar, the idea was simple: an embeddable "Ask AI" chat for company websites. Our first target? Hardware manuals.

The goal was to let a user ask a question, get an answer, and see the exact page it came from. But before we could build the RAG pipeline, we had to solve ingestion: turning messy PDFs into clean text with page references.

I thought this would be the easy part. It was 2025. We had AI that could reason, code and solve mathematical problems. Surely somebody had solved PDF parsing well enough that we could just plug in a library.

I couldn't have been more wrong.

PDFs are not semantic documents. A PDF is a frozen rendering recipe. It knows where marks go on a page, but not what those marks mean. Sometimes the text layer is clean. Sometimes the page is just a scanned image. Sometimes a table is visually obvious but semantically invisible.

If a parser returns an error, you can retry. But if it silently drops a crucial warning label, the retriever searches bad text, the model answers from bad context, and your RAG pipeline is dead on arrival.

How we measured parser quality

Before we could decide what to build, we needed a benchmark that matched the product problem. It was not enough to ask, "did the parser return text?" The harder question was whether the text was complete, in the right order, and useful enough for retrieval with page-level citations.

So we built a small internal benchmark.

The benchmark used 42 pages from production PDFs from a client. We had permission to benchmark the documents, but not to redistribute them, so the dataset is not public. These were the old, messy technical documents Mojar had to ingest.

For each page, we prepared Markdown ground truth by hand, then compared each parser's page-level Markdown output against it.

The scoring was simple: normalize Markdown into tokens, measure token F1, measure ordered token accuracy with edit distance, and use a stricter score that punishes both missing content and bad ordering. If a page has headings, heading recall can cap the score. We also tracked runtime, cost and LLM-routed pages (this will make sense later).

The strict score is the main number in this article. Token F1 catches whether the right words are present, including missing and extra words. Ordered accuracy catches sequence. Heading recall catches structural loss. The strict score takes the lowest of these metrics, so a parser cannot score 90% by throwing a jumbled pile of correct words onto the screen.
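A minimal sketch of how a strict score of this shape could be computed. This is not Mojar's actual scorer. The tokenizer regex, the normalization of edit distance by the longer sequence, and the rule that headings are lines starting with `#` and must match exactly are all assumptions made for illustration.

```python
import re
from collections import Counter
from typing import List, Optional


def tokenize(markdown: str) -> List[str]:
    """Lowercase the text and keep word-like tokens (assumed normalization)."""
    return re.findall(r"\w+", markdown.lower())


def token_f1(pred: List[str], truth: List[str]) -> float:
    """Bag-of-words F1: penalizes both missing and extra tokens."""
    if not pred or not truth:
        return float(pred == truth)
    overlap = sum((Counter(pred) & Counter(truth)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(truth)
    return 2 * precision * recall / (precision + recall)


def edit_distance(a: List[str], b: List[str]) -> int:
    """Token-level Levenshtein distance, computed row by row."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, 1):
        curr = [i]
        for j, y in enumerate(b, 1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (x != y)))
        prev = curr
    return prev[-1]


def ordered_accuracy(pred: List[str], truth: List[str]) -> float:
    """1.0 when the token sequences are identical, lower as order or content drifts."""
    longest = max(len(pred), len(truth))
    return 1.0 if longest == 0 else 1.0 - edit_distance(pred, truth) / longest


def headings(markdown: str) -> List[str]:
    """Normalized text of every line that starts with '#'."""
    return [" ".join(tokenize(line)) for line in markdown.splitlines()
            if line.lstrip().startswith("#")]


def heading_recall(pred_md: str, truth_md: str) -> Optional[float]:
    """Share of ground-truth headings found in the prediction; None if the page has none."""
    expected = headings(truth_md)
    if not expected:
        return None
    found = set(headings(pred_md))
    return sum(h in found for h in expected) / len(expected)


def strict_score(pred_md: str, truth_md: str) -> float:
    """Lowest of the component metrics, so one weak metric caps the page score."""
    pred, truth = tokenize(pred_md), tokenize(truth_md)
    scores = [token_f1(pred, truth), ordered_accuracy(pred, truth)]
    recall = heading_recall(pred_md, truth_md)
    if recall is not None:
        scores.append(recall)
    return min(scores)


truth_page = "# Safety\nDisconnect power before servicing."
jumbled_page = "# Safety\nservicing before power Disconnect."
print(token_f1(tokenize(jumbled_page), tokenize(truth_page)))
print(strict_score(jumbled_page, truth_page))
```

The jumbled page contains every correct word, so its token F1 is perfect, but the ordered accuracy drags the strict score down. That is the behavior the minimum is meant to enforce.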

This is not a universal PDF parser leaderboard. The benchmark was designed to answer a narrower question:

On the kind of manuals Mojar needed to parse, which strategy gave us the best text quality per dollar and per second?

The first mistake: trusting native text extraction

The first version of the parser was what most people would try first: use existing PDF text extraction libraries and compare the output.

We tried the usual suspects: pdfplumber and MarkItDown. On friendly PDFs, they were useful. Modern PDFs with clean selectable text often parsed quickly. For simple documents, the output was good enough to chunk, embed, and search.

Then we pointed them at older manuals.

Some scanned pages had no native text at all. Some two-column pages came back in the wrong order. Some tables collapsed into words that no longer meant anything. A few pages looked clean at a glance but had missing labels, broken units, or reordered instructions. And some pages were completely broken: unknown symbols, gibberish, each letter multiplied by 4 (lllliiiikkkkeeee tttthhhhiiiissss).

The worst part was that the output did not always look broken. It often looked almost right, and in a manual the small things are often the answer: a voltage range, a warning note, a model number, a unit after a number.

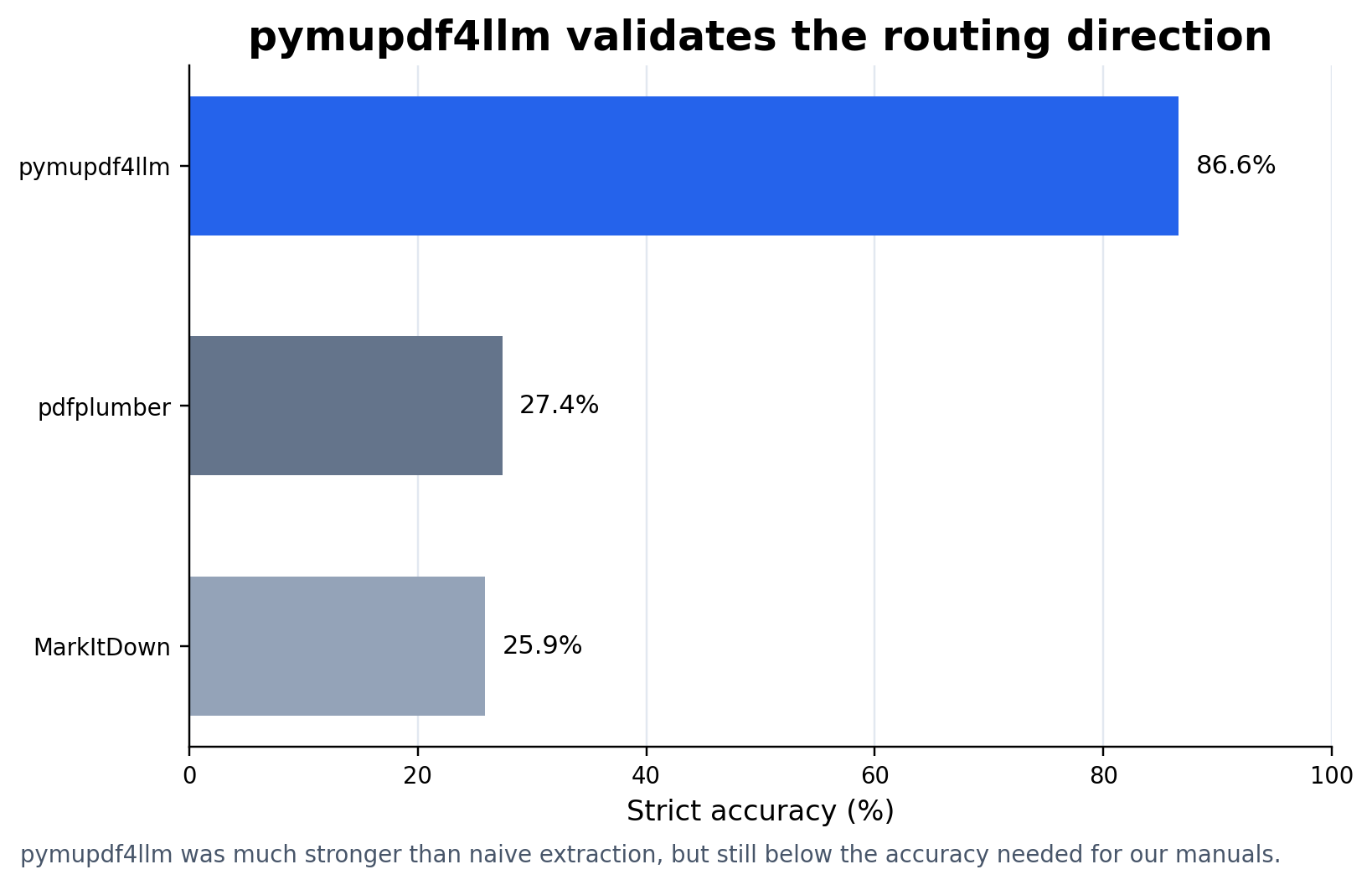

The benchmark results made the problem clearer:

| parser | strict accuracy | token F1 | runtime |
|---|---|---|---|
| pdfplumber | 27.4% | 37.5% | 54.3s |
| MarkItDown | 25.9% | 35.9% | 81.0s |

The scores were ugly. They matched what we saw by hand. These tools are useful on clean native PDFs but not the right solution here.

At that point, the next obvious move was OCR.

The second mistake: assuming OCR solves old PDFs

If native text extraction fails on old manuals, OCR feels like the obvious fix.

In a way, it is. A scanned page is an image. Tesseract can recover text the PDF text layer never had. For pages where native extraction gave us garbage, OCR made the benchmark feel less hopeless.

| parser | strict accuracy | token F1 | runtime |
|---|---|---|---|
| Tesseract | 90.3% | 92.5% | 577.2s |

That was a real jump. Tesseract recovered far more of the words we needed.

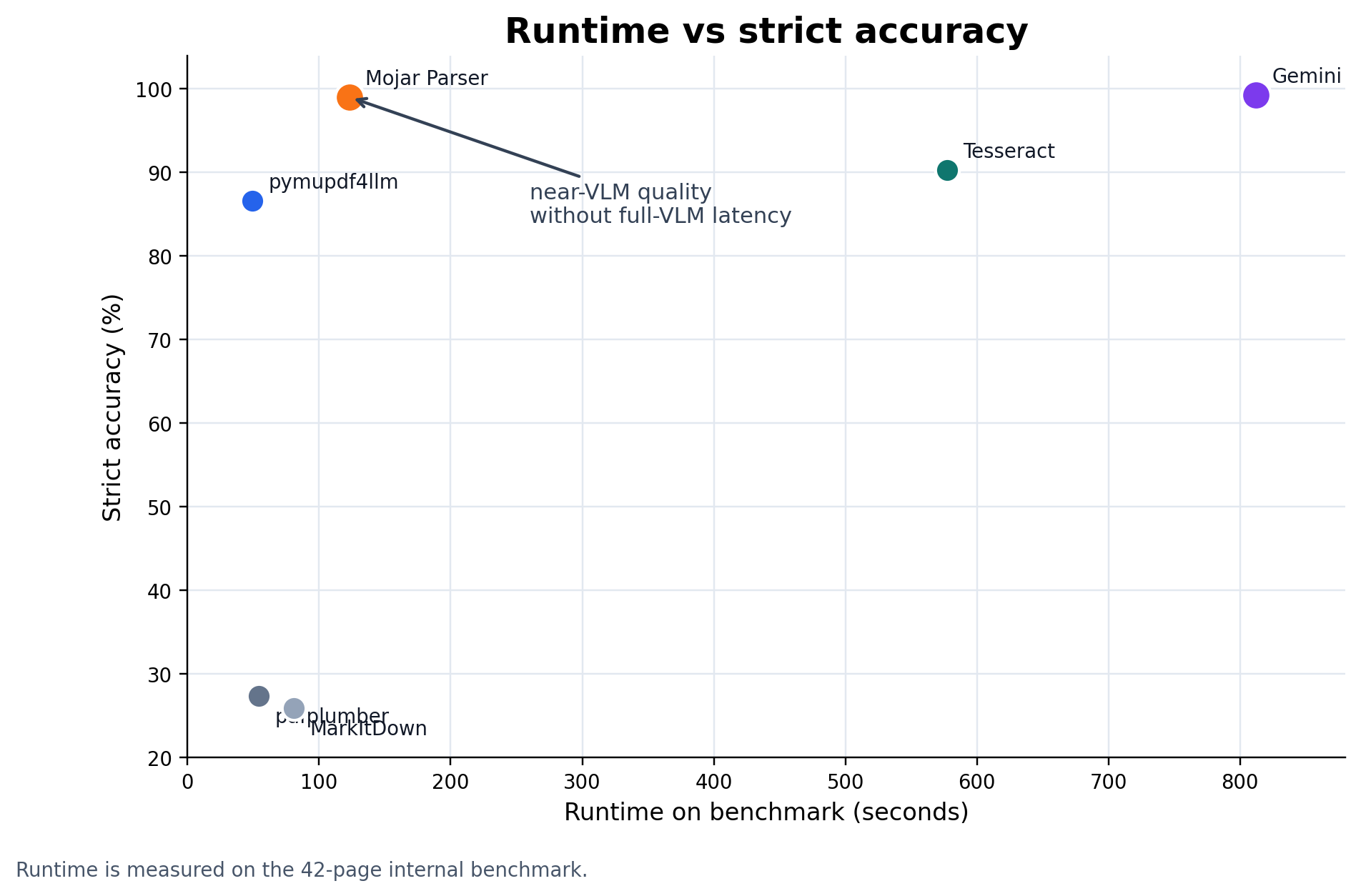

But OCR did not solve the whole problem.

It was slow. On this 42-page sample, Tesseract took 577.2 seconds, or about 13.7 seconds per page. That may work for a small offline batch, but it gets painful when ingestion needs to feel responsive. It also made RAG-relevant mistakes: flattened tables, split headings, misread units, labels in the wrong order, and occasional gibberish.

OCR moved us from "this is unusable" to "this is close enough to be tempting." It also created a routing problem. OCR was useful on hard pages and wasteful on easy ones. A clean PDF should not wait for an OCR pipeline. A scanned manual probably should.

So the next idea was not "use OCR everywhere." It was "figure out which pages deserve the expensive path."

The third mistake: using vision models for everything

The next expensive path was a vision language model (VLM).

Instead of trusting the PDF text layer or an OCR pass, we rendered each page as an image and asked Gemini to extract the content. The model sees what a person sees: headings, labels, diagrams, captions, tables, and visual relationships.

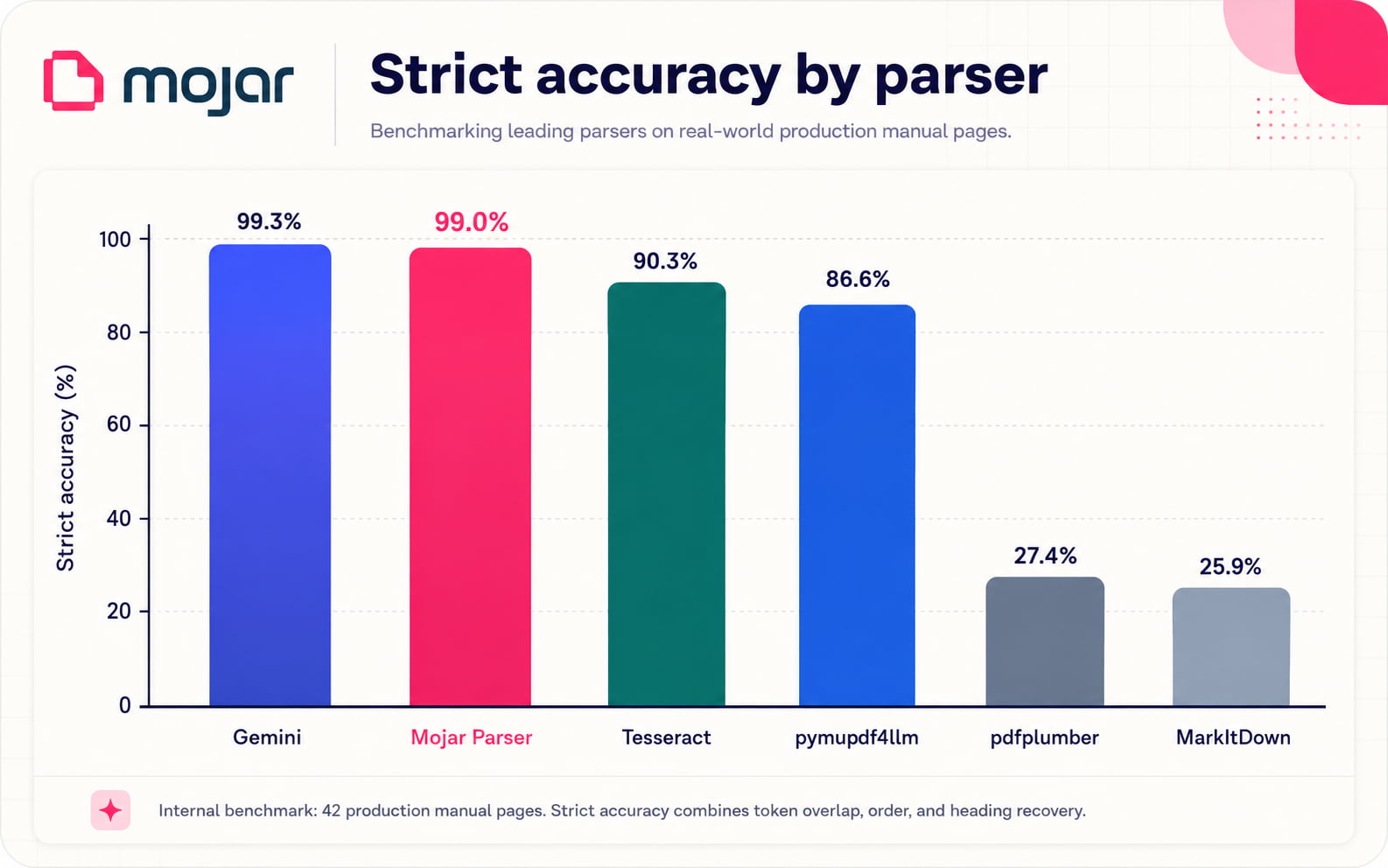

On the benchmark, this worked almost exactly how we hoped:

| parser | strict accuracy | token F1 | runtime | cost |
|---|---|---|---|---|
| Gemini | 99.3% | 99.9% | 812.1s | $0.129 |

For the pages in this benchmark, full VLM parsing was extremely accurate. If the only goal was "parse these 42 pages as accurately as possible," this was the answer.

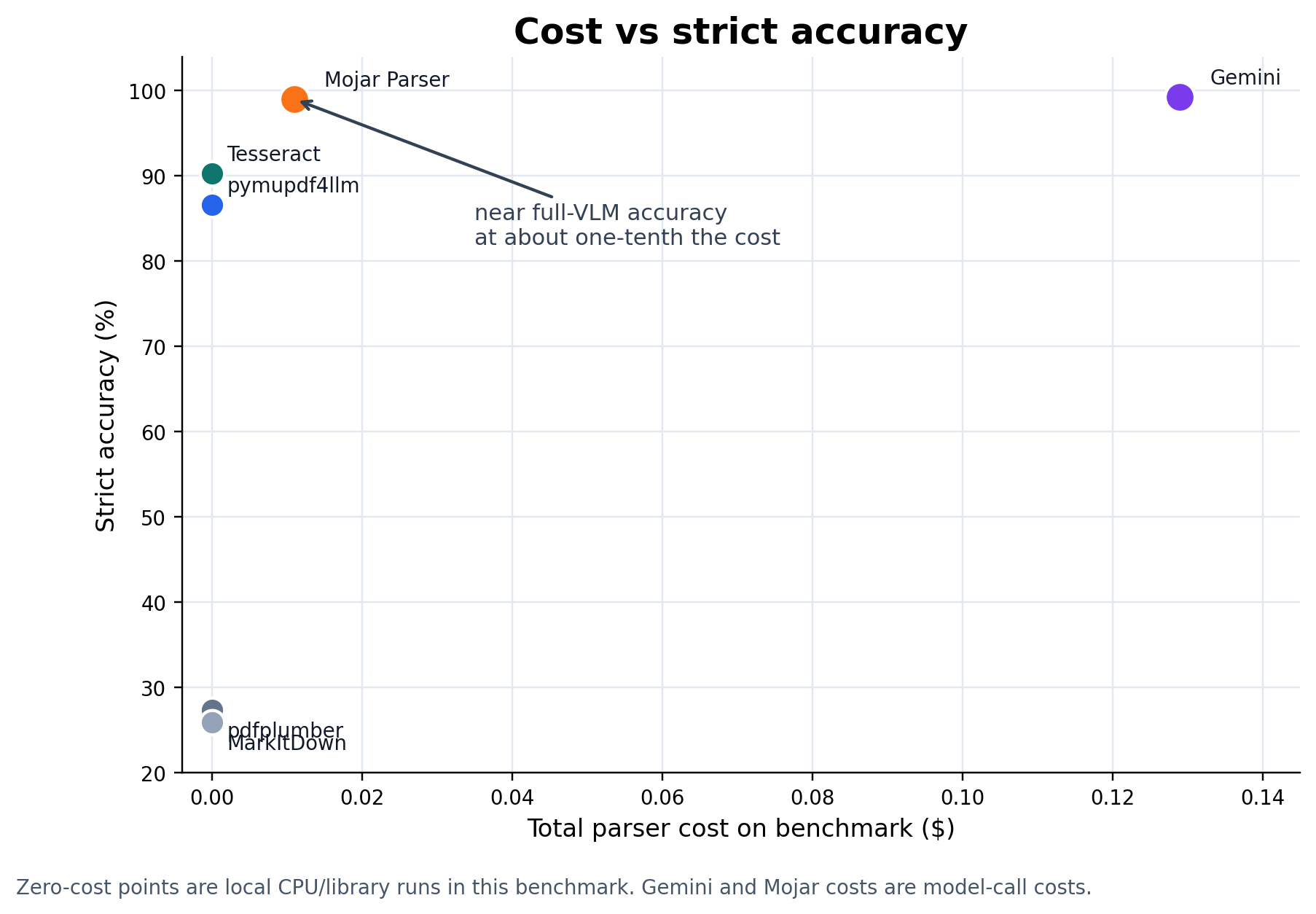

But the problem with this approach is that every page becomes a billable model call, and every easy page pays the same tax as a hard page.

Many PDFs have a usable text layer. Sending all of them to a VLM is like calling a senior engineer to rename a file. It works, but it is overkill.

The useful lesson was not "replace the parser with Gemini." It was that vision models are excellent fallback parsers, best used when cheaper extraction cannot be trusted.

The routing hypothesis

The parser we wanted was not a single extractor. It was a decision system. Most pages should go through the cheapest path that can be trusted. Hard pages should escalate.

At a high level:

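PDF page

-> fast text/layout extraction

-> quality checks (pass: keep this output)

-> OCR escalation (only if the checks fail)

-> quality checks (pass: keep the OCR output)

-> VLM escalation (only if the OCR checks fail)

-> Markdown + page metadata

-> RAG ingestion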

The first pass should be fast. In the current architecture, that means starting with a practical text/layout extraction layer instead of rendering every page for a model. A tool like pymupdf4llm fits because it handles many normal PDFs well and gives us Markdown-shaped output.

But the first pass is not trusted blindly.

The router looks for weak output: too little text, strange character patterns, bad ordering, missing structure, low heading recovery, or flattened tables.
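A rough sketch of what two of these checks (too little text and strange character patterns) might look like. The function name, the issue labels, and every threshold here are hypothetical and chosen for illustration; real thresholds would need tuning against labeled pages. Checks for ordering, headings, and tables are left out.

```python
import re
import unicodedata
from typing import List

# Four identical letters in a row, as in "lllliiiikkkkeeee".
REPEATED_RUN = re.compile(r"([A-Za-z])\1{3}")


def quality_issues(text: str, min_chars: int = 200,
                   max_junk_ratio: float = 0.02) -> List[str]:
    """Return the reasons a page's extracted text looks untrustworthy (empty list = OK)."""
    issues = []
    stripped = text.strip()

    # Scanned pages often come back with little or no native text.
    if len(stripped) < min_chars:
        issues.append("too_little_text")

    # Replacement characters and control/private-use code points suggest a broken text layer.
    junk = sum(
        1 for ch in stripped
        if ch == "\ufffd"
        or (unicodedata.category(ch).startswith("C") and ch not in "\n\r\t")
    )
    if stripped and junk / len(stripped) > max_junk_ratio:
        issues.append("strange_characters")

    # Several runs of quadrupled letters are very unlikely in real prose.
    if len(REPEATED_RUN.findall(stripped)) >= 3:
        issues.append("repeated_letters")

    return issues


print(quality_issues("lllliiiikkkkeeee tttthhhhiiiissss", min_chars=10))
print(quality_issues("Input voltage: 100-240 V AC, 50/60 Hz. " * 10))
```

Returning a list of reasons rather than a single boolean makes it easier to log why a page was escalated.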

When the cheap path looks good enough, we keep it. When it does not, we escalate the page to OCR. When the OCR output is still sub-par, we escalate to a VLM.

Routing also has to happen per page, not per document. Manuals are mixed. One page may be a clean table of contents. The next may be a scanned wiring diagram. Per-page routing lets the parser treat each page honestly.
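The per-page escalation can be sketched as a small cascade. This is an illustration under stated assumptions, not the production router: the stage names, the `PageResult` type, the stand-in extractors, and the length-based trust check are all hypothetical. In a real system each stage would wrap an actual extractor, OCR engine, or model call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Extractor = Callable[[int], str]  # page number -> Markdown
Stage = Tuple[str, Extractor]     # (route name, extractor), cheapest first


@dataclass
class PageResult:
    page: int
    route: str
    markdown: str


def route_page(page: int, stages: List[Stage],
               looks_trustworthy: Callable[[str], bool]) -> PageResult:
    """Try stages from cheapest to most expensive and keep the first trusted output."""
    for name, extract in stages[:-1]:
        markdown = extract(page)
        if looks_trustworthy(markdown):
            return PageResult(page, name, markdown)
    # The last stage is the final fallback, so its output is accepted as-is.
    name, extract = stages[-1]
    return PageResult(page, name, extract(page))


# Stand-in outputs for a three-page manual (hypothetical data).
fast_output = {1: "# Contents\n1. Safety\n2. Wiring", 2: "", 3: ""}
ocr_output = {2: "# Safety\nDisconnect power before servicing.", 3: "W1 ?? ~~"}

stages: List[Stage] = [
    ("fast", lambda p: fast_output.get(p, "")),
    ("ocr", lambda p: ocr_output.get(p, "")),
    ("vlm", lambda p: f"# Page {p}\n(transcribed by the model)"),
]

results = [route_page(p, stages, lambda md: len(md) >= 20) for p in (1, 2, 3)]
for r in results:
    print(r.page, r.route)
```

Because the decision is made inside `route_page`, a single document can end up with a mix of routes, and only the pages that fail both cheaper checks ever reach the billable stage.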

The goal was to make every VLM call earn its place, not to avoid them entirely.

pymupdf4llm was the proof that routing already works

pymupdf4llm already validates this idea on a smaller scale. It handles straightforward text extraction, and when a page needs OCR, it can route into that path instead of treating every PDF page the same way. That is the shape we wanted: start cheap, escalate when needed.

On our benchmark, pymupdf4llm reached 86.6% strict accuracy and 90.0% token F1 in 49.5 seconds. That was much better than the naive extraction baselines, and fast enough to be attractive as a first pass.

But it still missed too much for the manuals we cared about. For cited RAG over technical documents, the last few percentage points matter. A missing warning note, unit, table row, or diagram label can be the difference between a useful answer and a confident wrong one.

So the idea was not to replace pymupdf4llm's routing. It was to extend the same idea one level further: use a strong cheap parser first, then add a VLM route for pages where that output is not trustworthy enough.

Near-VLM quality without full-VLM cost

Once we tested selective routing, the benchmark finally matched the intuition.

Full VLM parsing was still the quality ceiling. But selective routing got very close while using far fewer model calls.

| parser | strict accuracy | token F1 | ordered accuracy | heading recall | runtime | cost | LLM pages |
|---|---|---|---|---|---|---|---|
| Gemini | 99.3% | 99.9% | 99.8% | 99.4% | 812.1s | $0.129 | 42 |
| Mojar Parser | 99.0% | 99.7% | 99.6% | 99.2% | 123.3s | $0.011 | 9 |

On this benchmark, Mojar was within 0.3 percentage points of full VLM strict accuracy, while routing only 9 of 42 pages to the LLM.

That changed the cost and runtime profile. Full VLM parsing cost $0.129 on the sample. Selective routing cost $0.011, about 11.7x cheaper.

Full VLM parsing took 812.1 seconds. Selective routing took 123.3 seconds, about 6.6x faster.
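As a sanity check, the ratios can be recomputed from the table. The inputs below are the rounded figures as published, so the results may differ slightly from ratios computed on unrounded measurements. The variable names are only for illustration.

```python
# Figures copied from the benchmark table (already rounded).
PAGES = 42
full_vlm = {"cost_usd": 0.129, "runtime_s": 812.1, "llm_pages": 42}
routed = {"cost_usd": 0.011, "runtime_s": 123.3, "llm_pages": 9}

cost_ratio = full_vlm["cost_usd"] / routed["cost_usd"]
speedup = full_vlm["runtime_s"] / routed["runtime_s"]
llm_share = routed["llm_pages"] / PAGES

print(f"cost ratio: {cost_ratio:.1f}x")
print(f"speedup: {speedup:.1f}x")
print(f"pages sent to the LLM: {llm_share:.0%}")
```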

I am not going to pretend 42 pages is enough to declare victory over every PDF on earth. But the benchmark showed the routing architecture doing what it is supposed to do: easy pages passed through the cheap path, layout disasters escalated to the VLM, the final extraction landed within 0.3 points of the VLM quality ceiling, and the total API bill dropped by an order of magnitude.

This is the parser architecture we should have started with. VLMs are very good at this. But "very good" is not the same as "should run on every page."

What this benchmark does not prove

The dataset is small. Forty-two pages is enough to catch real failure modes, but not enough to make universal claims about PDF parsing. The documents are also private, so readers cannot rerun the exact test.

Parser benchmarks are sensitive to the document mix. Clean academic papers reward different behavior than scanned maintenance manuals. Invoices are different from datasheets. Born-digital PDFs are different from old photocopied manuals.

So the supported claim is narrow: on the messy technical manuals we initially built Mojar for, selective VLM routing got us close to full-VLM quality at a much lower cost and runtime.

Scoring text extraction is also imperfect. Token F1 and ordered accuracy are useful, but they do not understand every semantic detail. That is why we also checked page-level failures by hand.

One caveat: we initially tried a massive public benchmark, but aborted the run. We realized we were burning our compute budget on a dataset that was heavily rewarding handling of complex math formulas and LaTeX. Since we hadn't built specialized math handling yet, and our clients didn't need it, we decided to stop chasing the academic leaderboard and focus on benchmarking the messy documents that actually matter to our system.

What we learned

We started this project thinking PDF parsing was the boring ingestion step before the real AI work. It was not. The model's answer was only as trustworthy as the text we gave it.

Native PDF extraction is useful, but too fragile for messy manuals on its own. OCR helps, but it is slow and still loses structure. VLMs are excellent at hard pages, but too expensive and slow to use blindly.

The final lesson was the one that shaped the parser:

PDF parsing for RAG is a routing problem.

Use the cheap path when it is good enough. Escalate when the page gives you a reason. Track which route each page took. Return text with page metadata so the RAG pipeline can cite sources instead of guessing.
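One way to carry that metadata into the RAG pipeline is to attach the page number and route to every chunk. The sketch below is an assumption about the shape of such records, not Mojar's schema: the `ParsedPage` type, the field names, the blank-line chunking, and the file name `manual.pdf` are all hypothetical.

```python
import json
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ParsedPage:
    document: str
    page: int
    route: str  # which path produced the text, e.g. "fast", "ocr", "vlm"
    markdown: str


def to_chunks(parsed: ParsedPage) -> List[Dict]:
    """Split a page on blank lines; chunks never cross a page boundary."""
    paragraphs = [p.strip() for p in parsed.markdown.split("\n\n") if p.strip()]
    return [
        {"document": parsed.document, "page": parsed.page,
         "route": parsed.route, "chunk": i, "text": text}
        for i, text in enumerate(paragraphs)
    ]


pages = [
    ParsedPage("manual.pdf", 12, "fast", "# Wiring\n\nConnect L1 to terminal 3."),
    ParsedPage("manual.pdf", 13, "vlm", "Torque: 4.5 Nm"),
]

chunks = [chunk for page in pages for chunk in to_chunks(page)]
route_counts = Counter(page.route for page in pages)

for chunk in chunks:
    print(json.dumps(chunk))
print(dict(route_counts))
```

Keeping chunks inside page boundaries means a retrieved chunk always maps to exactly one page to cite, and the route counts give a quick view of how often the expensive path is used.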

The final system treats intelligence as a managed resource. By defaulting to fast extraction and treating the VLM as an escalation path, we get the quality we need at a fraction of the cost. It also leaves room to grow: when a new kind of parsing failure shows up, we can add a quality check that routes those pages to a stronger path.