Agentic AI has a live knowledge problem

66% of enterprises say real-time data is non-negotiable for trusted AI agents. The actual gap is governed, fresh, auditable knowledge infrastructure.

The report landed this week; the problem is months old

Denodo released its AI Trust Gap Report on April 15, and the headline figure is circulating widely: 66% of organizations say AI data must be accessed in real time to be considered trustworthy (Denodo via GlobeNewswire). A finding that barely made the coverage: 63% say finding relevant data within specific business contexts is a primary deployment barrier. And 67% struggle to maintain consistent security and access controls across the systems their AI touches (same source).

These numbers are striking, but they describe something enterprises have been running into quietly for the better part of a year. As organizations pushed past the chatbot phase and started building agents that actually take actions, the stakes changed fundamentally.

What changed when agents replaced chatbots

Early enterprise AI was mostly retrieval. Ask a question, get an answer. The response might be wrong, but the cost was bounded: a frustrated employee, a confused customer, maybe a corrected support ticket.

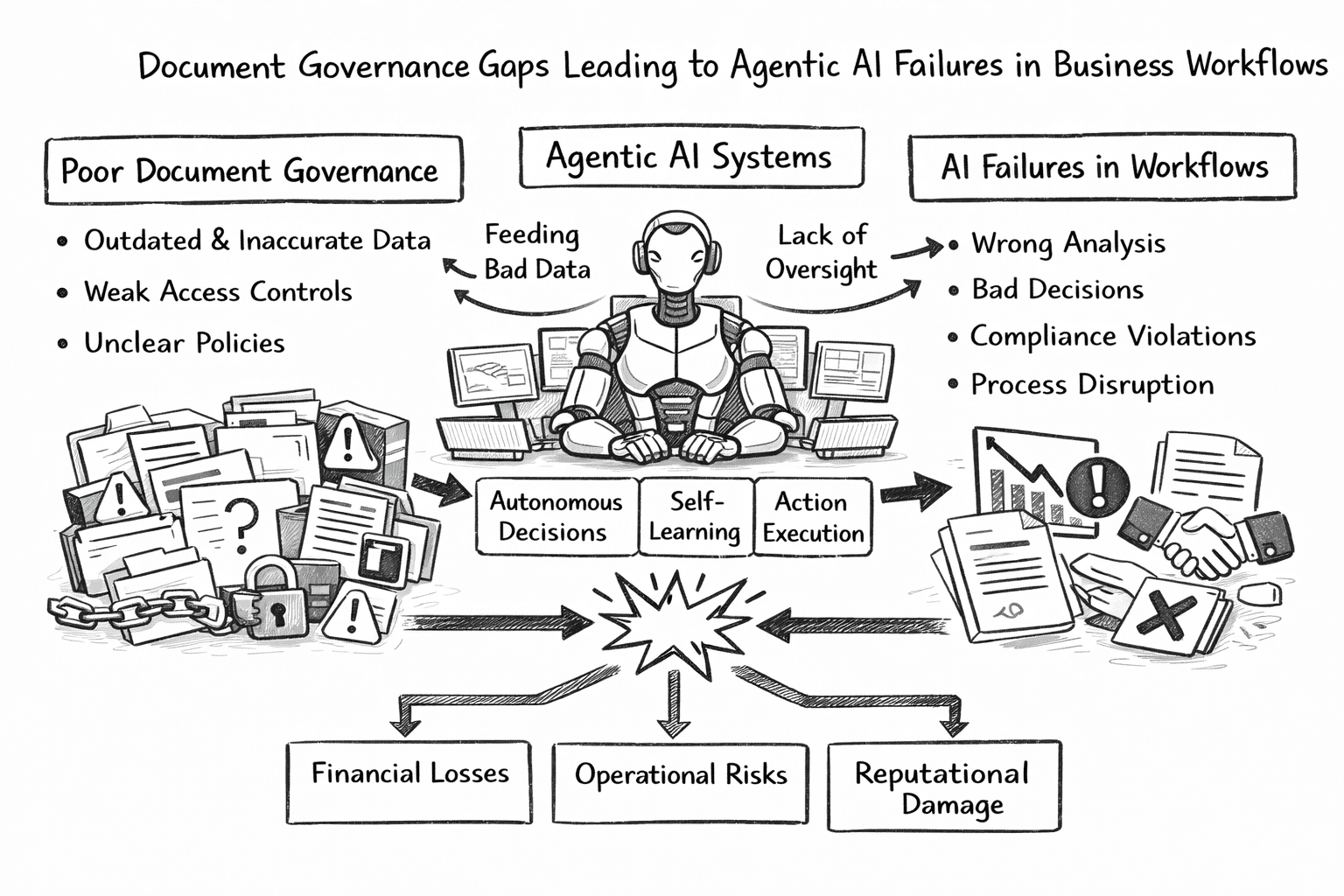

Agents work differently. They route. They approve. They update records, trigger downstream processes, file forms, and query systems of record on behalf of the workflows they serve. When an agent acts on bad information, the failure is a wrong action that may have already touched something that matters, not just a wrong answer sitting in a chat window.

We saw this firsthand when we deployed Mojar with an enterprise insurance client. Their claims-processing agent pulled a coverage document that had been superseded two weeks earlier. The agent approved a claim under outdated terms. A human caught it before payment, but the near-miss made the risk concrete: the agent did exactly what it was told, and what it was told was no longer true.

Chatbot failures vs. agent failures

The difference is structural. A comparison makes the risk profile clearer:

| | Chatbot failure | Agent failure |
|---|---|---|
| What happens | Wrong answer displayed | Wrong action executed |
| Blast radius | One user sees bad info | Downstream systems affected |
| Recovery | User searches again | May require reversal, audit, or reporting |
| Staleness tolerance | Minutes to hours | Near zero for action-critical knowledge |
| Example | "Your deductible is $500" (it's $750) | Claim approved under wrong coverage terms |

The Denodo data confirms what we've seen across deployments: agentic AI, operating at machine speed inside business workflows, cannot tolerate the casual staleness that a human worker would catch before acting.

A person looks at the policy, thinks "wait, I saw an update email last month," and pauses. The agent reads what's there and proceeds.

Real-time is a business risk statement, not a technical spec

"Real-time data" sounds like an infrastructure requirement. In practice it is shorthand for something more specific: freshness matched to business risk.

When an insurance agent processes a claim, it needs current coverage terms, not last quarter's. When a procurement agent checks supplier compliance, it needs the rules in force today. When an HR agent answers a question about benefit eligibility, the answer depends on whether the employee's status has changed since the knowledge base was last touched.

None of these require live streaming data in the strict technical sense. What they require is knowledge that keeps pace with the reality it describes, and an explicit accounting of the cost of acting on something outdated.

Informatica's CDO Insights 2026 report reinforces this: 57% of data leaders cite data reliability as a top barrier to AI deployment, and half say data quality and retrieval are the biggest challenges for agentic AI specifically (Informatica). Three quarters say governance has not kept pace with AI adoption.

The models are ready. The knowledge they act on is the bottleneck.

How freshness requirements vary by use case

We recommend mapping freshness tolerance to the business risk of each agent workflow. In our experience, the following framework helps teams prioritize:

| Knowledge type | Example | Acceptable staleness | Risk if outdated |
|---|---|---|---|
| Regulatory/compliance | HIPAA policies, financial regulations | Same-day | Compliance violation, audit failure |
| Pricing/contracts | Rate cards, SLAs, coverage terms | Same-day | Revenue leakage, legal exposure |
| Product specs | Feature docs, API references | Weekly | Support errors, misquoted capabilities |
| Training materials | Onboarding guides, SOPs | Monthly | Inconsistent execution, slower ramp |
| Market context | Competitor positioning, industry trends | Quarterly | Strategic drift, not operational failure |

The principle: knowledge currency should match the pace at which the underlying reality changes, weighted by the cost of acting on something outdated.
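
To make the mapping concrete, here is a minimal Python sketch that treats the staleness thresholds as data and checks a document against them. The category keys, the `MAX_STALENESS` table, and the `is_stale` function are illustrative names we made up for this example, and "same-day" is approximated as 24 hours; your own thresholds will differ.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds mirroring the framework above; tune per organization.
# "Same-day" is approximated as 24 hours for simplicity.
MAX_STALENESS = {
    "regulatory": timedelta(days=1),
    "pricing": timedelta(days=1),
    "product_specs": timedelta(weeks=1),
    "training": timedelta(days=30),
    "market_context": timedelta(days=90),
}


def is_stale(category: str, last_verified: datetime, now: datetime) -> bool:
    """Return True when a document has outlived its category's threshold."""
    return now - last_verified > MAX_STALENESS[category]


now = datetime(2025, 6, 10, 12, 0)
last_verified = datetime(2025, 6, 8, 9, 0)  # a little over two days ago

print(is_stale("pricing", last_verified, now))        # True
print(is_stale("product_specs", last_verified, now))  # False
```

The same document age is a problem for pricing and acceptable for product specs, which is the point: the threshold belongs to the knowledge category, not to the storage system.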

Governed access matters as much as availability

There is a temptation to frame this as a data pipeline problem: connect more sources, give agents what they need. The Denodo findings push back on that.

67% of enterprises struggle to maintain consistent security and access controls across systems (Denodo). The core issue is not that data is unreachable; it is that access is not governed in a way that is safe to deploy at scale.

An agent with broad data access risks more than acting on bad information. It risks exposing information it should never have surfaced, mixing data from sources with different permission levels, or producing responses that could not survive an audit because the retrieval path cannot be reconstructed.

Our approach at Mojar is built around permission-aware retrieval: every document carries its access scope, and every retrieval is logged with full provenance. When we built this, the design goal was simple: if an auditor asks "what did the agent see before it made that decision," the answer should take seconds, not days. We have seen clients in healthcare and financial services treat this as a deployment prerequisite, not a nice-to-have.

Governed access means an agent operates only on information it is authorized to use, with a traceable record of what it saw and when. For regulated industries this is table stakes. For any production deployment where someone might someday have to explain what the agent knew and why it acted, it is the minimum standard. We have covered in depth why knowledge quality is an execution risk.

Business context is the real bottleneck

The 63% who cannot find data in business context are pointing at something more specific than poor search.

Business context means the agent knows the customer it is helping is on a trial tier, not the standard plan. It means the pricing it retrieves is for the European market, not the US market. It means the SOP it surfaces applies to the specific product line in question, not the general one.

You can have fresh, accessible data and still fail this test completely. According to the Denodo report, the average enterprise AI initiative now pulls from over 400 data sources, with 20% of organizations managing more than 1,000. More sources do not automatically produce more context. They produce more surface area for retrieval to go wrong.

This is a knowledge architecture problem, not a volume problem. Agents need knowledge that is scoped, attributed, and structured around how the business actually operates, not raw data made queryable.

What "contextual retrieval" looks like in practice

When we tested Mojar's retrieval system with enterprise sales teams, we found that unscoped retrieval returned the correct document only 40-60% of the time. Adding business context metadata (customer tier, region, product line) to the knowledge base pushed accuracy above 90%.

The steps that made the difference:

- Tag every document with business metadata at ingestion: customer segment, region, product line, effective date

- Scope retrieval queries to include the agent's current context, not just the user's question

- Weight recency so the most current version of a document surfaces first when multiple versions exist

- Log retrieval paths so teams can audit which document the agent used and why

Without these steps, adding more data sources makes retrieval worse, not better. The agent has more to search through and less ability to distinguish what is relevant to this specific situation.
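
The first three steps above can be sketched in a few lines of Python. This is a simplified illustration, not a description of any production system: the `Doc` fields, `scoped_candidates`, and `current_versions` are hypothetical names, and "weight recency" is reduced here to the simplest possible rule, keeping only the latest effective date per title.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Doc:
    # Business metadata attached at ingestion (hypothetical field names).
    doc_id: str
    title: str
    region: str
    tier: str
    product_line: str
    effective: date


def scoped_candidates(docs, context):
    """Keep documents whose metadata matches every key in the agent's context."""
    return [
        d for d in docs
        if all(getattr(d, key) == value for key, value in context.items())
    ]


def current_versions(docs):
    """For each title, keep only the version with the latest effective date."""
    latest = {}
    for d in docs:
        if d.title not in latest or d.effective > latest[d.title].effective:
            latest[d.title] = d
    return list(latest.values())


docs = [
    Doc("p-1", "Pricing", "EU", "trial", "core", date(2024, 1, 1)),
    Doc("p-2", "Pricing", "EU", "trial", "core", date(2025, 3, 1)),
    Doc("p-3", "Pricing", "US", "trial", "core", date(2025, 4, 1)),
]
context = {"region": "EU", "tier": "trial", "product_line": "core"}

hits = current_versions(scoped_candidates(docs, context))
print([d.doc_id for d in hits])  # ['p-2']
```

Note that the newest document overall (`p-3`) is the wrong answer for this agent, because it belongs to a different region. Scoping has to happen before recency is considered.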

Where agent failures actually originate

The cleanest version of this problem involves structured data: databases, CRMs, ERPs. The messier version, where most enterprises actually live, is unstructured content.

Policy documents. Standard operating procedures. Compliance manuals. Training materials. Product specs. Contracts. Support documentation accumulated over years across departments that do not talk to each other. Agent failures are most likely to originate here, not because a database row is stale, but because the PDF from three regulatory updates ago is still what the agent finds first.

AI readiness is really knowledge base readiness, and for most enterprises, the bottleneck is the unstructured document estate: files with no version control, conflicting instructions across documents nobody has audited, knowledge that was never designed to be queried by a machine.

We have seen this pattern across every organization we have deployed with. The typical enterprise document estate includes:

- Duplicate documents with conflicting information (3-5 versions of the same policy is common)

- Outdated files that nobody owns or maintains but that search still surfaces

- Format silos where the same information lives in PDFs, SharePoint pages, Confluence wikis, and email threads

- Permission gaps where sensitive documents are accessible to agents that serve frontline employees

When agents start working inside those environments, freshness failures become action failures fast.
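
A first pass at spotting two of these problems can be quite small. The sketch below assumes each file has already been extracted into a dictionary with `path`, `title`, `content`, and `owner` keys (a hypothetical shape), and it groups documents by normalized title, which is a crude heuristic that a real audit would refine.

```python
import hashlib
from collections import defaultdict


def audit_estate(files):
    """Flag titles with conflicting content and files that nobody owns.

    files: iterable of dicts with 'path', 'title', 'content', 'owner' keys.
    """
    versions = defaultdict(set)
    unowned = []
    for f in files:
        digest = hashlib.sha256(f["content"].encode("utf-8")).hexdigest()
        # Group by normalized title; differing hashes mean differing content.
        versions[f["title"].strip().lower()].add(digest)
        if not f.get("owner"):
            unowned.append(f["path"])
    conflicting = sorted(t for t, hashes in versions.items() if len(hashes) > 1)
    return {"conflicting_titles": conflicting, "unowned_paths": unowned}


files = [
    {"path": "hr/leave-2023.pdf", "title": "Leave Policy", "content": "20 days", "owner": "hr-ops"},
    {"path": "wiki/leave", "title": "leave policy ", "content": "25 days", "owner": None},
    {"path": "ops/sop.pdf", "title": "Returns SOP", "content": "Step 1", "owner": "ops"},
]

report = audit_estate(files)
print(report["conflicting_titles"])  # ['leave policy']
print(report["unowned_paths"])       # ['wiki/leave']
```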

What enterprise AI teams need to treat differently

The practical implication is to treat knowledge infrastructure with the same seriousness as model selection. Pausing agent deployments is not the answer; building the governed knowledge layer underneath them is.

Trusted agents need fresh knowledge, not just a capable model. Systems of record are necessary but not sufficient. The knowledge those systems contain has to be current, accessible to authorized users, and structured in ways agents can retrieve accurately with source attribution.

Three disciplines most teams are missing

Based on our deployments and the patterns in the Denodo and Informatica data, we recommend enterprise AI teams invest in three capabilities:

1. Knowledge freshness as a measurable metric

Go beyond "when was this last updated" to "what is the acceptable staleness for this specific knowledge, given the actions that depend on it." Assign freshness SLAs to each document category, and build alerts when documents exceed their staleness threshold. As agents become metered, autonomous workloads, the economics of acting on bad knowledge get meaningfully worse.

2. Permission-aware retrieval

Access controls that travel with the knowledge, not just with the system it lives in. An agent should not be able to surface information that the user querying it is not authorized to see. In our experience, this requires tagging permissions at the document level and enforcing them at retrieval time, not just at the application boundary.
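
As a rough illustration of enforcing permissions at retrieval time, the sketch below filters on each document's own access scope before any matching happens. The `Document` class, the `allowed_groups` field, and the substring match standing in for relevance ranking are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # access scope carried by the document itself


def retrieve(query, docs, user_groups):
    """Return matching documents the requesting user is authorized to see."""
    # Enforce permissions first, so unauthorized text never reaches ranking.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    # Placeholder relevance: case-insensitive substring match.
    return [d for d in visible if query.lower() in d.text.lower()]


docs = [
    Document("hr-1", "Salary bands for 2025", frozenset({"hr"})),
    Document("hb-1", "Salary review process for all staff", frozenset({"hr", "staff"})),
]

print([d.doc_id for d in retrieve("salary", docs, {"staff"})])  # ['hb-1']
print([d.doc_id for d in retrieve("salary", docs, {"hr"})])     # ['hr-1', 'hb-1']
```

The agent serving a frontline employee never sees the restricted document, even though it matches the query, because the filter is applied per document rather than at the application boundary.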

3. Auditability of what was retrieved

Trace not just what the agent decided, but what it read before deciding. When something goes wrong (and at scale, something will), teams need to answer: what did the agent know, and where did that knowledge come from? This is the difference between an incident you can diagnose in minutes and one that takes weeks to reconstruct.
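
A retrieval trail does not need to be elaborate to be useful. The following sketch keeps an in-memory, append-only record keyed by a decision identifier; the `RetrievalLog` class, its method names, and the sample identifiers are hypothetical, and a real deployment would write to durable, tamper-evident storage instead.

```python
import json
from datetime import datetime, timezone


class RetrievalLog:
    """Append-only, in-memory record of what an agent read before acting."""

    def __init__(self):
        self._entries = []

    def record(self, decision_id, query, doc_versions, when=None):
        """Store the query and the (doc_id, version) pairs it returned."""
        entry = {
            "decision_id": decision_id,
            "query": query,
            "documents": list(doc_versions),
            "retrieved_at": (when or datetime.now(timezone.utc)).isoformat(),
        }
        self._entries.append(entry)
        return entry

    def seen_before(self, decision_id):
        """Answer the auditor's question: what did the agent read for this decision?"""
        return [e for e in self._entries if e["decision_id"] == decision_id]


log = RetrievalLog()
log.record("claim-1042", "coverage terms water damage", [("policy-7", "v3")])
print(json.dumps(log.seen_before("claim-1042"), indent=2))
```

Recording the document version alongside the identifier matters: it is what lets a team tell whether the agent read a superseded file or the current one.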

A practical step-by-step approach

For teams starting to build governed knowledge infrastructure, we recommend this sequence:

1. Audit your document estate. Map every source your agents read from. Identify duplicates, outdated files, and permission gaps.

2. Classify by business risk. Use the freshness table above to assign staleness thresholds per document category.

3. Implement version control for documents. Ensure your retrieval system surfaces the current version and can show the history.

4. Tag permissions at the document level. Do not rely on application-layer access controls alone.

5. Build retrieval logging from day one. Every agent query should produce an audit trail showing which documents were retrieved.

6. Monitor and measure. Track freshness compliance, retrieval accuracy, and permission violations as operational metrics.

The trust decision happens at the knowledge layer

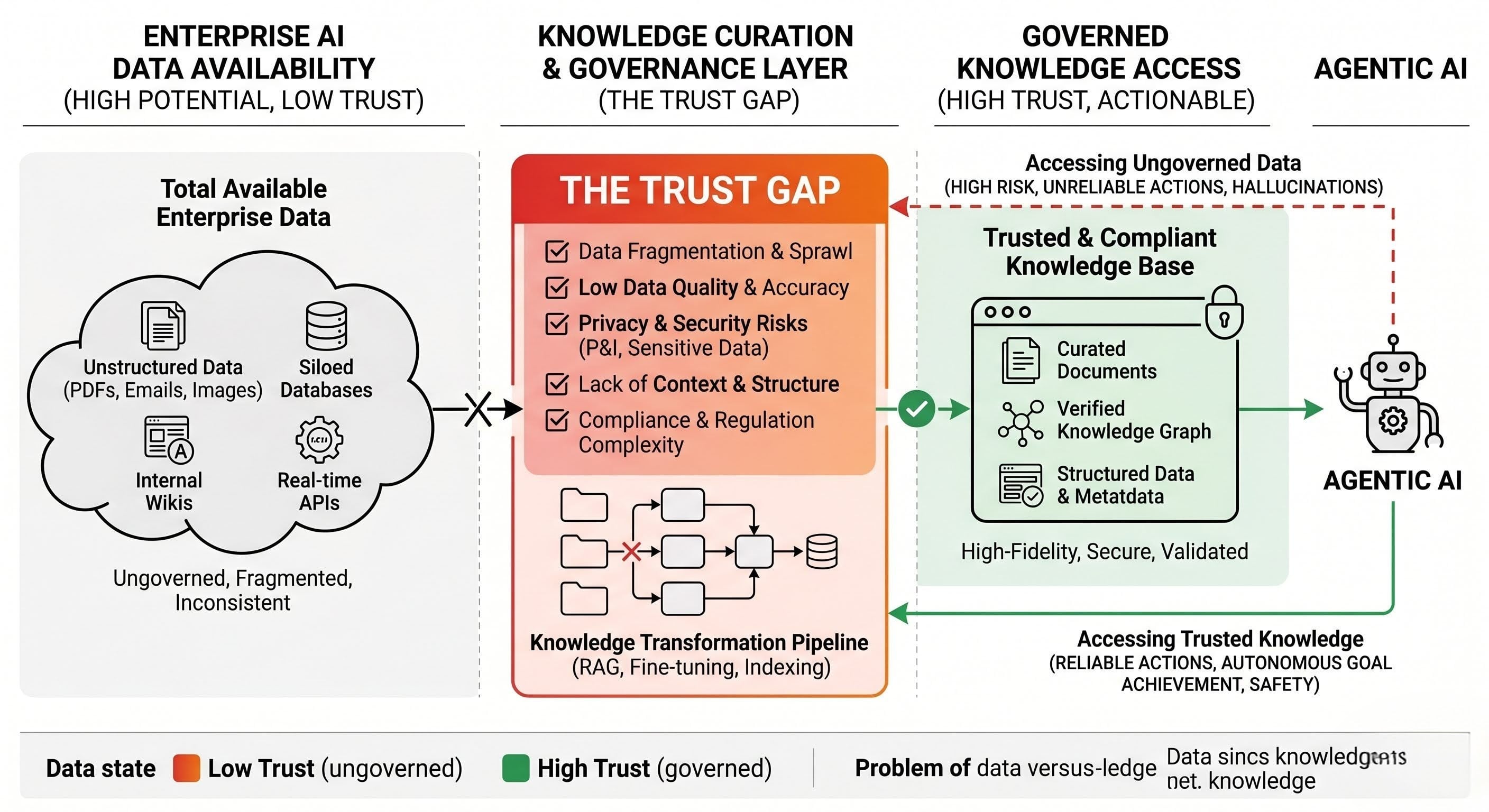

Denodo frames the trust gap as a data problem. That framing is close but incomplete. Data availability and pipeline engineering are part of the solution. The harder part is what happens between "the data exists somewhere" and "the agent can act on it safely."

The governed knowledge layer is the architecture that makes documents queryable, keeps knowledge current, preserves source attribution, scopes access by permission, and catches contradictions before agents act on them. This is where Mojar operates, specifically for the unstructured, document-heavy knowledge that structured data systems do not cover.

Systems of record tell agents what the record says. Governed knowledge systems tell agents what the organization actually knows, and whether that knowledge is still true.

That gap is what 66% of enterprises are trying to name. The ones who close it before their agents go to production are the ones who will not spend the next year explaining what went wrong.

What to watch next

The next round of enterprise AI buying conversations will center on specifics that barely appeared in the last round:

- Freshness SLAs for knowledge inputs

- Permission-aware retrieval architecture

- Provenance and audit trails for agent decisions

- Contradiction detection across document sets

- Measurable trust thresholds before production deployment

The market has moved past "does the AI work." The question now is whether it can be trusted to act.

If your team is building agentic workflows and the knowledge layer is still ungoverned, book a demo with Mojar to see how governed retrieval works in practice. Or try Mojar and connect your first knowledge source in under five minutes.

Frequently Asked Questions

How are agent failures different from chatbot failures?

Chatbots retrieve information and answer questions. Agents take actions: routing, approving, updating records, triggering downstream workflows. A stale answer is a failure you can explain. A stale action is a failure you may have to reverse, defend, or report. The consequences differ in kind, not just degree.

What is the difference between data availability and governed access?

Availability means an agent can technically reach data. Governed access means the agent only sees what it is authorized to see, with clear provenance, enforced permissions, and a traceable record of what information informed each decision. Most enterprise AI deployments have the first and are missing the second.

Does every agent use case need real-time data?

Not every use case requires live streaming data. A policy document that changes quarterly needs to reflect the current version, but not millisecond updates. The principle is that knowledge currency should match the pace at which the underlying reality changes, weighted by the cost of acting on something outdated.

How is governed knowledge different from a data pipeline?

A data pipeline makes data available. Governed knowledge ensures the agent only sees authorized information, retrieves the current version of a document, attributes every answer to a traceable source, and catches contradictions before the agent acts. Pipeline is plumbing; governed knowledge is policy plus architecture.