Why multi-agent AI needs a shared semantic context layer

Different agents operating from different business definitions create silent failures at scale. Microsoft's Fabric IQ points to the semantic infrastructure fix.


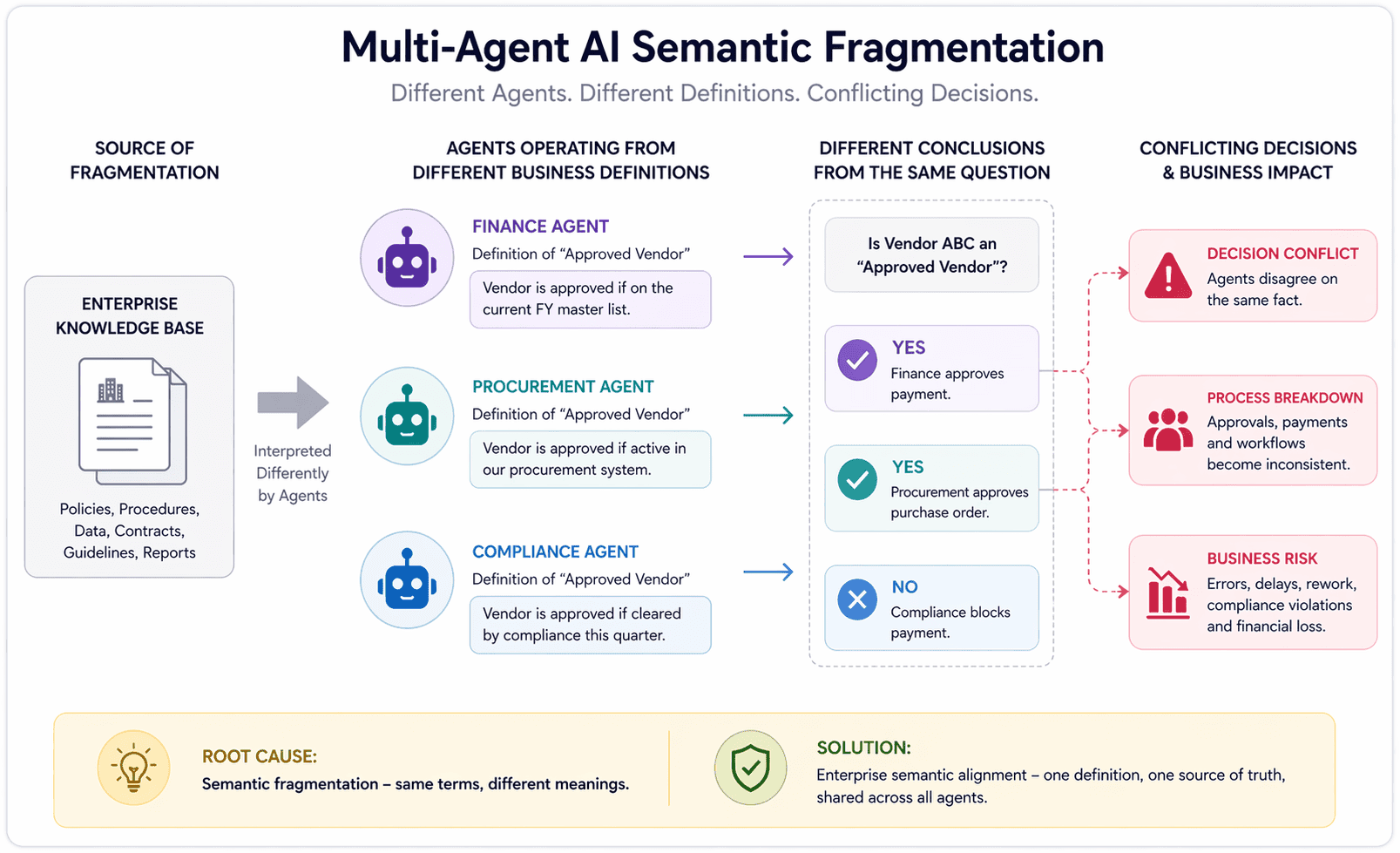

When agents disagree on what a customer is

Two agents. Same enterprise. One handling sales, one handling support. Both reference "the customer." They mean different things.

That's a semantic consistency problem, and it's becoming the dominant failure mode in multi-agent AI deployments. It isn't a prompt engineering issue or a model quality issue. It's a structural fragmentation issue that grows more expensive as enterprise agent estates scale.

Data engineers working with multi-agent systems in 2026 keep running into the same wall: agents built on different platforms, by different teams, each carry their own interpretation of how the business works. What counts as an active customer? How is a region defined? What business rules govern an escalation? When those definitions diverge across a fleet of agents, decisions break down in ways that are hard to trace and expensive to fix.
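To make the divergence concrete, here is a minimal sketch in which two agents apply different rules for "active customer" to the same records. The field names, the 90-day purchase window, and the open-ticket rule are all hypothetical, chosen only to illustrate the failure mode.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Customer:
    name: str
    last_purchase: date
    has_open_ticket: bool


TODAY = date(2026, 4, 1)  # fixed date so the example is reproducible

CUSTOMERS = [
    Customer("Acme", date(2026, 3, 20), False),
    Customer("Globex", date(2025, 11, 2), True),
    Customer("Initech", date(2025, 6, 15), False),
]


def sales_is_active(c: Customer) -> bool:
    # Hypothetical sales-agent rule: purchased within the last 90 days.
    return TODAY - c.last_purchase <= timedelta(days=90)


def support_is_active(c: Customer) -> bool:
    # Hypothetical support-agent rule: recent purchase OR an open ticket.
    return sales_is_active(c) or c.has_open_ticket


sales_active = {c.name for c in CUSTOMERS if sales_is_active(c)}
support_active = {c.name for c in CUSTOMERS if support_is_active(c)}

# Customers the two agents classify differently from identical inputs.
disputed = sales_active ^ support_active
print("sales:", sorted(sales_active))
print("support:", sorted(support_active))
print("disputed:", sorted(disputed))
```

Neither function is wrong on its own terms. The problem only appears when both answers feed the same downstream decision.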

The fragmentation problem at scale

The fragmentation compounds faster than most teams expect. A single definitional gap between two agents produces a handful of edge-case errors. Add a third agent with a different vendor source and a fourth built on a custom LLM API, and the number of potential inconsistencies grows combinatorially.
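One rough way to see the growth is to count the agent pairs that can disagree. With n agents there are n(n-1)/2 pairs, and each pair can diverge on every business term they both use. The sketch below computes that upper bound; the figure of five shared terms is an illustrative assumption, not a measurement.

```python
from math import comb


def potential_mismatches(agents: int, shared_terms: int) -> int:
    """Upper bound on (agent pair, term) combinations that can disagree."""
    return comb(agents, 2) * shared_terms


for n in (2, 3, 4, 12):
    pairs = comb(n, 2)
    checks = potential_mismatches(n, shared_terms=5)
    print(f"{n} agents: {pairs} pairs, up to {checks} pair-term mismatches")
```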

We've seen this pattern repeatedly when enterprises come to Mojar after deploying agents across multiple platforms. The symptoms are usually described as "agents giving contradictory answers" or "the AI is inconsistent," but the underlying cause is almost always the same: no shared reference for what the business entities mean. The agents aren't malfunctioning; they're operating from different premises.

Why this problem is surfacing now

First-wave agent infrastructure focused on two things: tool access and prompt quality. Can the agent call the right API? Does it have a clear system prompt? Those are solvable problems, and many teams have solved them.

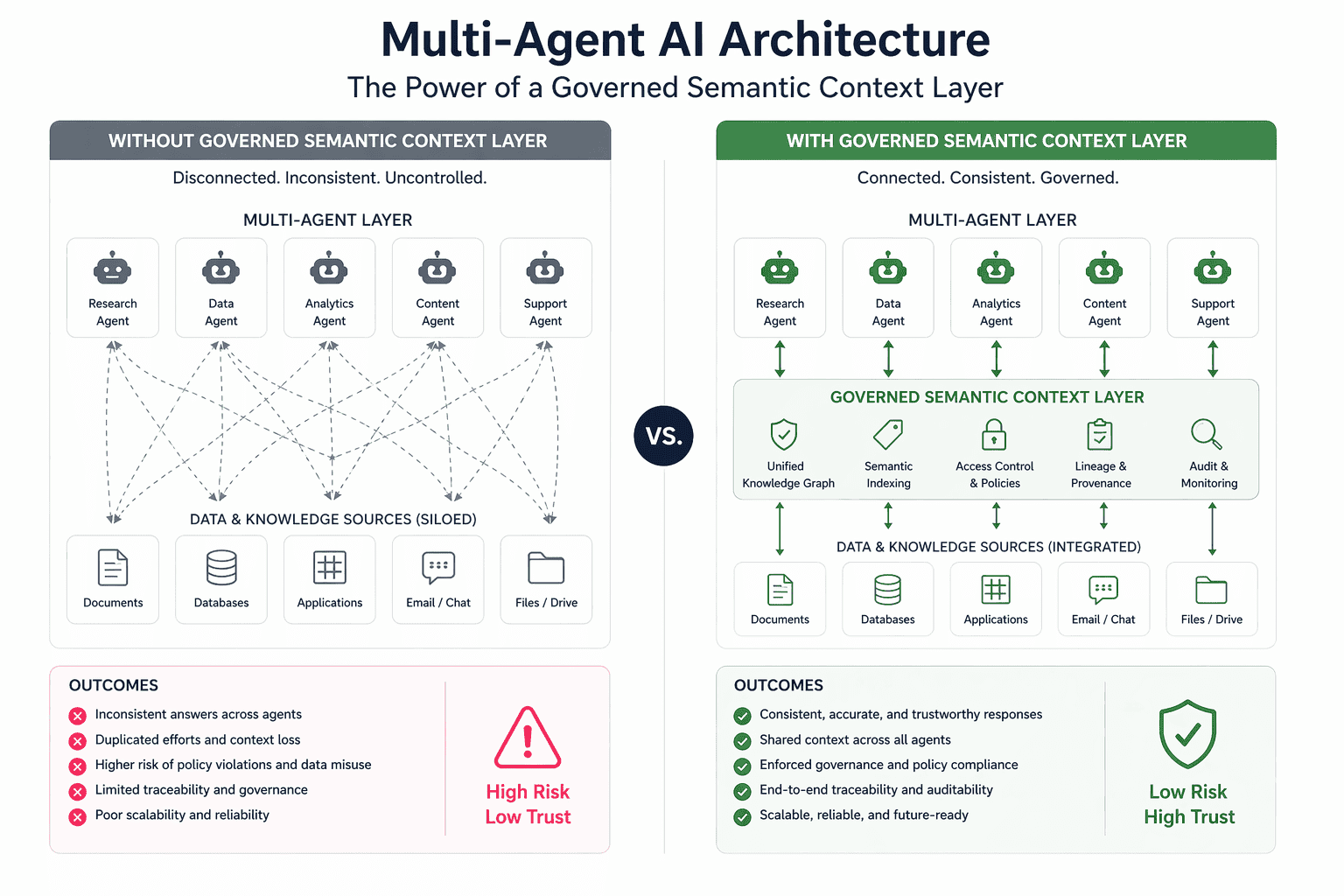

What's showing up now is a harder category: structural inconsistency across multi-vendor agent estates.

When one team deploys a Salesforce agent, another deploys a ServiceNow agent, and a third builds a custom workflow on an LLM API, those agents don't share a common understanding of the business. Each was built in isolation. Each carries its own model of what your operations look like. At enterprise scale (dozens of agents, multiple platforms, different deployment timelines), that fragmentation accumulates into real decision failures.

As Microsoft put it in its Fabric blog announcement: "Speed alone does not create alignment. Many platforms focus on moving data faster, through streaming pipelines, dashboards, alerts, but without shared context, teams and AI systems interpret signals differently. Insights fragment. Decisions diverge."

What Microsoft's Fabric IQ makes explicit

At FabCon 2026, Microsoft significantly expanded Fabric IQ, the semantic intelligence layer it debuted in late 2025. The headline change: the business ontology is now accessible via MCP to any agent from any vendor, not just Microsoft's own.

That shift matters. Before, Fabric IQ was useful inside the Microsoft ecosystem. Now it's candidate infrastructure for any multi-vendor enterprise deployment. Any agent, regardless of who built it or what platform it runs on, can query the same governed set of business definitions.

Amir Netz, CTO of Microsoft Fabric, used a film analogy to explain why the shared context layer matters. He compared agents without it to the character in 50 First Dates, who wakes up every morning having forgotten everything: you have to re-explain the business from scratch every time.

Why MCP-accessible semantics changes the deployment picture

Making the ontology MCP-accessible moves semantic context from a proprietary feature into shared infrastructure. Netz was direct about the intent: "It doesn't really matter whose agent it is, how it was built, what the role is. There's certain common knowledge, certain common context that all the agents will share."

This is the architecture shift that matters for enterprise teams evaluating multi-vendor agent estates. The question moves from "which platform do all our agents need to run on?" to "what shared semantic infrastructure can all our agents query, regardless of platform?" Those are different problems with different answers, and the second framing is significantly more tractable for enterprises that have already committed to multiple agent vendors.
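The second framing can be sketched without reference to any specific product: agents from different vendors resolve business terms against one governed store instead of carrying private copies. Everything below (`SemanticRegistry`, `Definition`, `Agent`, the rule text) is a hypothetical in-memory stand-in. It is not the Fabric IQ API and it does not use an MCP client.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Definition:
    term: str
    rule: str      # human-readable business rule
    version: int   # bumped whenever the governed definition changes


class SemanticRegistry:
    """Hypothetical in-memory stand-in for a shared semantic layer."""

    def __init__(self) -> None:
        self._definitions: dict[str, Definition] = {}

    def publish(self, term: str, rule: str) -> Definition:
        previous = self._definitions.get(term)
        version = previous.version + 1 if previous else 1
        definition = Definition(term, rule, version)
        self._definitions[term] = definition
        return definition

    def resolve(self, term: str) -> Definition:
        # Unknown terms fail loudly rather than letting an agent guess.
        if term not in self._definitions:
            raise KeyError(f"no governed definition for {term!r}")
        return self._definitions[term]


class Agent:
    """Any vendor's agent; it holds a reference to the registry, not a copy."""

    def __init__(self, name: str, registry: SemanticRegistry) -> None:
        self.name = name
        self.registry = registry

    def explain(self, term: str) -> str:
        d = self.registry.resolve(term)
        return f"{term} (v{d.version}): {d.rule}"


registry = SemanticRegistry()
registry.publish("active customer", "purchase in the last 90 days")

sales = Agent("sales-agent", registry)
support = Agent("support-agent", registry)
print(sales.explain("active customer"))
print(support.explain("active customer"))
```

Because both agents resolve the term at query time, a change published to the registry reaches every agent at once, and the version number gives a way to trace which definition a given decision used.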

What a semantic context layer actually does

Three layers get conflated in most enterprise AI discussions. Worth separating them precisely, because each solves a different problem and each has different failure modes when missing.

| Layer | What it handles | What breaks without it |
|---|---|---|
| Document retrieval (RAG) | Policy lookup, handbook sections, contract clauses, regulatory guidance | Agents hallucinate policy details or reference outdated documents |
| Real-time business state | Current inventory, crew availability, live order status | Agents act on stale operational data |
| Semantic definitions and ontology | What "customer" means, how regions are structured, escalation constraints | Agents from different platforms reach different conclusions from identical inputs |

A semantic context layer handles the third category and partially the second. It doesn't replace the first. Understanding this distinction is the practical prerequisite for building a multi-agent architecture that actually works.

The three layers break down as follows:

- Document retrieval (RAG): when an agent needs to look up what a policy says, find the relevant section of a handbook, or pull a specific clause from a contract. On-demand retrieval from a document corpus, returned with source attribution.

- Real-time business state: which planes are in the air right now, whether a crew member has enough rest hours, what inventory is available at a given warehouse. Data that changes continuously and can't be pre-loaded.

- Semantic definitions and ontology: what "customer" means in this business, how regions are structured, what the decision constraints are for an escalation. The shared vocabulary that lets agents reason about operations consistently.

Why RAG still matters alongside semantic infrastructure

Netz drew this line clearly in the FabCon presentation. Microsoft Learn's Fabric IQ overview describes Fabric IQ as unifying data according to "the language of the business," but Netz was equally explicit about what it doesn't cover.

"We don't expect humans to remember everything by heart," he said. "When somebody asks a question, you have to know to go and do a little bit of a search, find the right relevant part and bring it back." That's RAG: regulations, company handbooks, technical documentation, content that's too large to load into every context and needs to be retrieved on demand.

"The mistake of the past was they thought one technology can just give you everything," Netz said.

Semantic layers and RAG solve different problems. Shared ontology tells an agent what a customer segment is. A governed document retrieval system tells it what the current policy for that segment says. Real-time data tells it what's happening with that customer right now. Drop any one layer and the agent is operating blind on that dimension.
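A minimal sketch of how a single answer draws on all three layers, and how a gap in any one of them can be made visible instead of silently ignored. The three lookup tables are hypothetical stand-ins for a semantic layer, a live operational feed, and a governed document index; none of the names or values come from a real product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-ins for the three layers.
ONTOLOGY = {"enterprise segment": "accounts with 500 or more seats"}
LIVE_STATE = {"Acme": {"seats": 620, "open_orders": 2}}
DOCUMENTS = {
    "enterprise segment": ("refund-policy-v3.md", "Refunds within 60 days."),
}


@dataclass
class Context:
    definition: Optional[str]   # semantic layer: what the segment means
    state: Optional[dict]       # real-time layer: what is happening now
    policy: Optional[tuple]     # retrieval layer: (source document, passage)

    def missing_layers(self) -> list[str]:
        names = ("definition", "state", "policy")
        return [n for n in names if getattr(self, n) is None]


def build_context(customer: str, segment: str) -> Context:
    return Context(
        definition=ONTOLOGY.get(segment),
        state=LIVE_STATE.get(customer),
        policy=DOCUMENTS.get(segment),
    )


complete = build_context("Acme", "enterprise segment")
partial = build_context("Globex", "enterprise segment")
print(complete.missing_layers())  # nothing missing for Acme
print(partial.missing_layers())   # no live state for Globex
```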

In practice, our customers tell us they typically discover this gap after deploying semantic infrastructure and noticing that their agents now agree on business definitions but still return inconsistent policy answers. The semantic layer fixed one problem and exposed another. That's not a failure of Fabric IQ; it's the natural sequence of addressing layers in order.

The governed document layer that semantic alignment exposes

Even with perfect semantic alignment (a shared ontology that every agent consults), enterprises still face a downstream problem. The documents those agents retrieve are often stale, contradictory, or ungoverned. Policies get updated in one system but not another. Regulatory guidance changes and the old version stays live. Two documents in the same knowledge base give conflicting answers to the same query.

Semantic context tells agents what to look for. It doesn't ensure what they find is accurate.

When we deployed Mojar's retrieval layer alongside semantic infrastructure at enterprise customers, we found a consistent pattern: semantic alignment raises the visibility of document quality failures. Before shared semantic context, agents disagree on definitions and on evidence, and it's hard to tell which problem is producing which error. After semantic alignment, the definition layer is consistent, and document quality failures become the clearly visible bottleneck.

We built Mojar's contradiction detection specifically because of what we saw in those early deployments. The agents that previously gave "inconsistent" answers were often drawing from conflicting documents, and those conflicts only became traceable once the semantic layer removed the definitional noise. Our data from those deployments consistently shows that document conflicts, not model quality, are the primary driver of wrong outputs once semantic infrastructure is in place.
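The general idea of surfacing document conflicts can be illustrated with a deliberately simple sketch. This is not a description of Mojar's implementation. It assumes claims have already been extracted from documents and normalized into (source, topic, value) records, which is the hard part in practice; the `Claim` type, topic keys, and file names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    source: str   # document the claim was extracted from
    topic: str    # normalized topic key, e.g. "refund_window_days"
    value: str    # the value that document asserts


def find_contradictions(claims: list[Claim]) -> dict[str, dict[str, list[str]]]:
    """Return topics for which documents assert more than one distinct value."""
    by_topic: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for claim in claims:
        by_topic[claim.topic][claim.value].append(claim.source)
    return {
        topic: {value: sorted(sources) for value, sources in values.items()}
        for topic, values in by_topic.items()
        if len(values) > 1
    }


claims = [
    Claim("refund-policy-2024.pdf", "refund_window_days", "30"),
    Claim("refund-policy-2026.pdf", "refund_window_days", "60"),
    Claim("support-handbook.md", "refund_window_days", "60"),
    Claim("support-handbook.md", "escalation_owner", "tier-2"),
]

conflicts = find_contradictions(claims)
for topic, values in conflicts.items():
    print(topic, values)
```

Each conflict keeps its source documents attached, so a reviewer can see which file to retire or correct.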

The production stack for trustworthy multi-agent AI combines all of this: shared semantic definitions that all agents draw from, real-time operational state that reflects what's actually happening, and governed document retrieval with source attribution and contradiction detection. Our approach at Mojar focuses specifically on that third layer, because it's the one that semantic infrastructure assumes is solved but doesn't provide.

Without it, semantic alignment creates a false confidence problem: agents agree on what to look for, then retrieve unreliable content. The output looks coherent because the definitions are consistent. The underlying evidence is still broken.

What enterprise teams should actually do

The practical sequence for enterprise teams building multi-agent AI follows from the three-layer structure above.

Step 1: Identify where your agents currently disagree

Before investing in semantic infrastructure, run a diagnosis. Give the same business query to each agent in your estate and compare outputs. How does each agent define "active customer"? What's each agent's understanding of your regional structure? Where do they disagree?

This step is usually more revealing than expected. In our experience, teams that run this diagnosis for the first time find three to five major definitional inconsistencies in a typical enterprise agent estate, and those inconsistencies map almost exactly to the domains where they've been seeing unexplained decision quality problems. A practical guide for this step: document the output of each agent given the same three or four canonical business queries, then compare them side by side. The inconsistencies will be visible immediately, and they'll give you the prioritized list of semantic gaps to close first.
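The side-by-side comparison can be sketched as a small script. It assumes you have already collected each agent's answer to the same canonical queries and normalized the answers enough to compare as strings. The agent names, queries, and answers below are hypothetical.

```python
def find_disagreements(
    answers: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Group agents by their answer to each query; keep queries with >1 answer.

    `answers` maps agent name -> {query -> normalized answer}.
    """
    grouped: dict[str, dict[str, list[str]]] = {}
    for agent, responses in answers.items():
        for query, answer in responses.items():
            grouped.setdefault(query, {}).setdefault(answer, []).append(agent)
    return {q: groups for q, groups in grouped.items() if len(groups) > 1}


answers = {
    "sales-agent": {
        "Define 'active customer'": "purchase in last 90 days",
        "List sales regions": "AMER, EMEA, APAC",
        "Who owns escalations?": "tier-2 support",
    },
    "support-agent": {
        "Define 'active customer'": "open ticket or purchase in last 12 months",
        "List sales regions": "AMER, EMEA, APAC",
        "Who owns escalations?": "tier-2 support",
    },
    "billing-agent": {
        "Define 'active customer'": "purchase in last 90 days",
        "List sales regions": "NA, LATAM, EMEA, APAC",
        "Who owns escalations?": "tier-2 support",
    },
}

report = find_disagreements(answers)
for query, groups in report.items():
    print(query)
    for answer, agents in groups.items():
        print(f"  {answer!r}: {', '.join(agents)}")
```

Queries on which every agent agrees drop out of the report, so what remains is the list of semantic gaps to prioritize.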

Step 2: Evaluate shared semantic infrastructure

For enterprises already on Microsoft's Fabric platform, Fabric IQ is the natural candidate. For multi-cloud or hybrid environments, the relevant question is what MCP-compatible semantic layer can serve as a shared reference point for agents regardless of their origin platform.

The key requirement is vendor-neutrality: the semantic layer has to be accessible to agents from any vendor, or it solves the consistency problem only for the subset of agents that share a common platform, which is rarely the full estate.

Step 3: Govern the retrieval layer before connecting agents to it

The enterprise AI readiness gap almost always includes a document governance gap that semantic infrastructure exposes rather than solves. We recommend auditing the document sources that feed your agent workflows before connecting them to a governed semantic layer, because the semantic layer will surface document quality failures faster than any previous architecture.

A concrete how-to for this: identify the documents each agent workflow retrieves, check for outdated versions and contradictions, and establish source attribution and review schedules. For example, if a customer service agent is querying your refund policy and a sales agent is querying your pricing policy, both documents need to be current, version-controlled, and free of internal conflicts before semantic alignment makes them more consistently retrieved. The quality of enterprise knowledge bases becomes the binding constraint once the semantic layer is in place.
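A first-pass audit along these lines can be sketched as follows. It assumes each document carries a stable identifier, a version number, a last-reviewed date, and a flag for whether agents can still retrieve it. Those fields, the one-year review interval, and the sample inventory are illustrative assumptions rather than a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Doc:
    doc_id: str          # stable identifier shared by all versions
    version: int
    last_reviewed: date
    live: bool           # still retrievable by agent workflows


def audit(docs: list[Doc], today: date, max_age: timedelta) -> dict[str, list[str]]:
    """Flag live documents that are superseded or overdue for review."""
    latest: dict[str, int] = {}
    for d in docs:
        latest[d.doc_id] = max(latest.get(d.doc_id, 0), d.version)

    findings: dict[str, list[str]] = {"superseded_but_live": [], "overdue_review": []}
    for d in docs:
        if not d.live:
            continue
        label = f"{d.doc_id}@v{d.version}"
        if d.version < latest[d.doc_id]:
            findings["superseded_but_live"].append(label)
        elif today - d.last_reviewed > max_age:
            findings["overdue_review"].append(label)
    return findings


docs = [
    Doc("refund-policy", 2, date(2024, 5, 1), live=True),
    Doc("refund-policy", 3, date(2026, 1, 10), live=True),
    Doc("pricing-policy", 1, date(2025, 1, 15), live=True),
]

findings = audit(docs, today=date(2026, 4, 1), max_age=timedelta(days=365))
print(findings)
```

This covers only outdated versions and missed review schedules. Contradictions between current documents need a separate content-level check.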

The Fabric IQ expansion points toward where enterprise AI infrastructure is heading: versioned business semantics shared across all agents, cross-vendor consistency as a baseline expectation, and growing scrutiny on document quality as the semantic layer raises the bar everywhere else. When agents act on your documents, knowledge quality becomes execution risk, and that risk is more visible, not less, once agents share a common semantic reference.

The semantic layer is necessary infrastructure. It isn't sufficient infrastructure on its own. What comes after it is governance of the evidence those agents act on.

If you want to see how Mojar approaches the document retrieval layer in multi-agent enterprise deployments, including contradiction detection and source attribution, schedule a demo or try Mojar with your own knowledge base.

Bob Mojar leads research at Mojar and focuses on enterprise knowledge governance architecture for multi-agent AI systems.

Frequently Asked Questions

What is a semantic context layer?

A semantic context layer is shared infrastructure that gives all AI agents in an enterprise a common understanding of business entities: customers, orders, locations, constraints. Instead of each agent carrying its own definition of what a "customer" is, they all draw from one governed source. Microsoft's Fabric IQ is the clearest current example.

Does a semantic context layer replace RAG?

No. They solve different problems. Semantic layers handle shared business definitions and, in part, real-time business state. RAG handles large document bodies (policies, handbooks, regulations) where on-demand retrieval is more practical than loading everything into context. Enterprise agents need both.

Why do agents in the same enterprise disagree on business terms?

Each agent is built by a different team, trained or prompted differently, and has no shared reference for what business terms mean. Without a single source of business semantics, "customer" in one agent's context means something different from what it means in another's. At scale, those definitional gaps produce wrong decisions.