RAG for data center emergency response and disaster recovery

When a data center emergency hits, responders flip through runbooks while the clock burns $9,000 per minute. RAG delivers the right procedure in under 30 seconds.

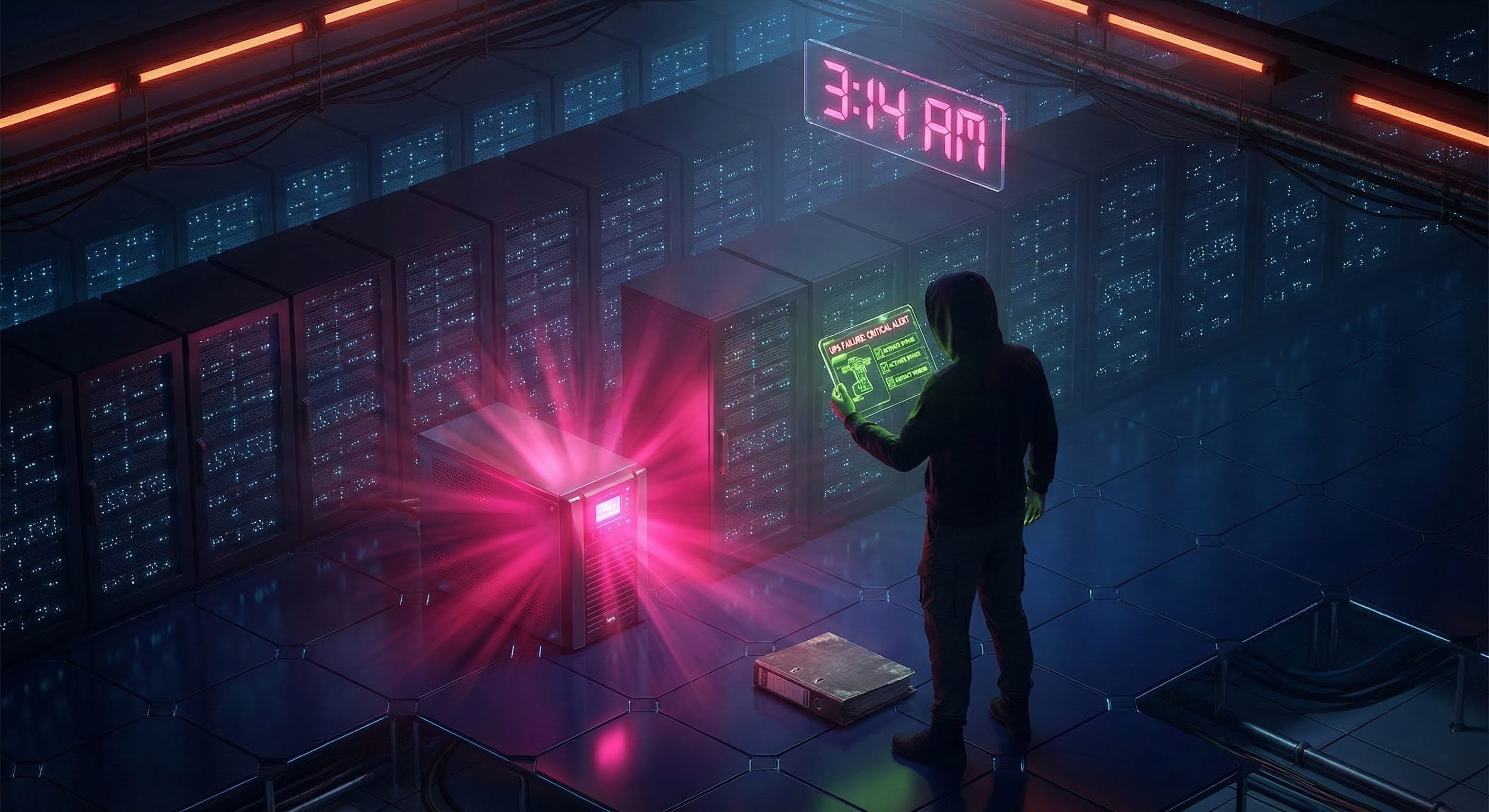

When the alarm goes off at 3 AM

It's 3:14 AM on a Tuesday. A UPS battery failure alarm fires in Building B. The on-duty technician is six months into the job. The senior engineer who knows every circuit in the facility is asleep forty minutes away. The runbook—all 500 pages of it—sits in a shared drive somewhere, organized by whoever created the folders in 2019.

The technician opens the runbook. Checks the index. Jumps to what might be the right section. Reads through procedures written for a different UPS model. Calls the on-call supervisor, who doesn't pick up on the first try.

Eight minutes have passed. At $9,000 per minute of downtime, the meter is already at $72,000.

This scenario plays out in data centers constantly—not because the procedures don't exist, but because finding and executing the right one under pressure is a fundamentally different problem than writing it down. The knowledge is there. Access under stress is the failure mode.

Retrieval-Augmented Generation (RAG) changes the equation. Instead of navigating folders, scanning pages, and hoping you found the current version, operators describe the situation and receive step-by-step guidance grounded in their actual emergency procedures—with source citations, role-appropriate actions, and historical context from previous incidents.

How RAG works in emergency contexts

RAG combines large language models with your organization's actual documentation. During an emergency, the system:

- Retrieves the relevant emergency procedures, historical incident reports, and system specifications from your knowledge base

- Augments the AI's context with site-specific details: equipment locations, escalation contacts, authorization levels

- Generates a direct, actionable response tailored to the operator's skill level and the current situation

The distinction from a static runbook matters: RAG doesn't just find the right page. It synthesizes information across multiple documents—the emergency procedure, the equipment manual, the escalation matrix, last quarter's similar incident—and presents a unified response. The operator gets one answer, not five documents to cross-reference.
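The retrieve and augment steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Doc` class, the `KNOWLEDGE_BASE` contents, and the word-overlap scoring (a stand-in for the vector search a real system would likely use) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    source: str  # citation label shown to the operator
    text: str    # searchable content


# Hypothetical knowledge base; a real one would hold the site's own documents.
KNOWLEDGE_BASE = [
    Doc("Emergency Procedure: UPS battery failure",
        "ups battery failure alarm verify panel inspect battery room"),
    Doc("Escalation Matrix",
        "ups alarm escalate shift supervisor facilities manager noc vendor"),
    Doc("Cooling Runbook",
        "chiller crah temperature cooling failover"),
]


def retrieve(query: str, docs: list[Doc], top_k: int = 2) -> list[Doc]:
    """Rank documents by shared words with the query (stand-in for vector search)."""
    words = set(query.lower().split())
    scored = [(len(words & set(d.text.split())), d) for d in docs]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def augment(query: str, hits: list[Doc], site_context: dict[str, str]) -> str:
    """Build the prompt: numbered, citable passages plus site-specific details."""
    passages = "\n".join(
        f"[{i}] {d.source}: {d.text}" for i, d in enumerate(hits, start=1)
    )
    context = "\n".join(f"{key}: {value}" for key, value in site_context.items())
    return (
        "Answer using only the numbered sources and cite them.\n"
        f"Sources:\n{passages}\n"
        f"Site context:\n{context}\n"
        f"Operator question: {query}"
    )


query = "UPS 3A battery failure alarm"
hits = retrieve(query, KNOWLEDGE_BASE)
prompt = augment(query, hits, {"operator_level": "L1", "ups_room": "B-115"})
print(prompt)  # the generate step would send this prompt to a language model
```

Numbering the passages in the prompt is what lets the generated answer cite its sources, so the operator can check any step against the original document.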

The cost of slow response

The numbers behind data center emergencies are unforgiving.

The Ponemon Institute pegs the average cost of a data center outage at $9,000 per minute. The Uptime Institute's 2023 Annual Outage Analysis found that 70% of outages are preventable with proper procedures—yet human error during crisis response causes 60-80% of extended outages, precisely because operators can't find or correctly execute procedures under stress.

The gap between "procedure exists" and "procedure was followed correctly at 3 AM by a junior technician" is where the damage accumulates:

| Impact Category | Typical Cost Per Incident |
|---|---|
| Extended downtime from procedural errors | $540,000 |
| Equipment damage from wrong procedures | $250,000 |
| Escalation delays | $180,000 |
| Customer SLA penalties | $150,000 |
| Regulatory penalties | $100,000 |
| Average major incident total | $1,220,000 |

McKinsey research shows organizations using AI-augmented operations reduce unplanned downtime by 35-45%. The mechanism isn't mysterious: when the right procedure surfaces in 30 seconds instead of 15 minutes, the response window compresses and the error rate drops.
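As a rough worked example of that mechanism, the cost accrued while an operator is still searching can be estimated from the $9,000 per minute figure. This sketch assumes the outage is already in progress during the search and that cost accrues linearly, which is a simplification; the function name `lookup_cost` is illustrative.

```python
COST_PER_MINUTE = 9_000  # USD, the per-minute outage figure cited above


def lookup_cost(minutes_to_find_procedure: float) -> float:
    """Downtime cost accrued while the operator is still locating the procedure.

    Assumes linear cost and an outage already in progress (a simplification).
    """
    return minutes_to_find_procedure * COST_PER_MINUTE


manual = lookup_cost(15)     # slow end of the 5-15 minute manual range
assisted = lookup_cost(0.5)  # 30 seconds
print(f"manual: ${manual:,.0f}")
print(f"assisted: ${assisted:,.0f}")
print(f"difference: ${manual - assisted:,.0f}")
```

Under these assumptions the search time alone accounts for $135,000 at the slow end versus $4,500 with a 30-second lookup.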

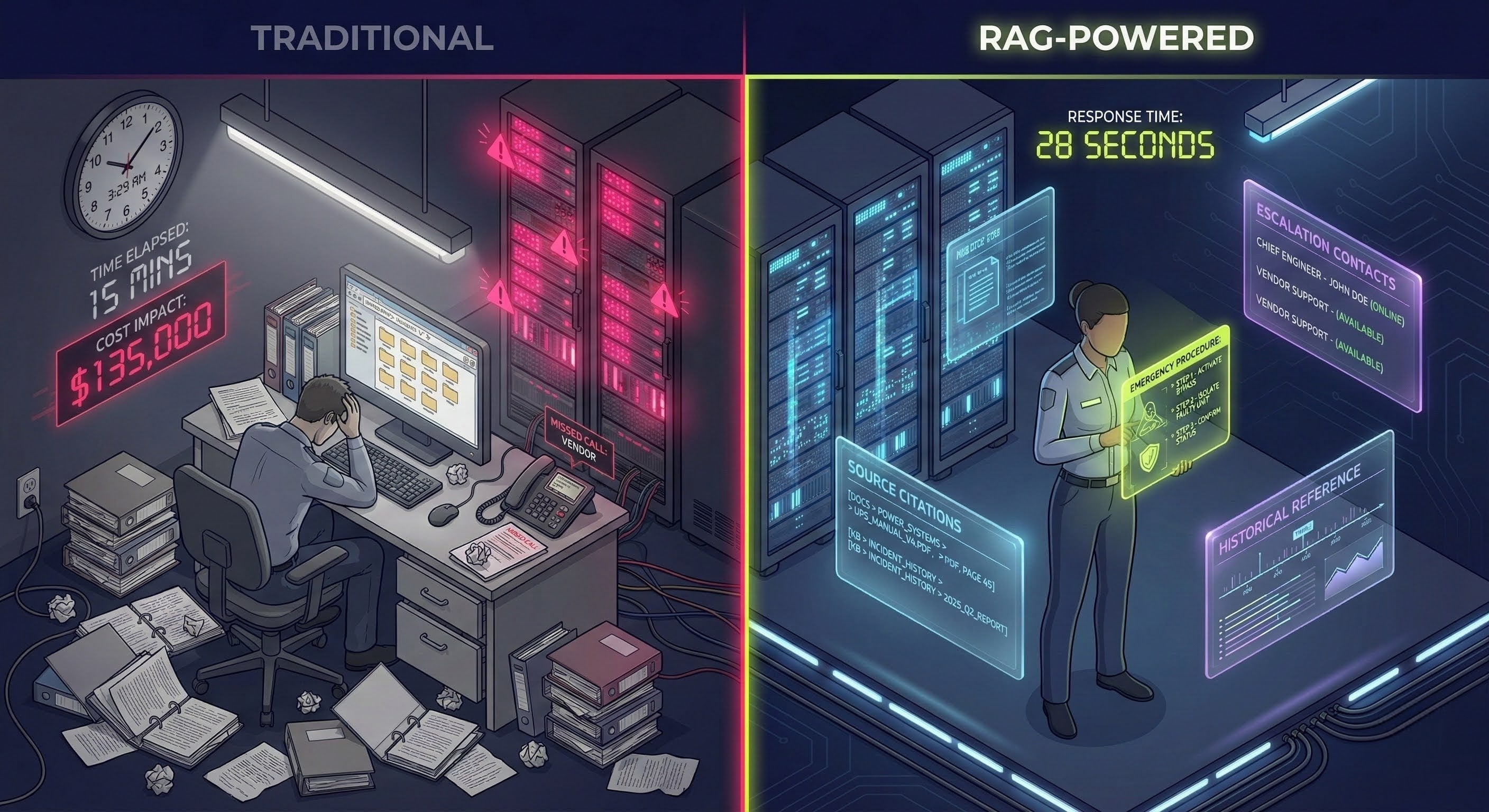

| Metric | Traditional Response | With RAG |
|---|---|---|
| Time to locate correct procedure | 5-15 minutes | < 30 seconds |
| Human error rate during crisis | 35-45% of incidents | 10-15% |
| Mean time to resolution | 2.5 hours | 1.2 hours |
| Escalation accuracy | 70% | 95% |

The first 15 minutes of emergency response determine 80% of outcome severity. Everything that accelerates those first minutes has outsized impact on the final cost.

Why traditional emergency response breaks down

The problems with traditional emergency response aren't about negligence or bad training. They're structural—predictable consequences of how emergency knowledge is stored and accessed.

Time pressure versus information architecture

During an emergency, an operator must assess the situation, locate the correct procedure, execute complex conditional steps, coordinate across teams, and document everything for post-incident review. These tasks compete for the same cognitive bandwidth, and the first bottleneck is always the same: finding the right procedure.

A 500-page runbook organized by equipment type doesn't help when the operator is thinking in terms of symptoms: "UPS alarm, battery failing, load at 85%." The runbook has a section on UPS maintenance, another on battery systems, another on load management—each written by different people at different times. The operator must first translate their situation into the document's organizational logic, then navigate to the right section, then verify it's the current version.

Under stress, with alarms sounding, this translation fails. People revert to heuristics, call a colleague, or make their best guess.

The night shift problem

Emergencies don't schedule themselves during business hours. Staffing reality at most data centers looks like this:

| Shift | Staffing | Experience Level |
|---|---|---|
| Day (Mon-Fri) | Full team | Senior staff available |
| Evening | Reduced | Mixed experience |
| Night | Skeleton crew | Often junior staff |
| Weekends/Holidays | Minimum | Whoever's available |

The people most likely to face an emergency alone are the least experienced. The senior engineer who has seen this exact alarm pattern before and knows that UPS 3A's batteries were already flagged for replacement—that person is asleep. The L1 technician on the night shift has the procedures but not the judgment that comes from pattern recognition across dozens of incidents.

This is the expertise gap that emergency response systems either bridge or expose.

Procedure complexity under stress

Emergency procedures aren't linear. They branch: if the battery is swelling, evacuate; if voltage drops below 450V, transfer load; if utility power is unstable, escalate immediately. Each branch depends on readings, observations, and judgment calls that interact with each other.
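Written as code, the three branches named above look simple, which is part of the point: the logic is easy on paper and hard to track under stress. The sketch below is illustrative only. The `UpsReadings` type, the order in which branches are checked (safety first), and the fallback action are assumptions, not taken from any actual runbook.

```python
from dataclasses import dataclass


@dataclass
class UpsReadings:
    battery_swelling: bool
    battery_voltage: float  # volts
    utility_stable: bool


def next_action(readings: UpsReadings) -> str:
    """Return the first matching branch; the safety-first ordering is an assumption."""
    if readings.battery_swelling:
        return "evacuate"
    if not readings.utility_stable:
        return "escalate immediately"
    if readings.battery_voltage < 450:
        return "transfer load"
    return "monitor and document"  # assumed default when no branch fires


print(next_action(UpsReadings(False, 440.0, True)))
print(next_action(UpsReadings(True, 440.0, True)))
```

A real procedure has many more branches and interacting conditions, and each added condition multiplies the paths an operator must hold in mind.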

On paper, these decision trees are manageable. During a real emergency—with alarms firing, phones ringing, and the awareness that every minute costs thousands—cognitive load overwhelms the operator's ability to track conditional logic across multiple pages.

Institutional knowledge loss

The most valuable emergency response knowledge often isn't in any document. It lives in the experience of senior engineers: "Last time UPS 3A threw this alarm, it was a charger fault, not an actual battery failure." "The escalation path in the runbook says call Facilities first, but in practice you want to loop in the NOC simultaneously."

When those engineers retire, change jobs, or simply aren't on shift, that knowledge is inaccessible. The runbook captures the procedure. It doesn't capture the institutional judgment that makes the procedure work efficiently.

What RAG-powered emergency response actually looks like

The difference between traditional and RAG-assisted emergency response isn't theoretical. Here's how it plays out across three common scenarios.

Scenario 1: UPS battery failure on night shift

A junior technician—six months on the job, L1 authorization—sees a battery failure alarm on UPS 3A. Load is at 85%, utility power is stable. In the traditional workflow, they open the runbook, search for "UPS battery," and find three potentially relevant sections across two different documents. They start reading. Minutes pass.

With RAG, the technician types what they see:

"UPS 3A showing battery failure alarm, load at 85%, utility power stable. What's the emergency procedure? I'm an L1 technician."

The system returns a structured response in seconds:

Situation assessment: UPS 3A serves Rows A, B, and C at 680 kW. Battery backup reduced to 8-12 minutes. Utility power stable, N+1 redundancy available via UPS 3B. Severity: critical, but time pressure is moderate given stable utility power.

Immediate actions (first 5 minutes):

- Verify alarm on UPS panel in Room B-115, confirm alarm type (battery failure vs. battery low vs. test fail)

- Visual inspection of battery room—check for swelling, leaking electrolyte, unusual heat or odor

- Document initial state: alarm timestamp, battery voltage (normal: 480V ±5%), current load

Safety boundaries (tailored to L1 authorization):

- You are authorized to acknowledge alarms, perform visual inspections, document, monitor, and escalate

- You are not authorized to open UPS cabinets, perform load transfers without supervisor approval, or work on battery systems

Escalation contacts: Shift supervisor, facilities manager (on-call), NOC, UPS vendor (Eaton)—with an escalation script template.

Historical context: Previous UPS 3A incidents (Oct 2023: cell failure, 4-hour resolution; Jun 2023: charger fault, 2-hour resolution). Batteries flagged for replacement in Q2 2024. Most alarms resolve without load impact.

The critical detail: the system knows this technician is L1-certified and scopes the response accordingly. It doesn't suggest actions beyond their authorization. It provides a monitoring checklist for what to track while waiting for senior support. And every recommendation traces back to a specific source document.
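One way to picture the authorization scoping is as a filter applied to candidate actions before they reach the operator. This is a hedged sketch rather than a description of any specific product: the `AUTHORIZED` table and `scope_actions` function are hypothetical, the L1 entries mirror the safety boundaries listed above, and the L3 entry is invented for contrast.

```python
L1_AUTHORIZED = {
    "acknowledge alarm", "visual inspection", "document", "monitor", "escalate",
}

# Only the L1 set comes from the scenario; the L3 entry is hypothetical.
AUTHORIZED = {
    "L1": L1_AUTHORIZED,
    "L3": L1_AUTHORIZED | {"open UPS cabinet", "load transfer", "battery work"},
}


def scope_actions(candidate_actions: list[str], level: str) -> tuple[list[str], list[str]]:
    """Split actions into those the operator may take and those to escalate."""
    allowed = AUTHORIZED.get(level, set())  # unknown level: nothing is permitted
    permitted = [a for a in candidate_actions if a in allowed]
    needs_escalation = [a for a in candidate_actions if a not in allowed]
    return permitted, needs_escalation


permitted, needs_escalation = scope_actions(
    ["visual inspection", "load transfer", "escalate"], "L1"
)
print(permitted)         # actions the L1 technician may take
print(needs_escalation)  # actions to hand to someone with more authority
```

Defaulting an unknown level to an empty set means a misconfigured profile fails closed instead of exposing actions the operator is not cleared for.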

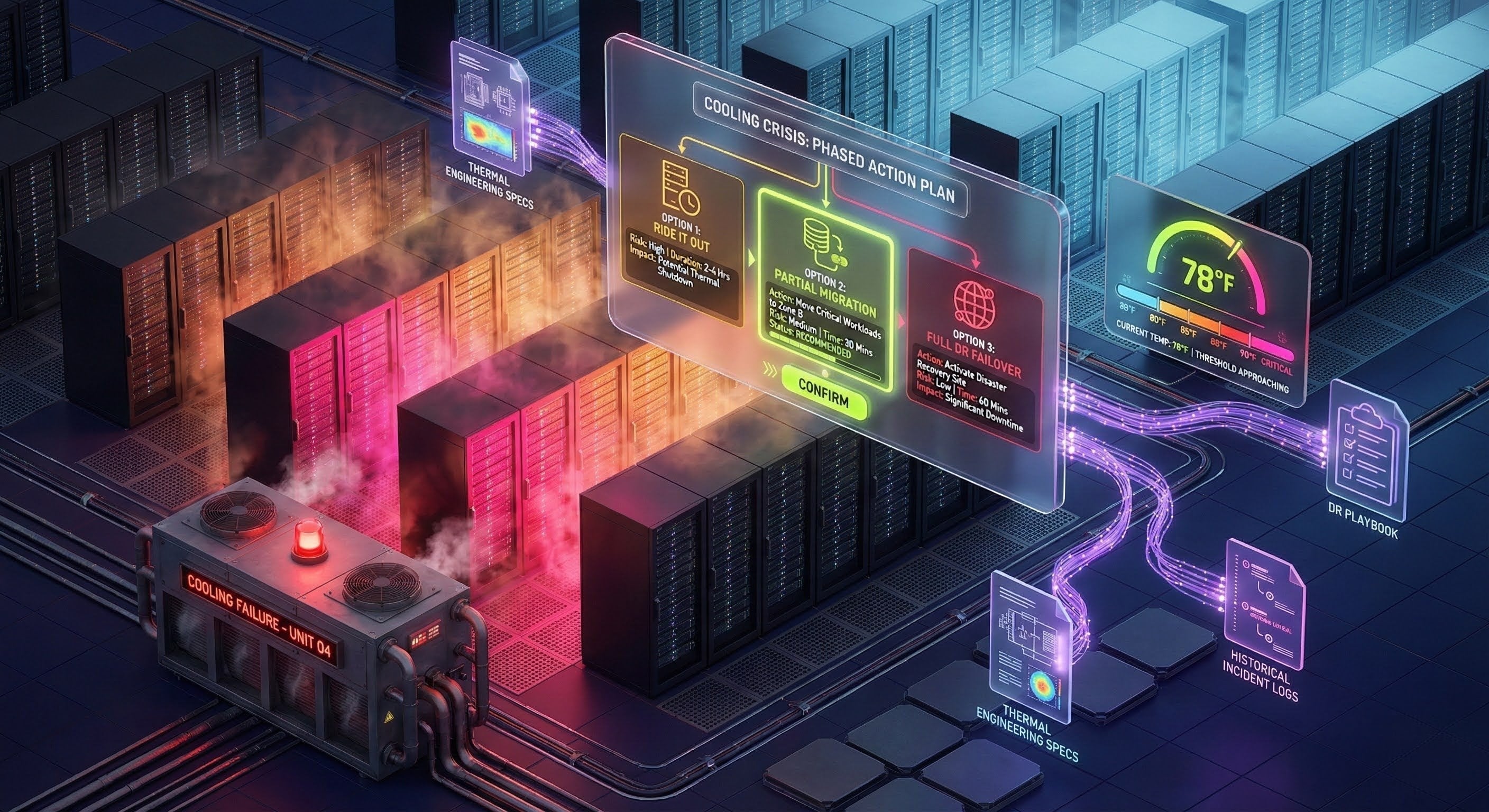

Scenario 2: cooling system failure and failover decisions

A more complex scenario: primary chiller plant losing capacity, data hall temperature at 78°F and rising (normal: 68°F). Multiple CRAH alarms. The operator needs a decision framework, not just a procedure.

The query:

"Chiller plant A losing capacity, temperature rising. Currently 78°F, normally 68°F. Multiple CRAH alarms. Should we failover workloads or can we ride it out?"

RAG doesn't just point to a runbook. It synthesizes across multiple documents—thermal engineering specs, DR runbooks, historical incidents—to provide a decision framework:

Thermal trajectory: At current degradation rate, estimated 50-55 minutes to critical temperature (90°F). Chiller A at ~40% capacity, Chiller B at 100% but unable to fully compensate alone.

Three options with risk assessment:

| Option | Action | Risk | Recommendation |
|---|---|---|---|
| Ride it out | Wait for repair | 70% probability of reaching critical temp | Not recommended without confirmed repair ETA |
| Partial migration | Suspend batch/dev workloads, reduce IT load 20-30% | Low; reversible if cooling recovers | Recommended |
| Full DR failover | Migrate all production to DR sites | Medium; failover complexity, potential data lag | If temp reaches 85°F or no repair ETA within 30 min |

Phased action plan:

- Phase 1 (0-10 min): Maximize available cooling, suspend non-critical batch jobs, get repair ETA

- Phase 2 (10-20 min, if temp exceeds 80°F): Migrate dev/test workloads to DR, prepare production failover

- Phase 3 (if temp reaches 85°F): Execute production failover, protect non-redundant equipment

Temperature decision thresholds:

| Temperature | Required Action |
|---|---|
| 78°F (current) | Active monitoring, prepare contingencies |
| 80°F | Begin workload reduction |
| 85°F | Initiate DR failover |
| 88°F | Mandatory equipment protection |
| 90°F | Critical: automatic shutdowns may trigger |

The response includes a communication template for executive notification and a monitoring checklist with 5-minute intervals. Every threshold and recommendation cites its source: thermal engineering specifications, DR playbook version, vendor operating limits.
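The threshold table and the thermal trajectory estimate can both be expressed as small functions. The sketch below is illustrative: the rise rate of 0.23°F per minute is an assumption back-calculated from the 50-55 minute estimate (12°F of headroom between 78°F and 90°F), and a straight-line projection ignores how real cooling loss behaves.

```python
# Thresholds from the table above, highest first so the first match wins.
THRESHOLDS_F = [
    (90, "Critical: automatic shutdowns may trigger"),
    (88, "Mandatory equipment protection"),
    (85, "Initiate DR failover"),
    (80, "Begin workload reduction"),
    (78, "Active monitoring, prepare contingencies"),
]


def required_action(temp_f: float) -> str:
    """Return the action for the highest threshold the temperature has reached."""
    for threshold, action in THRESHOLDS_F:
        if temp_f >= threshold:
            return action
    return "Below table thresholds"


def minutes_until(target_f: float, current_f: float, rise_f_per_min: float) -> float:
    """Linear time-to-threshold estimate; infinite if temperature is not rising."""
    if rise_f_per_min <= 0:
        return float("inf")
    return max(0.0, (target_f - current_f) / rise_f_per_min)


ASSUMED_RISE = 0.23  # °F per minute, an assumption chosen to match the estimate above
print(required_action(78))
print(round(minutes_until(90, 78, ASSUMED_RISE), 1), "minutes to 90°F")
print(round(minutes_until(85, 78, ASSUMED_RISE), 1), "minutes to 85°F")
```

Recomputing the projection at each 5-minute monitoring interval with the latest measured rate would keep the estimate honest as conditions change.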

This is the kind of synthesis that no single runbook provides. The thermal specs, the DR playbook, the escalation matrix, and last year's similar incident are separate documents maintained by separate teams. RAG bridges them in real time.

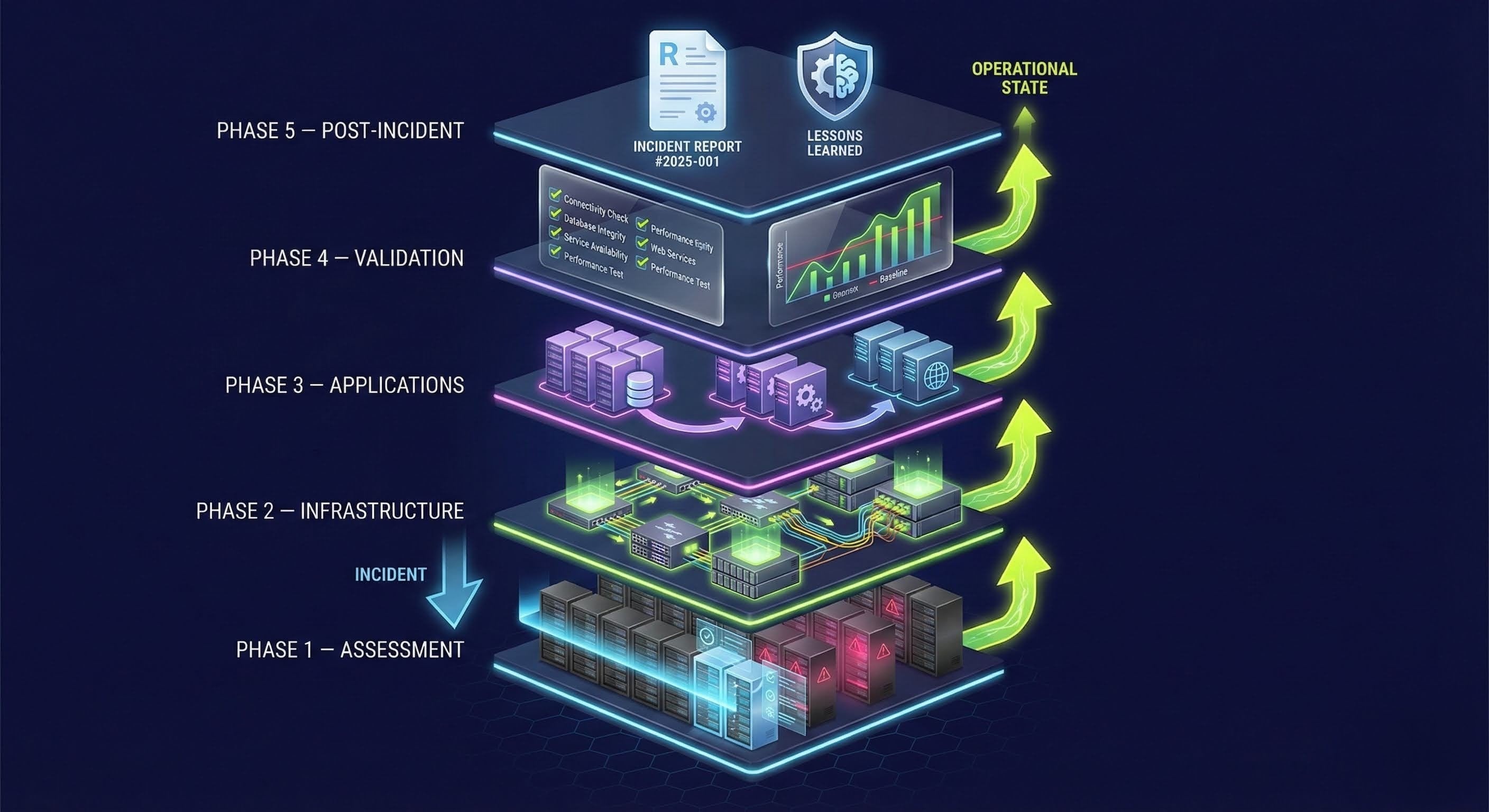

Scenario 3: post-incident recovery sequencing

After the crisis subsides, recovery introduces its own complexity. Forty-five servers went down hard during a UPS failure. Power is restored. What comes back first?

This is where institutional knowledge becomes critical. Recovery sequencing depends on application dependencies, database integrity requirements, network topology, and the specific failure mode. Bringing up application servers before the databases they depend on wastes time. Missing a filesystem check on a server that experienced a hard shutdown risks data corruption.

RAG generates a recovery plan across five phases:

- Assessment (0-30 min): Verify power stability, inspect for hardware damage (status lights, SMART disk checks, RAID status), inventory affected systems by recovery tier

- Infrastructure (30-60 min): Network equipment first (core switches → distribution → access), then storage systems (SAN controllers, LUN access verification), then core services (DNS, DHCP, AD/LDAP, NTP)

- Application recovery (1-3 hours): Tier 1 critical systems (databases with integrity checks, primary application servers) → Tier 2 (web servers, API servers, cache) → Tier 3 (monitoring, logging, dev/test)

- Validation (3-4 hours): Functional testing against critical paths, data integrity verification, performance baseline comparison

- Post-incident (4+ hours): Root cause analysis, incident documentation, follow-up actions with owners and deadlines

Each phase includes specific verification steps: database consistency checks (DBCC CHECKDB, pg_amcheck, mysqlcheck), network convergence validation (spanning tree, LACP bonds, routing tables), and application health endpoints. The plan cites the DR Runbook version, application recovery procedures, and, crucially, a previous similar incident for recovery time expectations.

The recovery checklist ensures nothing is missed during the handoff between crisis mode and post-incident process. Before declaring all-clear: all tier systems operational, functional tests passing, data integrity verified, monitoring re-enabled, backups resuming, incident documented, stakeholders notified.
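The sequencing rule behind this plan, that nothing starts before the systems it depends on, is a topological ordering problem. As a minimal sketch, Python's standard-library `graphlib` can compute such an order. The dependency map below is hypothetical and much smaller than a real application inventory.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical dependency map: each key lists what must be up before it starts.
DEPENDS_ON = {
    "core switches": set(),
    "storage (SAN)": {"core switches"},
    "core services (DNS, NTP)": {"core switches"},
    "databases": {"storage (SAN)", "core services (DNS, NTP)"},
    "application servers": {"databases"},
    "web servers": {"application servers"},
    "monitoring": {"core services (DNS, NTP)"},
}

# static_order() yields every system after all of its dependencies.
recovery_order = list(TopologicalSorter(DEPENDS_ON).static_order())

for step, system in enumerate(recovery_order, start=1):
    print(step, system)
```

`TopologicalSorter` raises `CycleError` if two systems depend on each other, which is itself a useful finding to surface before an incident rather than during one.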

What makes emergency RAG different from regular search

An emergency response RAG system has requirements that distinguish it from typical knowledge management:

Sub-second retrieval. When the alarm is sounding, a 10-second loading spinner is a failure. Emergency RAG systems pre-cache common emergency procedures and maintain offline capability for the scenario nobody wants to think about: a network emergency where the knowledge system itself might be unreachable.
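A simple way to think about the offline requirement is a local copy of critical procedures that is used whenever the live knowledge base cannot be reached. The sketch below shows that fallback pattern under stated assumptions: `ProcedureStore` and `remote_lookup` are hypothetical names, and a real system would also need cache freshness checks and sync logic.

```python
class ProcedureStore:
    """Serve procedures from the live system, falling back to a local pre-cache."""

    def __init__(self, remote_lookup, precached: dict[str, str]):
        self._remote_lookup = remote_lookup  # callable: scenario -> procedure text
        self._cache = dict(precached)        # local copy, usable offline

    def get(self, scenario: str) -> tuple[str, str]:
        """Return (procedure text, origin), where origin is 'live' or 'cached'."""
        try:
            text = self._remote_lookup(scenario)
            self._cache[scenario] = text  # refresh the local copy on success
            return text, "live"
        except (ConnectionError, TimeoutError):
            if scenario in self._cache:
                return self._cache[scenario], "cached"
            raise  # no local copy: surface the failure instead of guessing


def unreachable(_scenario: str) -> str:
    """Simulates a network emergency where the knowledge base is down."""
    raise ConnectionError("knowledge base unreachable")


store = ProcedureStore(
    unreachable,
    {"ups battery failure": "Verify alarm on UPS panel; inspect battery room; escalate."},
)
text, origin = store.get("ups battery failure")
print(origin, "->", text)
```

Returning the origin alongside the text lets the interface tell the operator when guidance came from the local copy, so they know it may not reflect the latest revision.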

Role-aware responses. An L1 technician and a senior facilities engineer asking the same question should receive different answers. The L1 gets scoped actions within their authorization, safety boundaries, and clear escalation triggers. The senior engineer gets the full decision tree, including options that require their authority level. Both answers are grounded in the same source documents.

Multi-document synthesis. The most valuable emergency responses aren't found in any single document. They require combining the emergency procedure, the equipment manual, the escalation matrix, the site configuration database, and historical incident records. This cross-document synthesis, assembling a unified response from five separate sources maintained by five separate teams, is where RAG provides value that no search engine or indexed runbook can match.

Historical pattern matching. "The last three times this alarm fired on this UPS, it was a charger fault resolved in two hours" is exactly the kind of context that changes operator behavior during an emergency. RAG systems that index post-incident reports and maintenance logs can surface these patterns automatically, providing the institutional memory that would otherwise require a specific senior engineer to be on shift.

Decision support, not just procedure retrieval. For complex emergencies—cooling failures, cascading power events, hybrid incidents involving multiple systems—operators need decision frameworks, not just step-by-step procedures. RAG can present options with risk assessments, thermal trajectory projections, and phased action plans that adapt based on how the situation develops.

The financial case

The ROI math on emergency response RAG is straightforward because the cost of doing nothing is so high.

| Benefit Category | Estimated Annual Value |
|---|---|
| Reduced MTTR (50% improvement) | $900,000 |
| Avoided human errors during crisis | $400,000 |
| Prevented unnecessary escalations | $300,000 |
| Reduced equipment damage from wrong procedures | $200,000 |
| SLA penalty avoidance | $150,000 |
| Total annual benefit | $1,950,000 |

Against typical implementation costs of $150,000-$185,000 in the first year (platform, procedure digitization, integration, training), the payback period is measured in weeks, not quarters.
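The payback claim can be checked with a short calculation. This sketch assumes the estimated annual benefit accrues evenly across the year, which real incident patterns will not do; the function name `payback_weeks` is illustrative.

```python
ANNUAL_BENEFIT = 1_950_000  # USD, total from the table above


def payback_weeks(first_year_cost: float, annual_benefit: float = ANNUAL_BENEFIT) -> float:
    """Weeks until cumulative benefit equals cost, assuming even accrual over 52 weeks."""
    return first_year_cost / annual_benefit * 52


low = payback_weeks(150_000)
high = payback_weeks(185_000)
print(f"payback: {low:.1f} to {high:.1f} weeks")
```

Under that assumption the implementation cost is recovered in roughly four to five weeks.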

The less quantifiable benefit: sleep quality for the operations managers who no longer wonder what happens when the night shift faces an emergency alone.

What changes and what doesn't

In our deployments of emergency response systems, we learned that the most critical failure point isn't the quality of the runbook—it's retrieval latency under stress. We recommend treating emergency procedure access as a performance requirement, not just a documentation problem. Our approach has been to pre-index the 50 most common emergency scenarios so they surface in under 5 seconds even on degraded networks. We built the offline capability specifically because our customers in network-sensitive environments raised it as a blocker—real-world emergencies often involve the very network infrastructure you rely on for RAG access. Unlike generic knowledge bases, emergency RAG needs to be available when the systems it describes are actively failing.

RAG-powered emergency response changes the information access equation dramatically. What it doesn't change is equally important.

What changes:

- Time to locate correct procedures drops from minutes to seconds

- Junior staff can access expert-level guidance without waiting for senior engineers

- Cross-document synthesis happens automatically instead of requiring manual cross-referencing

- Historical incident context surfaces alongside current procedures

- Every recommendation is traceable to specific source documents

What doesn't change:

- Operators still make the final decisions on safety-critical actions

- Procedures still need to be written, reviewed, and maintained by qualified engineers

- Post-incident analysis still requires human judgment and organizational learning

- Equipment still fails, alarms still fire at 3 AM, and the night shift still needs to respond

The technology doesn't replace expertise. It makes expertise accessible when and where it's needed—including the shifts when nobody with 20 years of experience happens to be on duty.

If emergency response time is a concern at your facility, schedule a demo to see how Mojar delivers the right procedure in under 30 seconds against your actual runbooks.

Get started with Mojar for data center operations and see the full picture.

Frequently Asked Questions

How quickly can RAG deliver emergency procedures?

RAG systems deliver emergency procedures in under 30 seconds, compared to the 5-15 minutes it typically takes to locate the correct runbook manually. Systems can pre-cache common emergency procedures and operate offline for network-related emergencies.

Can RAG work during a network outage?

Yes. Enterprise RAG deployments for emergency response include offline capability with locally cached procedure databases. Critical emergency procedures are pre-indexed and available even during network outages.

Does RAG replace human operators during emergencies?

No. RAG augments human decision-making by providing instant access to documented procedures, historical incident data, and escalation paths. It ensures junior staff can access expert-level guidance when senior engineers aren't on shift, but critical decisions still require human judgment.

How can operators trust the guidance RAG provides?

RAG systems ground every response in your actual documentation with source citations. Operators can verify any recommendation against the original runbook. Human verification workflows can be layered in for safety-critical procedures, and all guidance is traceable to authoritative sources.

What happens when procedures are updated?

Modern RAG systems automatically re-index when source documents are updated. When a runbook is revised, the system reflects changes immediately without manual re-entry. Version tracking ensures operators always receive guidance from the most current procedures.
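One common way to detect revised documents is to compare content fingerprints against those recorded at the last indexing run. The sketch below illustrates that idea only; it is not a description of how any particular product tracks versions, and the document names and `docs_to_reindex` function are hypothetical.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Stable content hash used to detect that a document has changed."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def docs_to_reindex(current_docs: dict[str, str], indexed_hashes: dict[str, str]) -> list[str]:
    """Names of documents that are new or whose content differs from the indexed copy."""
    return sorted(
        name
        for name, text in current_docs.items()
        if indexed_hashes.get(name) != fingerprint(text)
    )


# Hypothetical state recorded at the last indexing run.
indexed = {
    "ups_runbook": fingerprint("Rev 3: verify alarm, inspect battery room."),
    "cooling_runbook": fingerprint("Rev 1: maximize cooling, get repair ETA."),
}

# Current documents: the UPS runbook was revised and a new document was added.
current = {
    "ups_runbook": "Rev 4: verify alarm, inspect battery room, escalate.",
    "cooling_runbook": "Rev 1: maximize cooling, get repair ETA.",
    "escalation_matrix": "Shift supervisor, facilities manager, NOC.",
}

print(docs_to_reindex(current, indexed))
```

Only the changed and new documents are re-indexed, which keeps the update fast enough to run on every save.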