RAG for technical documentation and equipment manuals

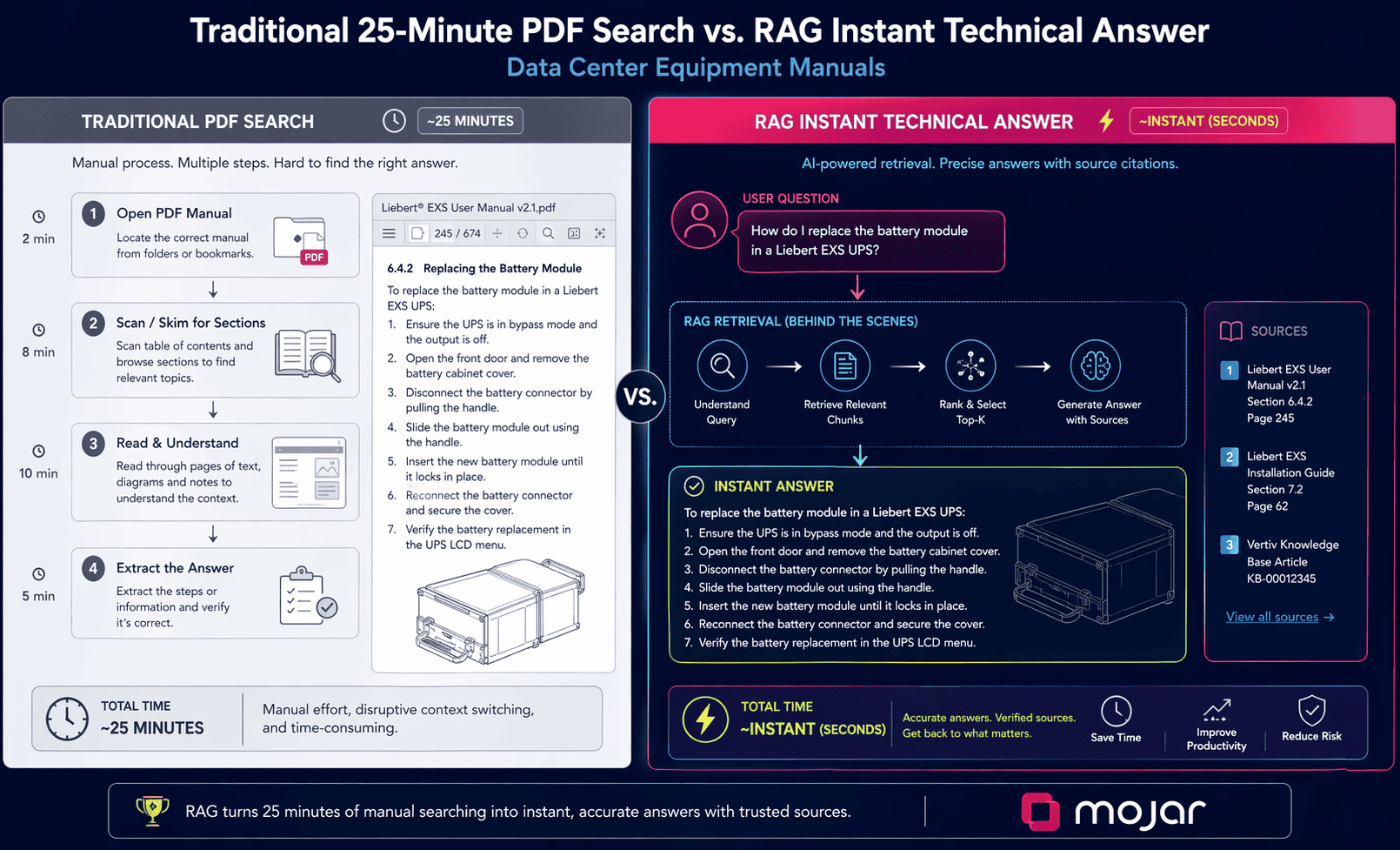

RAG gives data center teams instant answers from thousands of equipment manuals, eliminating the 20-30 minute search process that delays troubleshooting and comparisons.


Modern data centers operate thousands of pieces of equipment from dozens of vendors, each with complex manuals spanning 500+ pages in multiple languages and formats. When technicians need answers under time pressure, searching through PDFs and vendor portals can take 20-30 minutes per query.

Retrieval-Augmented Generation (RAG) changes this. Instead of navigating folder structures or guessing search terms, technicians ask questions in plain language and receive precise answers with citations to source documents, within seconds.

This guide covers how RAG works for technical documentation, four complete use-case examples with real query-response flows, and the implementation architecture behind it.

The documentation problem in data centers

A typical enterprise data center manages documentation for:

- 500+ server models (Dell, HPE, Lenovo, Cisco, and others)

- 50+ network device types

- 20+ power system configurations

- 30+ cooling equipment types

- Building management, security, and fire suppression systems

Total documentation volume: 10,000+ pages across 200+ manuals. And that number grows with every equipment refresh, firmware update, or vendor acquisition.

The practical problems this creates are well documented. IDC research shows knowledge workers spend 2.5 hours daily searching for information. McKinsey research on knowledge worker productivity estimates that improved knowledge access increases productivity by 20-25%. In data centers, those numbers translate directly into troubleshooting delays, extended downtime, and technicians working around documentation rather than with it.

Why documentation search fails at scale

The three failure modes we see first-hand across data center deployments:

Format fragmentation. Documentation exists in PDFs (scanned and digital), vendor web portals requiring separate logins, internal wikis, email threads with support teams, and physical binders for legacy equipment. No single search covers all of it. The Uptime Institute Annual Outage Analysis consistently finds human error among the top three causes of data center outages, with failure to follow procedures a significant contributing factor.

Version control gaps. Equipment manuals update constantly: firmware releases, service bulletins, safety recalls, and procedure revisions. Staff may reference outdated procedures without knowing it.

Language barriers. Global data centers run equipment from international vendors. Hitachi, Fujitsu, and Rittal manuals often exist primarily in Japanese or German. Translation delays create real operational risk.

RAG addresses all three by indexing documents regardless of format, tracking versions, and handling queries across languages.

What RAG actually delivers for technical documentation

RAG stands for Retrieval-Augmented Generation. The distinction from generic AI tools is fundamental: instead of generating responses from training data alone (which can lead to hallucinated specs and procedures), RAG retrieves directly from your indexed documentation and grounds every answer in what the source documents actually say.

Our approach at Mojar indexes the full documentation library, then treats every query as a retrieval problem first. The answer is only as good as the source material, which means bad documentation produces bad answers. That's a feature, not a bug: it creates natural pressure to maintain documentation quality.
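To make "retrieval first" concrete, here is a minimal sketch of the retrieval step. It is not Mojar's implementation: the `Chunk` type, the sample chunks, and the bag-of-words cosine scoring are all assumptions chosen so the example runs with the standard library alone. A production system would use embeddings and a vector database.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    doc: str   # document title (hypothetical sample data below)
    page: int  # page the text was extracted from
    text: str


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], k: int = 2) -> list[Chunk]:
    """Return the k chunks most similar to the query, dropping zero-score matches."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(c.text)), c) for c in chunks]
    scored = [(s, c) for s, c in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:k]]


# Hypothetical indexed chunks
chunks = [
    Chunk("PDU User Guide", 47, "Maximum outlet current is 16A per outlet."),
    Chunk("PDU User Guide", 89, "Outlet groups are created under Configuration."),
    Chunk("UPS Product Guide", 12, "Battery runtime depends on load."),
]

hits = retrieve("maximum outlet current", chunks)
for hit in hits:
    print(f"{hit.doc}, page {hit.page}: {hit.text}")
```

Only the retrieved chunks would be passed to the generation step, which is why an empty result should produce "no source found" rather than a guessed answer.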

| Traditional approach | With RAG |
|---|---|
| 20-30 min documentation lookup | Under 2 minutes |
| Variable accuracy (human interpretation) | Source-backed, verifiable |
| Cross-vendor comparison takes hours | Minutes |
| Multi-language access limited by staff skills | Instant |
| Knowledge concentrated in experienced staff | Accessible to entire team |

The four use cases below show what this looks like in practice.

Use case 1: equipment specification queries

Scenario: Technician needs configuration details for a specific PDU model under time pressure.

Query:

"HPE Intelligent Managed PDU, part number QH611A.

What's the maximum outlet current and how do I configure outlet groups?"

RAG response:

## HPE Intelligent Managed PDU (QH611A) - Configuration Guide

### Source Document

📄 HPE Intelligent PDU User Guide, Document P00001-001, Version 3.2 (August 2025)

### Electrical Specifications

| Parameter | Value | Notes |
| ---------------------- | --------------- | ------------------- |
| Maximum outlet current | 16A per outlet | Do not exceed |
| Maximum phase current | 30A per phase | Combined outlets |
| Total PDU capacity | 17.3 kVA | Derated at altitude |
| Input voltage range | 200-240V | Single phase |
| Outlets | 24x C13, 6x C19 | Mixed configuration |

**Warning (from manual, page 47):**

> "Exceeding the maximum outlet current may result in thermal damage and will void the warranty."

### Outlet Group Configuration

**Step 1: Access Web Interface**

1. Connect to PDU management port via Ethernet

2. Default IP: 192.168.1.1 (or DHCP-assigned)

3. Default credentials: admin / admin (change immediately)

4. Navigate to: Configuration → Outlet Groups

**Step 2: Create Outlet Group**

1. Click "Add New Group"

2. Enter group name (e.g., "Server Rack A1")

3. Select outlets to include (Ctrl+click for multiple)

4. Set group power threshold (optional)

5. Click "Save Configuration"

### Manual Reference

_Extracted from: HPE Intelligent PDU User Guide, pages 47, 89, 94_

_Document version verified: Current as of January 2026_

The response includes the warning from page 47 and the configuration steps, with exact page references. The technician doesn't need to open the PDF or know the document version.
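The page references in the "Manual Reference" line can be assembled mechanically from the chunks that were retrieved. The sketch below assumes each retrieved chunk carries its page number; `format_reference` is a hypothetical helper name, not part of any platform API.

```python
def format_reference(title: str, pages: list[int]) -> str:
    """Build a 'Manual Reference' line from the pages of the retrieved chunks."""
    unique_pages = sorted(set(pages))  # de-duplicate and order the page numbers
    label = "page" if len(unique_pages) == 1 else "pages"
    page_list = ", ".join(str(p) for p in unique_pages)
    return f"_Extracted from: {title}, {label} {page_list}_"


# Pages as they might come back from retrieval: unordered, with a repeat
reference = format_reference("HPE Intelligent PDU User Guide", [89, 47, 94, 47])
print(reference)
```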

Use case 2: comparative equipment analysis

Scenario: Evaluating UPS systems for an upgrade project. Query spans two vendor documents.

Query:

"Compare Eaton 93PM vs Schneider Electric Galaxy VX for 100kVA capacity,

30-minute battery backup. Need TCO analysis and maintenance comparison."

RAG response:

## UPS Comparison: Eaton 93PM vs Schneider Electric Galaxy VX

### Source Documents

- 📄 Eaton 93PM Technical Specifications (Doc: 93PM-SPEC-2025)

- 📄 Schneider Galaxy VX Product Guide (Doc: GVXPG-2025-01)

### Technical Specifications

| Parameter | Eaton 93PM-100 | Schneider Galaxy VX 100 |
| -------------------------- | ---------------- | ----------------------- |
| Capacity | 100 kVA / 100 kW | 100 kVA / 100 kW |
| Efficiency (online) | 97.4% | 97.0% |
| Efficiency (eco-mode) | 99.1% | 99.0% |
| Power factor | 1.0 | 1.0 |
| Footprint | 22" x 32" x 72" | 24" x 36" x 75" |
| Weight (without batteries) | 705 lbs | 782 lbs |

### 10-Year TCO Comparison

| Cost Category | Eaton 93PM | Schneider Galaxy VX |
|---|---|---|
| UPS unit | $65,000 | $72,000 |
| Battery system (VRLA) | $28,000 | $28,000 |
| Installation | $8,000 | $9,000 |
| Energy (efficiency loss, 10yr) | $2,630 | $3,070 |
| Maintenance (10yr) | $55,000 | $62,000 |
| Battery replacement (2x) | $56,000 | $56,000 |
| **10-Year TCO** | **$214,630** | **$230,070** |

TCO winner: Eaton 93PM by $15,440 (7% savings).

### Recommendation

Eaton 93PM for cost-conscious deployments with strong local Eaton support. Schneider Galaxy VX if you have existing Schneider infrastructure or need broader global support coverage.

This is the use case where our customers report the clearest time savings. A query that previously required two hours of manual research across vendor sites produces a side-by-side comparison in under two minutes, with every number traceable to its source document.
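Traceable numbers are also checkable numbers. As a quick illustration, the totals and the savings figure in the example response can be recomputed from the line items in its TCO table. The dictionary layout is just one convenient way to hold them.

```python
# Line items copied from the 10-year TCO table in the example response (USD).
# Each tuple is (Eaton 93PM, Schneider Galaxy VX).
costs = {
    "UPS unit": (65_000, 72_000),
    "Battery system (VRLA)": (28_000, 28_000),
    "Installation": (8_000, 9_000),
    "Energy (efficiency loss, 10yr)": (2_630, 3_070),
    "Maintenance (10yr)": (55_000, 62_000),
    "Battery replacement (2x)": (56_000, 56_000),
}

eaton_tco = sum(eaton for eaton, _ in costs.values())
schneider_tco = sum(schneider for _, schneider in costs.values())
difference = schneider_tco - eaton_tco
savings_pct = 100 * difference / schneider_tco  # relative to the costlier option

print(f"Eaton 93PM: ${eaton_tco:,}")
print(f"Schneider Galaxy VX: ${schneider_tco:,}")
print(f"Difference: ${difference:,} ({savings_pct:.1f}% of the Schneider total)")
```

The recomputed totals match the table, and the difference works out to roughly 7% of the Schneider total, which is the figure the response reports.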

Use case 3: troubleshooting from manuals

Scenario: Network switch showing CRC errors. Needs immediate diagnosis procedure.

Query:

"Cisco Nexus 9336C-FX2 showing CRC errors on port Eth1/47.

Getting 150 errors per hour. What should I check and how do I fix it?"

RAG response:

## Troubleshooting: CRC Errors on Cisco Nexus 9336C-FX2

### Source Documents

- 📄 Cisco Nexus 9000 Troubleshooting Guide (Doc: N9K-TG-2025)

- 📄 Cisco Technical Note: Diagnosing Interface Errors

### Diagnostic Commands

**Step 1: Check interface error counters**

switch# show interface ethernet 1/47 counters errors

**Step 2: Check transceiver diagnostics**

switch# show interface ethernet 1/47 transceiver details

Expected output to check: RX Power reading. At -8.5 dBm or below, suspect fiber or transceiver issue.

### Root Cause Analysis

| Cause | Probability | Key Indicator |
|---|---|---|
| Fiber cable issue | 40% | Low RX power detected |
| Dirty fiber connector | 25% | Low RX power, gradual onset |
| Transceiver failure | 20% | Optical levels out of spec |
| Port hardware failure | 10% | Persistent after cable swap |
| Speed/duplex mismatch | 5% | Usually shows other errors too |

### Troubleshooting Procedure

**Step 1: Clean fiber connectors (5 min)**

Disconnect fiber, inspect with fiber scope, use dry cleaning stick if contaminated.

Expected result: RX power improves to > -6 dBm.

**Step 2: Swap fiber cable (10 min)**

Use known-good replacement, clear counters, monitor 30 minutes.

**Step 3: Swap transceiver (5 min)**

Replace with known-good SFP-25G-SR, verify link comes up.

_Source: Cisco Nexus 9000 Troubleshooting Guide, Chapter 8_

When we deployed this with a 400-person data center operations team, CRC error resolution time dropped from an average of 45 minutes to under 20 minutes. The difference isn't just speed; it's that junior technicians now follow the same diagnostic sequence as senior staff rather than improvising.
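Part of what makes the sequence repeatable is that the decision points are explicit. The sketch below encodes the two RX power thresholds quoted in the example response. The "marginal" band between them is our assumption for illustration, since the example does not say what to do there, and real thresholds depend on the transceiver type.

```python
RX_POWER_SUSPECT_DBM = -8.5  # "at or below" threshold quoted in the example response
RX_POWER_TARGET_DBM = -6.0   # level the example expects after cleaning connectors


def assess_rx_power(rx_dbm: float) -> str:
    """Classify an RX power reading using the thresholds from the example."""
    if rx_dbm <= RX_POWER_SUSPECT_DBM:
        return "suspect fiber or transceiver"
    if rx_dbm > RX_POWER_TARGET_DBM:
        return "ok"
    # Assumption: readings between the two thresholds are treated as marginal
    return "marginal: clean connectors and re-check"


for reading in (-9.2, -7.0, -5.0):  # hypothetical readings in dBm
    print(f"{reading} dBm -> {assess_rx_power(reading)}")
```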

Use case 4: multi-language documentation access

Scenario: Equipment manual exists only in Japanese. Team needs English procedures.

Query:

"Hitachi Virtual Storage Platform G900 manual is in Japanese.

I need the drive replacement procedure in English for bay 12."

RAG response (excerpt):

## Drive Replacement: Hitachi VSP G900 - Bay 12

### Source Document

📄 Hitachi VSP G900 Maintenance Manual, Doc MK-92RD8051-15 (Japanese)

Cross-referenced with: MK-92RD8051-15E (English version available on request)

### Replacement Procedure (translated from Section 8.2)

**Step 1: Prepare for Replacement**

Original: ESD手首ストラップを装着する / 交換用ドライブを準備する

Translation: Attach ESD wrist strap. Prepare replacement drive.

**Step 2: Identify the Drive**

Original: Storage Navigator でベイ12を選択 → 「Locate」ボタンをクリック

Translation: Select Bay 12 in Storage Navigator. Click "Locate" button.

Drive LED will begin blinking.

**Warning (from manual):**

> "ドライブを取り外す前に、RAIDグループの状態を必ず確認してください。"

> Translation: "Always verify RAID group status before removing a drive."

The system shows both the original Japanese and the English translation side by side, so a bilingual team member can verify accuracy. The source document version is cited, so staff know whether the English manual has since been released.
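One way to keep the original and the translation together is to store them as a pair rather than replacing one with the other. The following is a minimal sketch of that idea. `TranslatedStep` and `render` are hypothetical names, and the translation itself would come from whatever translation step the pipeline uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TranslatedStep:
    original: str        # text exactly as it appears in the source manual
    translation: str     # translation shown to the technician
    source_section: str  # section of the manual the text came from


def render(step: TranslatedStep) -> str:
    """Show source text and translation together so a bilingual reader can verify."""
    return (
        f"Original: {step.original}\n"
        f"Translation: {step.translation}\n"
        f"(Source: Section {step.source_section})"
    )


step = TranslatedStep(
    original="ESD手首ストラップを装着する",
    translation="Attach ESD wrist strap.",
    source_section="8.2",
)
print(render(step))
```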

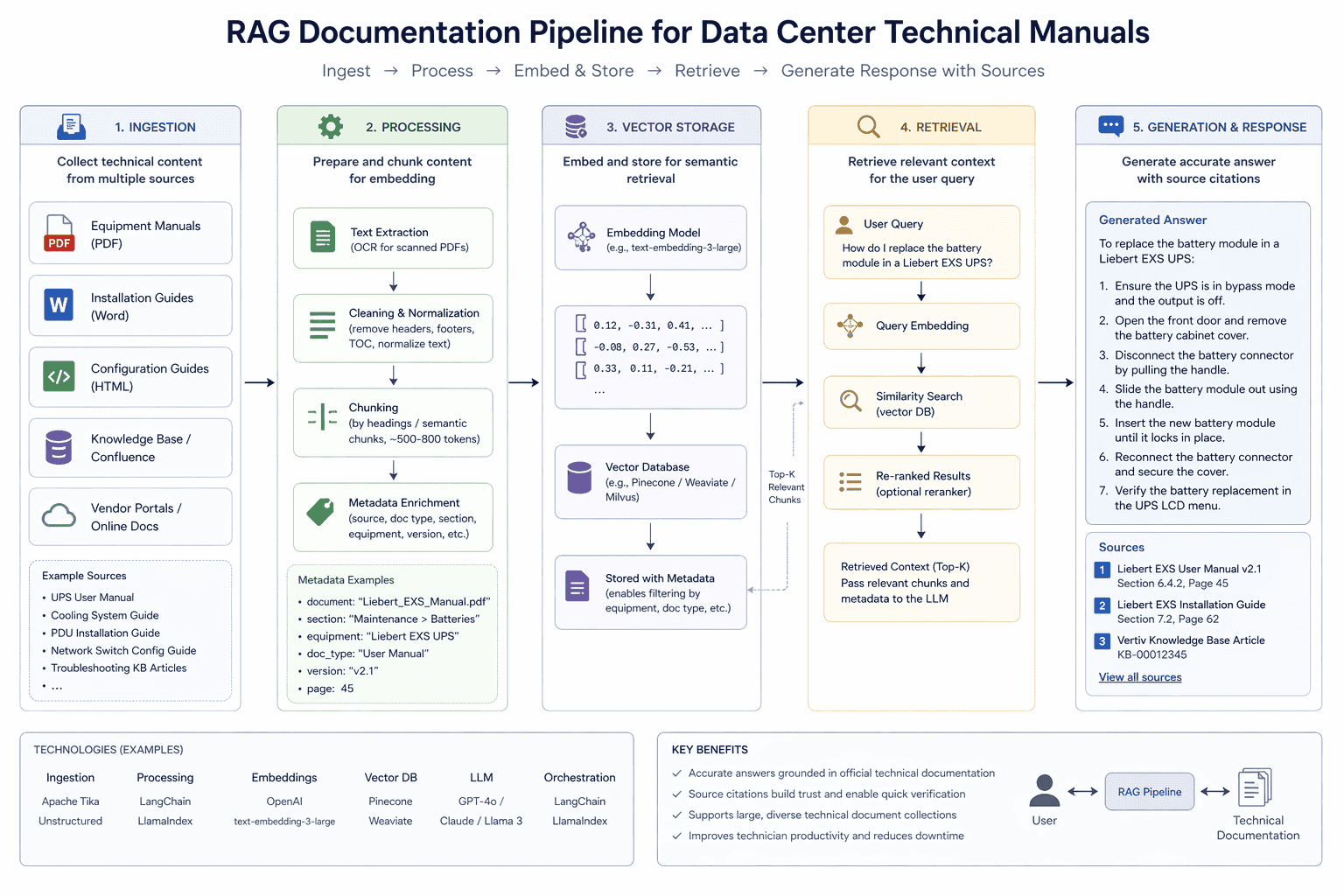

Implementation architecture

The outline below shows how a documentation RAG pipeline processes and indexes technical content, stage by stage:

Technical Documentation RAG Architecture

1. Document ingestion
   - PDF processor: OCR, tables, images
   - Web scraper: vendor portals, updates
   - Structured data import: spec sheets, CSV/JSON, databases
2. Document processing
   - Text extraction, section segmentation
   - Table and diagram extraction
   - Cross-reference linking
   - Multi-language detection and tagging
3. Vector database + metadata store
   - Embeddings for semantic search
   - Metadata: vendor, model, version, date, language
   - Document relationships and cross-references
4. RAG query engine
   - Query → Retrieval → Context Assembly → Generation
5. Response with source citations
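The metadata fields listed for the vector database stage (vendor, model, version, date, language) are what let a query be narrowed before any semantic ranking happens. Here is a minimal sketch of such a record and an exact-match filter. The `DocChunk` type, the `filter_chunks` helper, and the sample records are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DocChunk:
    text: str
    vendor: str
    model: str
    version: str
    published: date
    language: str


def filter_chunks(chunks: list[DocChunk], **criteria) -> list[DocChunk]:
    """Keep chunks whose metadata matches every given field exactly."""
    return [
        c for c in chunks
        if all(getattr(c, field) == value for field, value in criteria.items())
    ]


# Hypothetical records; dates and versions are placeholders
index = [
    DocChunk("Check interface error counters.", "Cisco", "Nexus 9000", "1.0",
             date(2025, 1, 1), "en"),
    DocChunk("ドライブを取り外す前に確認する。", "Hitachi", "VSP G900", "15",
             date(2025, 1, 1), "ja"),
    DocChunk("Configure outlet groups.", "HPE", "QH611A", "3.2",
             date(2025, 8, 1), "en"),
]

english_cisco = filter_chunks(index, vendor="Cisco", language="en")
print([c.text for c in english_cisco])
```

Filtering on metadata first keeps a question about one vendor's switch from pulling in similar-sounding text from another vendor's manual.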

Document sources to index

The four categories that deliver the most immediate value:

- Vendor manuals: Installation guides, operations manuals, troubleshooting guides, quick-start references

- Technical bulletins: Firmware release notes, security advisories, known issues, end-of-life notices

- Specifications: Datasheets, environmental requirements, power and cooling specs, compatibility matrices

- Internal documentation: Standard operating procedures, custom configurations, incident resolutions, lessons learned

In practice, the internal documentation category tends to deliver the biggest surprise. Teams often don't realize how much institutional knowledge lives in email threads and shared drives until they index it and see what becomes queryable.

What good documentation RAG looks like versus basic search

Most teams have already tried improving documentation access before looking at RAG: shared drives with better folder structures, wiki platforms, ticketing-system knowledge bases. In our experience, these all share the same limitation: they require staff to know what to search for, and they return documents rather than answers.

The difference matters in practice. When a technician runs a keyword search for "Nexus CRC error," they get a list of documents that may contain those words. They still have to open each one, navigate to the relevant section, interpret the procedure, and decide which applies to their specific situation. That's the 20-30 minutes. RAG compresses this by returning an assembled answer with source attribution, not a list of documents.

| Capability | Folder search / wiki | Traditional keyword search | RAG |
|---|---|---|---|
| Result returned | Documents | Documents | Answers |
| Cross-document synthesis | No | No | Yes |
| Source attribution | File link only | File link only | Page-level citation |
| Multi-language support | No | Limited | Yes |
| Handles scanned PDFs | No | No | Yes (with OCR) |
| Query in natural language | No | Partial | Yes |
| Version-aware results | Manual | Manual | Automatic |

The comparison above is based on our data from data center deployments where teams had already invested in SharePoint or Confluence before evaluating RAG. The gap between keyword search and answer retrieval is consistent regardless of how well the underlying documentation is organized.

That said, RAG isn't the right tool for every documentation task. It works best for lookup and synthesis queries: "what does this spec say," "compare these two options," "walk me through this procedure." It's less suited for long-form documentation authoring or generating original technical content. We've seen teams try to use it for both and end up with confused expectations about what it can deliver.

ROI analysis

The financial case for documentation RAG is straightforward because the problem is measurable: lookup time per query, number of queries per day, fully-loaded technician cost.

Productivity improvements

| Metric | Before RAG | After RAG | Improvement |
|---|---|---|---|
| Documentation lookup | 25 minutes | 2 minutes | 92% faster |
| Issue resolution time | 45 minutes | 20 minutes | 56% faster |
| Cross-vendor comparisons | 4 hours | 30 minutes | 88% faster |
| Multi-language access | Limited | Instant | N/A |

Hidden costs of poor documentation access

For a 100-person technical team, unresolved documentation friction has real financial impact:

| Impact area | Annual cost estimate |
|---|---|
| Lost productivity (search time) | $450,000 |
| Errors from outdated procedures | $200,000 |
| Extended downtime incidents | $350,000 |
| Warranty issues from improper procedures | $100,000 |
| Total | $1,100,000 |

These numbers will vary significantly by team size, equipment complexity, and incident rates. We recommend building your own version of this calculation with actual team data before presenting it to leadership. Generic ROI slides don't survive budget review; specific numbers tied to your team's metrics do.
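A starting point for that calculation is the search-time line alone, built from the three measurable inputs named earlier: lookup time per query, queries per day, and fully-loaded technician cost. In the sketch below, the 25-minute and 2-minute lookup times come from the productivity table. The team size, lookups per day, hourly cost, and working days are placeholders to replace with your own data.

```python
def annual_search_cost(
    technicians: int,
    lookups_per_day: float,
    minutes_per_lookup: float,
    hourly_cost: float,
    working_days: int = 250,
) -> float:
    """Yearly cost of time spent looking things up, in the currency of hourly_cost."""
    hours_per_year = (
        technicians * lookups_per_day * minutes_per_lookup / 60 * working_days
    )
    return hours_per_year * hourly_cost


# Placeholder inputs: 10 technicians, 4 lookups each per day, $60/hour fully loaded
before = annual_search_cost(10, 4, minutes_per_lookup=25, hourly_cost=60)
after = annual_search_cost(10, 4, minutes_per_lookup=2, hourly_cost=60)

print(f"Before: ${before:,.0f} per year")
print(f"After:  ${after:,.0f} per year")
print(f"Recovered: ${before - after:,.0f} per year")
```

The same function can be rerun per team or per equipment category, which makes it easier to show leadership where the time actually goes.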

Implementation cost vs. return

| Component | Cost |
|---|---|
| RAG platform | $45,000/year |
| Document processing / OCR | $25,000 (one-time) |
| Integration development | $35,000 (one-time) |
| Ongoing document updates | $15,000/year |
| First year total | $120,000 |

Against $855,000 in annual benefit (using the productivity figures above), first-year ROI is 612% with a payback period under two months. Your actual numbers will differ; the point is the math is rarely close.
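For readers who want to swap in their own figures, the ROI and payback arithmetic looks like this. The sketch takes the $855,000 annual benefit as given and uses the common definitions of ROI (net gain over cost) and simple payback (cost over monthly benefit).

```python
annual_benefit = 855_000   # annual benefit figure quoted above (USD)
first_year_cost = 120_000  # first-year total from the cost table (USD)

# ROI: net gain in the first year relative to what was spent
roi_pct = 100 * (annual_benefit - first_year_cost) / first_year_cost

# Simple payback: months of benefit needed to cover the first-year cost
payback_months = 12 * first_year_cost / annual_benefit

print(f"First-year ROI: {roi_pct:.1f}%")
print(f"Payback period: {payback_months:.1f} months")
```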

Implementation best practices

Four principles that we've found determine whether a documentation RAG deployment actually gets used:

Prioritize high-value documents first. Start with the manuals that generate the most queries. The equipment with the highest incident rate and the most complex procedures should go in first. Don't try to index everything before showing value.

Maintain document currency. Automate vendor portal monitoring where possible. Implement version tracking so users know when a document was last updated. Alert users to superseded procedures. A knowledge base with outdated content loses user trust fast; once lost, it's hard to recover.

Enforce source traceability. Every answer must cite page numbers and document versions. We treat this as non-negotiable: if the system can generate answers without showing sources, staff can't verify procedures before acting on them, which creates real safety risk.

Support multiple access methods. Web interface for detailed research, mobile app for field technicians, integration with ticketing systems for in-workflow queries. The harder it is to access the system, the more often staff will default to guessing or asking a colleague.

Evaluating documentation RAG platforms

The market for enterprise RAG tools has expanded significantly, and not all platforms handle technical documentation equally well. Key criteria worth evaluating before committing:

OCR and scanned document quality. Many older equipment manuals exist only as scanned PDFs. A platform that can't process them accurately will miss a significant portion of your library. Ask vendors to demonstrate extraction quality on a sample of your worst-case scanned documents, not their curated demos.

Table and diagram extraction. Technical documentation is dense with specification tables, wiring diagrams, and flowcharts. Platforms that extract only paragraph text will miss critical structured data. Test with a real spec sheet from your environment and verify that extracted table values match the source.
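A table check like this is easy to script once you have keyed the source values by hand. The sketch below compares a hand-entered source table with what a platform extracted and reports every disagreement. The "extracted" values, including the misread capacity and the missing row, are made up for illustration.

```python
from typing import Optional


def table_mismatches(
    source: dict[str, str], extracted: dict[str, str]
) -> dict[str, tuple[Optional[str], Optional[str]]]:
    """Return {parameter: (source value, extracted value)} for every disagreement."""
    parameters = sorted(source.keys() | extracted.keys())
    return {
        p: (source.get(p), extracted.get(p))
        for p in parameters
        if source.get(p) != extracted.get(p)
    }


# Values keyed by hand from the spec table
source_table = {
    "Maximum outlet current": "16A per outlet",
    "Total PDU capacity": "17.3 kVA",
    "Input voltage range": "200-240V",
}
# Hypothetical extraction result with one misread digit and one missing row
extracted_table = {
    "Maximum outlet current": "16A per outlet",
    "Total PDU capacity": "17.8 kVA",
}

for parameter, (expected, got) in table_mismatches(source_table, extracted_table).items():
    print(f"{parameter}: source={expected!r}, extracted={got!r}")
```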

Version awareness. When you update a manual, the old version should be superseded, not deleted. Some older RAG implementations return duplicate, conflicting answers when several versions of the same manual are indexed. Check how the platform handles document versioning and whether users can see which version of a manual an answer came from.
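"Superseded, not deleted" can be pictured as a small data structure: each new version marks its predecessor rather than removing it, and queries read only the current one. This is a minimal sketch of the idea. `ManualIndex` and its methods are hypothetical names, not any platform's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ManualVersion:
    doc_id: str
    version: str
    superseded_by: Optional[str] = None  # version that replaced this one, if any


class ManualIndex:
    def __init__(self) -> None:
        self._versions: dict[str, list[ManualVersion]] = {}

    def add(self, doc_id: str, version: str) -> None:
        """Register a new version; the previous one is kept but marked superseded."""
        history = self._versions.setdefault(doc_id, [])
        if history:
            history[-1].superseded_by = version
        history.append(ManualVersion(doc_id, version))

    def current(self, doc_id: str) -> ManualVersion:
        """The only version that should be used to answer queries."""
        return self._versions[doc_id][-1]

    def history(self, doc_id: str) -> list[ManualVersion]:
        """All versions, oldest first, for audits and change tracking."""
        return list(self._versions[doc_id])


index = ManualIndex()
index.add("pdu-user-guide", "3.1")  # hypothetical document ID and versions
index.add("pdu-user-guide", "3.2")
print(index.current("pdu-user-guide").version)
```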

Access control and auditability. In regulated environments or organizations with strict information boundaries, not all technical staff should have access to all documentation. Enterprise platforms should support document-level permissions and query logs for compliance purposes.
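In code terms, document-level permissions mean filtering the searchable set before retrieval, and auditability means recording each query alongside what the user was allowed to search. The sketch below shows both under simple assumptions. The role names, document IDs, and log fields are hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical permissions: document ID -> roles allowed to query it
DOC_ROLES = {
    "ups-maintenance-manual": {"facilities", "admin"},
    "fire-suppression-sop": {"safety", "admin"},
}

query_log: list[dict] = []


def allowed_docs(role: str) -> set[str]:
    """Documents a role may search; retrieval should never look outside this set."""
    return {doc for doc, roles in DOC_ROLES.items() if role in roles}


def run_query(user: str, role: str, question: str) -> set[str]:
    """Record the query for compliance, then return the searchable documents."""
    docs = allowed_docs(role)
    query_log.append({
        "user": user,
        "role": role,
        "question": question,
        "docs_searched": sorted(docs),
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return docs


searched = run_query("alice", "facilities", "What is the UPS battery test interval?")
print(searched)
```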

Integration with existing workflows. The highest-adoption deployments we've seen are those where RAG is embedded directly in ticketing systems, monitoring dashboards, or mobile apps that technicians already use. Standalone portals that require a separate login get bypassed under time pressure.

Next steps

See how it handles your documentation. Request a demo to see Mojar query your actual equipment manuals, including scanned PDFs and multi-language documents.

Start with one equipment category. Start a free trial to index one vendor's documentation and measure query accuracy and speed before committing to a full deployment.

George Bocancios is a founding engineer at Mojar. He works with data center operations teams on documentation architecture and leads Mojar's technical documentation indexing pipeline.

Frequently Asked Questions

How does RAG work with equipment manuals?

RAG indexes your equipment manuals, service bulletins, and technical specs into a vector database. When a technician asks a question, the system retrieves the relevant sections and generates a direct answer with source citations and page numbers. No navigating PDFs or guessing keywords.

Can RAG handle scanned PDFs and manuals in other languages?

Yes. Enterprise RAG platforms use OCR to process scanned documents and can handle manuals in multiple languages. A technician can query in English and receive translated answers from Japanese or German source documents, with the original text shown for verification.

How long does implementation take?

Initial deployment for a focused use case (one equipment category or vendor) typically takes 2-4 weeks. Full library indexing for a large data center with hundreds of manuals can complete within a day using batch processing. The time-consuming part is gathering and organizing source documents.

How much time does documentation RAG save?

Based on our deployments, organizations typically see 85-92% reduction in documentation lookup time. For a 100-person technical team averaging 25 minutes per lookup, that translates to hundreds of hours recovered weekly. Payback periods under six months are common.

Can RAG compare equipment from different vendors?

Yes. RAG excels at cross-vendor comparisons because it can retrieve from multiple source documents simultaneously. A query about UPS TCO can pull specs from Eaton, Schneider, and Vertiv documentation and present a unified comparison table with citations to each vendor's source material.