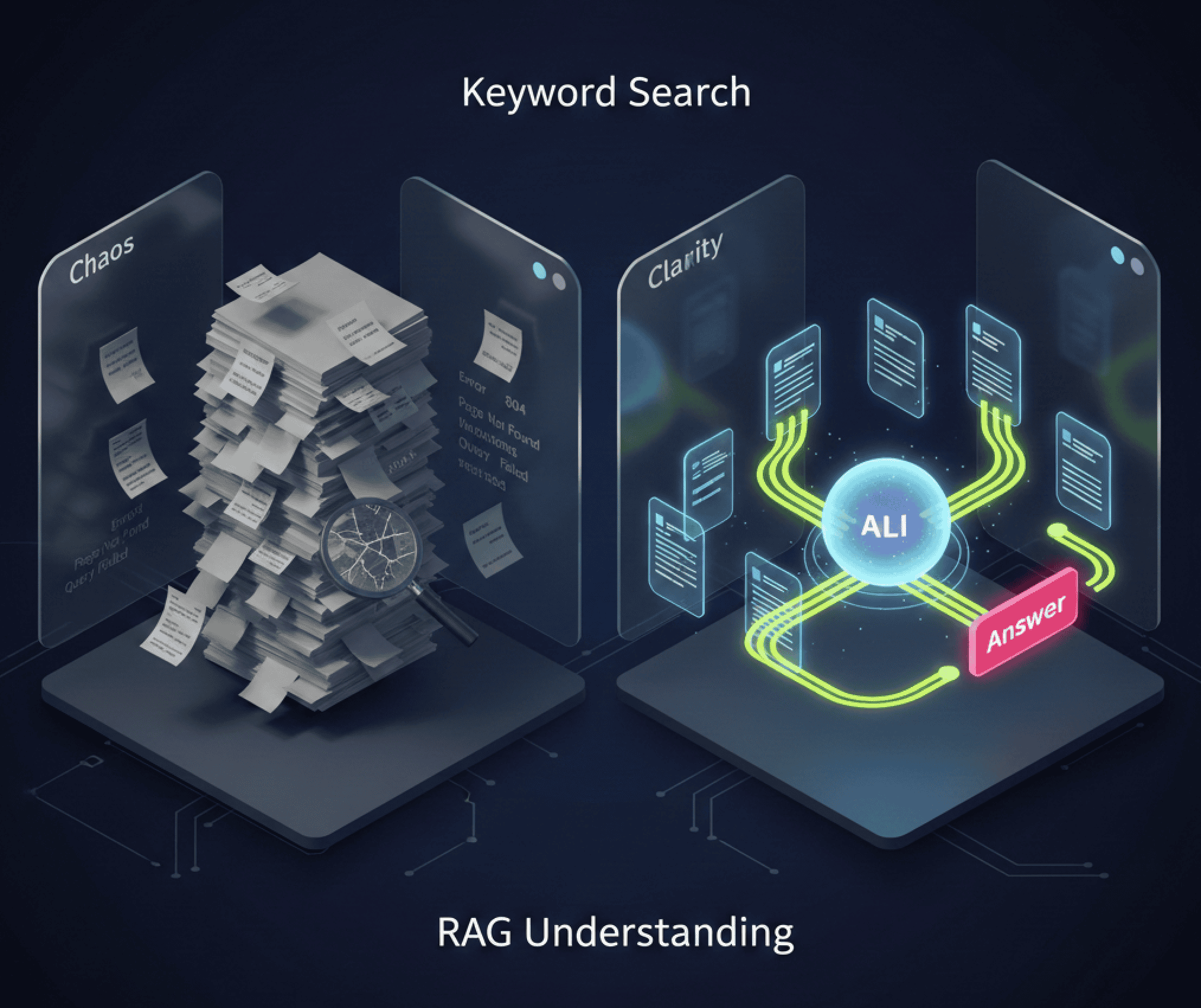

RAG vs traditional search for data center documentation

Keyword search returns 47 results. RAG returns an answer. For 3 AM emergencies costing $9,000/minute, the gap between 15 minutes and 5 seconds matters.


When search failures cost millions

A data center engineer needs to troubleshoot a cooling anomaly at 3 AM. They search the documentation portal for "CRAC unit temperature deviation protocol" and get:

- 47 results containing those keywords

- Outdated procedures from 2018

- Conflicting vendor manuals

- Generic best practices that don't apply to their specific equipment

15 minutes of searching. Zero useful answers.

We've seen this pattern consistently across the data center operations teams we work with. George Bocancios, Mojar's founder and a data center operations engineer, built our search-to-RAG approach after watching this exact failure repeat across facilities. The documentation exists; the search interface is the failure point. At $9,000 per minute per the Ponemon Institute, a 10-minute documentation search delay costs $90,000. Traditional keyword-based search systems weren't designed for the complexity of modern data center operations. They were designed for finding documents, not delivering answers.

Retrieval-Augmented Generation (RAG) changes this by understanding meaning, not just matching words.

Understanding the two approaches

Traditional search: the keyword matching era

Traditional search systems work on a simple principle:

- Index documents based on words they contain

- Match user queries to indexed keywords

- Rank results by keyword frequency and relevance signals

- Return a list of documents that might contain the answer

The Problem: Keywords don't capture meaning. "Critical temperature threshold" and "maximum operating temperature" mean the same thing but share only one generic word, "temperature", so a keyword engine treats them as a weak match at best.
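The four steps above can be sketched in a few lines. This is a minimal illustration, not how any particular search product is implemented: the two-document library and its ids are invented, and real engines add stemming, stopword handling, and stronger ranking functions.

```python
from collections import defaultdict

def tokenize(text):
    """Lowercase and split on whitespace; real engines also stem and drop stopwords."""
    return text.lower().split()

def build_index(docs):
    """Map each word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in tokenize(text):
            index[word].add(doc_id)
    return index

def keyword_search(index, query):
    """Rank documents by how many query words they contain."""
    hits = defaultdict(int)
    for word in tokenize(query):
        for doc_id in index.get(word, ()):
            hits[doc_id] += 1
    return sorted(hits.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical two-document library, invented for illustration.
docs = {
    "sop-hvac": "hvac emergency procedures",
    "spec-crac": "maximum operating temperature",
}
index = build_index(docs)

# No shared words with either document, so nothing comes back.
no_match = keyword_search(index, "cooling failure recovery")
# Matches only on the generic word "temperature".
weak_match = keyword_search(index, "critical temperature threshold")
```

The first query returns nothing even though the HVAC procedure is exactly what the engineer needs, and the second finds the right document only through one generic word that many unrelated documents would also contain.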

RAG: semantic understanding meets generation

RAG transforms search into intelligent question-answering:

- Embed documentation into semantic vectors that capture meaning

- Retrieve contextually relevant information based on intent

- Augment AI with precise, current documentation

- Generate direct answers with source citations

The Advantage: RAG understands that "cooling failure recovery" relates to "HVAC emergency procedures" even without shared keywords.
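To make the embed, retrieve, and augment steps concrete, here is a toy sketch. The `CONCEPTS` table is a hand-built stand-in for a trained embedding model, the chunk sources are hypothetical, and the generate step is left out: in a real system the prompt built at the end would be sent to a language model.

```python
import math

# Toy stand-in for an embedding model: each hand-picked term maps to a concept
# axis (cooling, emergency, power). A real system would call a trained model.
CONCEPTS = {
    "cooling": (1.0, 0.0, 0.0), "hvac": (1.0, 0.0, 0.0), "crac": (1.0, 0.0, 0.0),
    "failure": (0.0, 1.0, 0.0), "emergency": (0.0, 1.0, 0.0), "recovery": (0.0, 1.0, 0.0),
    "ups": (0.0, 0.0, 1.0), "battery": (0.0, 0.0, 1.0),
}

def embed(text):
    """Sum the concept vectors of known words, then normalize to unit length."""
    vec = [0.0, 0.0, 0.0]
    for word in text.lower().split():
        for i, value in enumerate(CONCEPTS.get(word, (0.0, 0.0, 0.0))):
            vec[i] += value
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec

def cosine(a, b):
    """Dot product of two unit vectors equals their cosine similarity."""
    return sum(x * y for x, y in zip(a, b))

def retrieve(query, chunks, top_k=1):
    """Retrieve step: return the chunks whose embeddings sit closest to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:top_k]

def build_prompt(query, retrieved):
    """Augment step: put retrieved text and its source in front of the question."""
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in retrieved)
    return f"Answer using only the context and cite the source.\n{context}\nQuestion: {query}"

# Hypothetical documentation chunks.
chunks = [
    {"source": "HVAC-SOP", "text": "HVAC emergency procedures"},
    {"source": "UPS-Manual", "text": "UPS battery replacement"},
]
top = retrieve("cooling failure recovery", chunks)
prompt = build_prompt("cooling failure recovery", top)
```

The query and the HVAC chunk share no words, yet the HVAC chunk ranks first because both land on the same concept axes. Carrying the source label into the prompt is what lets the generated answer cite where it came from.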

Here's a concrete example of how the two approaches handle the same query:

Query: "CRAC unit temperature deviation protocol"

Traditional search result:

→ 47 documents containing those keywords

→ User opens each document, scans for relevant section

→ Time to answer: 15+ minutes

RAG result:

→ "A setpoint deviation > 3°C triggers immediate inspection. Steps: (1) check evaporator coil for frost, (2) verify drain line is clear, (3) check refrigerant pressure at gauges. Source: CRAC Maintenance SOP v4.2, Section 3.1"

→ Time to answer: 5 seconds

This example illustrates why the difference matters most at 3 AM under SLA pressure.

Head-to-head comparison

Scenario 1: emergency troubleshooting

Query: "What should I do if I see error code E-47 on the UPS display?"

| Traditional Search | RAG-Powered Search |
|---|---|
| Returns 12 documents mentioning "error code" | Instantly provides: "Error E-47 indicates battery communication fault. Immediate actions: 1) Check battery module connections, 2) Verify battery management system status, 3) If critical load, prepare for bypass mode. See UPS Manual Section 8.4 for detailed diagnostics." |
| User must open and scan each document | Direct answer with exact procedure and source |
| 5-10 minutes to find answer | Answer in 3 seconds |
| MTTR: 45+ minutes | MTTR: 15 minutes |

Scenario 2: compliance documentation

Query: "What are our data retention requirements for incident logs?"

| Traditional Search | RAG-Powered Search |
|---|---|
| Returns compliance policy PDFs, legal documents, and email threads | "Per company policy DC-SEC-001 (updated Oct 2025), incident logs must be retained for 7 years. Critical security incidents require 10-year retention per SOC 2 Type II requirements. Automated archival to cold storage after 2 years. See Compliance Manual Section 3.2." |
| User must read multiple documents and reconcile conflicts | Synthesizes answer from multiple sources with current policy |
| Uncertainty about which policy is current | Clear citation to authoritative source with date |
| 15-30 minutes | 10 seconds |

Scenario 3: equipment specifications

Query: "Can we add 10 more racks to Row 7?"

| Traditional Search | RAG-Powered Search |
|---|---|
| Returns: floor plans, electrical diagrams, rack specifications | "Row 7 currently has 18 of 24 racks populated. Available power: 45kW remaining of 288kW circuit. Cooling capacity: adequate for 6 additional standard racks (6kW each). However, PDU-7B is at 87% capacity; recommend load balancing or PDU upgrade. See Capacity Plan Q1-2026 and Electrical Design Doc." |
| User must calculate from multiple sources | Instant analysis with actionable recommendation |
| Risk of missing constraints (PDU limit) | Comprehensive answer considering all factors |
| 30-60 minutes | 5 seconds |

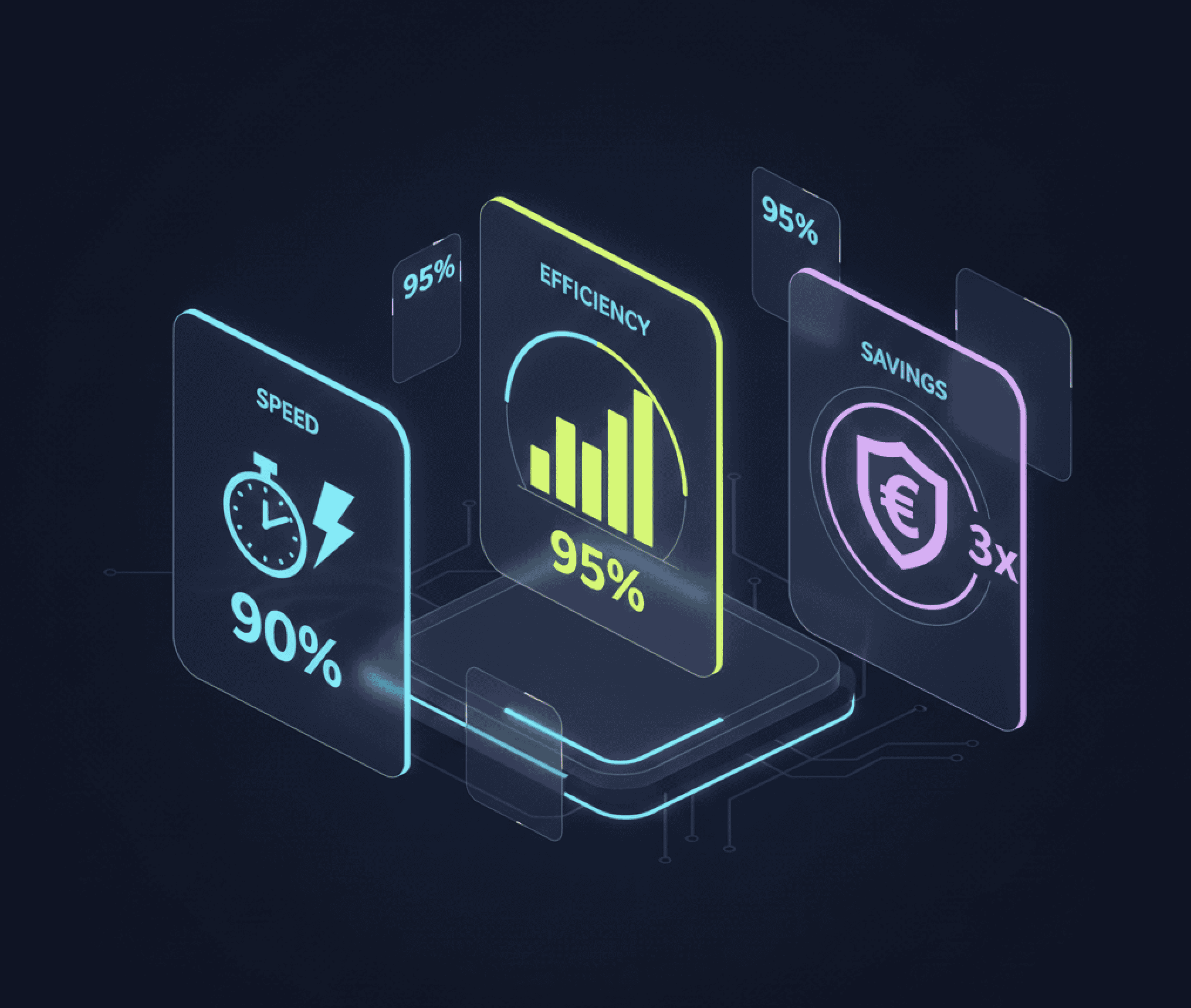

The business impact: by the numbers

Operational efficiency

| Metric | Traditional Search | RAG Implementation | Improvement |
|---|---|---|---|
| Average time to find critical info | 8-15 minutes | 30-60 seconds | 90% faster |
| Documents searched per query | 5-10 documents | N/A (direct answers) | 95% less effort |
| Search abandonment rate | 35-40% | 5-8% | 80% reduction |
| Successful first-query resolution | 25-30% | 75-85% | 3x improvement |

Financial impact

For a 20-person data center operations team:

| Cost Factor | Traditional Search | RAG System | Annual Savings |
|---|---|---|---|
| Time spent searching (30 min/day × $50/hr) | $130K/year | $26K/year | $104K |
| Incident resolution delays | $80K/year | $20K/year | $60K |
| Training on documentation tools | $25K/year | $10K/year | $15K |
| Documentation maintenance | $40K/year | $25K/year | $15K |
| Total Annual Impact | $275K | $81K | $194K savings |

ROI Calculation: With implementation costs of $50-80K for a team this size, the $194K in annual savings implies a payback period of roughly 3-5 months, before allowing for ramp-up time.
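The arithmetic behind these figures is easy to rerun with your own numbers. The sketch below assumes 260 working days per year (the value that reproduces the table's $130K search cost) and a straight division of implementation cost by monthly savings, with no allowance for ramp-up, which would lengthen the result.

```python
def annual_search_cost(team_size, minutes_per_day, hourly_rate, working_days=260):
    """Yearly cost of time spent searching; working_days=260 is an assumption."""
    return team_size * (minutes_per_day / 60) * hourly_rate * working_days

def payback_months(implementation_cost, annual_savings):
    """Months until cumulative savings cover the implementation cost."""
    return implementation_cost / (annual_savings / 12)

# 20-person team, 30 min/day, $50/hr, as in the table above.
search_cost = annual_search_cost(team_size=20, minutes_per_day=30, hourly_rate=50)

# $194K total annual savings against the $50-80K implementation range.
low = payback_months(50_000, 194_000)
high = payback_months(80_000, 194_000)

print(f"search cost: ${search_cost:,.0f}/year")
print(f"payback: {low:.1f} to {high:.1f} months")
```

Swapping in your own team size, loaded hourly rate, and measured search time gives a baseline that is easier to defend internally than an industry average.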

Real-world scenarios: what RAG implementation looks like

Scenario: large-scale colocation environment (200+ facilities)

Typical Challenges Without RAG:

- 50,000+ documentation searches per week across operations teams

- Average search time: 10-15 minutes per query

- 30-40% of searches abandoned without finding useful answers

- Engineers often maintain personal "cheat sheet" documents

Expected Outcomes With RAG:

- Average time to answer: under 1 minute

- Search abandonment drops to under 10%

- Engineers typically report 2-3 hours/week in time savings

Projected Impact: Multi-million dollar annual productivity gains, 20-30% faster incident resolution

Scenario: enterprise data center with legacy documentation

Common Problem: Decades of documentation across multiple systems, acquisitions, and vendors

Typical Traditional Search Issues:

- Conflicting information between old and new procedures

- No way to determine which document is authoritative

- Critical safety information buried in lengthy PDFs

How RAG Addresses This:

- Ingests all documentation with metadata (date, authority level, equipment version)

- Automatically weights recent, authoritative sources higher

- Surfaces conflicts when they exist rather than hiding them

Expected Benefits: Reduced risk of incidents due to outdated procedures, significantly faster onboarding for new engineers
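One way to picture the metadata weighting and conflict surfacing described above is a re-ranking pass over retrieved chunks. Everything here is an illustrative assumption: the `Chunk` fields, the two-year recency half-life, the multiplicative scoring formula, and the sample setpoint values are invented for the sketch.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Chunk:
    source: str
    similarity: float   # semantic similarity to the query, 0..1
    updated: date       # last revision date from document metadata
    authority: float    # 1.0 = approved SOP, lower for vendor notes or email
    answer: str         # the value this chunk asserts, used for conflict checks

def score(chunk, today, half_life_days=730):
    """Similarity scaled by authority and an exponential recency decay."""
    age_days = (today - chunk.updated).days
    recency = 0.5 ** (age_days / half_life_days)
    return chunk.similarity * chunk.authority * recency

def rank(chunks, today):
    """Order chunks so recent, authoritative sources come first."""
    return sorted(chunks, key=lambda c: score(c, today), reverse=True)

def conflicts(ranked):
    """Return lower-ranked chunks whose stated answer differs from the top one."""
    return [c for c in ranked[1:] if c.answer != ranked[0].answer]

# Hypothetical retrieved chunks: the 2018 procedure matches the query slightly
# better on wording, but it is far older.
today = date(2026, 1, 15)
retrieved = [
    Chunk("Cooling SOP 2018", 0.92, date(2018, 3, 1), 1.0, "setpoint 24C"),
    Chunk("Cooling SOP v4.2", 0.88, date(2025, 10, 1), 1.0, "setpoint 22C"),
]
ranked = rank(retrieved, today)
flagged = conflicts(ranked)
```

The newer procedure wins despite its lower raw similarity, and the 2018 document is returned as a flagged conflict instead of being silently dropped, so the engineer can see that an older procedure disagrees.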

Technical comparison: why RAG wins

Limitation 1: synonyms and terminology

Traditional Search: "Power failure" won't find "utility loss event" or "mains outage"

RAG: Understands semantic similarity—finds all related concepts regardless of terminology

Limitation 2: multi-step reasoning

Traditional Search: Can't combine information from multiple sources

RAG: Synthesizes answers from equipment manuals + facility procedures + historical incident data

Limitation 3: context awareness

Traditional Search: Doesn't understand that "replace battery" means something different for UPS vs smoke detectors

RAG: Uses query context to provide equipment-specific answers

Limitation 4: implicit questions

Query: "What changed in the cooling protocol?"

Traditional Search: Needs explicit version numbers or dates

RAG: Infers you want recent changes, compares current vs previous procedures

Limitation 5: negative queries

Query: "What should I NOT do during a generator test?"

Traditional Search: Keyword "not" often ignored, returns what TO do

RAG: Understands negation, provides safety warnings and prohibited actions
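The negation problem is easy to reproduce. The sketch below uses a hypothetical stopword list that includes "not", as common default lists do, and shows that the negative and positive questions collapse to the same search terms.

```python
# Hypothetical stopword list; "not" is included, as in many default lists.
STOPWORDS = {"what", "should", "i", "do", "not", "a", "during", "the"}

def keyword_terms(query):
    """Strip punctuation, lowercase, and drop stopwords before matching."""
    words = [w.strip("?.,").lower() for w in query.split()]
    return {w for w in words if w and w not in STOPWORDS}

negative = keyword_terms("What should I NOT do during a generator test?")
positive = keyword_terms("What should I do during a generator test?")

# Both queries reduce to the same two terms.
print(negative == positive, sorted(negative))
```

Once "not" is discarded, the engine has no way to tell the two questions apart, which is why the prohibited-actions section rarely ranks first.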

Migration guide: how to move from search to RAG

Phase 1: augmentation (months 1-2)

- Deploy RAG alongside existing search

- A/B test with 20% of users

- Collect feedback and usage metrics

- Refine embeddings and retrieval

Benefit: Zero disruption, prove value before full rollout
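A common way to run the 20% A/B split is deterministic hash bucketing, so each engineer sees the same experience on every visit. This is a sketch under assumptions: the `user_id` format and the function name are hypothetical, and your identity system may already offer a cohort mechanism.

```python
import hashlib

def in_rag_cohort(user_id, rollout_percent=20):
    """Deterministically assign a user to the RAG cohort based on a hash bucket."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100   # stable bucket in the range 0..99
    return bucket < rollout_percent

# Hypothetical user ids; the observed share should land near 20%.
users = [f"engineer-{n}" for n in range(1000)]
share = sum(in_rag_cohort(u) for u in users) / len(users)
```

Because assignment depends only on the user id, raising `rollout_percent` later keeps everyone already in the RAG cohort there, which keeps usage metrics comparable across phases.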

Phase 2: integration (months 3-4)

- Make RAG the default search experience

- Keep traditional search as fallback

- Train power users on advanced queries

- Integrate with ticketing and monitoring systems

Benefit: Smooth transition with safety net
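The "default with fallback" arrangement can be as simple as a confidence gate. In this sketch the result shape, the 0.6 threshold, and both backend stubs are assumptions made for illustration; a real deployment would tune the threshold against logged queries.

```python
def answer_with_fallback(query, rag_answer, keyword_search, min_confidence=0.6):
    """Prefer a cited RAG answer; fall back to the document list otherwise."""
    result = rag_answer(query)
    usable = (
        result is not None
        and result["confidence"] >= min_confidence
        and result["sources"]          # never show an answer without a citation
    )
    if usable:
        return {"mode": "rag", "answer": result["answer"], "sources": result["sources"]}
    return {"mode": "keyword", "documents": keyword_search(query)}

# Hypothetical stand-ins for the two backends.
def fake_rag(query):
    if "E-47" in query:
        return {"answer": "Battery communication fault.", "confidence": 0.9,
                "sources": ["UPS Manual Section 8.4"]}
    return {"answer": "", "confidence": 0.2, "sources": []}

def fake_keyword_search(query):
    return ["doc-1.pdf", "doc-2.pdf"]

confident = answer_with_fallback("error code E-47", fake_rag, fake_keyword_search)
unsure = answer_with_fallback("badge reader offline", fake_rag, fake_keyword_search)
```

Requiring at least one source before showing a generated answer is a deliberate choice here: during the transition, an uncited answer is treated the same as no answer.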

Phase 3: optimization (months 5-6)

- Remove traditional search

- Implement conversational follow-ups

- Add proactive suggestions

- Connect to real-time system data

Benefit: Full RAG capabilities unlocked

Common objections addressed

"our documentation is too complex for AI"

Reality: RAG handles complexity better than keyword search. The more complex your documentation, the greater the advantage of semantic understanding.

"traditional search is 'good enough'"

Cost Analysis: If engineers spend 30 minutes/day searching, that's about 6% of an 8-hour day. For a 100-person team at $75/hr, that comes to roughly $1M/year in lost productivity (100 × 0.5 hr × $75 × 260 working days ≈ $975K). IDC research puts the figure for knowledge workers higher still, at 2.5 hours per day, so the 30-minute assumption is a conservative one for technical operations environments.

"implementation is too expensive"

ROI Data: Average implementation cost: $300-500K. Average annual savings: $1.5-3M. Payback period: 2-4 months. Gartner research on AI in operations puts the productivity gains from AI-augmented knowledge retrieval at 20-40% for technical staff.

"what about search result ranking and filters?"

RAG Advantage: Instead of ranking documents by keyword relevance, RAG ranks by semantic relevance to your actual need. Advanced RAG systems still support filters (by date, equipment type, etc.) but apply them to more intelligent results.

The future: beyond search to predictive assistance

RAG is evolving beyond reactive search to proactive support:

Contextual awareness

Traditional: You search when you need something

RAG Future: System suggests relevant info based on what you're doing

Predictive documentation

Traditional: Static documents wait to be found

RAG Future: System predicts your next question based on task context

Learning systems

Traditional: Search doesn't improve over time

RAG Future: Learns from successful queries, continuously improves

Implementation checklist

- Audit current search usage (time spent, abandonment rate, common queries)

- Calculate baseline costs (productivity loss, delayed resolutions)

- Inventory documentation sources (wikis, PDFs, vendor docs, tickets)

- Identify pilot use cases (start with highest-pain areas)

- Select RAG platform (build vs buy vs customize)

- Establish success metrics (time to answer, user satisfaction, ROI)

- Plan migration timeline (3-6 months typical)

- Train power users (maximize adoption and feedback)

- Measure and optimize (track metrics monthly, refine continuously)

What we've found in practice

We've built RAG search systems on top of documentation libraries ranging from a few hundred documents to over 50,000. Our customers in data center operations consistently identify the same high-value starting points: emergency troubleshooting and compliance queries. Unlike general productivity use cases, these categories have clear, measurable response-time baselines before deployment, which makes the ROI case straightforward.

In practice, we learned that the teams getting the fastest results from RAG aren't the ones with the best documentation. They're the ones with the most painful search problems. We recommend measuring your current "abandonment rate" (queries where the user gives up and asks a colleague instead) before you start. That number becomes your baseline ROI metric.
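If your search portal keeps a query log, the baseline can be computed per category, which also points to the highest-pain area. The log schema below is hypothetical, and `resolved` is only a proxy: it assumes you can tell when a user opened a result or accepted an answer, whereas asking a colleague leaves no trace in the log.

```python
from collections import defaultdict

def abandonment_by_category(log):
    """Share of queries in each category that ended without a resolution."""
    totals = defaultdict(int)
    abandoned = defaultdict(int)
    for entry in log:
        totals[entry["category"]] += 1
        if not entry["resolved"]:
            abandoned[entry["category"]] += 1
    return {cat: abandoned[cat] / totals[cat] for cat in totals}

# Hypothetical query log, invented for illustration.
log = [
    {"category": "emergency", "resolved": False},
    {"category": "emergency", "resolved": True},
    {"category": "emergency", "resolved": False},
    {"category": "emergency", "resolved": True},
    {"category": "compliance", "resolved": True},
    {"category": "compliance", "resolved": True},
    {"category": "compliance", "resolved": False},
    {"category": "compliance", "resolved": True},
]
rates = abandonment_by_category(log)
worst = max(rates, key=rates.get)
```

Recomputing the same rates after a pilot gives a like-for-like comparison against the baseline.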

Our recommendation based on deployments we've run: start with your highest-pain query category, not your entire documentation library. The organizations that see the fastest ROI pick one scenario (emergency troubleshooting is common) and prove the value before expanding scope. Full deployment across all documentation typically follows within 6 months because the initial results make the case internally.

Traditional search is a bottleneck that data center operations teams have adapted around with personal cheat sheets, tribal knowledge, and senior engineer hotlines. RAG removes the need for those workarounds by making the documented answer findable in seconds. The real-world results are consistent: once teams see the difference in their own documentation, adoption spreads without a mandate.

One honest limitation worth stating: RAG doesn't fix bad documentation. If the relevant procedure doesn't exist in your documentation library, or if the indexed version is three years out of date, RAG will surface the wrong answer confidently. Our data from deployments consistently shows that teams with higher documentation quality see 20-30% better query success rates than teams with fragmented or stale libraries. We recommend a documentation audit before deployment, focused on your top 20 query categories, specifically to identify gaps before the system goes live.

The teams that see the fastest ROI aren't necessarily the ones with the largest documentation libraries. They're the ones who identify the highest-pain query category, fix the documentation for that category, and deploy RAG against it first. That focused approach typically produces measurable search abandonment reduction within 3-4 weeks, which is enough to build internal support for expanding scope.

If you want to see how this compares against your current setup, schedule a demo with your documentation as the test case.


Frequently Asked Questions

Do we need to migrate or restructure our documentation to use RAG?

No. RAG sits on top of your existing documentation. It indexes your SharePoint, Confluence, PDF manuals, and ticketing systems, then provides a smarter query interface. You don't need to migrate or restructure your documentation to get the benefits.

How long does implementation take?

A phased approach takes 3-6 months: 2 months for augmentation alongside existing search, 2 months for integration as the default, then optimization. Most teams see measurable productivity improvement within the first month.

What document formats can RAG handle?

Modern RAG systems handle PDFs, Word documents, HTML pages, Confluence pages, Notion, SharePoint, ticketing systems, email threads, and structured databases. Handwritten documents require OCR preprocessing with variable accuracy.