ChatGPT alternatives for healthcare knowledge management

Compare AI alternatives for hospital knowledge management. See why RAG with document grounding outperforms generic LLMs for safe clinical policy retrieval.

The problem with "just use ChatGPT"

Someone in your organization has already suggested it. A director who saw a demo at a conference. An IT analyst testing the free version at home. The pitch sounds reasonable: upload your thousands of policies, procedures, and protocols to ChatGPT, then let staff ask questions in plain English.

The impulse makes sense. Healthcare knowledge management is genuinely broken. Nurses spend 40% of their shifts on documentation and information retrieval (AACN). Clinical staff can't find the policies they need. New hires are overwhelmed by the sheer volume of institutional knowledge they're expected to absorb in their first weeks.

But generic AI tools like ChatGPT, Claude, and Gemini were designed for general knowledge tasks, not the specific demands of healthcare operations. When we started working with hospital systems on knowledge management at Mojar, we quickly learned that the gap between "impressive demo" and "safe for clinical use" is wider than most people expect.

Why generic LLMs fail in healthcare settings

Before comparing alternatives, it helps to understand the fundamental mismatch between consumer AI tools and healthcare knowledge management requirements.

Hallucinations without warning

Large language models generate text by predicting what words should come next. They don't "know" anything in the human sense. When they encounter gaps in their training data or your uploaded documents, they fill those gaps with plausible-sounding fabrications.

In a clinical context, this is dangerous. A hallucinated drug interaction. A fabricated policy reference. A confidently stated dosage that doesn't exist in any of your documentation. Generic LLMs provide no guardrails against this because they generate answers the same way whether the source material supports them or not.

We tested this firsthand during an early proof-of-concept with a hospital network. A nurse asked about conscious sedation protocols, and the model returned a blended answer that pulled from its general training data instead of the uploaded policy document. The response sounded authoritative but was factually wrong, citing procedures that didn't match the hospital's approved protocol.

No source attribution

When a nurse asks "What's our protocol for conscious sedation?" and gets an answer, they need to know where that answer came from. Which policy? Which section? When was it last updated? Who approved it?

ChatGPT and similar tools summarize information without consistent source tracking. Even custom GPTs with document uploads maintain only an opaque connection between generated text and source material. Staff cannot verify answers against official documentation, which makes the system unreliable for clinical decision support.

Static document handling

Healthcare documentation is dynamic. Policies change. Protocols update. Formularies revise quarterly. Generic AI tools treat uploaded documents as static knowledge bases. They don't flag when information becomes outdated. They don't detect when two documents contradict each other. They don't alert you that the answer they just gave came from a policy that was superseded six months ago.

The compliance gap

Using consumer AI tools for clinical knowledge raises serious compliance questions. Where does your data go? Who can access it? Is there an audit trail? Most generic AI platforms were not designed with HIPAA, access controls, or the other regulatory requirements that govern healthcare information systems in mind.

OpenAI offers Enterprise and Business tiers with BAA support, but the underlying tool still lacks healthcare-specific safeguards like document-level access controls, source verification, and clinical audit trails. For organizations handling protected health information, these gaps are a non-starter.

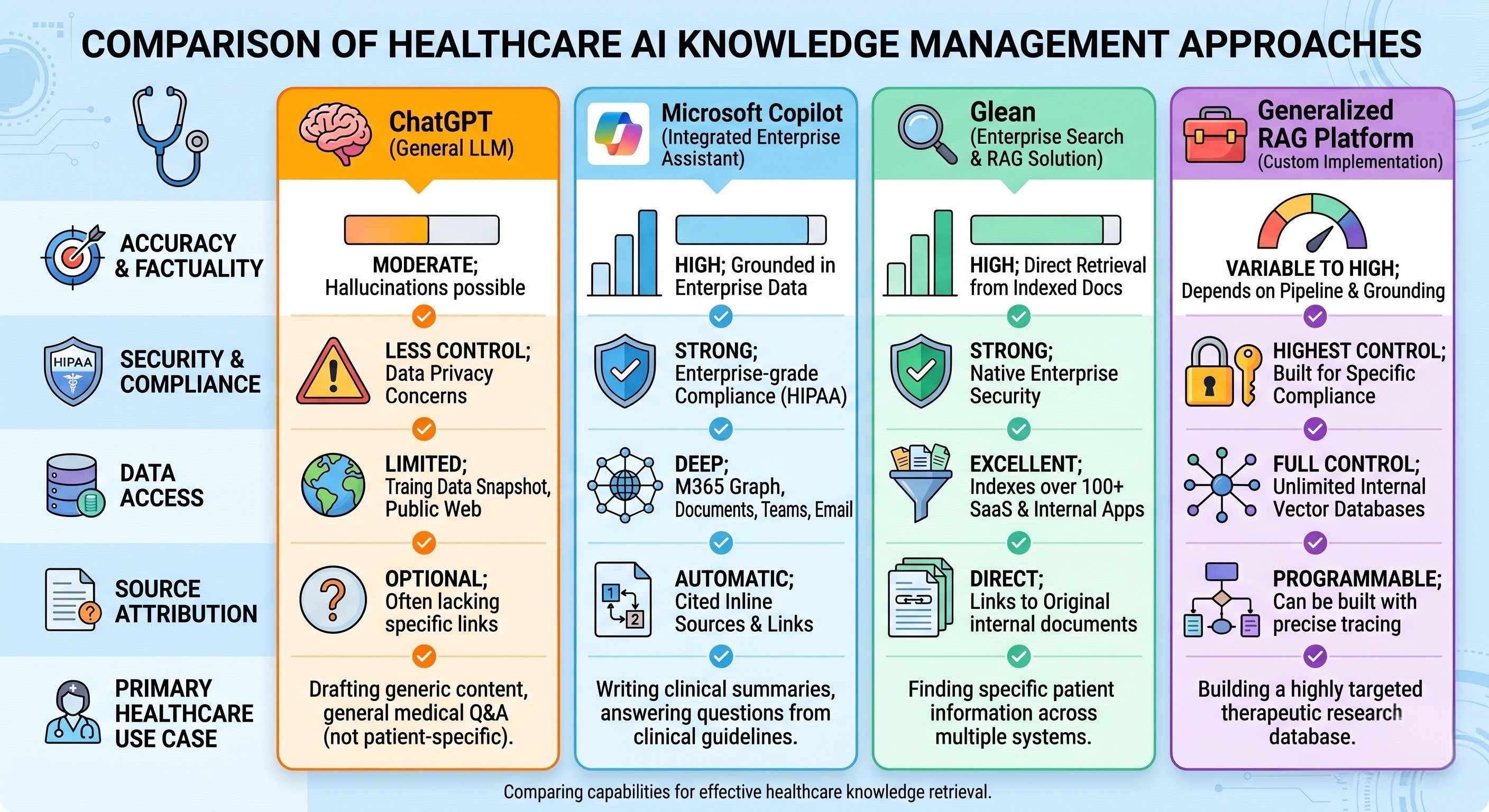

The alternative landscape

Given these limitations, what are the real options for healthcare organizations looking for AI-powered knowledge management? We tested and evaluated the major categories during our work with hospital systems. For a deeper technical guide, see our complete guide to RAG in healthcare.

Microsoft Copilot for Healthcare

Microsoft has invested heavily in healthcare-specific Copilot capabilities, integrating with Epic and other EHR systems. The pitch is compelling: AI embedded directly into the tools your staff already use.

What it does well:

- Deep Microsoft 365 integration for organizations already in that ecosystem

- EHR integration for clinical documentation workflows

- Enterprise security and compliance frameworks

Where it falls short:

Copilot excels at generating content within Microsoft applications but struggles with complex knowledge retrieval across heterogeneous document repositories. Your policies might live in SharePoint, but they might also live in legacy systems, scanned PDFs from acquired facilities, department-specific drives, and paper archives that were digitized poorly. Copilot's knowledge management is only as good as your existing SharePoint organization, which is probably part of the problem if you're reading this.

For pure knowledge management, Copilot works as a document creation and summarization tool, not as a systematic knowledge retrieval system. It won't detect that your nursing policy contradicts your pharmacy policy. It won't tell you that a document hasn't been updated since 2021.

Glean

Glean positions itself as enterprise search powered by AI. It connects to your existing systems (Google Workspace, Slack, Salesforce, Jira, and dozens more) and provides a unified search interface with natural language capabilities.

What it does well:

- Broad connector ecosystem for existing enterprise tools

- Strong permissions awareness (respects existing access controls)

- Clean, fast search interface

Where it falls short:

Glean is fundamentally a search tool. It finds documents. It does not necessarily answer questions from those documents. When a nurse asks "Can an RN remove a chest tube per our scope of practice?" Glean might return the right document, but the nurse still has to open it, read it, and locate the specific section.

Glean also doesn't solve the document quality problem. It surfaces what's there, whether it's current, contradictory, or accurate. For healthcare organizations struggling with version control, outdated policies, and conflicting department protocols, better search alone doesn't make the documents more reliable.

Traditional knowledge management platforms (Confluence, Notion, SharePoint)

These platforms represent the pre-AI approach to knowledge management. They provide structured repositories for documentation with varying degrees of search capability.

What they do well:

- Established, familiar interfaces

- Robust version control (when properly configured)

- Granular permissions and access controls

- Proven compliance frameworks

Where they fall short:

Traditional platforms solve the storage and organization problem without solving the retrieval problem. They require staff to navigate folder hierarchies, know document naming conventions, and manually search through results. As we documented in our analysis of hospital policy lookup problems, this is exactly where current systems fail clinical staff.

Adding AI features to these platforms (like Atlassian's Rovo or Notion AI) improves search but doesn't address the core challenge. These systems store documents. They don't maintain them. They don't detect contradictions. They don't improve over time based on what staff actually ask.

Specialized healthcare AI (Nuance, Suki, Ambience)

A growing category of AI tools focuses specifically on clinical workflows: documentation, coding, and ambient clinical intelligence. These tools listen to patient encounters, generate clinical notes, and handle administrative tasks.

What they do well:

- Deep clinical workflow integration

- Regulatory compliance built in

- Purpose-built for specific clinical use cases

Where they fall short:

These tools solve documentation and coding problems. They don't solve knowledge management problems. They help clinicians document what happened during a visit. They don't help staff find policies, protocols, drug information, or operational guidance. They complement knowledge management systems rather than replacing them.

Custom-built RAG systems

Some healthcare organizations build their own retrieval-augmented generation (RAG) systems using open-source tools like LangChain, LlamaIndex, or Haystack. This approach offers maximum control and customization.

What it does well:

- Complete control over architecture and data handling

- No vendor lock-in

- Customizable for specific organizational needs

Where it falls short:

Building enterprise-grade RAG systems is harder than the tutorials suggest. Production deployments require handling document parsing (including scanned PDFs that make up roughly 60% of healthcare documentation), managing embedding pipelines, optimizing retrieval accuracy, building user interfaces, implementing security controls, and maintaining the system over time.

We built our own RAG pipeline at Mojar, and even with a team dedicated to it, the engineering effort to reach production quality took months. Most healthcare IT departments are already stretched thin supporting EHRs and core clinical systems. Proof-of-concepts that never reach production, or production systems that become technical debt when their developer leaves, are the common outcomes.

What healthcare actually needs

After working with several hospital systems and watching where every category of tool breaks down, we've seen the same five requirements surface consistently. Our approach at Mojar was shaped by these real-world patterns.

1. Document grounding with source attribution

Every answer must cite its source: specific document, specific section, last updated date. Staff need to verify information against authoritative documentation. In clinical settings, trust without verification is a liability. This is the requirement that separates tools built for healthcare from tools adapted for it.
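To make the requirement concrete, here is a minimal Python sketch of what a cited answer could look like as data. It is an illustration under our own assumptions, not a description of any product's internals: the class names `Citation` and `GroundedAnswer` and their fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Citation:
    """Where an answer came from. All field names are illustrative."""
    document: str       # policy title or ID
    section: str        # section heading or number within the document
    last_updated: date  # date of the most recent approved revision


@dataclass(frozen=True)
class GroundedAnswer:
    text: str
    citations: tuple[Citation, ...]

    def render(self) -> str:
        """Format the answer with numbered sources; refuse to render without any."""
        if not self.citations:
            raise ValueError("an answer without a citation should not be shown to staff")
        sources = "\n".join(
            f"[{i}] {c.document}, {c.section} (last updated {c.last_updated.isoformat()})"
            for i, c in enumerate(self.citations, start=1)
        )
        return f"{self.text}\n\nSources:\n{sources}"
```

The design choice worth noting is that an uncited answer is treated as an error rather than a degraded result, so the interface can never display text that staff cannot trace back to a document.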

2. Hallucination prevention through retrieval

The system should only answer from uploaded documents. If the answer isn't in the knowledge base, the system should say so. This requires a RAG architecture that constrains the LLM to provided sources rather than letting it fill gaps from its general training.
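One common way to get the "say so" behavior is to gate generation on retrieval quality. The sketch below assumes the retriever returns a similarity score between 0 and 1 for each passage; the 0.6 threshold, the `ScoredPassage` type, and the `generate` callable are all hypothetical placeholders, and a real deployment would tune the threshold against its own documents.

```python
from typing import NamedTuple


class ScoredPassage(NamedTuple):
    source: str   # document and section the passage came from
    text: str
    score: float  # retrieval similarity; the 0-to-1 scale is an assumption


NOT_FOUND = "I could not find this in the approved documents."


def select_evidence(passages, min_score=0.6, top_k=3):
    """Keep the best passages that clear the threshold; may return an empty list."""
    strong = [p for p in passages if p.score >= min_score]
    return sorted(strong, key=lambda p: p.score, reverse=True)[:top_k]


def answer_or_abstain(passages, generate, min_score=0.6):
    """Call the model only when there is evidence; otherwise return a fixed refusal."""
    evidence = select_evidence(passages, min_score=min_score)
    if not evidence:
        return NOT_FOUND
    return generate(evidence)
```

Because the refusal happens before the model is called, a question with no supporting policy never reaches the point where the model could improvise an answer.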

3. Document quality management

The system should actively improve the knowledge base over time by flagging contradictions between documents, identifying outdated content, and surfacing documents that generate confused user feedback. Most platforms treat the knowledge base as static. Healthcare requires systems that maintain and improve documentation quality.

4. Universal document ingestion

Healthcare documentation comes in messy formats: scanned PDFs from the 1990s, Word documents with inconsistent formatting, Excel spreadsheets with critical data in merged cells, images with embedded text. The system must handle these without requiring IT to clean and reformat everything first.

When we first tested Mojar with a regional hospital's document set, about 40% of their policy library consisted of scanned PDFs with no OCR layer. If your system can't parse those, you're ignoring nearly half the knowledge base on day one.

5. Clinical workflow integration

The system must be faster than asking a colleague. If it takes more clicks to query the AI than to send a text to the unit group chat, staff will work around it. Integration with existing systems like intranets, EHRs, and communication platforms is essential for adoption. We learned this through hands-on deployment: the best retrieval system in the world fails if it adds friction to clinical workflows.

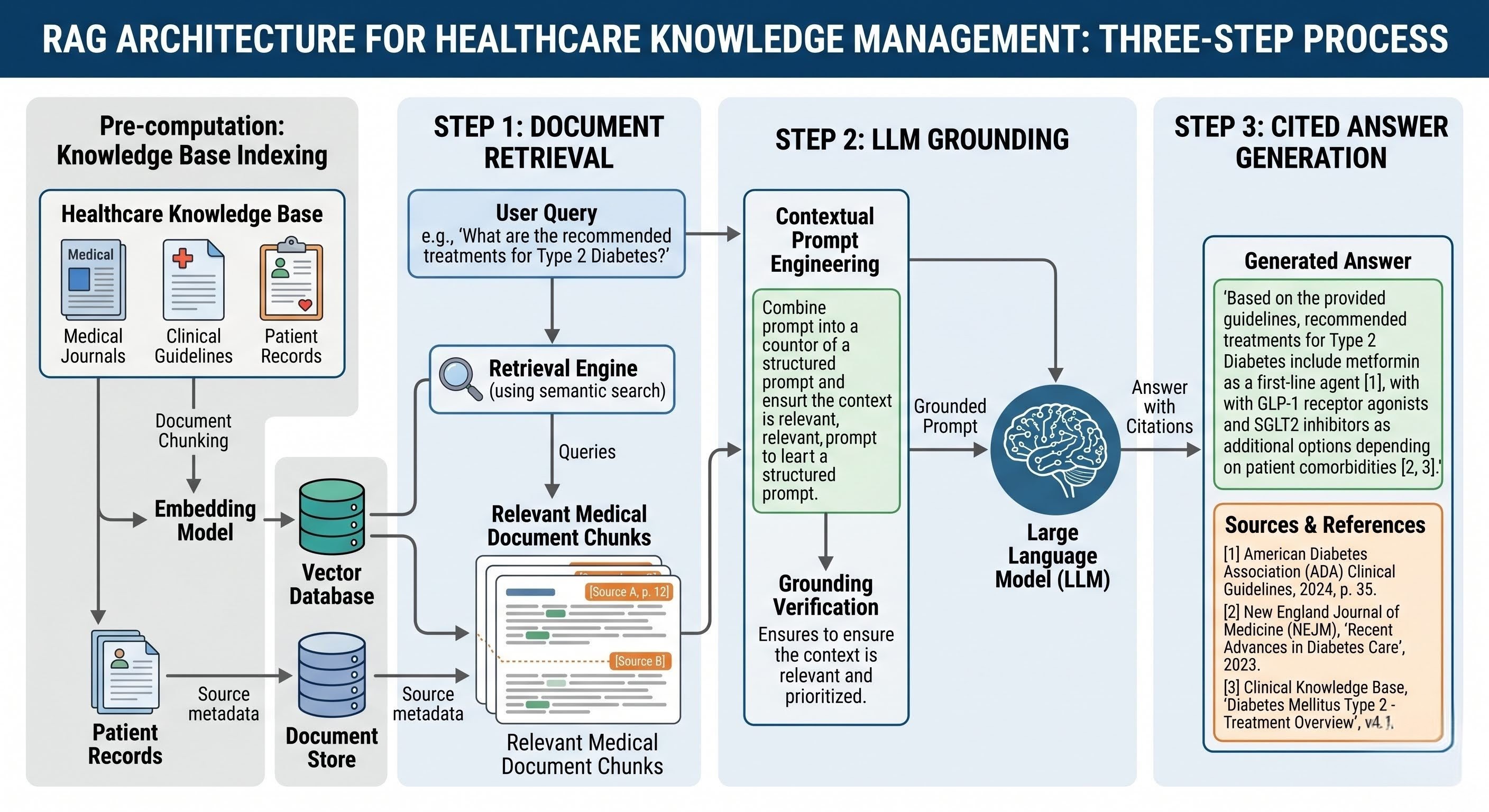

The RAG difference

Retrieval-Augmented Generation (RAG) takes a fundamentally different approach from the alternatives above. Instead of relying on an LLM's training data or general knowledge, RAG systems follow three steps:

- Retrieve relevant documents from your specific knowledge base

- Ground the LLM's response in those retrieved documents

- Generate answers only from retrieved content, with citations

This architecture directly addresses the core failures of generic AI in healthcare.

Hallucinations are minimized because the LLM is constrained to your uploaded documents, so it is far less likely to invent policies that don't exist. Source attribution is automatic because the system knows exactly which documents were retrieved to generate each answer. Document control is maintained because the knowledge base is yours. You decide what goes in, how it's updated, and who has access.
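The three steps can be sketched end to end in a few lines of Python. This is a toy under stated assumptions: word overlap stands in for the embedding search a production system would use, the knowledge base is a list of dictionaries with hypothetical `source` and `text` keys, and `generate` is whatever function calls your LLM.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, knowledge_base, top_k=2):
    """Step 1: rank passages by word overlap with the question (a stand-in for embeddings)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p["text"])), p) for p in knowledge_base]
    scored = [(s, p) for s, p in scored if s > 0]
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_grounded_prompt(question, passages):
    """Step 2: put the retrieved text in the prompt and restrict the model to it."""
    sources = "\n".join(
        f"[{i}] ({p['source']}) {p['text']}" for i, p in enumerate(passages, 1)
    )
    return (
        "Answer using only the numbered sources below and cite them like [1]. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


def answer(question, knowledge_base, generate):
    """Step 3: generate from the grounded prompt and return the sources alongside."""
    passages = retrieve(question, knowledge_base)
    if not passages:
        return {"answer": "Not found in the knowledge base.", "sources": []}
    prompt = build_grounded_prompt(question, passages)
    return {"answer": generate(prompt), "sources": [p["source"] for p in passages]}
```

Returning the source list next to the answer, rather than asking the model to recall it, is what makes attribution a property of the pipeline instead of a property of the model's output.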

The distinction matters in practice. Our customers in healthcare consistently report that grounded answers with citations change how staff interact with the system. When a nurse can tap on a source link and see the exact policy paragraph, the tool earns trust. Without that, adoption stalls within weeks.

Beyond basic RAG: autonomous knowledge maintenance

Most RAG platforms stop at retrieval and generation. They answer questions from your documents but do nothing to improve those documents over time. This gap determines whether a knowledge management system delivers lasting value or becomes another abandoned IT project.

Think about what happens when a nurse queries a RAG system and gets a confusing answer. In basic systems, that bad experience disappears. The nurse works around the system. The underlying documentation problem persists. Nobody learns from the failure.

Advanced RAG platforms include autonomous maintenance capabilities that close this loop:

Contradiction detection: The system analyzes your entire document repository and identifies conflicts. Policy A says 24 hours. Policy B says 48 hours for the same process. When we deployed Mojar at one healthcare customer, the system flagged three conflicting discharge documentation policies within the first week. Those contradictions had existed for over a year without anyone catching them.
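The 24-hour versus 48-hour case is the simplest kind of conflict to illustrate. The Python sketch below assumes statements have already been extracted and tagged with a topic, which is the hard part in practice; it only compares time limits expressed in hours or days. The function names and the input shape are hypothetical, and a real system would need semantic comparison well beyond this.

```python
import re
from collections import defaultdict

DURATION = re.compile(r"(\d+)\s*(hour|day)s?", re.IGNORECASE)
HOURS_PER_UNIT = {"hour": 1, "day": 24}


def durations_in_hours(text):
    """Extract every 'N hours' or 'N days' mention, normalized to hours."""
    return {int(n) * HOURS_PER_UNIT[unit.lower()] for n, unit in DURATION.findall(text)}


def find_conflicts(statements):
    """statements: iterable of (document, topic, text).

    Returns {topic: {hours: [documents]}} for every topic that has more than
    one distinct duration across its statements.
    """
    by_topic = defaultdict(lambda: defaultdict(list))
    for document, topic, text in statements:
        for hours in durations_in_hours(text):
            by_topic[topic][hours].append(document)
    return {
        topic: dict(values)
        for topic, values in by_topic.items()
        if len(values) > 1
    }
```

Normalizing units first matters: "1 day" in one policy and "24 hours" in another are the same rule and should not be flagged.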

Outdated content identification: The system recognizes when documents reference superseded regulations, former employees, or discontinued processes. It surfaces content for review based on signals beyond simple "last modified" dates.
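As a rough sketch of what "signals beyond last modified" could mean, the Python function below combines three checks: mentions of superseded items, mentions of former staff, and time since last review. The input lists, the dictionary keys, and the one-year default are assumptions for illustration; simple substring matching is used here where a real system would need something more robust.

```python
from datetime import date


def staleness_signals(doc, superseded_terms, former_staff, today, max_age_days=365):
    """Return human-readable reasons to review `doc`; an empty list means no signal fired.

    `doc` is a dict with hypothetical keys "text" (str) and "last_reviewed" (date).
    """
    reasons = []
    text = doc["text"].lower()
    for term in superseded_terms:
        if term.lower() in text:
            reasons.append(f"references superseded item: {term}")
    for name in former_staff:
        if name.lower() in text:
            reasons.append(f"names former employee: {name}")
    age = (today - doc["last_reviewed"]).days
    if age > max_age_days:
        reasons.append(f"not reviewed in {age} days")
    return reasons
```

Returning reasons rather than a yes/no flag gives the document owner something to act on when the item lands in their review queue.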

Feedback-driven improvement: When users mark answers as unhelpful, the system investigates the source documents, identifies why the answer failed, and flags the documentation for correction. Bad experiences become improvement signals rather than abandonment triggers.
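The first half of that loop, turning ratings into a review queue, can be sketched in Python as follows. The input shape (one record per rated answer, attributed to the source document) and both thresholds are assumptions chosen for illustration; diagnosing why an answer failed is a separate and harder step that this sketch does not attempt.

```python
from collections import Counter


def documents_to_review(feedback, min_votes=3, min_unhelpful_rate=0.5):
    """feedback: iterable of (source_document, was_helpful).

    Returns (document, unhelpful_rate) pairs for documents with enough votes
    and a high enough unhelpful rate, worst first.
    """
    total, unhelpful = Counter(), Counter()
    for document, was_helpful in feedback:
        total[document] += 1
        if not was_helpful:
            unhelpful[document] += 1
    flagged = [
        (doc, unhelpful[doc] / total[doc])
        for doc in total
        if total[doc] >= min_votes and unhelpful[doc] / total[doc] >= min_unhelpful_rate
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

The minimum vote count keeps a single frustrated click from sending a sound policy back for rewriting.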

Natural language content updates: Instead of editing documents directly, staff can update the knowledge base conversationally: "Add that our visitor policy changed effective March 1st." The system processes these instructions and proposes updates for review.

This transforms knowledge management from a passive repository into a self-improving system. The platform doesn't just answer questions; it actively maintains the quality and consistency of the underlying documentation.

Making the decision

Choosing the right approach depends on your current state and priorities. Use this table to map your primary challenge to the tools that address it:

| If your primary challenge is... | Consider... | But know that... |
|---|---|---|
| EHR documentation burden | Clinical AI (Nuance, Suki) | These don't solve policy or procedure access |
| Microsoft 365 integration | Microsoft Copilot | Knowledge quality depends on existing SharePoint organization |
| Finding documents across systems | Glean | You still need to read the documents; answers aren't synthesized |
| Organizing existing documentation | Confluence, Notion, or SharePoint | Staff still need to navigate and search manually |
| Complete control and customization | Custom RAG build | Requires significant development and maintenance resources |
| Systematic knowledge retrieval with maintenance | RAG with autonomous maintenance | Emerging capability, and few platforms offer it today |

The question worth asking is not "which AI tool should we use?" but "what problem are we actually trying to solve?"

If staff can't find policies, you need better retrieval. If policies contradict each other, you need quality management. If documentation is outdated, you need maintenance workflows. If all three are true, and in our experience they usually are, you need a platform that addresses the full lifecycle of healthcare knowledge management.

How to evaluate and implement

Regardless of which direction you go, the following factors determine whether the implementation succeeds or stalls.

Audit your documents first. No AI system fixes bad documentation. If your policies are poorly written, vague, or internally contradictory, AI will surface those problems faster (which is useful), but it won't fix them on its own. We recommend budgeting two weeks for a document audit before deployment. In our experience, this upfront investment saves months of cleanup later.

Plan for change management. Staff have adapted to broken knowledge systems by developing workarounds. They ask colleagues. They rely on memory. They develop local practices that may not align with official policy. Implementing AI requires building trust that the new system is faster, more reliable, and actually maintained. Pilot with one department, gather feedback, and expand from there.

Assign clear ownership. Someone needs to own the knowledge base. Who approves document updates? Who resolves contradictions? Who reviews AI-suggested improvements? Without clear governance, the system degrades over time no matter how good the technology is.

Embed the tool where staff already work. Standalone knowledge systems provide value, but embedded systems drive adoption. Browser extension, intranet widget, EHR sidebar: the fewer clicks between the question and the answer, the higher the usage. Our customers who moved from folder hierarchies to natural language search saw the biggest adoption gains when the tool was accessible within their existing intranet.

Define success metrics before you start. Time to find policies. Staff satisfaction scores. Audit preparation hours. New hire time-to-competency. Measure these at baseline and track improvement. If you can't show a measurable change in six months, something needs to adjust.

Where this leaves you

Generic AI tools are impressive technology, but they were not built for healthcare knowledge management. The requirements of clinical environments, specifically source attribution, hallucination prevention, document quality management, and compliance, demand specialized approaches.

RAG-based systems designed for enterprise knowledge management can deliver the benefits of conversational AI without the risks of generic LLMs. The ones that go further, actively maintaining and improving your documentation, address the problem most organizations actually have: not just finding information, but keeping it accurate and current.

If you're evaluating options for your organization, see how Mojar approaches healthcare knowledge management with autonomous document maintenance, contradiction detection, and source-grounded retrieval built for clinical environments. Book a demo to try Mojar with your own documents and see how it handles your specific policy library.

Frequently asked questions

Is ChatGPT HIPAA compliant for healthcare use?

ChatGPT's standard consumer and Plus plans are not HIPAA compliant. OpenAI offers Business and Enterprise tiers with a BAA, but the tool still lacks source attribution, hallucination prevention, and document-level access controls that healthcare knowledge management requires. A BAA alone doesn't make a tool clinically safe for policy retrieval.

What is RAG and why does it matter for healthcare?

Retrieval-Augmented Generation (RAG) constrains an LLM to answer only from your uploaded documents, with citations to the exact source. This sharply reduces hallucinations, provides verifiable source attribution, and keeps clinical policy answers grounded in your approved documentation. For a deeper technical overview, see RAG in healthcare: the complete guide.

Can I upload hospital policies to ChatGPT instead of using a RAG platform?

You can upload documents to ChatGPT via custom GPTs, but the tool does not provide consistent source attribution, cannot detect outdated or contradictory policies, and does not offer healthcare-grade access controls or audit trails. For policy retrieval where accuracy and traceability matter, a purpose-built RAG system is the safer approach.

How long does it take to deploy a healthcare RAG system?

A purpose-built RAG platform like Mojar can be deployed in days with existing document sets. Custom-built RAG systems using open-source frameworks typically take 3 to 6 months to reach production quality due to document parsing, embedding pipelines, security, and UI requirements.
