How AI Can Detect Conflicting Clauses Before They Become Litigation

Contracts assembled by multiple drafters hide contradictions. AI finds conflicting clauses before opposing counsel does, with cited sources and confidence scoring.

A contract is never written by one person. It's assembled: by associates pulling templates, partners redlining provisions, counterparties inserting carve-outs, and deal teams layering amendments on top of master agreements built years ago. The result, as we explored in "Is This the Latest Template?" Why Law Firm Knowledge Bases Fail, is a document management landscape where nobody can be certain what's current, what's been superseded, and what's quietly wrong. But there's a sharper problem hiding inside the contracts themselves: clauses that directly conflict with each other. Contract contradiction detection is the ability to identify those clauses before they become the centerpiece of a dispute. The question isn't whether your portfolio contains contradictions. It's whether your team finds them before opposing counsel does.

What contract contradictions actually look like

Contract contradictions aren't theoretical. They're specific, recurring, and expensive. In practice, they fall into four distinct patterns.

The classic: unlimited indemnification meets a liability cap

Section 12.1 of a technology licensing agreement provides that the vendor "shall indemnify, defend, and hold harmless the licensee against any and all claims arising from intellectual property infringement, without limitation." Section 12.2, drafted by a different attorney three weeks later, states that "the aggregate liability of either party under this Agreement shall not exceed the total fees paid during the preceding twelve-month period."

These two clauses directly contradict each other. If a $50 million IP infringement claim materializes, does the indemnification obligation apply without limit, or is it capped at last year's fees? The answer depends on which clause a court prioritizes, and that's exactly the kind of ambiguity that generates litigation.

This isn't a hypothetical pattern. In the Proskauer Rose case reported by the ABA Journal, a single copy-pasted clause contributed to $636 million in client losses and a malpractice suit the court refused to dismiss. The clause wasn't invented from scratch. It was copied from a prior deal. It just didn't belong in this one.

Cross-reference errors that survive redlining

Amendment No. 3 references "the termination provisions set forth in Section 8.3 of the Master Agreement." But the Master Agreement was restructured last year. What was Section 8.3 (Termination for Convenience) is now Section 9.1. Current Section 8.3 addresses data privacy obligations. The amendment now modifies the wrong provision, and nobody noticed because the section number looked right.
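A check for this kind of stale cross-reference can be sketched in a few lines of Python. The sketch below is illustrative only: the section table, the `expected_keyword` argument, and the function name `check_cross_references` are assumptions made for the example, not a description of any particular product.

```python
import re

# Hypothetical current section headings of the restructured Master Agreement.
master_sections = {
    "8.3": "Data Privacy Obligations",
    "9.1": "Termination for Convenience",
}

# The reference as it appears in Amendment No. 3.
amendment_text = (
    "the termination provisions set forth in Section 8.3 of the Master Agreement"
)

SECTION_REF = re.compile(r"Section\s+(\d+(?:\.\d+)*)")

def check_cross_references(text, sections, expected_keyword):
    """Return (section, heading) pairs whose heading lacks the expected keyword."""
    mismatches = []
    for number in SECTION_REF.findall(text):
        heading = sections.get(number)
        # A missing section or an off-topic heading both count as a mismatch.
        if heading is None or expected_keyword.lower() not in heading.lower():
            mismatches.append((number, heading))
    return mismatches

stale = check_cross_references(amendment_text, master_sections, "termination")
print(stale)  # [('8.3', 'Data Privacy Obligations')]
```

The section number resolves, which is why a human skim passes it. The mismatch only appears when the reference is compared against what the target section is actually about.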

Implicit conflicts: outdated legal references

A force majeure clause cites UCC § 2-615 and its original 1952 commentary as the governing standard for excuse of performance. But years of post-COVID judicial interpretation have substantially reshaped how courts apply force majeure doctrines. The clause isn't technically wrong, since it references a real statute, but it relies on interpretive assumptions that no longer hold, creating a gap between what the drafter intended and how a court would read it today.

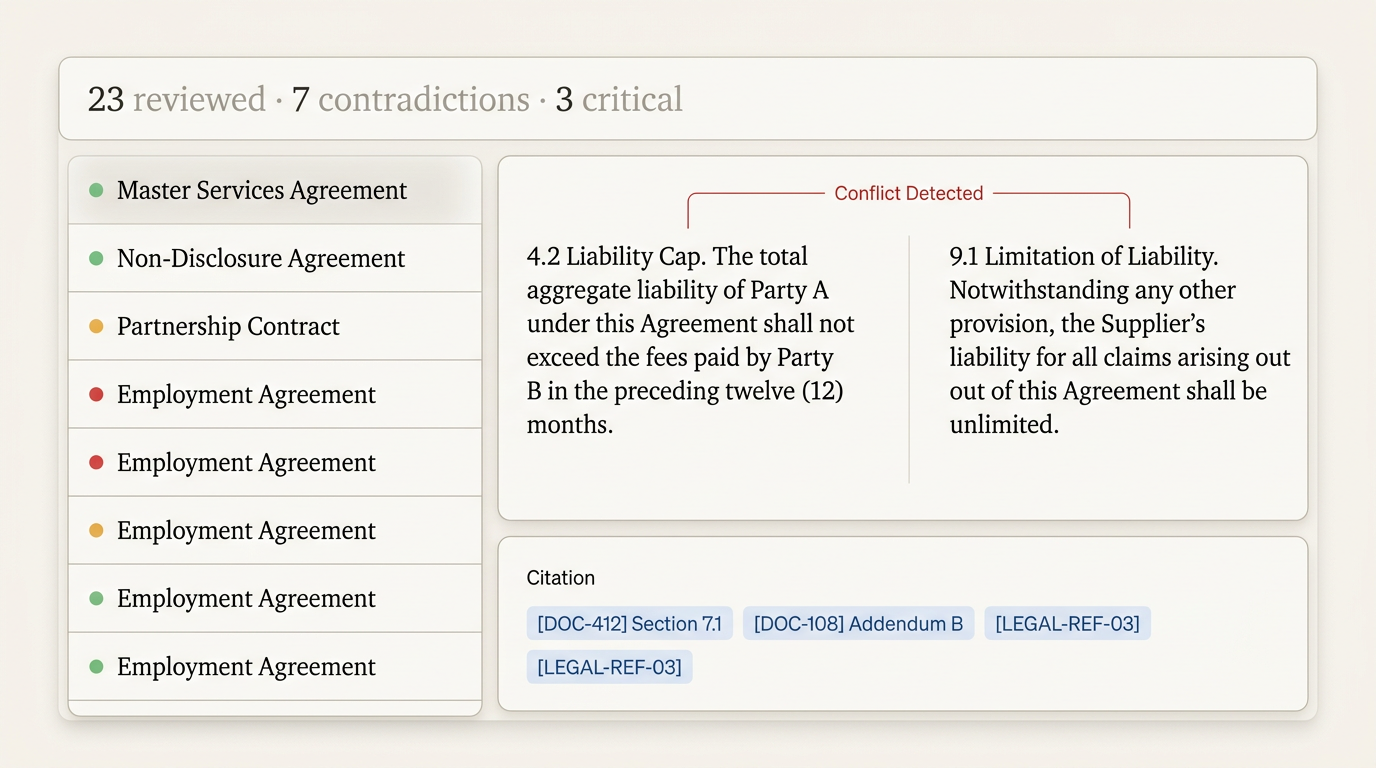

Portfolio-level contradictions

The master services agreement caps liability at $5 million. SOW #14, executed eighteen months later, contains an unlimited indemnification obligation for data breaches. Amendment No. 7 to the MSA raises the cap to $10 million but doesn't address the SOW-level indemnity. Three documents, three different liability regimes, zero clarity on which governs.
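To make the three-document example concrete, here is a minimal sketch that groups documents by the liability regime each one states. The `LiabilityTerm` type and the extracted values are hypothetical. The sketch deliberately does not model supersession or order-of-precedence clauses, which is exactly the judgment an attorney would still need to supply.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class LiabilityTerm:
    document: str
    cap_usd: Optional[int]  # None means the document states no cap (unlimited)

# Hypothetical extracted terms mirroring the example above.
terms = [
    LiabilityTerm("MSA", 5_000_000),
    LiabilityTerm("SOW #14", None),
    LiabilityTerm("Amendment No. 7", 10_000_000),
]

def distinct_regimes(terms):
    """Group document names by the liability cap they state."""
    regimes = {}
    for term in terms:
        regimes.setdefault(term.cap_usd, []).append(term.document)
    return regimes

regimes = distinct_regimes(terms)
needs_review = len(regimes) > 1  # more than one regime in one relationship

for cap, documents in regimes.items():
    label = "unlimited" if cap is None else f"${cap:,}"
    print(label, "->", documents)
```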

In Coinbase, Inc. v. Suski (2024), the Supreme Court confronted exactly this scenario — conflicting contracts with different provisions on arbitrability — and held that courts, not arbitrators, must determine which contract governs when the agreements themselves conflict. As the Oxford Business Law Blog analyzed, courts increasingly apply contextual interpretation and "specific over general" principles to resolve these conflicts. ScanMyContract documents how courts use harmonization doctrines and priority-of-terms clauses, but those doctrines only help if the contradiction is identified before it reaches a courtroom.

And the base rate for these errors is higher than most firms admit. Research from the Legal AI Benchmarking Phase 2 study found that human lawyers produce reliable first drafts only 56.7% of the time. In the Terraform case documented by ContractsProf Blog, a single-word error, "buyers" instead of "buyer", triggered over $300 million in liability and malpractice claims against Orrick and Cleary Gottlieb. One character. Nine-figure consequences.

How AI approaches contradiction detection

Not all approaches to this problem are equal. The solution landscape spans a wide range of sophistication, cost, and effectiveness.

| Approach | How it works | What it catches | What it misses |
|---|---|---|---|
| Manual review | Experienced attorneys read clause by clause | Nuanced tensions requiring contextual judgment | Scale: impractical across large portfolios |
| Keyword/rules-based tools | Flag predefined term patterns | Known patterns (e.g., "indemnify" + "limitation of liability") | Novel conflicts using different terminology |
| AI-powered semantic analysis (RAG) | Maps meaning across clause relationships | Semantic conflicts regardless of exact wording, including cross-document portfolio contradictions | Intentional carve-outs may generate false positives requiring attorney review |

Manual review

The traditional approach: experienced attorneys reading every clause, comparing provisions, and identifying conflicts through expertise and attention. This works. Senior lawyers with deep subject matter knowledge can catch contradictions that automated systems miss: nuanced tensions between provisions that require contextual understanding of how courts in a particular jurisdiction interpret specific language.

The problem isn't quality. It's scale. A human reviewer examining a 200-page contract can sustain focused attention for a limited period before fatigue degrades accuracy. Across a portfolio of 5,000 contracts, manual review becomes a multi-year, multi-million-dollar project. And the portfolio keeps growing while the review is underway.

Keyword search and rules-based tools

A step up from manual review: tools that search for specific terms and flag predefined patterns. Configure a rule to alert when "indemnify" and "limitation of liability" appear in the same contract. Search for "Section 8" references and check they point to the right content.

These tools catch what they're programmed to catch. The limitation is semantic: they operate on keywords, not meaning. "Indemnify" and "hold harmless" are synonyms that trigger different rules. A liability cap expressed as "aggregate exposure shall not exceed" won't match a rule looking for "limitation of liability." Rules-based tools are useful for known patterns but blind to novel conflicts.
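The blind spot is easy to reproduce. The following sketch implements the rule described above as a plain substring check. The function name `keyword_rule` and both sample contracts are invented for illustration.

```python
def keyword_rule(contract_text):
    """Flag a contract only if both trigger phrases appear verbatim."""
    text = contract_text.lower()
    return "indemnify" in text and "limitation of liability" in text

# Uses the exact vocabulary the rule was written for.
contract_a = (
    "Vendor shall indemnify Licensee without limitation. "
    "Limitation of Liability: aggregate liability shall not exceed fees paid."
)

# Same conflict, different vocabulary.
contract_b = (
    "Supplier shall defend and hold harmless Customer against all claims. "
    "Aggregate exposure shall not exceed the fees paid in the prior twelve months."
)

print(keyword_rule(contract_a))  # True
print(keyword_rule(contract_b))  # False
```

Both contracts pair an uncapped indemnity with a fee-based cap, but only the first is flagged. Adding more synonyms to the rule narrows the gap without closing it.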

AI-powered semantic analysis (RAG)

Retrieval-augmented generation represents a fundamentally different approach. Rather than matching keywords, RAG systems understand meaning. They parse the semantic content of clauses, map relationships between provision types, and detect when two or more clauses create incompatible obligations, even when those clauses share no common terminology.

Critically, RAG-based systems work across documents, not just within them. A contradiction between a master agreement and an amendment executed two years later is just as detectable as a conflict between adjacent sections. The system doesn't care about document boundaries — it cares about obligation consistency.

Evaluation framework: what to look for

If you're evaluating contradiction detection tools, whether for a firm, legal department, or ALSP, these are the capabilities that matter:

- Intra-document detection. The baseline: can the system identify conflicting provisions within a single contract? Indemnification vs. liability cap. Termination for convenience vs. minimum commitment period. Governing law vs. jurisdiction clause inconsistencies.

- Cross-document detection. The harder problem: contradictions that span master agreements, amendments, SOWs, and side letters. A system that only analyzes documents in isolation misses the most dangerous class of conflicts, the ones that accumulate across a contractual relationship over time.

- Source attribution. When the system flags a contradiction, does it cite exact clauses? Section numbers, page references, document names. Attorneys need to verify findings, not trust them blindly. A flag without a citation is noise.

- Historical pattern detection. Can the system identify that a particular contradiction type recurs across 40% of your vendor agreements? Pattern detection turns individual findings into systemic insights that inform template governance.

- Integration with existing DMS. Documents live in iManage, NetDocuments, SharePoint, and internal repositories. A contradiction detection tool that requires manual upload of every document is a demonstration, not a solution.

- Human review workflow. The system should flag for attorney review, not auto-correct. Contract contradictions often involve judgment calls, and resolution requires understanding the commercial context, not just the textual conflict.
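As a rough illustration of the historical pattern detection criterion above, the sketch below computes how often each contradiction type recurs across a portfolio. The finding tuples, contract IDs, and portfolio size are made up for the example, and a real system would draw them from its own findings store.

```python
from collections import defaultdict

# Hypothetical findings: (contract_id, contradiction_type).
findings = [
    ("vendor-001", "indemnity_vs_cap"),
    ("vendor-002", "indemnity_vs_cap"),
    ("vendor-002", "stale_cross_reference"),
    ("vendor-004", "indemnity_vs_cap"),
    ("vendor-005", "indemnity_vs_cap"),
]
portfolio_size = 10  # total vendor agreements scanned, including clean ones

def recurrence_rates(findings, portfolio_size):
    """Share of contracts in the portfolio affected by each contradiction type."""
    contracts_by_type = defaultdict(set)
    for contract_id, kind in findings:
        # A set counts each contract once per type, even with repeated findings.
        contracts_by_type[kind].add(contract_id)
    return {
        kind: len(contracts) / portfolio_size
        for kind, contracts in contracts_by_type.items()
    }

rates = recurrence_rates(findings, portfolio_size)
for kind, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{kind}: {rate:.0%} of contracts")
```

A type that shows up in a large share of agreements usually points to a template problem rather than a one-off drafting slip.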

How RAG-based contradiction detection works

Understanding the mechanics helps evaluate whether a system is genuinely detecting contradictions or simply matching keywords with better marketing.

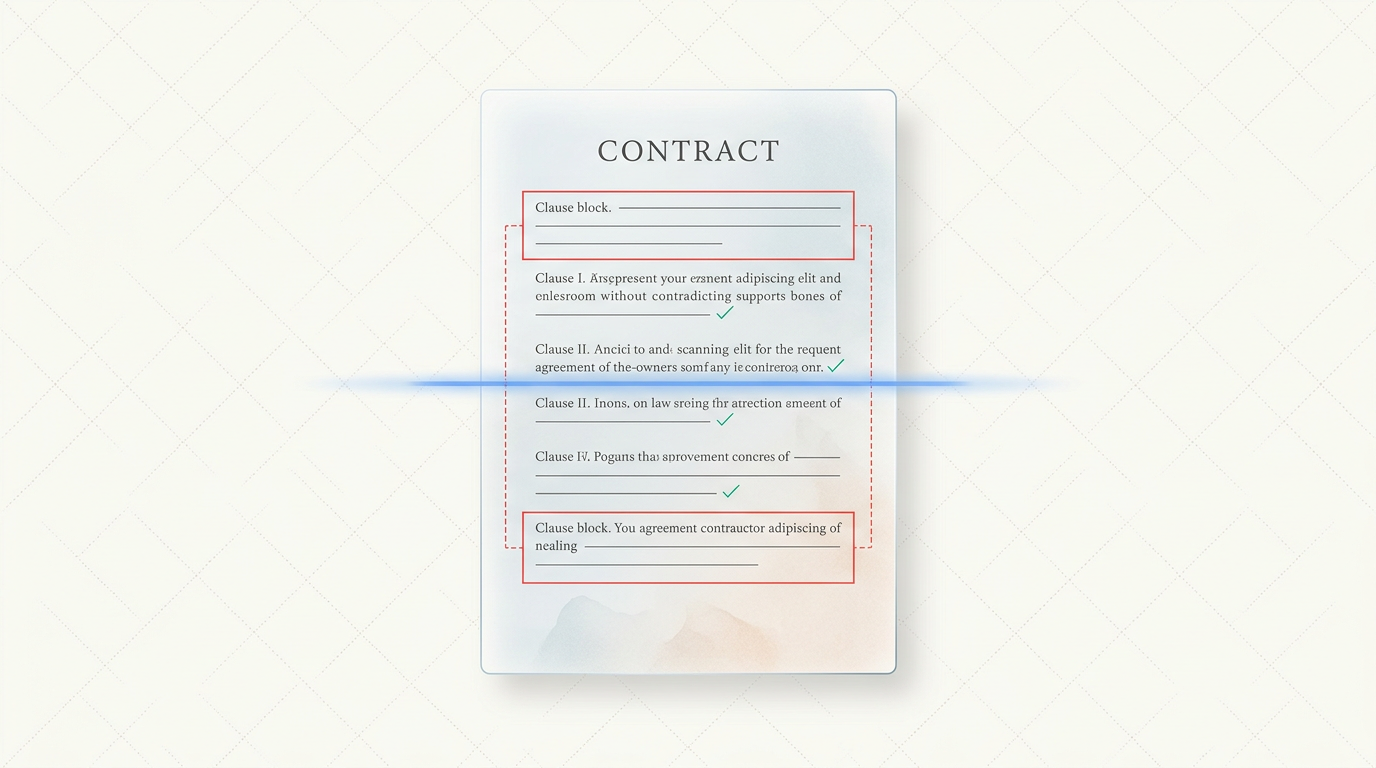

Document ingestion and clause-level indexing

The process begins by parsing contracts into their constituent parts. Not paragraphs — clauses. Each provision is extracted, classified by type (indemnification, liability, termination, governing law, confidentiality, force majeure), and indexed as a discrete unit. This clause-level granularity is essential: contradictions exist between specific provisions, not between documents in the abstract.
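A minimal sketch of clause-level parsing follows, assuming contracts whose provisions begin with dotted section numbers such as "12.1". The regular expression, the `TYPE_KEYWORDS` table, and the function names are assumptions for illustration. In particular, the keyword classifier is only a placeholder for whatever model-based classification a production system uses.

```python
import re

# Matches lines that start a numbered provision, e.g. "12.1 The vendor ...".
CLAUSE_START = re.compile(r"^(\d+(?:\.\d+)+)\s+(.*)$")

# Placeholder classifier vocabulary (hypothetical, far from complete).
TYPE_KEYWORDS = {
    "indemnification": ("indemnify", "hold harmless"),
    "liability": ("aggregate liability", "shall not exceed"),
    "termination": ("terminate",),
}

def classify(text):
    """Return the first clause type whose keywords appear in the text."""
    lowered = text.lower()
    for clause_type, keywords in TYPE_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return clause_type
    return "other"

def split_into_clauses(contract_text):
    """Split text into numbered clauses and attach a type label to each."""
    raw = []
    for line in contract_text.splitlines():
        stripped = line.strip()
        match = CLAUSE_START.match(stripped)
        if match:
            raw.append([match.group(1), match.group(2)])
        elif raw and stripped:
            raw[-1][1] += " " + stripped  # continuation of the previous clause
    return [
        {"section": number, "text": text, "type": classify(text)}
        for number, text in raw
    ]

sample = """12.1 The vendor shall indemnify, defend, and hold harmless the licensee
against any and all claims, without limitation.
12.2 The aggregate liability of either party shall not exceed the total fees paid."""

clauses = split_into_clauses(sample)
for clause in clauses:
    print(clause["section"], clause["type"])
```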

Semantic embeddings

Each clause is converted into a mathematical representation, a vector embedding, that captures its meaning, not just its words. "The vendor shall indemnify without limitation" and "supplier bears unlimited obligation to defend and hold harmless" produce similar embeddings despite sharing almost no terminology. This is what separates semantic analysis from keyword search: the system understands that two differently worded clauses impose the same obligation.
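The comparison step can be illustrated with cosine similarity, the usual measure of how closely two embedding vectors point in the same direction. Important caveat: `toy_embed` below is a hand-written lexicon standing in for a learned embedding model, so the sketch stays self-contained. It demonstrates the comparison, not how real embeddings are produced.

```python
import math

# Hand-written stand-in for a learned embedding model (assumption for this sketch).
AXES = ["indemnity", "unlimited", "cap", "provider"]
LEXICON = {
    "indemnify": "indemnity",
    "hold harmless": "indemnity",
    "without limitation": "unlimited",
    "unlimited": "unlimited",
    "shall not exceed": "cap",
    "vendor": "provider",
    "supplier": "provider",
}

def toy_embed(text):
    """Map a clause onto concept axes by phrase lookup."""
    lowered = text.lower()
    vector = [0.0] * len(AXES)
    for phrase, concept in LEXICON.items():
        if phrase in lowered:
            vector[AXES.index(concept)] += 1.0
    return vector

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

clause_1 = "The vendor shall indemnify without limitation"
clause_2 = "supplier bears unlimited obligation to defend and hold harmless"
clause_3 = "aggregate liability shall not exceed the total fees paid"

same_obligation = cosine_similarity(toy_embed(clause_1), toy_embed(clause_2))
different_topic = cosine_similarity(toy_embed(clause_1), toy_embed(clause_3))
print(round(same_obligation, 2), round(different_topic, 2))
```

The first two clauses land on the same concept axes despite different wording, while the liability cap lands elsewhere. A learned model does this mapping without a hand-built lexicon.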

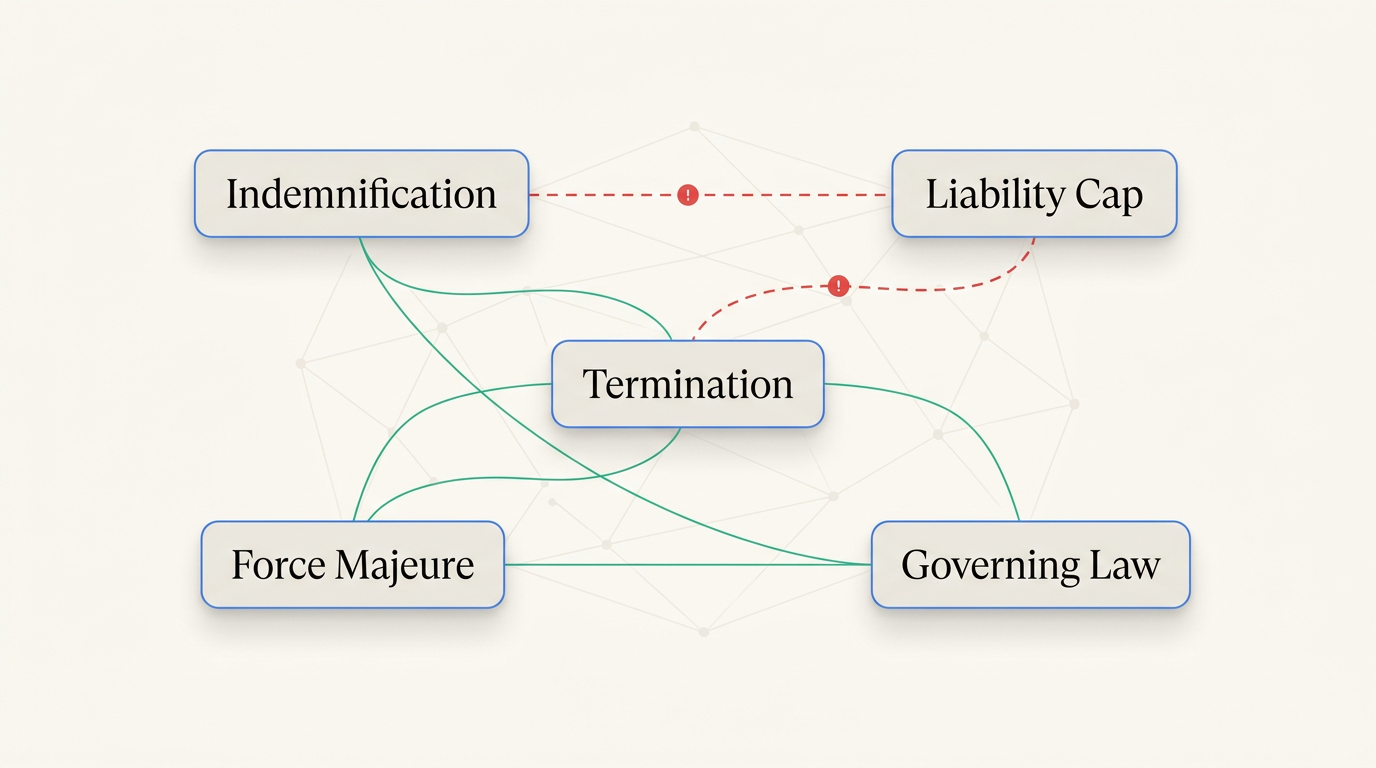

Relationship mapping

The system maps expected relationships between clause types. Indemnification provisions relate to liability limitations. Termination triggers relate to notice requirements. Governing law relates to jurisdiction and dispute resolution. These relationship maps define where contradictions are most likely to occur, and enable the system to prioritize its analysis on the highest-risk clause interactions.

Research supports this approach. The Automated Consistency Analysis for Legal Contracts paper demonstrates a formal-logic approach to contract consistency checking (the ContractCheck tool), confirming that automated systems can identify logically incompatible provisions. RAG-based systems extend this concept with semantic understanding, catching implicit conflicts that formal logic alone may miss. In real-world deployments, the relationship mapping layer is where the system earns its keep: it's what enables the detection of portfolio-level contradictions, not just clause-level ones within a single document.
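One way to picture the relationship map is as a set of clause-type pairs that decides which clause combinations get analyzed at all. The sketch below is a simplified assumption, not a description of any vendor's implementation. The type names, the `RELATED_TYPES` set, and the sample clauses are hypothetical, and it does not compare two clauses of the same type.

```python
from itertools import combinations

# Hypothetical relationship map: clause types expected to interact.
RELATED_TYPES = {
    frozenset({"indemnification", "liability"}),
    frozenset({"termination", "notice"}),
    frozenset({"governing_law", "dispute_resolution"}),
}

# Hypothetical indexed clauses drawn from two documents.
clauses = [
    {"doc": "MSA", "section": "12.1", "type": "indemnification"},
    {"doc": "MSA", "section": "12.2", "type": "liability"},
    {"doc": "MSA", "section": "15.4", "type": "confidentiality"},
    {"doc": "SOW #14", "section": "6.2", "type": "indemnification"},
]

def candidate_pairs(clauses):
    """Keep only clause pairs whose types appear together in the relationship map."""
    pairs = []
    for first, second in combinations(clauses, 2):
        if frozenset({first["type"], second["type"]}) in RELATED_TYPES:
            pairs.append((first, second))
    return pairs

pairs = candidate_pairs(clauses)
for first, second in pairs:
    print(f'{first["doc"]} {first["section"]} <-> {second["doc"]} {second["section"]}')
```

Of the six possible pairings here, only two are sent on for closer analysis, and one of them crosses the document boundary between the MSA and the SOW.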

Contradiction scoring and confidence levels

Not every potential conflict is a genuine contradiction. Some are intentional carve-outs. Some are stylistic differences with no legal distinction. The system assigns confidence scores to each finding, helping attorneys prioritize review: a 95% confidence conflict between an unlimited indemnity and a hard liability cap demands immediate attention; a 40% confidence flag on a potential tension between notice periods may be a lower priority.
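The triage this enables can be sketched as a simple sort and threshold step. The cut-off values (0.90 and 0.60), the tier names, and the third sample finding are assumptions chosen for illustration, and any real deployment would tune them with the reviewing attorneys.

```python
# Hypothetical findings with model-assigned confidence scores.
findings = [
    {"summary": "Unlimited indemnity vs. hard liability cap", "confidence": 0.95},
    {"summary": "Possible tension between notice periods", "confidence": 0.40},
    {"summary": "Governing law vs. forum clause mismatch", "confidence": 0.72},
]

def triage(findings, urgent_at=0.90, review_at=0.60):
    """Order findings by confidence and assign each a review tier."""
    queue = []
    ordered = sorted(findings, key=lambda f: f["confidence"], reverse=True)
    for finding in ordered:
        if finding["confidence"] >= urgent_at:
            tier = "urgent"
        elif finding["confidence"] >= review_at:
            tier = "review"
        else:
            tier = "low priority"
        queue.append((tier, finding["summary"]))
    return queue

queue = triage(findings)
for tier, summary in queue:
    print(f"{tier}: {summary}")
```

Nothing is discarded: low-confidence flags stay in the queue, just further down, so an attorney can still decide they matter.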

Source-cited output

Every finding includes the specific clauses that conflict, their locations within the source documents, and an explanation of the detected contradiction. This isn't a summary or a paraphrase — it's a direct reference to the provisions in question, enabling attorneys to verify the finding against the original text in seconds rather than hours.
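A finding of this shape might be represented as a small record that carries its citations with it. The `Citation` and `Finding` types and their field names below are hypothetical, sketched only to show what "source-cited" means structurally.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Citation:
    document: str
    section: str
    quote: str  # verbatim text from the source, not a paraphrase

@dataclass(frozen=True)
class Finding:
    explanation: str
    confidence: float
    citations: Tuple[Citation, ...]

    def render(self):
        """Format the finding with one line per cited clause."""
        lines = [f"[{self.confidence:.0%}] {self.explanation}"]
        for c in self.citations:
            lines.append(f'  - {c.document}, Section {c.section}: "{c.quote}"')
        return "\n".join(lines)

finding = Finding(
    explanation="Uncapped indemnity conflicts with aggregate liability cap.",
    confidence=0.95,
    citations=(
        Citation("Technology License Agreement", "12.1",
                 "shall indemnify, defend, and hold harmless"),
        Citation("Technology License Agreement", "12.2",
                 "shall not exceed the total fees paid"),
    ),
)

report = finding.render()
print(report)
```

Because each citation stores the quoted text alongside its location, a reviewer can check the flag against the original document without rerunning the analysis.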

Where Mojar fits

Mojar is an enterprise RAG platform built for exactly this problem, transforming static document repositories into intelligent, queryable knowledge systems with autonomous contradiction detection.

Our customers in corporate legal departments and mid-size law firms consistently report that the highest-value use case in the first 90 days is portfolio-level contradiction detection: running a systematic audit across existing vendor agreements that have never been reviewed for internal consistency. When we deploy Mojar with legal teams that have portfolios of 500 to 5,000+ contracts, the first scan routinely surfaces 15 to 40 previously undetected conflicts, ranging from minor cross-reference errors to material liability exposure.

Our approach has been to treat this first scan as the pilot milestone: if it finds something real, the team has a clear answer to the ROI question. We've seen that finding one material conflict in the first week, such as a genuine indemnity vs. liability cap conflict the legal team didn't know existed, is more persuasive than any product demonstration. We recommend starting with your highest-risk contract category: typically vendor MSAs with SOW amendments, where portfolio-level conflicts accumulate fastest. Our platform is built to surface these without requiring manual document-by-document review.

What Mojar does: Automated contradiction detection across clauses within a single document and across a full contract portfolio. Flags conflicting provisions with source citations pointing to exact sections and documents. Detects superseded regulatory references and outdated legal authority. Integrates with existing document workflows to surface issues proactively, not just in response to queries.

What Mojar doesn't do (yet): Native iManage and NetDocuments connectors are on the roadmap but not yet in production. Direct CLM integration with platforms like Ironclad and Agiloft is in development. These are coming, but they're not here today, and we'd rather tell you that now than have you discover it during implementation.

When Mojar is the right fit: Firms and legal departments with 1,000+ historical contracts that have never been systematically audited for internal consistency. Organizations assembling contracts from clause libraries and templates maintained by multiple practice groups. Any team that has experienced the consequences of a contradiction surfacing in litigation rather than in review.

When it's not: If you need full eDiscovery capabilities, litigation hold management, or TAR workflows, look at Relativity. Mojar is a knowledge integrity and contradiction detection platform, not a litigation support suite.

Next steps

Contract contradictions are not a matter of if — they're a matter of when they'll be found, and by whom. The question is whether your team identifies them during review, or opposing counsel identifies them during discovery.

Go deeper on the foundation: RAG for Law Firms: The Complete Guide explains the technology architecture behind intelligent document analysis and what to evaluate in a platform.

Understand the root cause: "Is This the Latest Template?" Why Law Firm Knowledge Bases Fail examines why contradictions accumulate in the first place, and why traditional DMS can't prevent them.

See it work with your own contracts. Request a demo — we'll run contradiction detection against your actual documents and show you what's hiding in your portfolio. Or start your free trial to explore it yourself.

Bob Mojar is the founder of Mojar. He leads product development and works directly with the legal teams that deploy the platform.

Frequently Asked Questions

Contracts often contain contradictions between indemnity, limitation of liability, and termination clauses — especially when multiple drafters contribute language or when templates are assembled from clause libraries. The classic example: Section 12.1 provides unlimited indemnification for IP infringement claims, while Section 12.2 caps aggregate liability at total fees paid under the agreement. These two provisions directly conflict, creating ambiguity that courts must resolve and opposing counsel will exploit.

RAG-based AI systems index clause relationships, map dependencies between provision types, and flag when two or more clauses create conflicting obligations. Unlike keyword search, semantic analysis understands meaning — recognizing that an 'unlimited indemnification' provision and a 'liability cap' provision are in tension even though they share no common keywords. The system surfaces conflicts with source citations pointing to exact sections, enabling attorneys to review and resolve them efficiently.

More common than most firms acknowledge. Research from the Legal AI Benchmarking Phase 2 study found that human lawyers produce reliable first drafts only 56.7% of the time. Long contracts assembled by multiple drafters across deal teams are especially prone to conflicting provisions, incorrect cross-references, and unfulfillable obligations — problems that compound when amendments and SOWs are layered on top of master agreements.

The cost can reach nine figures. In the Proskauer Rose case, a single copy-pasted clause contributed to $636 million in client losses and a malpractice suit (ABA Journal). In the Terraform case against Orrick and Cleary Gottlieb, a one-word error — 'buyers' instead of 'buyer' — triggered over $300 million in liability (ContractsProf Blog). Contradictions typically surface during litigation or dispute resolution, precisely when they are most expensive and least convenient to resolve.

No. AI retrieves, flags, and surfaces contradictions with source citations. Lawyers review, verify, exercise judgment, and decide how to resolve conflicts. The value of AI in contract review is catching issues that humans miss due to volume and complexity — not replacing the legal analysis, strategic judgment, and client counseling that attorneys provide.

Traditional review relies on human attention across hundreds of pages, constrained by time pressure and cognitive fatigue. AI scans entire portfolios semantically — detecting contradictions across documents, not just within them. A single query can surface every liability conflict across 10,000 contracts in a portfolio, something no human review team could accomplish in a commercially reasonable timeframe.

AI contradiction detection identifies conflicting liability provisions (unlimited indemnity vs. liability caps), incompatible termination triggers, mismatched governing law clauses, cross-reference errors where section numbering has shifted, superseded regulatory references citing outdated statutes or rules, and contradictions between parent agreements, amendments, and SOWs — the full spectrum of consistency failures that arise in complex contract portfolios.