Catch conflicting sales messaging before prospects do

Sales decks, marketing pages, and product docs often contradict each other. AI contradiction detection catches these conflicts before prospects notice.


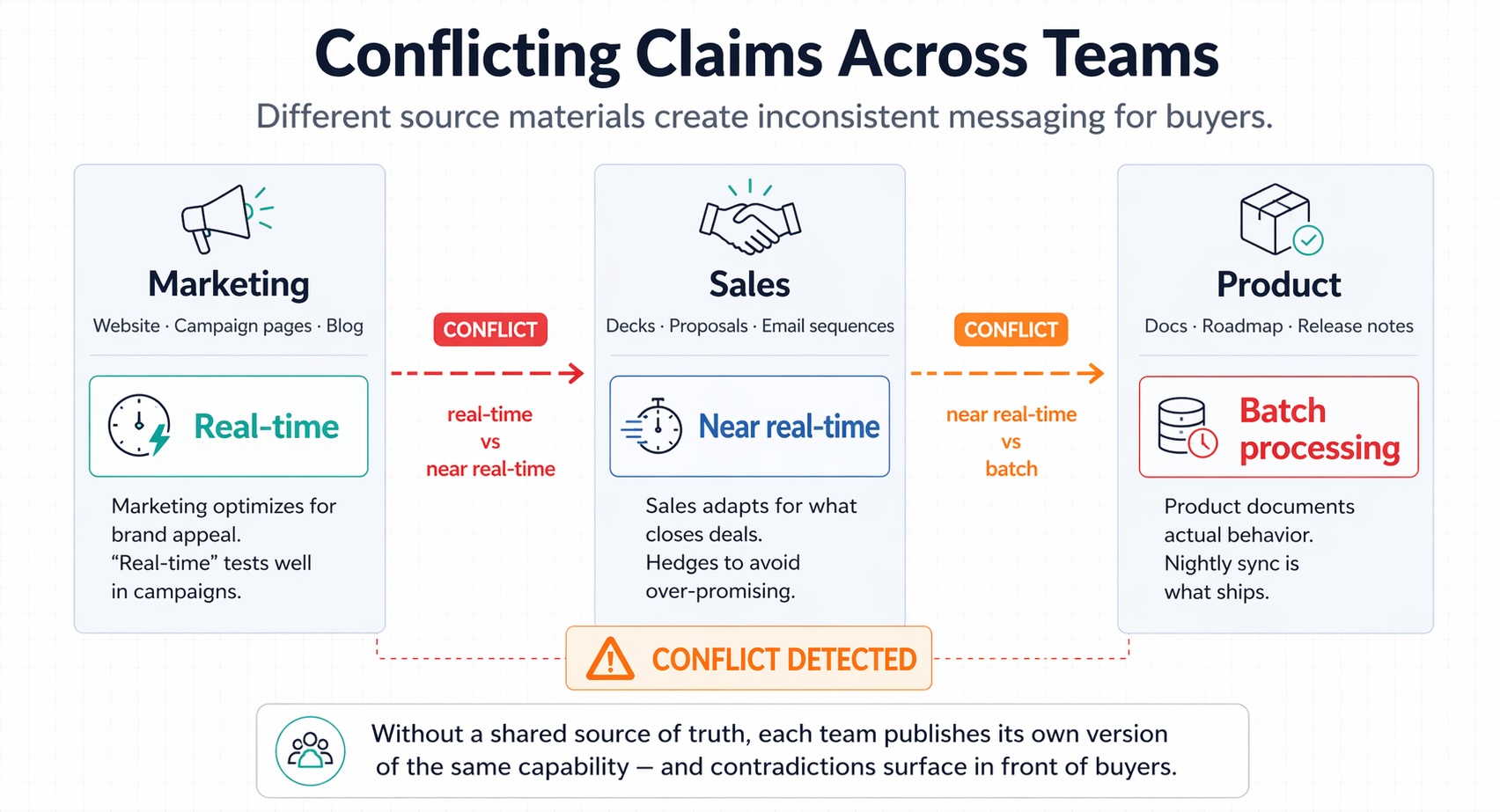

Marketing claims Feature X is real-time. Product documentation says batch processing. The sales deck says "near real-time." Your website says something else entirely. Nobody notices until a technical buyer pulls up both sources during a discovery call.

We've seen this exact scenario at three customer organizations in the past quarter alone. The contradiction existed for months before anyone caught it. In two of those cases, a prospect found it first.

Every sales organization has inconsistent messaging hiding across its content library. The only question is who finds the conflicts first: your team or your buyers.

What conflicting sales messaging actually costs

Quantifying the damage from contradictory content is difficult because most organizations don't track it. Deals stall and nobody logs "prospect found conflicting information" as the root cause. But the patterns we see across customer deployments are consistent.

Gartner's 2024 survey found that 90% of marketing and sales executives report conflicting functional priorities. That misalignment doesn't just affect strategy; it shows up directly in the content these teams produce. Different teams communicate different value propositions, pricing qualifiers drift, and feature availability gets described differently depending on who wrote the document and when.

When marketing says one thing on the website and sales says something different in a deck, technical buyers notice. Salesforce's State of Sales research consistently shows that B2B buyers do extensive independent research before engaging reps. They compare your public content against what your team sends them.

The cost shows up in three places:

- Credibility damage: When a prospect catches your team contradicting itself, trust erodes immediately. One of our customers told us their deal went from "verbal yes" to "let's revisit next quarter" after the buyer found pricing discrepancies between the website and a proposal.

- Extended sales cycles: Resolving contradictions mid-deal adds weeks. The prospect needs reassurance, internal teams scramble to align, and legal may need to review commitments already made.

- Legal exposure: Pricing contradictions between proposals and published materials create real contractual risk, especially in regulated industries where documented claims carry weight.

Four types of contradictions in sales content

After deploying our contradiction detection system across dozens of sales organizations, we've grouped the conflicts that cause most of the problems into four categories. Understanding them helps you prioritize what to fix and recognize what your detection system needs to catch.

Marketing vs. sales: positioning drift

Marketing develops positioning based on strategy and market research. Sales adapts messaging based on what closes deals. Over time, these diverge.

A common example: marketing's website emphasizes "enterprise-grade security with SOC 2 compliance" while sales decks lead with "fastest implementation in the industry." Neither claim is wrong, but prospects who see both get confused about what your product actually prioritizes. This happens because marketing optimizes for brand consistency while sales optimizes for this quarter's pipeline.

Sales vs. product: feature promises vs. reality

This type carries the highest risk. Sales teams make claims about capabilities that product hasn't shipped, sometimes based on roadmap items presented as current features.

We've seen cases where the marketing site says "real-time data processing," product documentation describes a nightly batch sync, and the sales deck splits the difference with "near real-time with sub-second latency." Product changes faster than documentation, and feature nuances get simplified into claims that don't survive technical due diligence.

Current vs. outdated: version conflicts

Your content library contains multiple versions of the same information, some current, some months or years old. Reps can't distinguish between them, so they use whatever comes up first.

In our experience, this is the most common category. We've seen customers with 40+ documents containing pricing information, fewer than half of which were current. The problem compounds because nobody deletes old content and version naming is inconsistent. For a deeper look at this problem, see our article on why nobody knows which version of the deck is correct.

Internal vs. external: website vs. sales materials

Your public website says one thing. Your sales materials say another. Prospects who do their homework notice the gap.

Website updates go through different approval processes than sales materials. Marketing owns the website; sales enablement owns decks. Each team updates their content without cross-referencing the other. The result is a developer prospect asking on a call: "Your feature page says GraphQL support. Your technical deck says REST-only. Which is it?"

Why nobody catches these conflicts manually

If contradictions are this damaging, why don't organizations just find and fix them? We asked ourselves this question before building our detection system. The answer comes down to structural problems that manual processes can't solve.

Volume: Enterprise content libraries contain thousands of documents across SharePoint, Google Drive, Confluence, Notion, and various other platforms. Nobody reads them all. Content owners review their own materials; they don't cross-reference against every other team's output. As Stensul's research on marketing operations found, marketing teams already struggle with content creation velocity. Auditing every sales deck for consistency on top of that workload simply doesn't happen.

Ownership gaps: Who's responsible for catching contradictions? Product marketing? Sales enablement? RevOps? In most organizations we've worked with, the answer is nobody specifically. Contradiction detection falls between roles, and everyone assumes someone else is checking.

Gradual emergence: Contradictions rarely appear overnight. A product change gets documented in release notes but not the sales deck. A pricing update hits the website but not the proposal templates. By the time anyone notices, inconsistent versions have been circulating for months.

Quarterly content audits help but don't solve the problem. They check a sample of documents and miss cross-team conflicts. In our experience, manual audits catch roughly 30% of contradictions, almost always the obvious surface-level ones. Subtle conflicts, like a pricing qualifier described differently across three documents, slip through consistently. This is the same dynamic that makes sales wikis unreliable: information decays faster than anyone can maintain it.

Our approach at Mojar is to treat this as a continuous monitoring problem rather than a periodic audit. The difference in outcomes is significant:

| Metric | Manual quarterly audit | AI continuous scanning |
|---|---|---|
| Documents checked per cycle | 50-100 (sample) | Entire content library |
| Time from content change to conflict detection | Weeks to months | Hours to days |
| Contradiction catch rate | ~30% | ~80-85% |
| Staff hours per quarter | 40-80 hours | 2-4 hours (reviewing flagged items) |
| Cross-team conflicts detected | Rarely | Systematically |

How AI contradiction detection works in practice

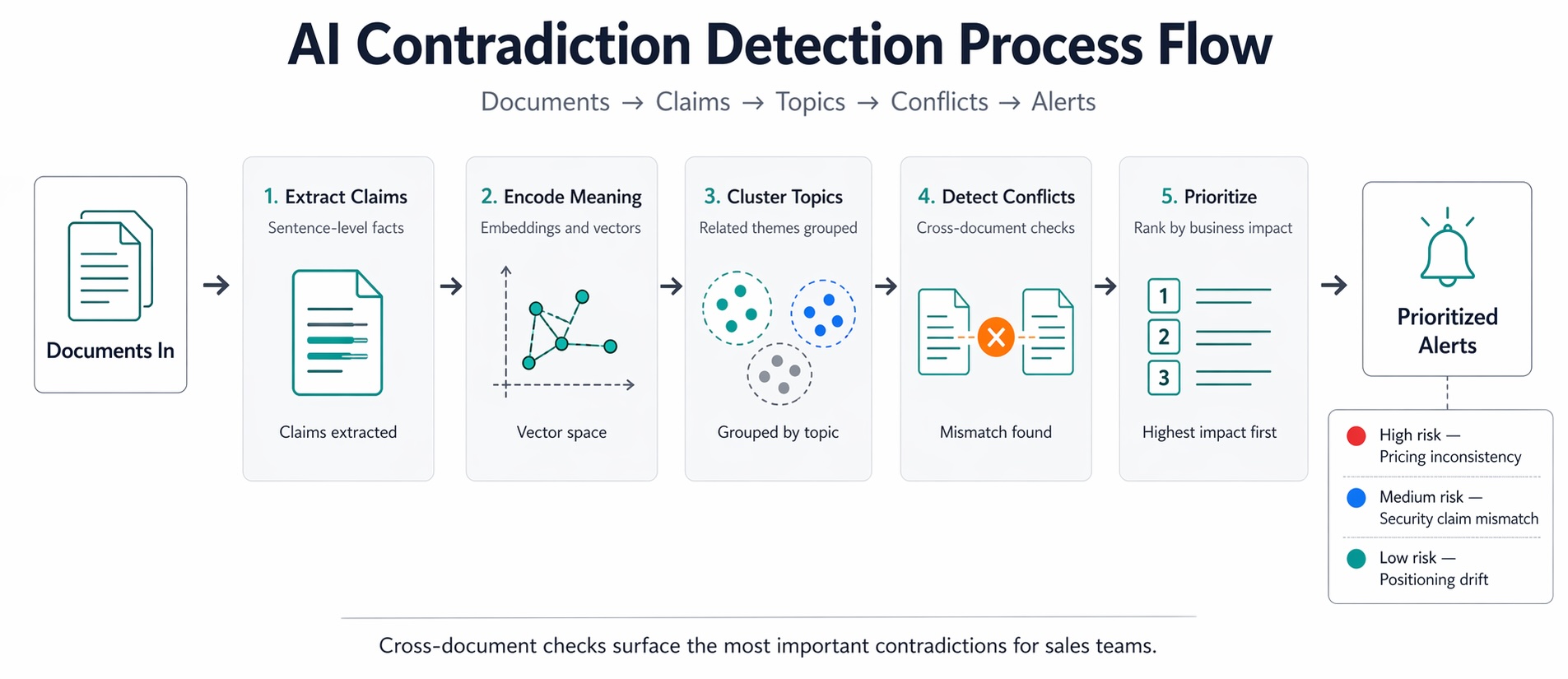

AI-powered contradiction detection combines several NLP capabilities into a system that can do what humans can't: compare every document against every other document, continuously. When we built this at Mojar, our engineering team broke the pipeline into five stages.

Step 1: Claim extraction

The system identifies claims within documents: statements that assert something about your product, pricing, capabilities, or positioning. "Contact us for more information" isn't a claim. "Our platform processes data in real-time" is.

Modern language models distinguish between factual assertions, opinions, and filler text. The extraction process builds a structured database of claims, each tagged with its source document, modification date, and topic area.
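To make the idea of a structured claim record concrete, here is a minimal sketch. It is not Mojar's implementation: the `Claim` fields mirror the tags described above, while the cue-word lists, the `extract_claims` helper, and the `website/features.html` path are hypothetical stand-ins. A production system would use a language model rather than regular expressions to separate assertions from filler.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Claim:
    text: str
    source_document: str
    last_modified: date
    topic: str


# Hypothetical cue lists, for illustration only.
ASSERTION_CUES = re.compile(
    r"\b(processes|supports|includes|guarantees|starting at)\b|\d", re.IGNORECASE
)
NON_CLAIM_CUES = re.compile(r"^(contact us|learn more|book a demo)", re.IGNORECASE)


def extract_claims(text, source_document, last_modified, topic="unclassified"):
    """Split text into sentences and keep those that look like assertions."""
    claims = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if not sentence or NON_CLAIM_CUES.match(sentence):
            continue  # filler such as calls to action is skipped
        if ASSERTION_CUES.search(sentence):
            claims.append(Claim(sentence, source_document, last_modified, topic))
    return claims


page = "Contact us for more information. Our platform processes data in real-time."
claims = extract_claims(page, "website/features.html", date(2026, 3, 15))
for claim in claims:
    print(claim.source_document, "->", claim.text)
```

Only the second sentence is kept, because the first matches a call-to-action cue. Keeping the source document and modification date on every record is what later lets a conflict alert point back to the exact sentence and its age.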

Step 2: Semantic encoding

Each claim gets converted into a vector embedding, a numerical representation of its meaning. This lets the system compare claims based on what they say, not just the words they use.
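A small sketch can show what comparing by meaning looks like numerically. The vectors below are hand-made toy values, not output from any real embedding model, and the three dimensions are invented for illustration. Only the cosine similarity calculation itself is standard.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy vectors (assumption), NOT produced by an embedding model.
# Illustrative dimensions: [immediacy, scheduled/batch, data handling]
toy_embeddings = {
    "Real-time processing": [0.9, 0.1, 0.6],
    "Immediate data handling": [0.8, 0.1, 0.7],
    "Batch processing": [0.1, 0.9, 0.6],
    "Nightly updates": [0.1, 0.8, 0.3],
}


def similarity(phrase_a, phrase_b):
    return cosine_similarity(toy_embeddings[phrase_a], toy_embeddings[phrase_b])


for a, b in [
    ("Real-time processing", "Immediate data handling"),
    ("Batch processing", "Nightly updates"),
    ("Real-time processing", "Batch processing"),
]:
    print(f"{a!r} vs {b!r}: {similarity(a, b):.2f}")
```

With these toy values, the two phrasings of immediacy score close to each other, as do the two batch-style phrasings, while the real-time and batch pair scores much lower. A real embedding model would produce vectors with hundreds of dimensions, but the comparison step works the same way.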

"Real-time processing" and "immediate data handling" use different words but carry similar meaning. "Batch processing" and "nightly updates" are semantically related but different from both. The embedding space captures these relationships in ways keyword matching cannot.

Step 3: Topic clustering

Claims get grouped by topic: pricing, features, security, competitive positioning. This narrowing step ensures the system only compares claims that could plausibly conflict, and it keeps the number of comparisons manageable. A pricing claim doesn't need to be checked against claims about implementation timelines.
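The effect of grouping on the number of comparisons can be sketched as follows. Keyword buckets are used here purely as a simple stand-in for clustering; the `TOPIC_KEYWORDS` table and helper names are hypothetical, and a real system would more likely cluster on the embeddings from the previous step.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical keyword buckets standing in for a real clustering step.
TOPIC_KEYWORDS = {
    "pricing": ("price", "pricing", "$", "/year", "seat"),
    "features": ("real-time", "batch", "graphql", "sync"),
    "security": ("soc 2", "encryption", "compliance"),
}


def assign_topic(claim_text):
    """Return the first topic whose keywords appear in the claim."""
    lowered = claim_text.lower()
    for topic, keywords in TOPIC_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return topic
    return "other"


def cluster_by_topic(claims):
    clusters = defaultdict(list)
    for claim in claims:
        clusters[assign_topic(claim)].append(claim)
    return dict(clusters)


def candidate_pairs(clusters):
    """Yield only pairs of claims that share a topic."""
    for topic, members in clusters.items():
        for first, second in combinations(members, 2):
            yield topic, first, second


claims = [
    "Enterprise plan starting at $50,000/year",
    "Enterprise tier: $45,000/year (minimum 100 seats)",
    "Our platform processes data in real-time",
    "Data is synced in a nightly batch",
    "Enterprise-grade security with SOC 2 compliance",
]
clusters = cluster_by_topic(claims)
pairs = list(candidate_pairs(clusters))
all_pairs = list(combinations(claims, 2))
print(f"{len(pairs)} within-topic pairs instead of {len(all_pairs)} total pairs")
```

Even with five claims, only the two pricing statements and the two data-processing statements get compared. Across thousands of documents, that reduction is what makes comparing everything against everything feasible.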

Step 4: Conflict identification

Within each topic cluster, the system identifies claims that contradict each other. This requires genuine semantic understanding: "real-time" and "batch" are contradictory when describing the same feature, but not when describing different features.

The output is pairs or groups of claims that conflict, with links to their source documents and context about when each was last modified. A typical detection alert looks like this:

    {
      "conflict_id": "C-2847",
      "severity": "high",
      "type": "pricing_discrepancy",
      "claims": [
        {
          "document": "website/pricing.html",
          "text": "Enterprise plan starting at $50,000/year",
          "last_modified": "2026-03-15"
        },
        {
          "document": "sales/proposal-template.docx",
          "text": "Enterprise tier: $45,000/year (minimum 100 seats)",
          "last_modified": "2025-11-02"
        }
      ],
      "recommendation": "Verify current pricing with Finance. Website updated more recently."
    }
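For the narrow case of pricing claims, the comparison behind an alert like this can be approximated with a short sketch. This is an assumption-laden illustration, not how the detection system works internally: the regular expression only handles simple dollar amounts, it ignores qualifiers such as seat minimums, and the `newer_document` and `price_gap` fields are hypothetical additions that do not appear in the alert format above.

```python
import re
from datetime import date

PRICE_PATTERN = re.compile(r"\$(\d[\d,]*)")


def extract_price(text):
    """Return the first dollar amount in the text as an int, or None."""
    match = PRICE_PATTERN.search(text)
    return int(match.group(1).replace(",", "")) if match else None


def find_pricing_conflict(claim_a, claim_b):
    """Build an alert-like dict when two pricing claims state different amounts."""
    price_a = extract_price(claim_a["text"])
    price_b = extract_price(claim_b["text"])
    if price_a is None or price_b is None or price_a == price_b:
        return None
    newer = max(
        claim_a, claim_b, key=lambda c: date.fromisoformat(c["last_modified"])
    )
    return {
        "severity": "high",
        "type": "pricing_discrepancy",
        "claims": [claim_a, claim_b],
        "newer_document": newer["document"],  # hypothetical field
        "price_gap": abs(price_a - price_b),  # hypothetical field
    }


website_claim = {
    "document": "website/pricing.html",
    "text": "Enterprise plan starting at $50,000/year",
    "last_modified": "2026-03-15",
}
proposal_claim = {
    "document": "sales/proposal-template.docx",
    "text": "Enterprise tier: $45,000/year (minimum 100 seats)",
    "last_modified": "2025-11-02",
}
alert = find_pricing_conflict(website_claim, proposal_claim)
print(alert["newer_document"], alert["price_gap"])
```

The sketch flags the pair and identifies the website as the more recently modified source, which matches the recommendation in the sample alert. Deciding which price is actually correct still requires a person.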

Step 5: Prioritization

Not all contradictions matter equally. A conflict between two internal training documents is less urgent than a conflict between your website and your sales deck. Our system prioritizes based on three factors:

- Exposure risk: External-facing contradictions rank higher than internal ones

- Recency: Conflicts involving recently accessed or modified documents rank higher

- Severity: Pricing and legal claims rank higher than general positioning differences

In our experience, surfacing 10-15 high-priority conflicts per scan is more useful than flagging hundreds of minor inconsistencies. The goal is a prioritized queue for human review, not an exhaustive dump.
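One way to picture how the three factors combine into a review queue is a weighted score. The weights, the severity table, the one-year recency window, and the `C-0001` example are all invented for this sketch; the actual scoring in our system is not described here and may differ.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Conflict:
    conflict_id: str
    external_facing: bool
    last_modified: date
    claim_type: str


# Hypothetical weights: pricing and legal claims rank highest.
SEVERITY_WEIGHTS = {"pricing": 1.0, "legal": 1.0, "feature": 0.6, "positioning": 0.3}


def priority_score(conflict, today):
    """Combine exposure risk, recency, and severity into a 0..1 score."""
    exposure = 1.0 if conflict.external_facing else 0.3
    age_days = (today - conflict.last_modified).days
    recency = min(1.0, max(0.0, 1.0 - age_days / 365))  # fades over a year
    severity = SEVERITY_WEIGHTS.get(conflict.claim_type, 0.3)
    return round(0.4 * exposure + 0.2 * recency + 0.4 * severity, 3)


def top_conflicts(conflicts, today, limit=15):
    """Return the highest-scoring conflicts, capped at `limit` for human review."""
    ranked = sorted(conflicts, key=lambda c: priority_score(c, today), reverse=True)
    return ranked[:limit]


conflicts = [
    Conflict("C-0001", False, date(2025, 4, 1), "positioning"),
    Conflict("C-2847", True, date(2026, 3, 15), "pricing"),
]
for conflict in top_conflicts(conflicts, today=date(2026, 4, 1)):
    print(conflict.conflict_id, priority_score(conflict, date(2026, 4, 1)))
```

The external pricing conflict ranks ahead of the older internal positioning difference. Capping the queue with `limit` reflects the point above: a short, ordered list gets reviewed, while an exhaustive dump does not.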

What we built at Mojar, and what it can't do

Iulian Maxim, our co-founder and the author of this post, led the team that built Mojar's contradiction detection because our customers kept describing the same problem: reps using outdated competitive intel, prospects finding pricing discrepancies, legal flagging proposals that contradicted the website. The pattern was consistent, and manual processes weren't addressing it.

What the system does well

Continuous scanning: Our maintenance agent analyzes documents on an ongoing basis, not just when someone remembers to run an audit. When new content enters the system or existing content changes, it gets checked against everything else.

Semantic comparison: The system compares meaning, not just keywords. A document claiming "99.99% uptime" contradicts one claiming "99.9% uptime" even though the words are nearly identical. In our testing, keyword-only approaches missed roughly 40% of meaningful contradictions.

Prioritized alerts: Contradictions surface based on exposure risk and severity. Our customers consistently tell us that this prioritization is the difference between a useful tool and a noisy one.

Source attribution: Every flagged contradiction shows the specific sentences that conflict, with direct links and surrounding context. We learned early that showing exact claims matters more than just naming documents.

What it doesn't do

Auto-fix content: We flag contradictions; we don't rewrite documents. Deciding which version is correct requires human judgment about product reality and strategic priorities. We tried auto-resolution in an early prototype and quickly learned that the "right" answer often depends on business context the system can't infer.

Guarantee completeness: No system catches every contradiction. Highly technical claims, context-dependent statements, and nuanced positioning differences sometimes slip through. We estimate our system detects 80-85% of meaningful contradictions. That's a significant improvement over the ~30% that manual audits typically find, but it isn't 100%.

Replace content governance: Contradiction detection is one layer of content quality. You still need ownership models, review processes, and maintenance workflows. Detection tells you where the problems are; governance prevents them from recurring.

When this fits and when it doesn't

We recommend contradiction detection when you have hundreds or thousands of documents across multiple teams, when inconsistencies have already caused deal problems or customer confusion, and when manual audits can't keep pace with content volume.

It's not the right fit if your content library is small enough for one person to review manually, or if your primary challenge is sales engagement tracking rather than content accuracy. For engagement analytics, tools like Highspot or Seismic are better solutions.

Evaluating contradiction detection tools

If you're comparing solutions for detecting conflicting sales messaging, we recommend focusing on four capabilities that separate production-ready systems from demos.

Semantic understanding, not just keyword matching: Basic systems look for identical phrases that conflict. Production systems understand that "real-time" and "immediate" carry similar meaning, while "batch" and "scheduled" are related but different. Ask vendors how they handle synonyms and paraphrases across different document types.

Cross-document analysis at scale: Can the system compare your entire content library, or only documents you manually select? The value is in catching contradictions you didn't know to look for. If you have to specify which documents to compare, you'll miss the conflicts hiding in unexpected places.

Integration with your content systems: Where does your content live? SharePoint? Google Drive? Confluence? Notion? The detection system needs to connect to your actual content sources and re-scan as content changes, not require manual uploads every time you want to run an audit.

Human-in-the-loop resolution workflow: AI detects contradictions; humans resolve them. In my experience building these systems, the detection is the straightforward part; the resolution workflow is what determines whether conflicts actually get fixed. Look for features that let you assign contradictions to owners, track resolution status, and verify that updates resolve the original conflict.
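When evaluating a resolution workflow, it can help to picture the states a flagged conflict moves through. The sketch below is a hypothetical model, not any vendor's data structure: the status names, the allowed transitions, and the `ConflictTicket` class are assumptions chosen to illustrate assignment, status tracking, and verification by re-scan.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    OPEN = "open"
    ASSIGNED = "assigned"
    FIXED = "fixed"
    VERIFIED = "verified"


# Hypothetical transition rules: a fix must be verified before the ticket closes.
ALLOWED = {
    Status.OPEN: {Status.ASSIGNED},
    Status.ASSIGNED: {Status.FIXED},
    Status.FIXED: {Status.VERIFIED, Status.ASSIGNED},
    Status.VERIFIED: set(),
}


@dataclass
class ConflictTicket:
    conflict_id: str
    owner: Optional[str] = None
    status: Status = Status.OPEN
    history: list = field(default_factory=list)

    def _move(self, new_status):
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"cannot go from {self.status.value} to {new_status.value}")
        self.history.append((self.status, new_status))
        self.status = new_status

    def assign(self, owner):
        self._move(Status.ASSIGNED)
        self.owner = owner

    def mark_fixed(self):
        self._move(Status.FIXED)

    def verify(self, rescan_still_conflicts):
        # A re-scan that still finds the conflict sends the ticket back to its owner.
        self._move(Status.ASSIGNED if rescan_still_conflicts else Status.VERIFIED)


ticket = ConflictTicket("C-2847")
ticket.assign("pricing-owner")
ticket.mark_fixed()
ticket.verify(rescan_still_conflicts=False)
print(ticket.status.value, len(ticket.history))
```

The design point is the last step: a ticket only reaches `VERIFIED` when a re-scan confirms the conflict is gone, so marking something as fixed is not enough to close it.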

For a broader view of how RAG technology powers these capabilities across marketing and sales operations, see our complete guide to RAG for marketing and sales.

Ready to see what contradictions exist in your content today? Book a demo with your actual documents. We'll run our detection system against your real content library and show you what it finds.

Frequently Asked Questions

Contradiction detection is an AI capability that automatically identifies conflicting claims across sales decks, marketing materials, product documentation, and websites. It surfaces mismatches, such as marketing claiming a feature is real-time while product docs describe batch processing, before prospects discover the inconsistency.

AI contradiction detection extracts claims from documents, encodes them as semantic embeddings, then compares meaning across sources. When the system finds statements about the same topic that conflict (different pricing, feature descriptions, or positioning), it flags the mismatch with source attribution for human review.

Manual detection fails at scale because no single person reads every document. Contradictions hide across team boundaries: marketing doesn't read product docs, sales doesn't check the website. The problem compounds as content volume grows, making systematic detection humanly impossible.

The most common contradictions occur between marketing and sales (positioning drift), sales and product (feature promises vs. reality), current and outdated content (version conflicts), and internal versus external messaging (website vs. sales deck discrepancies).

AI can detect and flag contradictions, but humans must resolve them. The system surfaces conflicts with full context and source links, but deciding which version is correct requires human judgment about product reality, strategic positioning, and business priorities.

Contradictions damage deals through lost credibility, extended sales cycles, and legal exposure. When a prospect catches your team saying different things, trust erodes immediately. Sales leaders consistently cite inconsistent messaging as a factor in lost deals and stalled pipelines.