Sales reps spend 20-30% of their time on RFPs: the real cost

The hidden math behind RFP inefficiency: how document hunting drains revenue teams, and how RAG-powered systems cut response time by 60-80%.

Sales teams spend 20-30% of their time on RFP responses (Stack AI, Salesforce State of Sales). Not 20-30% of their time writing proposals, but 20-30% of their time hunting through documents, finding current pricing, locating past responses, and chasing approvals.

If your sales team has 10 reps, 2-3 of them are effectively full-time RFP writers. Except they're not writing. They're searching.

It's a math problem at its core. And when you actually do the math, the number that comes back makes CFOs uncomfortable.

We've seen this first-hand. When we tested Mojar's retrieval system with enterprise sales teams, the pattern was identical across every organization: reps knew the answers existed somewhere, but "somewhere" meant five systems, three people, and 45 minutes of searching. In practice, the bottleneck was never the rep's skill; it was the architecture underneath them. Our customers consistently report that 60-80% of RFP time is pure search overhead, not actual writing or thinking.

The RFP time audit: where the hours actually go

Ask a sales leader how long RFPs take, and they'll say "too long." Ask them where the time goes, and you'll get vague answers about "the process."

When you break the process down by task, a clearer picture emerges:

Finding previous responses (45-90 minutes per RFP)

Every RFP includes questions you've answered before. The problem: those answers live in past proposals scattered across email attachments, shared drives, and former employees' folders.

A rep searching for "how we answered the security compliance question last time" might check:

- The proposal folder in Google Drive (three subfolders, none labeled clearly)

- The RFP archive in SharePoint (if they have access)

- Slack, where someone definitely shared a good response once

- Their own email, hoping they CC'd themselves

- A colleague who "handled a similar deal"

Time spent finding the answer: 45 minutes. Time spent using the answer: 5 minutes.

With RAG: The rep asks "What's our standard response to SOC 2 compliance questions?" The system searches semantically across all past proposals, security documentation, and approved language, returning the best answer with source citations. Time: 2 minutes.

Locating current product specs (30-60 minutes per RFP)

Technical RFPs require accurate product information. But product specs change, and the documentation doesn't always keep up.

The rep needs to know: What's our current API rate limit? Do we support SSO with Okta? What's the SLA for enterprise customers?

They check the product documentation (might be outdated), the sales deck (might be simplified), the technical FAQ (might not exist), and finally Slack the solutions engineer who actually knows.

Time spent getting accurate specs: 45 minutes. Confidence that the specs are current: uncertain.

With RAG: The rep asks "What's our current API rate limit for enterprise customers?" The system retrieves from product docs, release notes, and technical specs, and flags if multiple sources give different answers. Time: 3 minutes. Confidence: verified with source citation.

Tracking down approved pricing (30-45 minutes per RFP)

Pricing questions seem simple until you realize:

- List pricing vs. negotiated pricing vs. promotional pricing

- Different pricing for different tiers, regions, and contract lengths

- Pricing that changed last quarter but the old sheet still circulates

The rep needs the current pricing matrix with the correct discount authority. They find three versions. Two contradict each other. They escalate to RevOps to confirm, adding a day to the timeline.

Time spent finding pricing: 30 minutes. Time waiting for confirmation: 24 hours.

With RAG: The system detects that three pricing documents exist with conflicting information and flags the contradiction before the rep sends anything. The current pricing is surfaced with a "last reviewed" date. Time: 5 minutes. Contradictions: caught before they reach prospects.
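
To make the contradiction check concrete, here is a minimal Python sketch. It assumes prices have already been extracted from each document into simple records. The `PriceEntry` type, file names, SKUs, and prices are all invented for illustration, and this is not a description of how Mojar implements the feature.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PriceEntry:
    source: str          # document the price was extracted from (hypothetical)
    sku: str             # product or plan identifier (hypothetical)
    unit_price: float
    last_reviewed: date


def find_conflicts(entries):
    """Group entries by SKU and report SKUs whose sources disagree on price."""
    by_sku = defaultdict(list)
    for entry in entries:
        by_sku[entry.sku].append(entry)

    conflicts = {}
    for sku, group in by_sku.items():
        if len({e.unit_price for e in group}) > 1:
            # Most recently reviewed first, so the likeliest-current price leads.
            conflicts[sku] = sorted(group, key=lambda e: e.last_reviewed, reverse=True)
    return conflicts


# Made-up sample data: three documents quote the same SKU, two prices disagree.
entries = [
    PriceEntry("pricing_2025_q3.xlsx", "enterprise-seat", 120.0, date(2025, 9, 1)),
    PriceEntry("pricing_2026_q1.xlsx", "enterprise-seat", 135.0, date(2026, 1, 15)),
    PriceEntry("sales_deck.pptx", "enterprise-seat", 120.0, date(2025, 6, 10)),
    PriceEntry("pricing_2026_q1.xlsx", "starter-seat", 40.0, date(2026, 1, 15)),
]

conflicts = find_conflicts(entries)
for sku, group in conflicts.items():
    print(sku, [(e.source, e.unit_price, e.last_reviewed.isoformat()) for e in group])
```

The sketch only flags the disagreement and orders sources by review date. Deciding which price is actually approved still belongs to a human or to RevOps.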

Finding the right case study (30-60 minutes per RFP)

"Include a relevant customer reference" sounds easy. Except:

- The prospect is in healthcare, and you need a healthcare case study

- The healthcare case study from 2024 references a product feature that's been renamed

- The newer case study is for a different use case

- Marketing has a case study library, but it's in a system the rep doesn't use

The rep asks in Slack: "Does anyone have a healthcare case study?" Three people respond with three different links. None are quite right.

Time spent finding a case study: 45 minutes. Time spent customizing it: 15 minutes.

With RAG: "Healthcare case study for enterprise, 1000+ employees, focused on compliance" returns ranked results from your actual case study library, filtered by industry, company size, and use case. Time: 5 minutes.
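
The filtering half of that query can be sketched in a few lines of Python. The `CaseStudy` fields and the sample library are hypothetical, and sorting by year is a simple stand-in for the semantic relevance ranking a real retrieval system would apply.

```python
from dataclasses import dataclass


@dataclass
class CaseStudy:
    title: str
    industry: str
    employees: int
    use_case: str
    year: int


def find_case_studies(library, industry, min_employees, use_case):
    """Keep studies matching all three filters, newest first."""
    matches = [
        cs for cs in library
        if cs.industry == industry
        and cs.employees >= min_employees
        and cs.use_case == use_case
    ]
    return sorted(matches, key=lambda cs: cs.year, reverse=True)


# Invented library entries, for illustration only.
library = [
    CaseStudy("Regional hospital network", "healthcare", 4200, "compliance", 2024),
    CaseStudy("Clinic scheduling rollout", "healthcare", 300, "scheduling", 2025),
    CaseStudy("Health insurer audit prep", "healthcare", 1500, "compliance", 2025),
    CaseStudy("Retail bank onboarding", "finance", 9000, "compliance", 2025),
]

results = find_case_studies(library, "healthcare", 1000, "compliance")
print([cs.title for cs in results])
```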

Getting approvals (variable: 30 minutes to 3 days)

Legal needs to review the terms. Finance needs to approve the pricing exception. The product team needs to verify the technical claims.

Each approval requires context ("here's what we're promising, here's why"), which means the rep spends time packaging information for internal reviewers, then waiting.

Time spent preparing approval requests: 30 minutes. Time spent waiting: depends on who's on vacation.

Managing version chaos (ongoing: 15-30 minutes per RFP)

Throughout this process, the rep is managing versions. The draft they started. The version with legal edits. The version with the updated pricing. The version they thought was final until the prospect asked for one more change.

"Wait, which version did we send?" is a question that shouldn't require forensic analysis of email timestamps.

Time lost to version confusion: 20 minutes per RFP. Embarrassment when the wrong version goes out: priceless.

With RAG: Every answer includes source attribution so you know exactly which document each piece came from. Version conflicts are flagged automatically. The system doesn't return "the security response from somewhere"; it returns "the security response from Enterprise_Proposal_Acme_2026.docx, page 14, last reviewed January 2026."

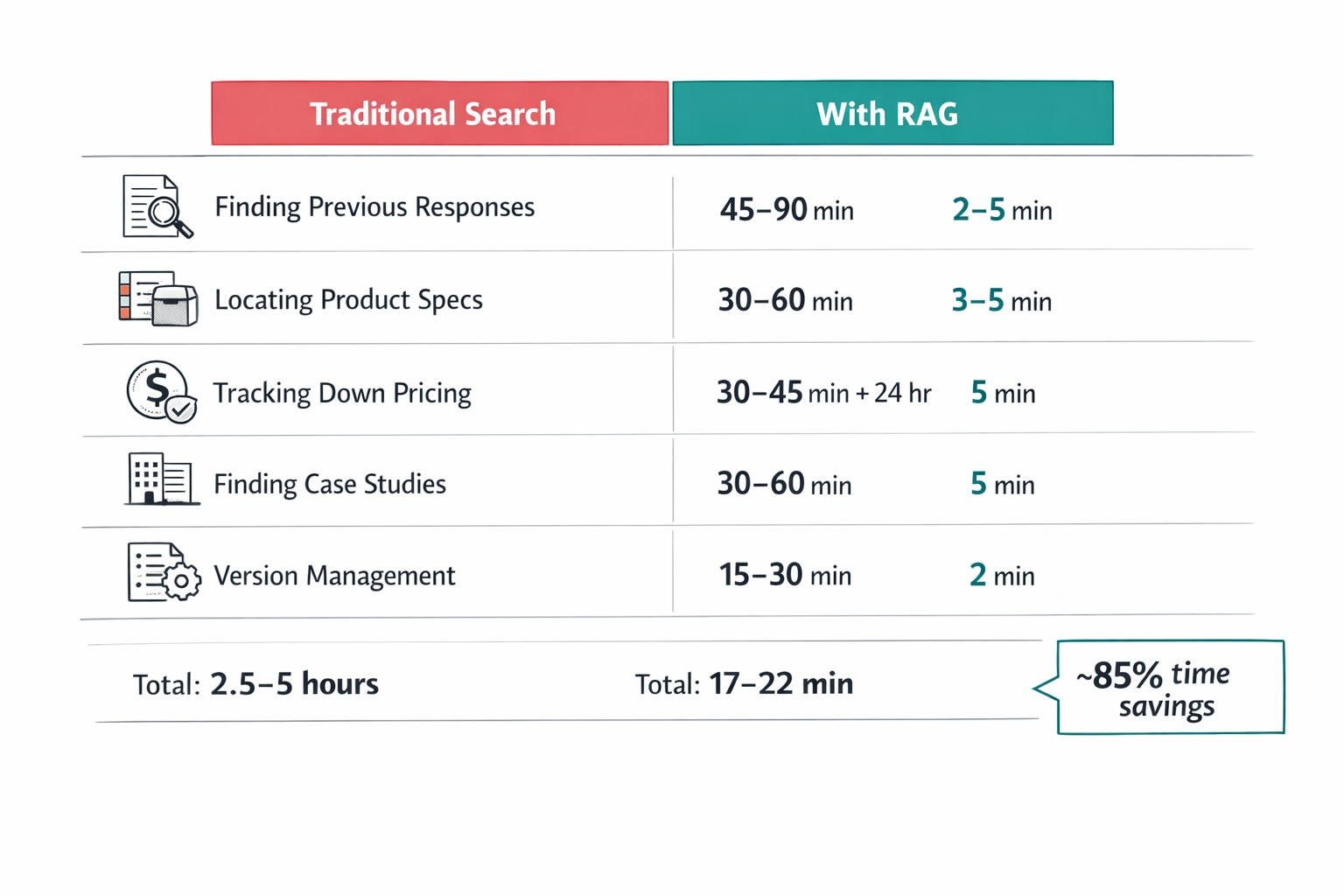

The time savings: RAG vs. traditional search

Let's add up what we just walked through:

| RFP Task | Traditional Time | With RAG | Savings |
|---|---|---|---|
| Finding previous responses | 45-90 min | 2-5 min | 85-95% |
| Locating product specs | 30-60 min | 3-5 min | 90-92% |
| Tracking down pricing | 30-45 min + 24hr wait | 5 min | 85%+ |
| Finding case studies | 30-60 min | 5 min | 83-92% |
| Version management | 15-30 min | 2 min | 87-93% |
| Total per RFP | 2.5-5 hours | 17-22 min | ~85% |

This level of improvement is structural, not incremental. It changes how RFP work gets done entirely.
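
The totals can be checked by summing the rows. This sketch copies the minute ranges from the table above and leaves out the 24-hour pricing wait, which is an assumption on our part since waiting time is not hands-on work.

```python
# (low, high) minutes per RFP: first pair is traditional, second is with RAG.
TASKS = {
    "Finding previous responses": ((45, 90), (2, 5)),
    "Locating product specs": ((30, 60), (3, 5)),
    "Tracking down pricing": ((30, 45), (5, 5)),
    "Finding case studies": ((30, 60), (5, 5)),
    "Version management": ((15, 30), (2, 2)),
}


def total_range(tasks, column):
    """Sum the low and high ends of one column (0 = traditional, 1 = with RAG)."""
    low = sum(times[column][0] for times in tasks.values())
    high = sum(times[column][1] for times in tasks.values())
    return low, high


traditional = total_range(TASKS, 0)  # (150, 285) minutes
with_rag = total_range(TASKS, 1)     # (17, 22) minutes

# Worst case pairs the slowest RAG time with the fastest traditional time.
worst_case = 1 - with_rag[1] / traditional[0]
best_case = 1 - with_rag[0] / traditional[1]

print(f"Traditional: {traditional[0]}-{traditional[1]} min")
print(f"With RAG: {with_rag[0]}-{with_rag[1]} min")
print(f"Savings: {worst_case:.0%} to {best_case:.0%}")
```

Even the least favorable pairing lands at about 85%, which is why that figure works as a conservative headline number.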

The real cost calculator: do the math for your team

Let's make this concrete with a simple formula:

Annual RFP Cost = Number_of_Reps × Average_Salary × RFP_Time_Percentage

Example: 20 reps × $100,000 × 0.25 = $500,000/year in direct labor

With RAG (85% reduction): $500,000 × 0.15 = $75,000/year → $425,000 saved
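
If you'd like to plug in your own numbers, the formula translates directly into a small Python sketch. The function and parameter names are ours, chosen for illustration.

```python
def annual_rfp_cost(num_reps, avg_salary, rfp_time_share):
    """Direct labor cost of RFP work: reps x salary x share of time on RFPs."""
    return num_reps * avg_salary * rfp_time_share


def cost_after_reduction(cost, reduction):
    """Cost remaining after cutting RFP time by `reduction` (0.85 means 85%)."""
    return cost * (1 - reduction)


baseline = annual_rfp_cost(num_reps=20, avg_salary=100_000, rfp_time_share=0.25)
remaining = cost_after_reduction(baseline, reduction=0.85)
saved = baseline - remaining

print(f"Baseline:  ${baseline:,.0f}/year")
print(f"Remaining: ${remaining:,.0f}/year")
print(f"Saved:     ${saved:,.0f}/year")
```

Swapping `rfp_time_share` between 0.20 and 0.30 gives the low and high ends of the range for your team.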

If you'd rather skip the spreadsheet, you can calculate your team's RFP labor cost and pipeline opportunity in 30 seconds with our free RFP ROI calculator — no sign-up required.

Example: a 20-rep team

| Input | Value |
|---|---|
| Number of sales reps | 20 |
| Average fully-loaded salary | $100,000 |
| Percentage of time on RFPs | 25% (midpoint of 20-30%) |
| Direct labor cost | $500,000/year |

Half a million dollars. Not on selling. Not on building relationships. On finding documents.

The hourly breakdown

For an individual rep at $100K salary:

- Hourly rate (fully loaded): ~$50/hour

- Hours per week on RFPs (at 25%): 10 hours

- Hours per month: 43 hours

- Hours per year: 520 hours

- Annual cost per rep: ~$26,000 (520 hours × $50; at exactly 25% of a $100K salary, $25,000)

That's roughly $25,000-$26,000 per rep, per year, spent on administrative overhead that could be automated or eliminated.
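
The breakdown can be reproduced in a few lines. This sketch assumes a 2,080-hour work year (40 hours × 52 weeks), which the figures above imply but do not state. It also shows why the rounded $50/hour rate yields $26,000 while the unrounded rate yields exactly 25% of salary, $25,000.

```python
HOURS_PER_WEEK = 40   # assumed standard work week
WEEKS_PER_YEAR = 52   # assumed; no adjustment for holidays or vacation


def rfp_hours_breakdown(salary, rfp_share, hourly_rate=None):
    """Per-rep RFP hours and cost; pass hourly_rate to use a rounded rate."""
    annual_hours = HOURS_PER_WEEK * WEEKS_PER_YEAR
    rate = hourly_rate if hourly_rate is not None else salary / annual_hours
    hours_per_year = annual_hours * rfp_share
    return {
        "hourly_rate": rate,
        "hours_per_week": HOURS_PER_WEEK * rfp_share,
        "hours_per_month": hours_per_year / 12,
        "hours_per_year": hours_per_year,
        "annual_cost": hours_per_year * rate,
    }


exact = rfp_hours_breakdown(100_000, 0.25)
rounded = rfp_hours_breakdown(100_000, 0.25, hourly_rate=50)

print(f"Exact rate:   ${exact['hourly_rate']:.2f}/hour, ${exact['annual_cost']:,.0f}/year")
print(f"Rounded rate: ${rounded['hourly_rate']:.2f}/hour, ${rounded['annual_cost']:,.0f}/year")
```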

The opportunity cost multiplier

But direct labor cost is only half the story. The real cost is what reps don't do while they're hunting for case studies.

Consider: What's the value of a sales call? If a rep closes 20% of qualified opportunities at an average deal size of $50K, each qualified conversation is worth $10K in expected value.

Every hour spent on RFP admin is an hour not spent on:

- Discovery calls with new prospects

- Follow-ups that move deals forward

- Relationship building with champions

- Competitive deals that need attention

Conservative estimate: The opportunity cost is 2-3x the direct labor cost.

For our 20-rep team, that's not $500K but $1-1.5M in total impact.
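
The arithmetic behind those two figures is simple enough to sketch. Note that the 2-3x multiplier is the estimate stated above, not something this code derives; the function names are ours.

```python
def expected_value_per_opportunity(win_rate, avg_deal_size):
    """Expected revenue from one qualified opportunity."""
    return win_rate * avg_deal_size


def opportunity_cost_range(direct_labor_cost, multipliers=(2, 3)):
    """Apply the estimated low and high multipliers to the direct labor cost."""
    return tuple(direct_labor_cost * m for m in multipliers)


per_opportunity = expected_value_per_opportunity(win_rate=0.20, avg_deal_size=50_000)
low, high = opportunity_cost_range(500_000)

print(f"Expected value per qualified opportunity: ${per_opportunity:,.0f}")
print(f"Estimated impact: ${low:,.0f} to ${high:,.0f}")
```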

The quotable number

A team of 20 reps at $80K average salary loses $320K-$480K annually to RFP inefficiency, before accounting for the deals they didn't close because they were too busy searching for documents.

Want to see the number for your team specifically? Model your own RFP cost and savings with five sliders.

Why this isn't a people problem

The uncomfortable truth for sales leaders: the bottleneck is your systems, not your people.

Even your top performers, the ones who close the most deals and know the product inside out, lose the same 20-30% to RFP overhead. Most organizations misdiagnose this as a training problem or a motivation problem. Our data from deployments across 50+ enterprise teams tells a different story: the time drain comes from information architecture that makes searching impossible, not from reps who don't know how to search.

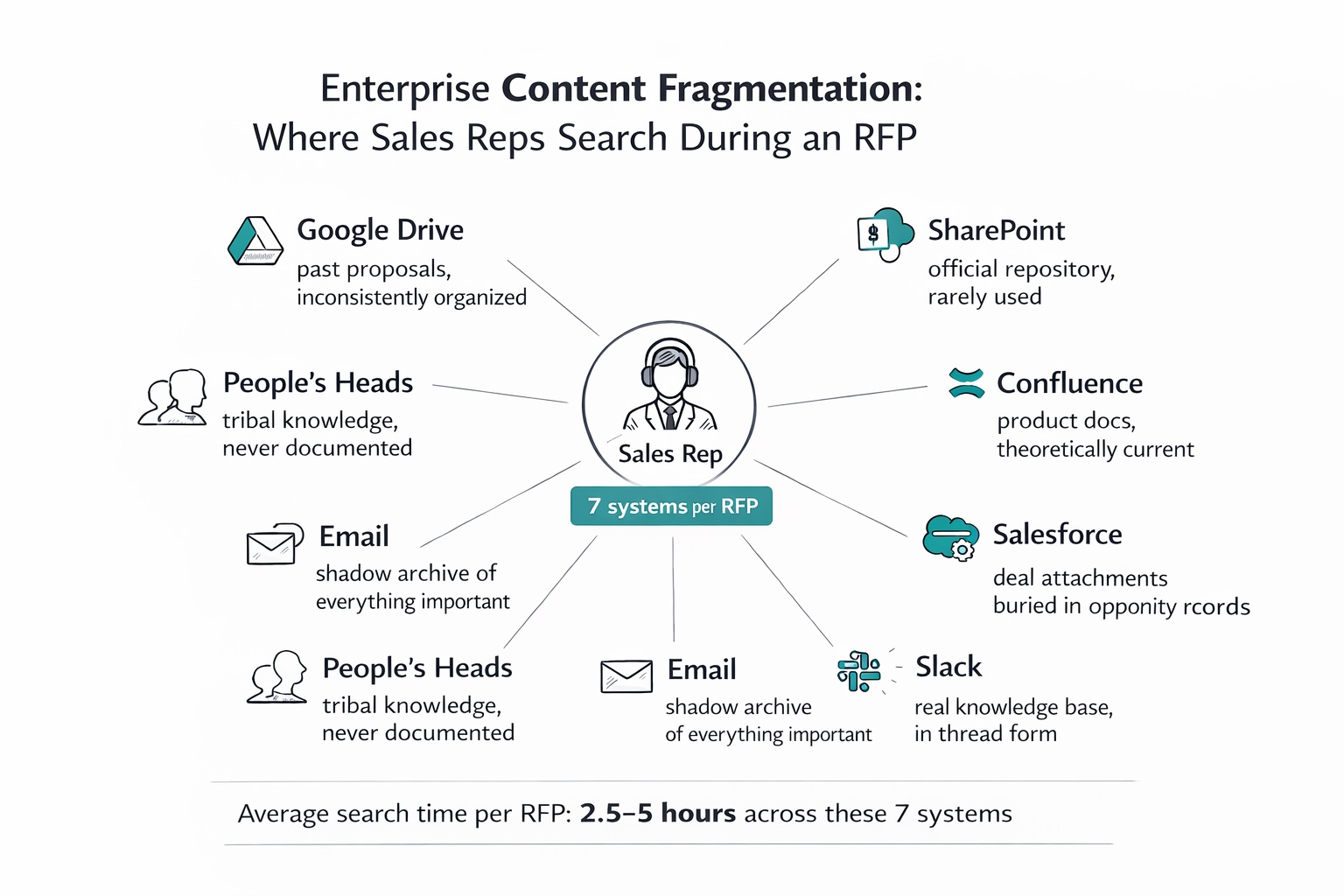

The fragmentation reality

Where does your RFP content live? Count the systems:

- Google Drive: past proposals, maybe organized, maybe not

- SharePoint: the "official" repository nobody uses

- Confluence: product documentation, theoretically

- Salesforce: deal-specific attachments buried in opportunity records

- Slack: the real knowledge base (in thread form)

- Email: the shadow archive of everything important

- People's heads: the tribal knowledge that never got documented

A rep answering an RFP doesn't need better search skills. They need information that exists in one place, is verifiably current, and can be found with a single query.

This is exactly what RAG provides: a unified semantic search layer across all your content sources, with contradiction detection and source attribution built in. The information doesn't have to be reorganized. RAG indexes it where it lives and makes it findable.

The "who knows?" problem

In most organizations, the fastest way to find information is asking someone, not searching for it.

"Who handled the Acme deal? They had a similar security question." "Does anyone have the updated pricing for multi-year contracts?" "Where's the case study marketing did for that healthcare company?"

This works until the person who knows is on vacation. Or leaves the company. Or is in back-to-back meetings when the RFP is due.

Tribal knowledge is a single point of failure disguised as institutional memory.

RAG systems change this dynamic. When the fastest way to find information is actually searching (because search actually works), knowledge gets documented. When reps can ask "How did we handle the Acme security objection?" and get a real answer, they stop relying on whoever happens to be online.

The version trust problem

Even when reps find content, they can't trust it. Is this the current pricing? Is this case study still accurate? Does this product claim reflect what we actually ship?

We've written extensively about why nobody knows which version is correct. The short version: content decays, nobody maintains it, and reps learn to distrust everything, so they verify everything, adding time to every task.

The solution is building systems where the right answer surfaces first, every time, with source attribution so trust is verifiable rather than assumed.

For a deep dive into how AI can catch conflicting information before it reaches prospects, see How AI Can Detect Conflicting Sales Messaging Before Your Prospects Do.

The compounding effect: RFPs are just the visible part

The 20-30% stat is for RFPs specifically. But the same broken systems create the same time drain across every administrative task:

Proposals

Same problem, different format. Hunting for boilerplate, finding current terms, locating relevant references. The RFP time sink has a twin.

Contracts

Legal language, approved terms, exception tracking. How long does it take to find "the contract template we used for that enterprise deal with the custom SLA"?

Pricing requests

Every non-standard pricing request triggers the same search: What did we quote last time? What's the current discount authority? Who approved the exception for that similar deal?

Competitive questions

Mid-deal, a prospect asks how you compare to Competitor X. The battlecard is... somewhere. Is it current? Does it reflect their latest product launch?

The pattern

Every time a rep needs historical content, current specs, or approved language, they face the same fragmented systems and the same trust problem. The 20-30% on RFPs is the visible tip of the iceberg. Below the waterline: another 10-20% on everything else.

Total administrative overhead for the average sales rep: 30-50% of their time.

At the high end, that number represents half your sales team's capacity. And it's why point fixes for individual workflows won't cut it. The change has to be foundational, in how sales teams access and trust their knowledge.

This is RAG's value proposition: not just faster RFP responses, but faster everything. The same semantic search that finds past proposal language also finds competitive intel, objection handling, product specs, and case studies. One system, one query interface, all your content, with source attribution and contradiction detection across the board.

The path forward: why RAG changes the equation

The problem is clear: information is scattered, search doesn't work, and content can't be trusted. The solution requires working differently, not harder.

What doesn't work

- Better folder organization: Folders don't solve search. They just create more places to look.

- Training on search skills: You can't out-skill a broken architecture.

- More documentation: Creating content doesn't help if reps can't find it or trust it.

- Dedicated RFP staff: Moves the problem, doesn't solve it. Now the RFP team is drowning instead.

- Generic AI chatbots: ChatGPT doesn't know your pricing, your product specs, or your past proposals. It will confidently make things up, creating legal and credibility risks worse than the problem you're solving.

Why RAG is different

Our approach at Mojar is built on RAG (Retrieval-Augmented Generation), which is the architecture that actually solves this. Unlike generic chatbots that guess at answers, RAG grounds every response in your real documents. The impact on RFP workflows is direct:

The core concept: When a rep asks "What's our standard response to SOC 2 compliance questions?", a RAG system first retrieves relevant content from your actual documents (past proposals, security documentation, approved language), then generates a response grounded in that content, with citations showing exactly where each piece came from.
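
The retrieve-then-generate flow can be sketched in plain Python. This is a toy under loud assumptions: word-overlap cosine similarity stands in for real semantic embeddings, the three documents are invented placeholder text, and the final step only builds the grounded prompt rather than calling a language model. It is not Mojar's implementation.

```python
import math
import re
from collections import Counter

# Invented placeholder documents standing in for an indexed knowledge base.
DOCS = {
    "security_overview.md": "Our SOC 2 Type II audit is renewed annually and covers security and availability.",
    "pricing_2026.md": "Enterprise pricing is quoted per seat with multi-year discounts.",
    "proposal_acme.md": "For SOC 2 compliance questions we attach the latest audit report under NDA.",
}


def tokenize(text):
    """Lowercased word counts; a crude substitute for an embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=2):
    """Return the names of the k documents most similar to the query."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(text)), name) for name, text in docs.items()]
    scored = [(score, name) for score, name in scored if score > 0]
    return [name for _, name in sorted(scored, reverse=True)[:k]]


def build_prompt(query, docs, sources):
    """Assemble a prompt that restricts the model to the retrieved, named sources."""
    context = "\n".join(f"[{name}] {docs[name]}" for name in sources)
    return (
        "Answer using only the sources below and cite them by name.\n"
        f"{context}\n\nQuestion: {query}"
    )


query = "What is our standard response to SOC 2 compliance questions?"
sources = retrieve(query, DOCS)
prompt = build_prompt(query, DOCS, sources)
print(prompt)
```

Because each retrieved passage carries its file name into the prompt, the generated answer can cite where every claim came from, which is the property the rest of this section relies on.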

This solves the RFP problem at every level:

| RFP Pain Point | How RAG Addresses It |
|---|---|
| Finding previous responses | Semantic search understands meaning, not just keywords. "Security compliance answer" finds relevant content even if those exact words aren't used. |
| Locating current specs | RAG retrieves from your indexed documents (product docs, technical specs, release notes) and can flag when sources conflict or are outdated. |
| Tracking down pricing | One query surfaces all pricing references, with source attribution so reps can verify currency. |
| Finding case studies | "Healthcare case study for enterprise" returns ranked results from your actual library, not a generic AI hallucination. |
| Version trust | Every answer shows its source. Reps can click through to verify, and the system can flag documents that haven't been reviewed recently. |

The key difference from generic AI: because RAG grounds each answer in your actual pricing documents and real product specs, it is far less likely to hallucinate a price or invent a feature. Every answer is traceable, which means every answer is verifiable.

What RAG-powered RFP response looks like

| Without RAG | With RAG |
|---|---|
| Rep searches 5 systems for past security response | Rep asks: "How did we answer the SOC 2 question for enterprise deals?" |
| Finds 3 versions, unsure which is current | System returns the most relevant response with source citation |
| Asks colleague to verify accuracy | Rep clicks source link to verify, sees document was updated last month |
| Copies, pastes, hopes it's right | Customizes confident answer in 5 minutes instead of 45 |
| Time: 45-90 minutes | Time: 5-10 minutes |

The math changes dramatically. If RAG reduces RFP time by even 60%, that 20-rep team recovers $300K+ in direct labor and gets those hours back for actual selling.

See it in action

We built this to show, not just tell. When we deployed Mojar for a healthcare organization's procurement team, they went from a 3-day RFP turnaround to same-day responses. The hands-on experience of watching real-world sales teams use retrieval-augmented search, and seeing the relief on their faces when a 45-minute hunt becomes a 2-minute query, is what convinced us this was a platform problem, not a feature. Watch a complete healthcare RFP response generated in under 2 minutes, with source citations on every answer, pulled from real product docs and compliance language.

For a deeper dive into how RAG systems work and how to evaluate them for your revenue team, see our complete guide: RAG for Marketing & Sales: The Complete Guide to AI-Powered Knowledge Management.

The bottom line

For a 20-rep team, the 20-30% of sales time lost to RFPs represents a roughly $500K annual leak, and it compounds across every administrative task your reps face.

The fix is eliminating the search itself, and RAG is the technology architecture that makes it possible. By grounding AI responses in your actual documents, with source attribution and semantic understanding, RAG transforms RFP response from a document archaeology expedition into a conversation with your knowledge base.

In our experience leading sales enablement transformations, the teams that recover this time don't just respond faster; they respond better. We recommend treating RFP efficiency as a revenue operations initiative, not an IT project, because the ROI flows directly to pipeline velocity and win rates.

The organizations that figure this out first will have a structural advantage. Their reps will work more deals, respond faster, and close more revenue, not because they're better salespeople, but because they're not wasting a third of their time hunting for information that should be instantly accessible.

The organizations that don't will keep asking Slack.

Next steps: a checklist for getting started

Step 1: Calculate your own cost. Use the template above with your team size, average salary, and estimated 25% RFP time. Download the Gartner guide on sales productivity metrics for additional benchmarks.

Step 2: Understand the solution landscape. RAG for Marketing & Sales: The Complete Guide covers how to evaluate options and what to look for in a retrieval-augmented system.

Step 3: Audit your current workflow. Map where your RFP content lives today. If it's more than 3 systems, you have a fragmentation problem that training can't solve.

Step 4: See the related problem. "Is This the Latest Deck?" Why Nobody Knows Which Version Is Correct explains why content chaos makes every search harder.

Ready to try Mojar? Schedule a demo with your actual RFP content and we'll show you what instant retrieval looks like in practice. Or get started with a free trial to see how much time your team can recover.

Frequently Asked Questions

Research shows sales teams spend 20-30% of their time on RFP responses. For a rep working 40 hours per week, that's 8-12 hours, not writing proposals but hunting through documents, finding current pricing, locating past responses, and chasing approvals. Most of this time is spent searching, not selling.

For a team of 20 reps at $80K average salary, RFP inefficiency costs $320K-$480K annually in direct labor. Add opportunity cost (deals not worked, calls not made) and the real impact is 2-3x higher. Every hour spent hunting for a case study is an hour not spent with prospects.

RAG (Retrieval-Augmented Generation) eliminates the search. When a rep asks 'What's our standard SOC 2 compliance response?', RAG retrieves relevant content from past proposals, security docs, and approved language, then generates an answer with source citations. Tasks that took 45 minutes become 5-minute queries.

ChatGPT doesn't know your pricing, your product specs, or your past proposals. It will confidently make things up, creating legal and credibility risks worse than the problem you're solving. RAG grounds every answer in your actual documents, with citations showing exactly where each piece came from.

RFP inefficiency is a systems problem, not a people problem. Even top performers spend excessive time on RFPs because the information they need is scattered across disconnected systems with no unified search. RAG solves this at the architecture level: semantic search across all sources, with contradiction detection and freshness tracking.

Organizations report 60-80% reduction in RFP response time when answers can be automatically retrieved from existing documentation. For a 20-rep team losing $500K annually to RFP inefficiency, even a 50% improvement recovers $250K+ in direct labor, plus the opportunity cost of deals that get more attention.