RAG for marketing and sales: the complete guide

A practitioner's guide to RAG for revenue teams. Covers contradiction detection, content maintenance, RFP acceleration, and implementation from real deployments.

A rep is on a call. The prospect asks about enterprise pricing. The rep digs through three folders, two Slack threads, and a six-month-old deck that may or may not reflect current numbers. The prospect waits. The deal stalls.

We've watched this scenario play out across every revenue organization we've deployed with. Marketing launches a campaign claiming real-time analytics. Sales tells prospects it's batch processing. Product documentation says both, depending on the page. Nobody catches the contradiction until a prospect does, in the middle of a $500K deal.

Revenue teams don't have a content creation problem. They have a content chaos problem. Traditional tools like SharePoint, Confluence, and even purpose-built enablement platforms were designed to store content. They were never designed to verify that content is accurate, current, or consistent across teams.

RAG (Retrieval-Augmented Generation) changes that equation. This guide covers everything revenue teams need to know: what RAG is, how it works for sales and marketing specifically, how to evaluate solutions, and how to implement it based on patterns we've seen across 50+ enterprise deployments.

Adi Ghiuro leads product at Mojar and has run implementations with enterprise revenue teams across SaaS, financial services, and manufacturing. Our approach here is to be direct about what works and where other tools are a better fit.

What RAG for sales and marketing actually delivers

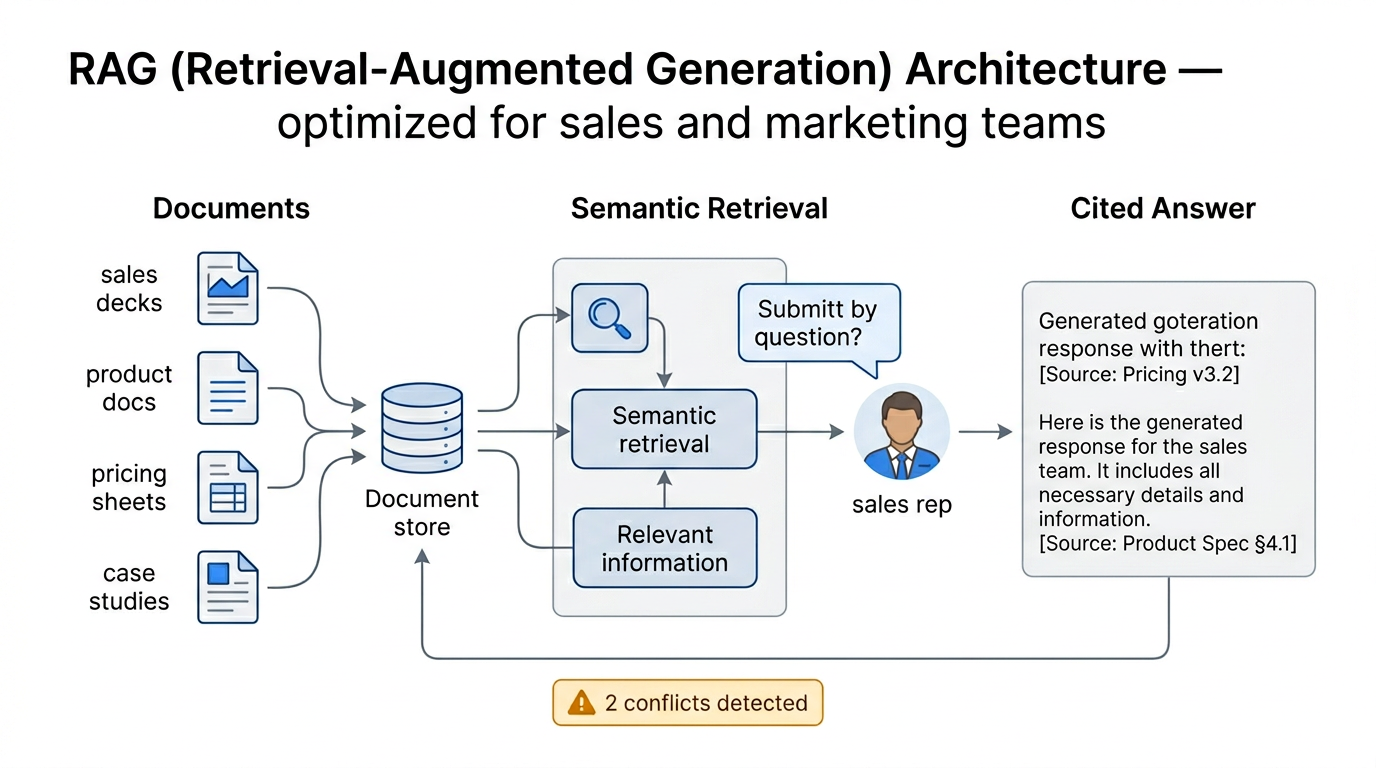

RAG stands for Retrieval-Augmented Generation. When someone asks a question, the system first retrieves relevant content from your actual documents, then generates a response grounded in that content, with citations showing exactly where each piece of information came from.

For revenue teams, this addresses the fundamental trust problem with AI: hallucination. Generic tools like ChatGPT will confidently invent pricing, fabricate features, and generate case studies from thin air. A well-built RAG system sharply reduces that risk because every answer is traceable to a source document your team uploaded and controls, so a claim without a citation stands out immediately.

When we built Mojar's retrieval pipeline, we tested five different embedding models before landing on one that understood the nuance between "enterprise pricing" and "enterprise features." That distinction matters because a rep asking about pricing shouldn't get feature documentation, even though both contain the word "enterprise." Semantic understanding, not keyword matching, is what makes RAG work for revenue teams.

Beyond basic retrieval

Retrieval is the foundation, but the real value comes from what you build on top of it:

- Instant answers during live deals: "Do we have a healthcare case study for companies over 1,000 employees?" returns a cited answer in seconds, not a folder to dig through

- Contradiction detection: Surfaces mismatches between what marketing claims, what sales promises, and what product documentation actually says

- Automated content maintenance: Flags stale playbooks, outdated battlecards, and documents that reference deprecated features or old pricing

- Knowledge capture: Converts tribal knowledge into searchable institutional memory before your top performers leave

- RFP acceleration: Pulls approved, cited answers from existing documentation instead of forcing reps to hunt and assemble responses manually

Each of these capabilities addresses a specific, measurable problem. The rest of this guide breaks down how they work, how to evaluate solutions, and how to implement them.

The five core RAG capabilities for revenue teams

1. Semantic knowledge retrieval with source citations

Traditional search tools match keywords. A rep searching for "security compliance" might miss the document titled "SOC 2 Requirements and Data Protection Standards" because the exact phrase doesn't appear. Semantic retrieval understands that these topics are related and surfaces the right content regardless of how it's labeled.

Every answer includes direct links to the source documents. When a rep quotes a number on a call, they can verify where it came from in real time. Our customers report that this single capability, cited answers instead of guesswork, is the primary driver of adoption.

What this looks like in practice: A rep asks, "What's our compliance story for financial services clients?" The system retrieves the SOC 2 certification document, the financial services case study, and the relevant section of the security whitepaper, then synthesizes a response with citations to each source. The rep gets a usable answer in under ten seconds.
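The retrieve-then-cite flow described above can be sketched in a few lines. This is a minimal illustration, not Mojar's pipeline: the `embed` function is a word-count stand-in for a real semantic embedding model (so it will not match synonyms the way a production system would), and the names `Passage`, `retrieve`, `answer`, `min_score`, and the sample passages are all assumptions made for the example.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass

NO_ANSWER = "I don't have information on that."

@dataclass
class Passage:
    doc: str   # title of the source document
    text: str  # the passage itself

def embed(text: str) -> Counter:
    # Stand-in for a semantic embedding model (assumption): word counts only.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, passages, k=2, min_score=0.2):
    # Score every passage, keep the top k that clear the relevance threshold.
    query_vec = embed(query)
    scored = [(cosine(query_vec, embed(p.text)), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(score, p) for score, p in scored[:k] if score >= min_score]

def answer(query, passages):
    hits = retrieve(query, passages)
    if not hits:
        return NO_ANSWER  # refuse instead of guessing
    # A real system would hand the hits to an LLM; here we only show the grounding.
    return "\n".join(f'"{p.text}" [source: {p.doc}]' for _, p in hits)

PASSAGES = [
    Passage("SOC 2 Requirements and Data Protection Standards",
            "Our SOC 2 Type II certification covers data protection for financial services clients"),
    Passage("Enterprise Pricing Sheet",
            "Enterprise pricing starts with an annual contract and volume discounts"),
]

print(answer("What is our SOC 2 certification for financial services", PASSAGES))
print(answer("parental leave policy", PASSAGES))
```

The threshold is the design choice worth noticing: when nothing in the knowledge base is relevant enough, the function returns an explicit "no information" message rather than the closest weak match.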

2. Contradiction detection across documents

This is the capability most solutions miss entirely, and it's the one that prevents the most damaging failures. We've written extensively about how RAG detects conflicting sales messaging in a dedicated deep dive.

When we first deployed contradiction detection with an enterprise customer, the system flagged three conflicting pricing structures in the first 48 hours. Marketing's website said one number, the sales deck said another, and a proposal template from six months earlier had a third. All three were being used actively. Nobody on the team knew the others existed.

According to Gartner's 2024 research, marketing and sales functions collaborate on only 3 out of 15 commercial activities. That organizational distance creates fertile ground for contradictions. Your RAG system should catch them before prospects do.

Common contradiction patterns we see:

| Contradiction type | Example | Frequency |
|---|---|---|
| Marketing vs. product docs | Website claims "real-time" while docs say "batch processing" | Very common |
| Sales deck vs. current pricing | Old proposal templates with discontinued pricing tiers | Common |
| Regional messaging conflicts | US site says "HIPAA compliant" while EU site omits it | Moderate |
| Feature claims vs. roadmap | Marketing promises features still in development | Common |
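One simplified way to picture contradiction detection: once claims have been extracted from each document as (document, attribute, value) records, any attribute with more than one distinct value is a candidate conflict. The sketch below assumes the extraction step has already happened (in practice that is the hard part, typically done with an LLM or rules); the `Claim` type, attribute names, and sample data are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    doc: str        # where the claim was found
    attribute: str  # what the claim is about
    value: str      # what the document says

def find_contradictions(claims):
    # attribute -> normalized value -> list of documents making that claim
    by_attribute = defaultdict(lambda: defaultdict(list))
    for claim in claims:
        by_attribute[claim.attribute][claim.value.strip().lower()].append(claim.doc)
    # Keep only attributes where documents disagree.
    return {
        attribute: dict(values)
        for attribute, values in by_attribute.items()
        if len(values) > 1
    }

CLAIMS = [
    Claim("Website", "analytics latency", "Real-time"),
    Claim("Sales deck", "analytics latency", "real-time"),
    Claim("Product docs", "analytics latency", "batch processing"),
    Claim("Website", "soc 2 status", "certified"),
    Claim("Security whitepaper", "soc 2 status", "certified"),
]

for attribute, values in find_contradictions(CLAIMS).items():
    print(f"Conflict on '{attribute}':")
    for value, docs in values.items():
        print(f"  {value}: {', '.join(docs)}")
```

Reporting every source for each conflicting value mirrors the "Marketing says X, Product docs say Y" alert format, so the content owner can see both sides at once.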

3. Automated content maintenance

Knowledge bases decay faster than teams can update them. We've seen this cycle repeat in every organization: a playbook launches with fanfare, reps use it for a few weeks, product changes happen, the playbook drifts out of date, reps get burned by outdated information, and the whole system loses trust. We wrote about this pattern in detail in why nobody trusts their sales wiki.

According to research from Datategy, content accuracy affects up to 40% of content effectiveness. When reps stop trusting the knowledge base, they default to Slack questions and tribal knowledge, which is exactly the problem you were trying to solve.

Advanced RAG platforms include maintenance agents that break this cycle:

- Staleness detection: Flags documents that haven't been reviewed in a configurable timeframe

- Deprecated reference scanning: Catches mentions of old product names, former employees, discontinued features, or sunset pricing

- Conflict-triggered updates: When new content is added, the system identifies older documents that may need revision

- Feedback-driven investigation: When users report a bad answer, the system traces it to the source document and flags the content owner

The vision, and what we're building toward at Mojar, is a self-improving knowledge base where bad experiences trigger automatic quality investigations, not just frustrated Slack messages.
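The first two checks in the list above, staleness detection and deprecated reference scanning, are simple enough to sketch directly. This is an illustrative minimum, not a description of any product's maintenance agent; the `Doc` fields, the 90-day window, and the "Starter tier" term are assumptions for the example.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Doc:
    title: str
    text: str
    last_reviewed: date

def maintenance_flags(doc, today, max_age_days, deprecated_terms):
    flags = []
    # Staleness: the review date is older than the configured window.
    age_days = (today - doc.last_reviewed).days
    if age_days > max_age_days:
        flags.append(f"stale: last reviewed {age_days} days ago")
    # Deprecated references: case-insensitive substring scan.
    lowered = doc.text.lower()
    for term in deprecated_terms:
        if term.lower() in lowered:
            flags.append(f"deprecated reference: {term}")
    return flags

battlecard = Doc(
    title="Competitor battlecard",
    text="Position against their entry plan using our Starter tier pricing.",
    last_reviewed=date(2025, 1, 1),
)
print(maintenance_flags(battlecard, date(2025, 6, 1), 90, ["Starter tier"]))
```

A substring scan will miss paraphrases and misspellings; it is a cheap first pass that catches exact mentions of retired names.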

4. RFP and proposal acceleration

Sales teams spend 20-30% of their time on RFP responses (Stack AI). We've broken down what that actually costs in dollar terms and it consistently surprises sales leaders.

The core problem is that RFP responses require assembling information from multiple sources: product documentation, past proposals, compliance certifications, pricing sheets, and case studies. Without RAG, this means hours of manual document hunting. With RAG, a rep asks a question and gets an answer assembled from approved content, with citations, in seconds.

We've also published a detailed guide on what actually works in AI-powered RFP automation, covering the technical distinction between RAG retrieval and AI generation for proposals.

Where RAG helps most in the RFP workflow:

| RFP task | Without RAG | With RAG |
|---|---|---|
| Finding relevant past responses | 30-45 min of folder diving | 10-15 seconds, cited |
| Locating compliance documentation | Multiple systems, multiple contacts | Instant retrieval with version info |
| Verifying current pricing | Slack thread asking "is this still right?" | Source-attributed current pricing |
| Finding relevant case studies | "Does anyone have a healthcare case study?" | Semantic match to industry and size |
| Checking feature accuracy | Cross-referencing product docs manually | Contradiction-checked response |

Our customers consistently report a 60-80% reduction in RFP response time when answers can be automatically pulled from existing documentation. The time savings come almost entirely from eliminating the search overhead, not from faster writing.
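To translate those percentages into hours, a rough back-of-the-envelope calculation helps. The sketch below combines the 20-30% time share and the 60-80% reduction cited above; the 40-hour work week is our assumption for illustration, as is the simplification that the reduction applies evenly to all RFP time.

```python
def weekly_rfp_hours_saved(week_hours, rfp_share_pct, reduction_pct):
    # Hours spent on RFPs, then the portion of those hours saved.
    rfp_hours = week_hours * rfp_share_pct / 100
    return rfp_hours * reduction_pct / 100

WEEK_HOURS = 40  # assumption: a standard full-time week

low = weekly_rfp_hours_saved(WEEK_HOURS, 20, 60)   # conservative end of both ranges
high = weekly_rfp_hours_saved(WEEK_HOURS, 30, 80)  # optimistic end of both ranges
print(f"Estimated hours saved per rep per week: {low:.1f} to {high:.1f}")
```

Under these assumptions the range works out to roughly 4.8 to 9.6 hours per rep per week. Swap in your own week length and measured RFP share before using the figure in a business case.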

5. Knowledge capture and institutional memory

When a top-performing rep leaves, they take years of deal knowledge, objection handling patterns, and customer context with them. RAG systems capture that knowledge by indexing internal communications, deal notes, and documented processes, making them searchable for the entire team.

This matters for onboarding too. Instead of shadowing senior reps for weeks, new hires can query the knowledge base: "How have we handled the security objection with financial services prospects?" and get real answers drawn from actual deal history.

We've seen new rep ramp time decrease meaningfully when institutional knowledge becomes searchable. The exact improvement varies by organization, but the pattern is consistent: reps who can find answers independently ramp faster than those who depend on Slack and interrupting colleagues.

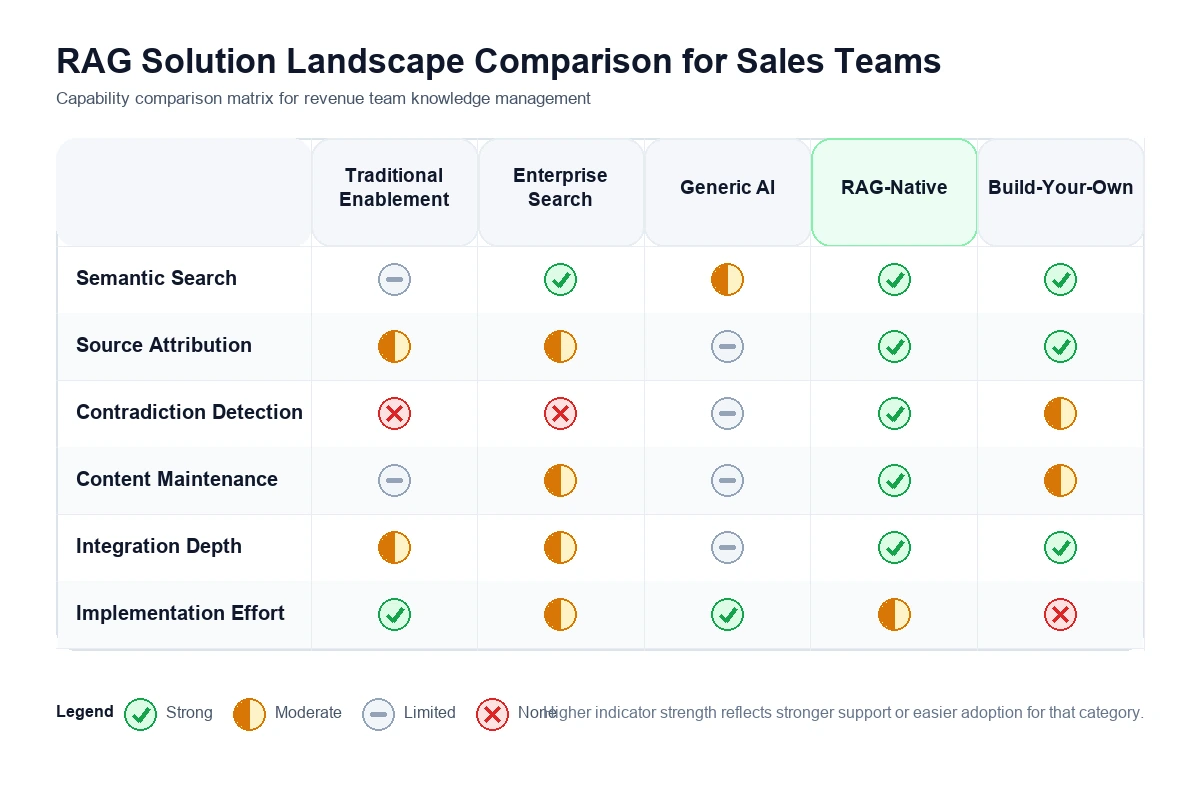

The solution landscape: understanding your options

If you're evaluating AI-powered knowledge management, you're looking at several distinct categories. Each has trade-offs worth understanding.

Traditional sales enablement platforms

Examples: Highspot, Seismic, Showpad

These platforms excel at content organization, engagement analytics, and sales-specific workflows. They track which decks get used, measure content engagement, and integrate with your CRM. What they typically lack is semantic search that understands meaning, contradiction detection across documents, and automated content maintenance.

Best for: Teams that need robust analytics and sales-specific workflows, and have dedicated enablement staff to maintain content manually.

Limitations: Content accuracy is a manual responsibility. If your battlecard is outdated, the platform won't catch it. It will just tell you how many times it was viewed. We've explored this problem in depth in our piece on why outdated battlecards persist.

Enterprise search tools

Examples: Glean, Guru, Notion AI

These tools index content across multiple systems and provide unified search interfaces. Better than folder diving, but most still rely on keyword matching with some semantic layering. They find documents well. They don't verify whether those documents are accurate, current, or consistent with each other.

Best for: Organizations that need unified search across many systems and can tolerate occasional retrieval of outdated content.

Generic AI assistants

Examples: ChatGPT, Claude, Gemini (without RAG integration)

Powerful for general tasks, but genuinely dangerous for revenue operations. They have no access to your internal content and will confidently fabricate answers. Ask "What's our enterprise pricing?" and you'll get a convincing, completely made-up number.

Best for: General productivity tasks that don't require accurate internal knowledge.

RAG-native platforms

Examples: Mojar, and emerging players building specifically for this category

Purpose-built for grounding AI responses in your actual documents. Key differentiators include source attribution on every answer, semantic search that understands context, and increasingly, automated content maintenance and contradiction detection.

Best for: Teams that need trustworthy AI answers backed by their actual content, especially those with large document volumes, compliance requirements, or multi-team content sprawl.

Build-your-own RAG

Some organizations attempt to build custom RAG systems using vector databases (Pinecone, Weaviate, Qdrant) and LLM APIs (OpenAI, Anthropic). This can work for technically sophisticated teams with specific requirements, but teams consistently underestimate the complexity of document parsing, chunking strategies, retrieval tuning, and ongoing maintenance.

We initially explored this path ourselves before building Mojar as a product. The core retrieval is straightforward. The last 20%, robust document parsing across dozens of formats, handling scanned PDFs, tuning chunk sizes for different content types, maintaining embedding freshness, is where the engineering investment compounds.

Best for: Organizations with dedicated AI engineering resources, specific integration requirements, and the willingness to maintain infrastructure long-term.

Quick comparison

| Capability | Traditional enablement | Enterprise search | Generic AI | RAG-native | Build-your-own |
|---|---|---|---|---|---|
| Content organization | Strong | Moderate | None | Moderate | Custom |
| Semantic search | Limited | Moderate | N/A (no internal data) | Strong | Custom |
| Source attribution | File-level | File-level | None | Passage-level | Custom |
| Contradiction detection | None | None | None | Advanced | Possible with effort |
| Content maintenance | Manual | Manual | None | Automated | Custom |
| Analytics | Strong | Basic | None | Growing | Custom |
| Implementation effort | Moderate | Low | None | Low-moderate | Very high |

How to evaluate RAG solutions: a framework for revenue teams

Not all RAG implementations deliver equal results. After deploying with 50+ enterprise teams, we've identified six criteria that separate systems that actually help from those that create new problems.

Criterion 1: Source attribution and traceability

If a rep quotes pricing based on AI output and that pricing is wrong, you need to know whether the AI hallucinated or retrieved outdated content. Source attribution is your audit trail.

Test this: ask for pricing and verify the system shows exactly which document and passage it came from. Ask about something that doesn't exist in your documents and confirm the system says "I don't have information on that." If the vendor demo only shows document-level attribution without passage-level citations, you'll spend time reading full documents to verify every answer.

Criterion 2: Semantic understanding vs. keyword matching

A rep asking "What's our response to the security objection?" needs the system to understand that "security objection" might live under "compliance concerns," "data protection standards," or "SOC 2 requirements" in different documents.

Test this by asking questions using different vocabulary than what's in your documents. If the system only returns results when you use exact phrases from the source material, it's keyword matching with a thin AI layer, not true semantic retrieval.

Criterion 3: Contradiction detection

90% of marketing and sales executives report conflicting functional priorities between departments. Inconsistent messaging erodes buyer confidence and surfaces at the worst possible time.

Test this: upload two documents with conflicting information. Ask a question requiring both. Does the system flag the conflict, or silently pick one? You want proactive alerts that surface "Marketing says X, Product docs say Y" with both sources cited.

Criterion 4: Content freshness and maintenance

Content accuracy affects up to 40% of content effectiveness (Datategy). A RAG system that retrieves outdated content with perfect citations is still delivering wrong answers; it just gives you a trail to the mistake.

Look for: staleness flags, detection of deprecated features or old pricing, automated alerts when new content contradicts existing documents, and a workflow that notifies content owners of needed updates.

Criterion 5: Integration depth

The best knowledge system is useless if reps have to leave their CRM or call platform to access it. Adoption depends on meeting people where they work. Look for CRM integrations (Salesforce, HubSpot), browser extensions for real-time access during calls, API access for custom workflows, and SSO integration.

Criterion 6: Access controls and compliance

Enterprise revenue teams have legitimate content boundaries: regional pricing, confidential competitive intel, pre-announcement product details. Verify role-based access controls, document-level permissions, audit trails, and data residency options for regulated industries (HIPAA, SOC 2, GDPR).

The hidden problem: knowledge base maintenance

Most RAG discussions focus on retrieval quality and ignore the harder problem: retrieval is only as good as what you're retrieving from. Organizations invest in creating sales playbooks, battlecards, and marketing assets. They rarely invest in maintaining them, and the knowledge base decays faster than the team can keep up.

The content decay cycle

We've watched this pattern repeat at every organization we've deployed with:

- Launch: New playbook ships with great fanfare. Enablement team celebrates.

- Adoption: Reps use it for two to four weeks. Usage metrics look great.

- Drift: Product ships a new feature. Pricing changes. A competitor launches something new. The playbook doesn't reflect any of it.

- Distrust: A rep cites outdated information on a call. Word spreads. Trust erodes.

- Abandonment: The playbook sits untouched. Reps ask Slack instead. The enablement team wonders why adoption dropped.

Traditional enablement platforms have no mechanism to break this cycle. They track access but not accuracy. Knowing a battlecard was viewed 47 times last month doesn't tell you whether it's still correct.

AI-powered maintenance: the missing layer

This is where next-generation RAG platforms diverge from basic retrieval systems. Advanced implementations include maintenance agents that actively work on content quality:

- Inconsistency detection: Automatically flags when a sales deck contradicts product documentation or when two documents make different claims about the same feature

- Staleness identification: Surfaces content that references deprecated features, old pricing structures, former employees, or processes that have changed

- Update suggestions: When new content is added to the system, the maintenance agent identifies older documents that may need revision to stay consistent

- Feedback-driven investigation: When users report a bad answer, the system traces the problem to its source document and notifies the content owner

At Mojar, we're building toward a self-improving knowledge base where every bad user experience triggers an automatic quality investigation. We're not there yet for every edge case, but the maintenance agent already catches the most common decay patterns: stale pricing, deprecated features, and cross-document contradictions.

Real-world impact: what the numbers show

The time drain

- Sales teams spend 20-30% of their time on RFP responses (Stack AI), with the majority of that time spent hunting through documents, not writing or thinking

- Reps spend significant portions of their day on non-selling activities like data entry, document search, and internal communication (Lystloc)

- Marketing teams report content creation timelines stretching to hours or days per piece due to fragmented source material (Stensul)

The trust problem

- 30-40% of CRM data is unreliable (Salesmate), leading to poor lead qualification and wasted effort

- 60% of sales forecasts miss by more than 20% (Kairntech), often because forecasting relies on fragmented, contradictory data

- Content accuracy affects up to 40% of content effectiveness (Datategy), which means inaccurate content can undercut a large share of what each asset is meant to achieve

The alignment gap

- Marketing and sales collaborate on only 3 out of 15 commercial activities (Gartner), leaving 80% of those activities siloed

- Different teams pulling from different sources creates conflicting narratives that prospects notice before your team does

What RAG deployments deliver

Based on data from our customer deployments:

- 60-80% reduction in RFP response time when approved answers can be retrieved automatically with citations

- Meaningful reduction in new rep ramp time when institutional knowledge becomes searchable instead of tribal

- Fewer deal-killing inconsistencies when contradiction detection catches conflicts before they reach prospects

- Higher enablement content adoption when reps can trust that retrieved content is current and verified

We're careful not to overstate these numbers. The exact impact varies significantly by organization size, content volume, content quality at baseline, and how deeply teams integrate RAG into their workflows. Organizations with well-maintained content see faster results. Organizations with significant content debt see big improvements after the initial cleanup, which RAG forces.

If these patterns match what you're seeing, try Mojar with your actual documents. A 30-minute session with your own content tells you more than any benchmark.

Implementation playbook: what actually works

These are the patterns that separate successful implementations from expensive disappointments, based on what we've seen across 50+ deployments.

Phase 1: Audit and scoping (weeks 1-2)

Before touching any technology, map the terrain.

Content inventory: What documents exist? Where do they live? Systems we typically see: Google Drive, SharePoint, Confluence, Notion, CRM attachments, email threads, Slack messages, and local hard drives that nobody talks about.

Pain point documentation: Gather specific examples, not vague complaints. "Last Tuesday, a rep quoted pricing from a 2024 deck on a call with Acme Corp" is actionable. "Our content is a mess" is not.

Use case prioritization: Pick one specific problem to solve first.

| Use case | Good first choice? | Why |
|---|---|---|
| RFP acceleration | Yes | Clear before/after metrics, contained scope |
| New rep onboarding | Yes | High value, measurable ramp time |
| Competitive intelligence | Moderate | Requires ongoing maintenance commitment |
| Content consistency audit | Yes | Immediate value, surfaces problems fast |
| Full knowledge management | No | Too broad for a pilot |

Stakeholder alignment: Identify who owns each content type. This step is unsexy but critical. RAG surfaces content problems that were previously invisible, and someone needs to own the fix.

Phase 2: Pilot deployment (weeks 3-6)

Start contained. We recommend:

- 50-100 documents in the initial knowledge base, not thousands. Quality over quantity.

- 5-10 power users who are motivated to give honest feedback, ideally a mix of senior reps and new hires

- One specific workflow (RFP responses, competitive questions, or product FAQ)

- Baseline metrics documented before launch: current time per RFP, number of "does anyone have..." Slack messages per week, new rep time-to-first-deal

Content preparation matters more than you expect: During the pilot, most organizations find that 20-30% of their "current" documents are outdated. That cleanup is painful but valuable. RAG forces the content audit that was already overdue.

What a healthy pilot looks like after four weeks:

- Users query the system daily without being reminded

- Reported answer accuracy exceeds 85%

- At least one "we didn't know these documents contradicted each other" discovery

- Clear feedback on what's missing from the knowledge base

Phase 3: Expansion and workflow integration (weeks 7-12)

Once the pilot proves value on a single use case, expand deliberately.

Add document sources based on what users asked for: During the pilot, track every query that returned "I don't have information on this." Those gaps tell you exactly which documents to add next.

Expand user access incrementally: Each new user group has different content needs and different workflow patterns. Rolling out department by department lets you tune the system for each group.

Establish maintenance workflows: This is where most implementations stall. Define clearly:

- Who reviews flagged content? (Content owners, not the AI team)

- How often? (Monthly at minimum for active content, quarterly for reference material)

- What's the escalation path when contradictions are found? (Product team resolves product claims, marketing resolves positioning claims)

Build feedback loops: Every user correction and "this document is outdated" flag should route to content owners. That feedback loop is what makes the system improve over time.

Phase 4: Measurement and optimization (ongoing)

Track metrics that matter, not vanity numbers.

| Metric | What it tells you | How to measure |
|---|---|---|
| Answer accuracy rate | Is the system giving correct information? | Weekly sample of 20 queries, manually verified |
| Query-to-action time | How fast do reps get from question to usable answer? | Compare pre-RAG and post-RAG workflows |
| Adoption rate | Are people actually using it? | Daily active users / total users |
| Trust indicators | Do reps rely on the system? | Survey, plus track "does anyone have..." Slack frequency |
| Contradiction discovery rate | Is the system finding problems? | Count of contradictions flagged per month |
| Content freshness score | How current is the knowledge base? | Percentage of documents reviewed within their freshness window |

Vanity metrics to ignore: total documents indexed, total queries processed, and "AI accuracy score" without methodology.
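Three of the metrics in the table are plain ratios, and it helps to pin down the methodology in code so the numbers mean the same thing every week. The function names and sample values below are illustrative assumptions, not outputs from a real deployment.

```python
def answer_accuracy_rate(verified):
    # verified: one boolean per sampled query, True if a human confirmed the answer.
    return sum(verified) / len(verified)

def adoption_rate(daily_active_users, total_users):
    return daily_active_users / total_users

def freshness_score(days_since_review, window_days):
    # Share of documents reviewed within their freshness window.
    within = sum(1 for days in days_since_review if days <= window_days)
    return within / len(days_since_review)

weekly_sample = [True] * 18 + [False] * 2  # hypothetical 20-query sample
print(f"Answer accuracy: {answer_accuracy_rate(weekly_sample):.0%}")
print(f"Adoption: {adoption_rate(30, 40):.0%}")
print(f"Freshness: {freshness_score([10, 45, 200, 30], window_days=90):.0%}")
```

Writing the definitions down this explicitly is what separates a usable accuracy figure from an "AI accuracy score" without methodology.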

Common pitfalls and how to avoid them

We've made some of these mistakes ourselves and watched customers make others.

Pitfall 1: Indexing everything at once

The instinct is to throw every document into the system immediately. This backfires. Old documents with outdated information dilute retrieval quality. A 2023 pricing sheet retrieved alongside the 2026 version creates confusion, not clarity.

Start with a curated, verified set of documents. Add sources deliberately, validating content as you go.
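One practical way to curate before indexing is to keep only the newest version of each document family, so a superseded pricing sheet never competes with the current one at retrieval time. The sketch assumes each document carries `family` and `year` metadata, which is a hypothetical schema; real content often needs manual tagging to get there.

```python
def latest_versions(docs):
    # Keep one document per family: the one with the highest year.
    latest = {}
    for doc in docs:
        current = latest.get(doc["family"])
        if current is None or doc["year"] > current["year"]:
            latest[doc["family"]] = doc
    return list(latest.values())

DOCS = [
    {"family": "pricing-sheet", "year": 2023, "title": "Pricing sheet 2023"},
    {"family": "pricing-sheet", "year": 2026, "title": "Pricing sheet 2026"},
    {"family": "security-whitepaper", "year": 2025, "title": "Security whitepaper"},
]

for doc in latest_versions(DOCS):
    print(doc["title"])
```

Older versions can still be archived outside the index for audit purposes; the point is only that they should not be retrievable as current answers.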

Pitfall 2: Treating RAG as a one-time project

RAG isn't software you install and forget. Your content changes, products evolve, competitors shift. Without ongoing maintenance, retrieval quality degrades as the knowledge base drifts from reality.

Budget for ongoing content audits (quarterly at minimum), feedback loop management, and retrieval tuning. Assign a content owner for each major content category.

Pitfall 3: No feedback mechanism

If users can't report bad answers easily, the system can't improve. We've seen deployments where reps quietly stop using the tool instead of reporting problems, because reporting was harder than asking Slack.

Make feedback frictionless. A thumbs-down button with an optional one-line note is enough. Route feedback to content owners, not the AI team.
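Routing can be as simple as a lookup from source document to owner. The sketch below is a minimal illustration; the report fields, owner names, and the fallback queue are all hypothetical.

```python
from collections import defaultdict

def route_feedback(reports, owners, fallback="enablement-lead"):
    # Group thumbs-down reports by the owner of the document that produced the answer.
    queues = defaultdict(list)
    for report in reports:
        owner = owners.get(report["source_doc"], fallback)
        queues[owner].append(report)
    return dict(queues)

OWNERS = {
    "Enterprise Pricing Sheet": "pricing-team",
    "Security Whitepaper": "security-team",
}

REPORTS = [
    {"source_doc": "Enterprise Pricing Sheet", "note": "Old tier names"},
    {"source_doc": "Security Whitepaper", "note": ""},
    {"source_doc": "Legacy Onboarding Deck", "note": "Who owns this?"},
]

for owner, items in route_feedback(REPORTS, OWNERS).items():
    print(owner, len(items))
```

The fallback queue matters: a report about an unowned document is itself a finding, since it exposes content nobody is responsible for.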

Pitfall 4: Ignoring access controls

Enterprise sales teams have legitimate content boundaries: regional pricing, confidential competitive intel, pre-announcement product details. A system that surfaces confidential information to the wrong people creates real risk.

Map your existing permission structure onto the RAG system before launch. Test with sensitive documents to verify access controls work as expected.
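A common pattern is to apply permissions before retrieval, so restricted passages never reach the ranking or generation steps at all. The following sketch assumes each passage carries a set of allowed roles; the role names and sample passages are hypothetical, and a real deployment would sync these from the source system's permissions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Passage:
    doc: str
    text: str
    allowed_roles: frozenset  # roles permitted to see this passage

def visible_to(passages, user_roles):
    # Keep a passage only if the user holds at least one allowed role.
    roles = set(user_roles)
    return [p for p in passages if p.allowed_roles & roles]

PASSAGES = [
    Passage("EMEA Pricing", "Regional pricing for EMEA accounts", frozenset({"emea-sales"})),
    Passage("Product FAQ", "General product questions and answers", frozenset({"emea-sales", "us-sales"})),
    Passage("Launch Brief", "Pre-announcement product details", frozenset({"product"})),
]

us_rep_view = visible_to(PASSAGES, {"us-sales"})
print([p.doc for p in us_rep_view])
```

Running the same query as users with different roles, using deliberately sensitive test documents, is a direct way to verify the filter before launch.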

Pitfall 5: Measuring the wrong things

"We indexed 10,000 documents" tells you nothing about value. Focus on outcomes: did RFP response time decrease? Are reps using the system instead of Slack? Did contradiction detection catch something real? Those are the questions that matter.

Where Mojar fits: honest positioning

We built Mojar because we experienced these problems firsthand building AI systems for enterprise teams. Transparency about where we add value, and where we don't, matters more than marketing claims.

What Mojar does well

Contradiction detection: Our maintenance agent actively scans for inconsistencies across your documents. When your sales deck claims "real-time processing" but product docs say "batch mode," we surface that conflict with both sources cited and a notification to the content owner.

Content maintenance automation: We don't just retrieve content. We help you maintain it. Automated flags for stale documents, references to deprecated features, and content that hasn't been reviewed surface before they cause problems.

Universal document support: PDFs (including scanned documents via OCR), Word, Excel, PowerPoint, Markdown, HTML, and more. Our hybrid parsing engine handles the messy reality of enterprise content, where critical information lives in a scanned contract PDF from 2019.

Source attribution on every answer: No black boxes. Every response shows exactly which documents and which passages informed it. Reps can verify any answer before using it on a call.

Where we're still building

Deep CRM integration: We have API access and basic integrations, but native Salesforce and HubSpot widgets that embed directly in the CRM workflow are on the roadmap. They aren't shipped yet.

Real-time external data: Connecting internal knowledge with live market intelligence, competitor pricing changes, and news feeds is a capability we're developing. It isn't a current strength.

Advanced analytics: Usage analytics are functional but not yet as rich as what dedicated enablement platforms like Highspot offer. We're investing here, but we're honest that it's not our strongest area today.

When Mojar isn't the right choice

- If you need robust sales analytics, content engagement tracking, and coaching workflows, Highspot or Seismic are better fits

- If you need a general-purpose AI assistant for tasks that don't require internal knowledge, ChatGPT or Claude work well

- If your content is well-organized and accurate, and your primary need is better content discovery within a single system, enterprise search tools like Glean may be sufficient

- If you have a large AI engineering team and highly specific integration requirements, building your own RAG pipeline might make sense

We're focused on a specific problem: making your internal knowledge trustworthy, findable, and consistent across teams. If that's your primary pain point, we should talk. If it's not, we'll tell you.

Getting started: a practical path forward

Questions to ask your team first

Before evaluating any vendor, answer these internally:

- Where does your team's knowledge actually live? Map every system: CRM, Drive, SharePoint, Confluence, Slack, email, local files. The answer is always "more places than we thought."

- What's the most expensive knowledge failure you've had in the last quarter? Make the cost concrete: a lost deal, a compliance issue, a rep quoting wrong pricing.

- Who owns content maintenance today? If the answer is "nobody" or "everyone," that's the root cause of your content quality problem.

- What's your highest-value use case? RFPs, onboarding, competitive intel, or content consistency. Pick one.

Questions to ask any vendor

- How do you handle source attribution? Can I trace every answer to the specific passage it came from?

- What happens when my documents contradict each other? Show me.

- How do you identify outdated content, and what does the fix workflow look like?

- Can I see a demo with my actual documents, not your curated examples?

- What access controls do you support? How do you handle documents with different permission levels?

- What happens when the system doesn't know the answer? Does it say so, or does it guess?
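It helps to have a reference behavior in mind when you put the attribution, access-control, and "does it guess?" questions to a vendor. The sketch below is a toy, not how any vendor (Mojar included) implements retrieval. `Passage`, `overlap_score`, `answer`, the 0.5 threshold, and the sample documents are all hypothetical, and simple word overlap stands in for real semantic search.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc: str                  # source document the passage came from
    text: str                 # the passage itself
    allowed_roles: frozenset  # roles permitted to see this passage


def overlap_score(question: str, passage: str) -> float:
    """Fraction of question words found in the passage (toy stand-in for semantic search)."""
    q = set(question.lower().split())
    p = set(passage.lower().split())
    return len(q & p) / len(q) if q else 0.0


def answer(question, passages, role, min_score=0.5):
    """Return the best visible passage with its source, or abstain if nothing supports an answer."""
    # Access control: only consider passages this role may read.
    visible = [p for p in passages if role in p.allowed_roles]
    best = max(visible, key=lambda p: overlap_score(question, p.text), default=None)
    # Abstain instead of guessing when no passage is a good enough match.
    if best is None or overlap_score(question, best.text) < min_score:
        return {"answer": None, "source": None, "note": "No supporting passage found."}
    return {"answer": best.text, "source": best.doc, "note": "Grounded in cited passage."}


# Made-up sample content, for illustration only.
passages = [
    Passage("pricing_sheet.pdf", "enterprise pricing starts at 50 dollars per seat",
            frozenset({"sales"})),
    Passage("onboarding.md", "new reps complete onboarding in two weeks",
            frozenset({"sales", "marketing"})),
]

print(answer("what is enterprise pricing", passages, role="sales"))
print(answer("what is enterprise pricing", passages, role="marketing"))
print(answer("what is the refund policy", passages, role="sales"))
```

In this toy setup, the sales role gets the pricing passage along with its source document. The marketing role, which cannot see the pricing sheet, and the unanswerable refund question both get an explicit "no supporting passage" result rather than a guess. That is the behavior to look for in a demo.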

A realistic timeline

| Phase | Timeline | What happens |
|---|---|---|
| Content audit and scoping | Weeks 1-2 | Inventory documents, identify pain points, pick pilot use case |
| Pilot deployment | Weeks 3-6 | 50-100 docs, 5-10 users, one workflow, baseline metrics |
| Pilot evaluation | Week 7 | Measure results, gather feedback, decide go/no-go |
| Expansion | Weeks 8-12 | Add documents, users, and workflows based on pilot data |
| Steady state | Ongoing | Quarterly content audits, feedback loops, continuous improvement |

The bottom line

Revenue teams are drowning in content they can't trust. "Is this the latest deck?" shouldn't require three Slack messages and a folder archaeology expedition to answer.

RAG technology offers a path forward, not by creating more content, but by making existing content trustworthy, findable, and consistent. The key differentiator between RAG solutions isn't retrieval speed or model sophistication. It's whether the system helps you maintain content quality over time, or just surfaces whatever mess already exists.

The organizations that invest in this now will have a compounding advantage: reps who find accurate answers in seconds, marketing teams confident their messaging is consistent, and leadership that can trust the information flowing through their revenue engine.

The organizations that don't will keep asking Slack.

Ready to see it in action? Request a demo with your actual documents, not our curated examples. We'll show you what your knowledge base looks like through Mojar's lens, contradictions and all.

Frequently asked questions

RAG (Retrieval-Augmented Generation) is an AI architecture that retrieves relevant content from your actual documents, such as sales decks, playbooks, product specs, and marketing assets, before generating a response. Every answer comes with source citations, so reps can verify where information came from instead of trusting a black box.

Traditional platforms like Highspot and Seismic store and organize content but cannot verify accuracy or detect conflicts between documents. RAG systems understand the meaning of your content, retrieve it semantically, and surface contradictions proactively. The difference is that RAG understands content, not just file locations.

RAG can detect contradictions between documents, and this is one of the most overlooked capabilities. When we deployed Mojar with enterprise sales teams, the system caught conflicting pricing across three different documents in the first week. Advanced RAG systems compare claims across your entire knowledge base and flag mismatches before prospects discover them.
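To make "compare claims and flag mismatches" concrete, here is a minimal sketch. It assumes claims have already been extracted from documents as (document, attribute, value) triples. That extraction step is the hard part in practice and is not shown. The function name `find_contradictions` and the sample claims are hypothetical, and this is not a description of how Mojar or any other product does it.

```python
from collections import defaultdict


def find_contradictions(claims):
    """Group claims by attribute and return the attributes with conflicting values.

    claims: iterable of (doc, attribute, value) triples.
    Returns {attribute: {value: [docs making that claim]}} for conflicts only.
    """
    by_attr = defaultdict(lambda: defaultdict(list))
    for doc, attr, value in claims:
        by_attr[attr][value].append(doc)
    # An attribute is contradictory when documents disagree on its value.
    return {attr: dict(values) for attr, values in by_attr.items() if len(values) > 1}


# Made-up claims, for illustration only.
claims = [
    ("campaign_brief", "analytics_mode", "real-time"),
    ("sales_deck", "analytics_mode", "batch"),
    ("product_docs", "sso_support", "yes"),
    ("sales_deck", "sso_support", "yes"),
]

conflicts = find_contradictions(claims)
for attr, values in conflicts.items():
    print(attr, values)
```

Only `analytics_mode` is reported here, because the two documents that mention `sso_support` agree. A real system also has to decide when two differently worded claims are about the same thing, which this exact-match sketch does not attempt.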

Our customers consistently report a 60-80% reduction in RFP response time. The savings come from eliminating document hunting: instead of searching through SharePoint, Google Drive, and Slack, reps ask a question and get a cited answer pulled from approved content in seconds.

ChatGPT has no access to your internal documents and will confidently fabricate pricing, features, and case studies. RAG grounds every response in your actual content with traceable source citations. When a rep asks about enterprise pricing, RAG returns your real pricing sheet, not a hallucinated number.

Basic RAG retrieves whatever exists, including stale content. Advanced RAG platforms include maintenance agents that flag outdated information, detect documents referencing deprecated features or old pricing, and identify content that contradicts newer sources. This maintenance layer is what separates useful deployments from liability risks.
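As a rough illustration of what flagging outdated content can mean, the sketch below marks a document as stale when it has not been modified within a chosen window or when it mentions a term you have listed as deprecated. Everything here is an assumption for illustration: the `flag_stale` function, the 180-day default, and the sample documents are hypothetical. Real maintenance agents would need far more than a date check and substring matching.

```python
from datetime import date


def flag_stale(docs, today, max_age_days=180, deprecated_terms=()):
    """Return {doc_name: [reasons]} for documents that look outdated.

    docs: {name: (last_modified: date, text: str)}
    """
    flags = {}
    for name, (last_modified, text) in docs.items():
        reasons = []
        # Age check: not touched within the allowed window.
        if (today - last_modified).days > max_age_days:
            reasons.append(f"older than {max_age_days} days")
        # Content check: case-insensitive match on deprecated terms.
        lowered = text.lower()
        for term in deprecated_terms:
            if term.lower() in lowered:
                reasons.append(f"mentions deprecated term '{term}'")
        if reasons:
            flags[name] = reasons
    return flags


# Made-up documents, for illustration only.
docs = {
    "old_deck": (date(2023, 12, 1), "Our Legacy Plan includes batch exports."),
    "pricing_sheet": (date(2024, 6, 1), "Current tiers and per-seat pricing."),
}

stale = flag_stale(docs, today=date(2024, 7, 1), deprecated_terms=["Legacy Plan"])
for name, reasons in stale.items():
    print(name, reasons)
```

Passing `today` explicitly keeps the check reproducible. In a scheduled audit you would pass `date.today()` instead.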

RAG scales well for enterprise deployments with thousands of documents across multiple teams. The key requirements are robust access controls, audit trails, integration with existing systems like CRM and DAM platforms, and the ability to handle multi-region content variations. Most enterprise challenges we encounter are organizational, not technical.

A focused pilot with 50-100 documents and 5-10 users can launch in two to three weeks. Full enterprise rollout across multiple teams and thousands of documents typically takes two to three months. The bottleneck is almost always content preparation and organizational alignment, not the technology.