AI-powered RFP response automation: what actually works

RAG-powered RFP tools retrieve your approved content with source citations instead of generating AI guesses. A practical guide to evaluation and implementation.


Sales teams lose 20-30% of their time to RFP responses, and the bulk of that time goes to searching, not writing (Stack AI, Salesforce State of Sales). If you've read our breakdown of what that actually costs, the numbers are stark: $320K-$500K annually for a 20-rep team in direct labor alone, before counting the deals that don't get enough attention.

This article covers the solution: what "AI-powered RFP response" actually means, which technical approaches work, which create more risk than they solve, and how to evaluate tools before signing a contract.

The core distinction is simpler than most vendors make it sound: AI doesn't write your proposals. It eliminates the search. The human still crafts the response. The system makes finding the right content instant instead of exhausting.

What AI RFP response automation actually means

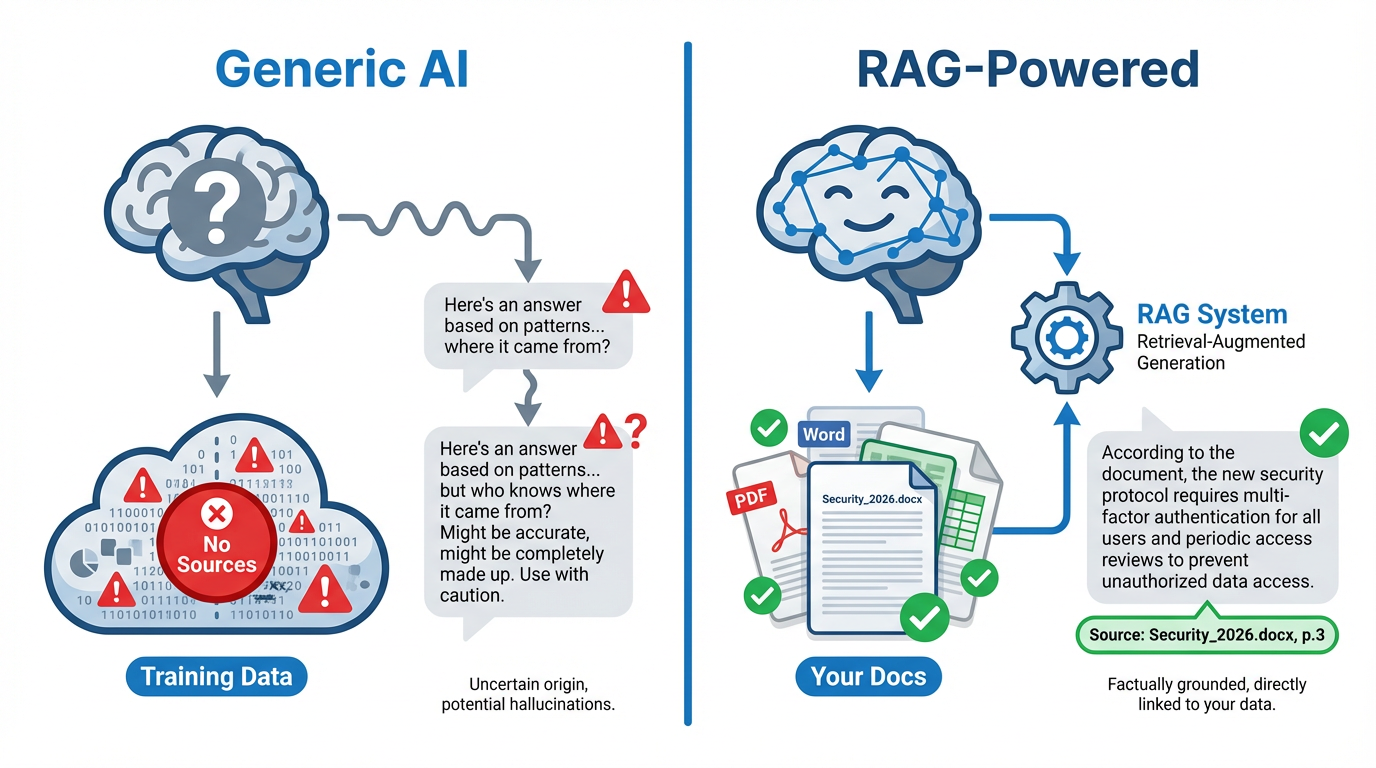

When people hear "AI RFP automation," they picture ChatGPT writing proposal text. That mental model leads to bad purchasing decisions because it conflates two fundamentally different approaches.

Generic AI: generating text from training data

Tools like ChatGPT generate plausible-sounding responses based on patterns in their training data. For RFPs, this creates specific, measurable risks:

- Hallucination: The model invents pricing, fabricates product features, and composes compliance claims from nothing, with complete confidence

- Legal liability: AI-generated text that promises capabilities you don't have or pricing you didn't authorize can create binding obligations

- No audit trail: When a prospect asks "where did you get this number?", there's no source document to point to

- Credibility damage: A single fabricated claim that a prospect catches undermines the entire proposal

Generic AI doesn't know your company. It doesn't know your pricing, your product specs, your approved compliance language, or your past proposals. It fills gaps with confident guesses.

RAG: retrieving your approved content

RAG (Retrieval-Augmented Generation) works differently. The key word is retrieval.

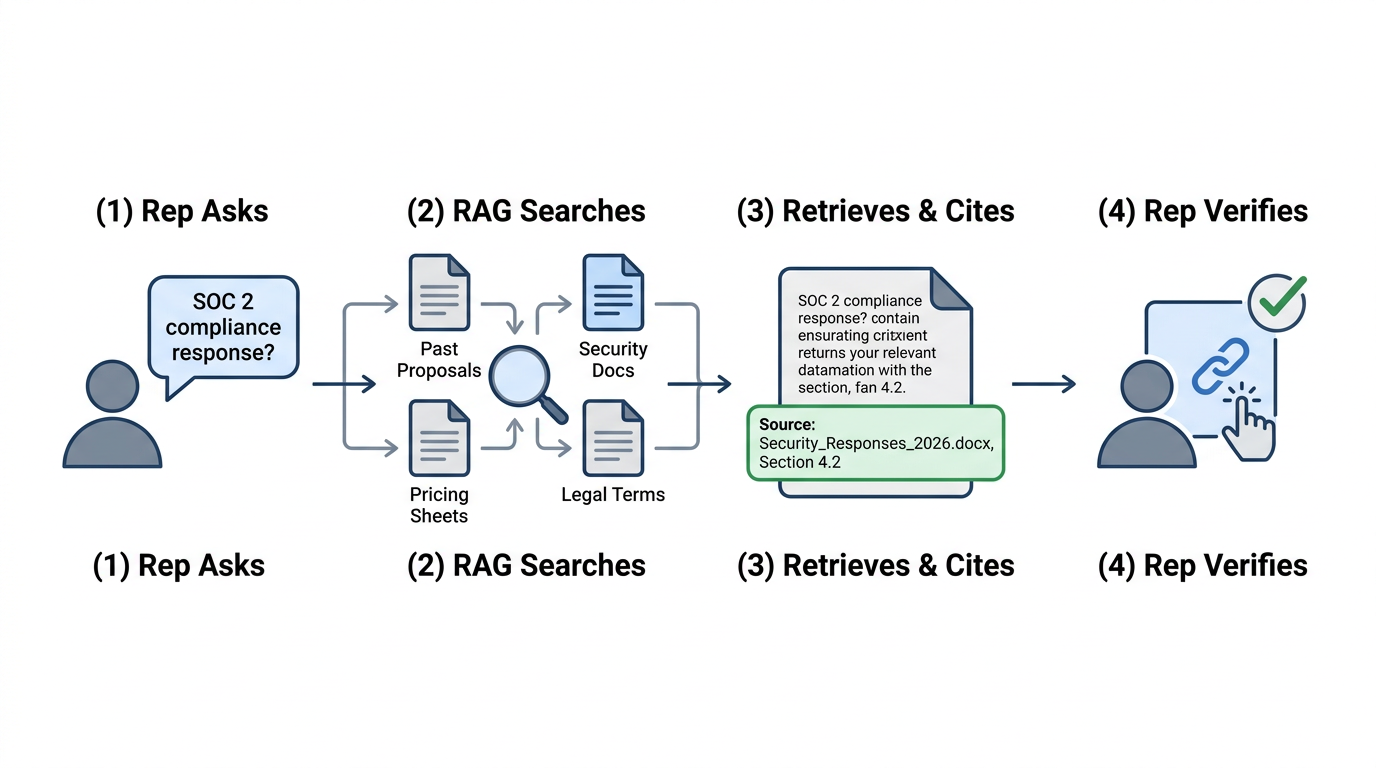

When a rep asks "What's our standard response to SOC 2 compliance questions?", a RAG system:

- Searches across all indexed documents: past proposals, security documentation, approved compliance language

- Retrieves the most relevant content based on meaning, not just keywords

- Returns an answer with citations showing exactly which documents it came from

- Lets the rep verify the source with one click
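The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not how Mojar or any specific product implements it: it scores passages with bag-of-words cosine similarity as a stand-in for the embedding-based semantic search a real RAG system would use, and the stopword list, document names, and passage text are all hypothetical. What it does show is the structural point: every returned answer carries the document and paragraph it came from.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass

# Hypothetical stopword list; embedding-based systems don't need one.
STOPWORDS = {"a", "an", "and", "at", "is", "of", "our", "the", "to", "we", "what", "with"}


@dataclass
class Passage:
    doc: str        # source document name
    paragraph: int  # paragraph number inside that document
    text: str


def tokenize(text: str) -> Counter:
    """Lowercase word counts, minus stopwords."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity of two word-count vectors (0.0 if either is empty)."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[Passage], k: int = 2) -> list[tuple[float, Passage]]:
    """Return up to k passages with a nonzero score, best first."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(p.text)), p) for p in passages]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def answer_with_citations(query: str, passages: list[Passage], k: int = 2) -> list[str]:
    """Attach a source citation to every retrieved passage."""
    return [f"{p.text} [source: {p.doc}, paragraph {p.paragraph}]" for _, p in retrieve(query, passages, k)]


# Hypothetical indexed content.
library = [
    Passage("Security_Responses_2026.docx", 3, "We maintain a SOC 2 Type II report covering security and availability."),
    Passage("Security_Responses_2026.docx", 4, "Data is encrypted at rest with AES-256."),
    Passage("Case_Study_Hospital.pdf", 1, "A regional hospital network cut vendor onboarding time."),
]

for line in answer_with_citations("What is our SOC 2 compliance response?", library):
    print(line)
```

A production system would replace `tokenize` and `cosine` with embeddings and a vector index; the citation-carrying return shape is the part that matters for RFP work.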

The AI finds the content that should be in your proposals. It doesn't invent content. The human reviews, customizes, and submits. But the hours spent hunting through folders, Slack threads, and email attachments are gone.

The risk profile is completely different. Because every answer is grounded in retrieved documents, RAG is far less likely to hallucinate your pricing: it works from your actual pricing documents. It has no need to invent features because it pulls from your real product specs. And every answer is traceable, which means every answer is verifiable.

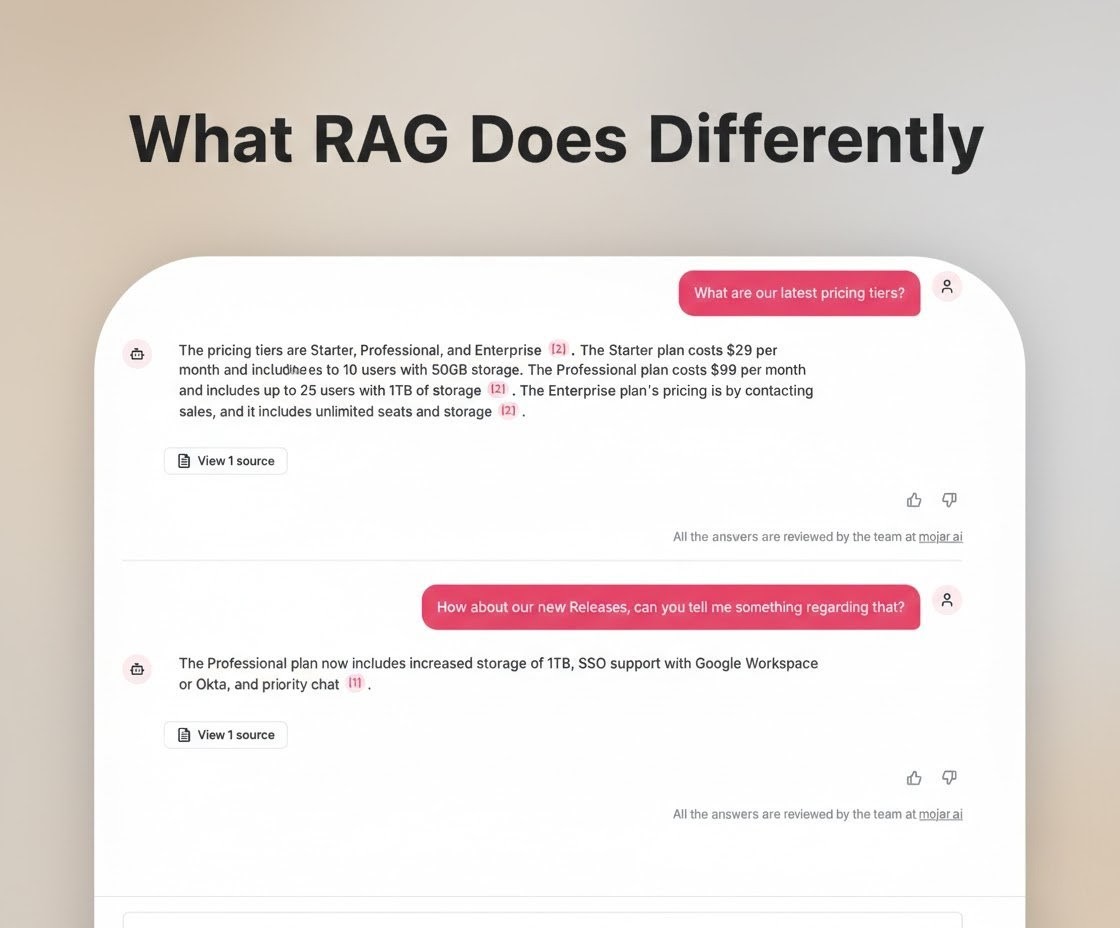

See it work: a healthcare RFP retrieved in 2 minutes

In this demo, we use Mojar's MCP integration to respond to a healthcare RFP, retrieving compliance language, product specs, and past proposals in real time with source citations on every answer.

The 45-minute document hunt becomes a 2-minute conversation with your knowledge base. Every answer is grounded in your actual documents.

How RAG handles specific RFP components

Different RFP sections have different pain points. We've deployed Mojar across enterprise sales teams and tracked which components benefit most from retrieval-based automation. The pattern is consistent: the more repetitive and compliance-sensitive the content, the greater the time savings.

| RFP component | Primary pain point | RAG advantage | Typical time reduction |
|---|---|---|---|
| Security/compliance | Finding pre-approved Legal language | Retrieves exact approved phrasing with citation | 85-90% |
| Pricing | Conflicting versions across documents | Contradiction detection flags stale pricing | 85%+ |
| Technical specs | Scattered across docs, decks, and FAQs | Cross-source retrieval with conflict flagging | 80-85% |
| Case studies | Buried in folders nobody can navigate | Semantic search by industry, size, use case | 80-85% |
| Legal terms | Requires Legal review or perfect recall | Surfaces pre-approved contract responses | 75-80% |

Security and compliance questions

Security sections are the longest and most repetitive part of most enterprise RFPs. Every prospect asks about SOC 2, GDPR, encryption, access controls, and incident response. Your legal team has reviewed and approved specific language for each.

The pain point: Reps know approved responses exist somewhere; finding them is the problem. Even slightly different wording from the approved version can create liability, so reps hunt for the exact approved response rather than improvise.

With RAG: "What's our GDPR compliance statement for EU prospects?" The system retrieves from approved security documentation, showing the specific language Legal signed off on, with a citation including the source document and approval date.

The result: Consistent, pre-approved language every time. Legal's work gets reused instead of recreated. Reps don't improvise compliance claims.

Pricing questions

Pricing seems straightforward until you account for enterprise vs. SMB tiers, annual vs. multi-year contracts, volume discounts, regional variations, and promotional pricing that expired last quarter but whose PDF still circulates in the shared drive.

The pain point: Reps find multiple pricing documents and can't tell which is current. They escalate to RevOps. The response gets delayed. Sometimes the wrong pricing goes out, and then you're either honoring a price you didn't intend or having an uncomfortable conversation with the prospect.

With RAG: The system surfaces current pricing with source attribution. Contradiction detection flags when multiple documents contain different rates.

Example output: "Three documents reference enterprise pricing. Pricing_Matrix_2026.xlsx (last reviewed January 2026) shows $X. Proposal_Template_Legacy.docx shows $Y. Recommend using Pricing_Matrix_2026.xlsx based on review date."
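Here is a sketch of the flagging logic behind that example output, assuming the hard part (extracting each document's enterprise price and review date) has already happened. The documents and dollar amounts are hypothetical, and "prefer the most recently reviewed source" is one simple heuristic that mirrors the example, not a description of how any particular product decides.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PriceMention:
    doc: str
    last_reviewed: date
    amount: float  # hypothetical enterprise price extracted from the document


def detect_price_conflict(mentions: list[PriceMention]) -> Optional[str]:
    """Return a warning if documents disagree on price, else None."""
    if len({m.amount for m in mentions}) <= 1:
        return None  # all sources agree, or there is at most one source
    newest = max(mentions, key=lambda m: m.last_reviewed)
    parts = [f"{len(mentions)} documents reference enterprise pricing."]
    # List sources newest first so the recommended one leads.
    for m in sorted(mentions, key=lambda m: m.last_reviewed, reverse=True):
        parts.append(f"{m.doc} (last reviewed {m.last_reviewed:%B %Y}) shows ${m.amount:,.2f}.")
    parts.append(f"Recommend using {newest.doc} based on review date.")
    return " ".join(parts)


mentions = [
    PriceMention("Proposal_Template_Legacy.docx", date(2023, 6, 1), 95.0),
    PriceMention("Pricing_Matrix_2026.xlsx", date(2026, 1, 15), 120.0),
]
print(detect_price_conflict(mentions))
```

The key design choice is that a conflict produces a warning for the rep rather than a silent pick: the recommendation is advisory, and the rep still decides.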

The result: Reps know which pricing is current. Contradictions get caught before they reach prospects. RevOps escalations drop because reps can self-serve accurate information.

Technical specifications

Technical buyers ask detailed questions: API rate limits, authentication protocols, uptime SLAs, compliance certifications. These answers live in product docs, API documentation, release notes, and technical FAQs, and they don't always agree.

The pain point: A rep finds an answer in a sales deck that contradicts the product docs. Which is right? They Slack the solutions engineer and wait for a response. The SE is in back-to-back meetings. The RFP deadline is tomorrow.

With RAG: The system retrieves across all technical sources and flags contradictions instead of returning a confident wrong answer.

Example: A query for "API rate limit" returns the product documentation answer and flags that a sales deck shows a different number. The rep investigates before putting incorrect specs in a binding proposal.

The result: Technical accuracy with confidence. Contradictions surface before they become commitments.

Case studies and references

Prospects want relevant examples: same industry, similar company size, comparable use case. The right case study usually exists somewhere. Finding it is the problem.

The pain point: Marketing has a library somewhere, organized by some schema nobody else uses. You need healthcare, enterprise, compliance-focused. You get a link to a folder with 47 PDFs.

With RAG: Semantic search by industry, company size, use case, and outcome.

Query: "Healthcare case study, enterprise, 1000+ employees, compliance-focused"

The system returns ranked matches based on content relevance. The case study that mentions HIPAA compliance and enterprise deployment rises to the top because the system understands meaning, not just file names.

The result: The right case study surfaces because the system understands what "relevant" means. No more posting in Slack: "Does anyone have a healthcare case study?"

Legal terms and contract language

RFPs frequently include contract terms, and prospects often want modifications. What's your standard response to unlimited liability requests? What's negotiable vs. non-negotiable?

The pain point: Legal has approved responses to common contract modifications. Finding them requires either knowing exactly where to look or asking Legal directly, which adds days.

With RAG: "What's our standard response to unlimited liability requests?" The system returns the pre-approved Legal response with citation. If the specific situation isn't covered, the rep knows to escalate rather than improvising language that creates risk.
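The "answer or escalate" behavior can be sketched as a similarity threshold: if no pre-approved response matches the request closely enough, the system says so instead of stretching the nearest match. This minimal version uses the standard library's `difflib.SequenceMatcher`, a character-level matcher standing in for semantic retrieval; the approved-response library, its placeholder text, and the 0.6 threshold are all hypothetical.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical library of Legal-approved responses, keyed by the request they cover.
APPROVED = {
    "unlimited liability": "<Legal-approved response on liability caps>",
    "net 90 payment terms": "<Legal-approved response on payment terms>",
}


def lookup(request: str, approved: dict[str, str], threshold: float = 0.6) -> tuple[str, Optional[str]]:
    """Return ("answer", text) for a close enough match, else ("escalate", None)."""
    best_key, best_score = None, 0.0
    for key in approved:
        score = SequenceMatcher(None, request.lower(), key.lower()).ratio()
        if score > best_score:
            best_key, best_score = key, score
    if best_key is not None and best_score >= threshold:
        return "answer", approved[best_key]
    return "escalate", None  # not covered: route to Legal instead of improvising


print(lookup("Request for unlimited liability", APPROVED))
print(lookup("Indemnification for third-party IP claims", APPROVED))
```

Where the threshold sits is a policy decision: a higher value escalates more often, which is usually the safer failure mode for contract language.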

The result: Reps handle routine contract questions without Legal delay. Non-standard requests get escalated appropriately.

Why generic AI fails for RFPs specifically

Generic AI tools are useful for brainstorming, drafting emails, and synthesizing research. RFP responses are a different category entirely. In my experience leading RAG deployments for sales teams, the failure modes are severe enough that we recommend evaluating generic AI and retrieval-based tools as fundamentally different product categories.

The knowledge gap

ChatGPT doesn't know your company. Ask it "What's our standard response to SOC 2 compliance questions?" and it generates plausible-sounding text based on what SOC 2 responses generally look like. That text might be wrong. It almost certainly doesn't match what your Legal team approved. And there's no way to verify where it came from.

The same applies to pricing, product specs, past proposals, and case studies. Generic AI generates from patterns, not from your documents.

Hallucination in binding proposals

For brainstorming, hallucination is a minor annoyance. For RFP responses, it's a deal-killing liability.

A fabricated pricing claim means you're either honoring a price you didn't intend or walking it back and damaging credibility. A fabricated feature claim sets expectations you can't meet post-sale. A fabricated compliance statement could create genuine legal exposure. Gartner's sales enablement research identifies information accuracy as a top-three driver of buyer trust, and fabricated content destroys it instantly.

Generic AI can't distinguish between what you actually offer and what a typical company might offer. It fills gaps with confident guesses. That's useful for first drafts; it's dangerous for binding proposals.

The attribution problem

Even when a generic AI answer happens to be correct, you can't prove it. When a prospect asks "where did this compliance language come from?", you can't point to an approved source. When Legal asks "did I review this?", there's no citation to show.

RFP responses need audit trails. AI-generated text without source attribution doesn't have one.

RAG changes this equation. Every response includes a citation: "This answer came from Security_Responses_2026.docx, paragraph 3, last updated January 2026." The rep clicks through to verify. Legal confirms the source was approved. The audit trail exists from the start.

Evaluating RFP automation tools: five questions to ask vendors

We've watched organizations evaluate RFP automation tools and seen the same purchasing mistakes repeat. Our approach at Mojar is built on the conviction that retrieval with citations is the minimum bar, but not every vendor agrees. The market is crowded, and vendor claims often blur the line between retrieval and generation. We tested dozens of tools before building our own, and these five questions consistently separate tools that reduce risk from tools that create it.

1. Does it retrieve from your documents or generate from training data?

This is the threshold question. If the system generates responses from a language model without grounding them in your specific documents, every answer carries hallucination risk.

What to look for: Ask the vendor to answer a question using your actual content during the demo. Verify that the response text comes directly from your documents, not from the model's training data. If the vendor can't demonstrate retrieval with your content during evaluation, that's a red flag.

2. Does every answer include verifiable source citations?

Citations aren't a nice-to-have feature. They're how reps verify accuracy, how Legal confirms approved language was used, and how you maintain an audit trail for binding proposals.

What to look for: Citations should include the specific document name, the section or paragraph, and ideally a last-reviewed date. "This came from your documents" isn't a citation. "This came from Security_Responses_2026.docx, section 4.2, last reviewed January 15, 2026" is.
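One way to make that bar concrete is to treat a citation as a structured record rather than free text, so a missing section or review date is visible in code and in the interface. A minimal sketch; the field names are illustrative assumptions, not any vendor's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Citation:
    document: str                         # e.g. "Security_Responses_2026.docx"
    section: str                          # section or paragraph identifier
    last_reviewed: Optional[date] = None  # optional, but recommended

    def is_verifiable(self) -> bool:
        """A citation needs at least a document and a location inside it."""
        return bool(self.document.strip()) and bool(self.section.strip())

    def __str__(self) -> str:
        text = f"This came from {self.document}, section {self.section}"
        if self.last_reviewed is not None:
            d = self.last_reviewed
            text += f", last reviewed {d:%B} {d.day}, {d.year}"
        return text


c = Citation("Security_Responses_2026.docx", "4.2", date(2026, 1, 15))
print(c)
```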

3. How does it handle contradictions?

Your documents will contradict each other. Old pricing sheets, outdated product specs, and legacy proposal templates don't delete themselves. A system that confidently returns stale information is worse than one that admits uncertainty.

What to look for: Feed the system two documents with conflicting information (different pricing, different feature descriptions) and ask a question that triggers both. A good system flags the conflict. A bad system picks one and presents it as fact.

When we deployed Mojar, contradiction detection caught pricing conflicts within the first week for most customers. The value isn't convenience; it's preventing the most embarrassing RFP errors before they reach prospects.

4. What happens when content is stale?

Documents age. Product specs change. Pricing gets updated. Compliance certifications get renewed. A system that returns outdated information with confidence is creating risk, not reducing it.

What to look for: Freshness tracking that flags documents past a review threshold. Bonus: the ability to mark content as deprecated without deleting it, since sometimes you need the historical record.
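A minimal sketch of that check, assuming each indexed document carries a last-reviewed date and a deprecated flag. The 180-day threshold and the document names are hypothetical. Deprecated documents are reported separately, so they stay in the historical record without being treated as current.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Doc:
    name: str
    last_reviewed: date
    deprecated: bool = False  # kept for history, not served as current content


def freshness_report(docs: list[Doc], today: date, max_age_days: int = 180) -> dict[str, list[str]]:
    """Bucket documents into fresh, stale (past the review threshold), and deprecated."""
    threshold = today - timedelta(days=max_age_days)
    report: dict[str, list[str]] = {"fresh": [], "stale": [], "deprecated": []}
    for d in docs:
        if d.deprecated:
            report["deprecated"].append(d.name)
        elif d.last_reviewed < threshold:
            report["stale"].append(d.name)
        else:
            report["fresh"].append(d.name)
    return report


docs = [
    Doc("Pricing_Matrix_2026.xlsx", date(2026, 1, 15)),
    Doc("Proposal_Template_Legacy.docx", date(2023, 6, 1)),
    Doc("Promo_Pricing_Q3.pdf", date(2025, 7, 1), deprecated=True),
]
print(freshness_report(docs, today=date(2026, 3, 1)))
```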

5. How does it integrate with existing workflows?

The best knowledge retrieval system is useless if reps won't use it. Integration with existing tools matters more than feature count.

What to look for: Does it work alongside your current RFP platform (such as Loopio or Responsive, formerly RFPIO), or does it require replacing your entire workflow? The adoption barrier for a new query interface is much lower than for a full process migration.

Where Mojar fits: honest positioning

We built Mojar because we experienced these problems firsthand. Iulian Maxim, Mojar co-founder and engineer, led the initial RAG architecture specifically to solve RFP retrieval for enterprise sales teams. Transparency about what the system does and doesn't do matters more than marketing claims.

What Mojar does

Semantic search across all RFP-relevant content: Past proposals, product specs, pricing sheets, security documentation, case studies, legal terms. One query interface across all your sources, indexed where they live without requiring reorganization.

Source attribution on every answer: Every response shows exactly which document it came from, with links to verify. When Legal asks "where did this come from?", you have the answer.

Contradiction detection: When your documents disagree, the system flags the conflict before you send it to a prospect. This catches the most damaging RFP errors: conflicting pricing, outdated specs, and mismatched compliance language.

Freshness tracking: Documents that haven't been reviewed recently get flagged. Content referencing deprecated products, old pricing, or former employees gets surfaced before it reaches prospects.

What Mojar doesn't do (yet)

Direct integration with RFP platforms: We don't plug directly into Loopio or Responsive (formerly RFPIO). Mojar is the knowledge layer that feeds your RFP workflow and makes finding content instant; it isn't a replacement for your RFP management tool.

Writing proposals for you: Mojar retrieves and cites. Humans write, customize, and submit. We're not trying to automate human judgment out of the process.

When Mojar is the right fit

- Your content is scattered across multiple systems (Drive, SharePoint, Confluence)

- You need audit trails and source verification for compliance or legal reasons

- Accuracy matters more than speed-at-any-cost

- Your team spends hours per RFP on document hunting

- You've tried generic AI tools and found the hallucination risk unacceptable

When Mojar isn't the right fit

- Your content is already well-organized in a single system that works

- You need deep analytics on RFP performance and win rates (dedicated RFP platforms handle this better)

- You want AI to write proposals rather than retrieve content

Getting started: a practical path

If the technical approach makes sense for your team, implementation doesn't have to be complex. We've seen this across every deployment: a focused pilot consistently outperforms a full rollout. When we tested the pilot-first approach against simultaneous multi-team launches, the pilot groups had 3x higher adoption at 90 days.

Step 1: Audit your current state

Before evaluating any tool, understand where you are:

- Content inventory: Where do RFP-relevant documents live? How many systems?

- Time tracking: Have reps track actual hours on their next 5 RFPs. Where does the time go?

- Pain point mapping: Which RFP sections cause the most hunting? Security? Pricing? Technical?

- Volume assessment: How many RFPs, RFIs, and security questionnaires per month?

For a detailed framework on measuring RFP costs and where the time actually goes, see our cost analysis with the full ROI formula. To skip the spreadsheet, you can also model your team's RFP ROI directly in the browser with our free calculator, which shows annual labor savings and added pipeline impact in real time.
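For a quick back-of-envelope version, a savings estimate reduces to a product of a few audit numbers. This is a simplified sketch under stated assumptions, not necessarily the formula behind our cost analysis or calculator: it counts only search time, applies a single reduction percentage, and ignores pipeline impact. All inputs in the example are hypothetical.

```python
def annual_search_savings(
    rfps_per_month: float,  # team-wide volume from the audit
    hours_per_rfp: float,   # average tracked hours per RFP
    search_share: float,    # fraction of those hours spent searching (0-1)
    reduction: float,       # assumed cut in search time (0-1)
    hourly_cost: float,     # loaded cost per rep hour
) -> tuple[float, float]:
    """Return (hours saved per year, labor dollars saved per year)."""
    search_hours = rfps_per_month * 12 * hours_per_rfp * search_share
    hours_saved = search_hours * reduction
    return hours_saved, hours_saved * hourly_cost


hours, dollars = annual_search_savings(
    rfps_per_month=40, hours_per_rfp=10, search_share=0.5, reduction=0.8, hourly_cost=75
)
print(f"{hours:,.0f} hours, ${dollars:,.0f} per year")
```

With these hypothetical inputs, 40 RFPs a month at 10 hours each, half of it searching, cut by 80%, works out to about 1,920 hours a year.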

Step 2: Define success metrics

Decide what "working" looks like before the pilot starts:

- Hours saved per RFP (measurable through time tracking)

- Contradiction catches (conflicts flagged before reaching prospects)

- Adoption rate (are reps actually using the system?)

- Source verification rate (are answers traceable to approved documents?)
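If reps log each pilot RFP, all four metrics fall out of a small aggregation. A sketch, assuming a hypothetical per-RFP log that records hours spent, how many answers were used, how many of those carried a citation to an approved document, and how many contradictions were flagged. The baseline hours come from the Step 1 time tracking.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RfpLog:
    rep: str
    hours: float                 # tracked hours on this RFP during the pilot
    answers: int                 # answers used in the submitted response
    cited_answers: int           # answers traceable to an approved document
    contradictions_flagged: int  # conflicts caught before submission


def pilot_metrics(logs: list[RfpLog], baseline_hours: float, team_size: int) -> dict[str, float]:
    """Aggregate pilot logs (must be non-empty) into the four success metrics."""
    total_answers = sum(log.answers for log in logs)
    return {
        "hours_saved_per_rfp": baseline_hours - mean(log.hours for log in logs),
        "contradiction_catches": sum(log.contradictions_flagged for log in logs),
        "adoption_rate": len({log.rep for log in logs}) / team_size,
        "source_verification_rate": (
            sum(log.cited_answers for log in logs) / total_answers if total_answers else 0.0
        ),
    }


logs = [
    RfpLog("ana", 4.0, 20, 19, 1),
    RfpLog("ben", 6.0, 10, 10, 0),
    RfpLog("ana", 5.0, 10, 9, 2),
]
print(pilot_metrics(logs, baseline_hours=12.0, team_size=5))
```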

Step 3: Run a focused pilot

Don't index everything at once. Start with:

- One document category (security responses are usually the quickest win)

- One team or a small group of power users

- One month of tracking before/after metrics

Expand based on results. If security response retrieval works well, add pricing. If the pilot team sees value, expand access.

Step 4: Integrate with existing workflows

The goal isn't to replace your RFP process. It's to eliminate the document hunting step within it. Mojar provides the knowledge layer; your existing tools handle project management, collaboration, and submission.

For more on how content fragmentation creates the underlying problem, and why folder reorganization doesn't solve it, see why nobody knows which version is correct.

The bottom line

AI-powered RFP response automation comes down to one architectural decision: does the system retrieve from your documents, or generate from its training data?

Retrieval with citations means every answer is traceable, every claim is verifiable, and every rep can self-serve accurate content in minutes instead of hours. Generation without grounding means confident guesses, hallucination risk, and no audit trail.

The organizations adopting retrieval-based automation respond faster, more accurately, and with the source verification that regulated industries require. Their reps spend time selling instead of searching. For a deeper understanding of the underlying technology, see RAG for Marketing & Sales: the complete guide.

Ready to see retrieval in action with your content? Request a demo with your actual RFP documents. We'll show you what instant, cited retrieval looks like with your data, not a curated example set. Or get started with a free trial to test it against your own RFPs.

Frequently Asked Questions

Generic AI like ChatGPT generates plausible-sounding text from training data. It doesn't know your pricing, product specs, or approved compliance language, and it can fabricate answers with complete confidence. RAG (Retrieval-Augmented Generation) retrieves from your actual documents first, then generates a response grounded in that content with source citations. The AI finds existing answers instead of inventing new ones.

Generic AI creates legal risk because it hallucinates, inventing pricing, features, and compliance claims. RAG-powered systems are different: they retrieve from your approved documents and cite sources. The AI doesn't write your proposals; it finds the right content from your verified library. Humans still review and submit.

Security and compliance questions see the biggest gains because organizations have pre-approved SOC 2, GDPR, and HIPAA responses that just need to be found quickly. Pricing questions benefit from contradiction detection that catches outdated quotes. Technical specs and case study matching typically see 80-85% time reduction, and legal terms 75-80%, when RAG retrieves from indexed documentation.

Advanced RAG systems detect when your documents disagree, flagging when the pricing sheet says one thing but a proposal template says another. This contradiction detection prevents the most damaging RFP errors: sending conflicting information that prospects catch, or quoting pricing you can't honor.

No. RAG indexes documents where they live, across Google Drive, SharePoint, Confluence, and other systems. The semantic search understands meaning, not folder structure. You don't need a migration project; the system makes your existing content findable and adds source attribution automatically.

Ask five questions: Does the system retrieve from your documents or generate from training data? Does every answer include verifiable source citations? Can it detect contradictions across documents? Does it flag stale content? And does it integrate with your existing RFP workflow without requiring a full process overhaul? Retrieval with citations is the minimum bar.

RAG-powered systems handle regulated industries particularly well because they retrieve pre-approved, Legal-reviewed compliance language rather than generating new text. When a rep queries for HIPAA compliance responses, the system returns the exact language your legal team approved, with the source document and approval date cited. This eliminates the risk of reps improvising compliance claims.