Outdated battlecards: why sales reps stop trusting them

Battlecards decay faster than teams can maintain them. We break down why reps abandon competitive intel and how RAG-powered systems catch staleness early.

Every sales organization has a battlecard graveyard: a folder full of decks launched at sales kickoff (SKO), emailed to the team, maybe covered in a training session, then quietly abandoned eighteen months later.

Competitor X has shipped two major releases since that launch, changed their pricing model, and added the integration your battlecard says they don't have. Your reps know. They stopped using the battlecards months ago.

When we first deployed Mojar with an enterprise sales organization, the system flagged 11 battlecard contradictions in the first 72 hours. Three of them referenced features the competitor had shipped more than a year earlier. The reps who had been using those cards on calls were not guessing; they were following the playbook. The playbook was wrong.

This post is our take on why outdated battlecards are a systems problem, not a discipline problem, and how RAG-powered knowledge systems actually break the decay cycle. I'm Iulian Maxim, co-founder at Mojar, and I've led dozens of enterprise deployments where this is the first thing the system catches.

The battlecard trust collapse

Sellers face competitors in 68% of deals (Crayon), yet most companies rate themselves only 3.8 out of 10 in competitive selling preparedness. That gap costs organizations an estimated $2-10 million annually in lost deals.

The problem isn't that companies don't create battlecards. They do. The problem is that battlecards decay, and the decay happens faster than anyone maintains them.

"The battlecard says they don't have SSO, but I just saw it on their website."

This is what the collapse sounds like. A rep, mid-deal, discovers the competitive intel they were given is demonstrably false. The prospect corrects them. The call goes sideways. The rep never trusts that battlecard again, and they tell their teammates.

Why reps abandon battlecards

When we talk to sales reps during deployment kickoffs, the same themes come up across every organization:

- "I stopped using battlecards after I got burned on a call."

- "Our competitive intel is from before their last product launch."

- "Who's responsible for updating competitive info? I honestly don't know."

- "I just ask [top performer] what to say about Competitor X."

These aren't complaints about the concept of battlecards. They're complaints about the reliability of the ones that exist. Reps want competitive intelligence. They just don't trust the current version.

According to research from G2, 65% of sales reps can't find relevant enablement content to send to prospects, not because it doesn't exist, but because they can't trust what they find. Only 10% of sales enablement content generates 50% of prospect engagement. The rest sits unused.

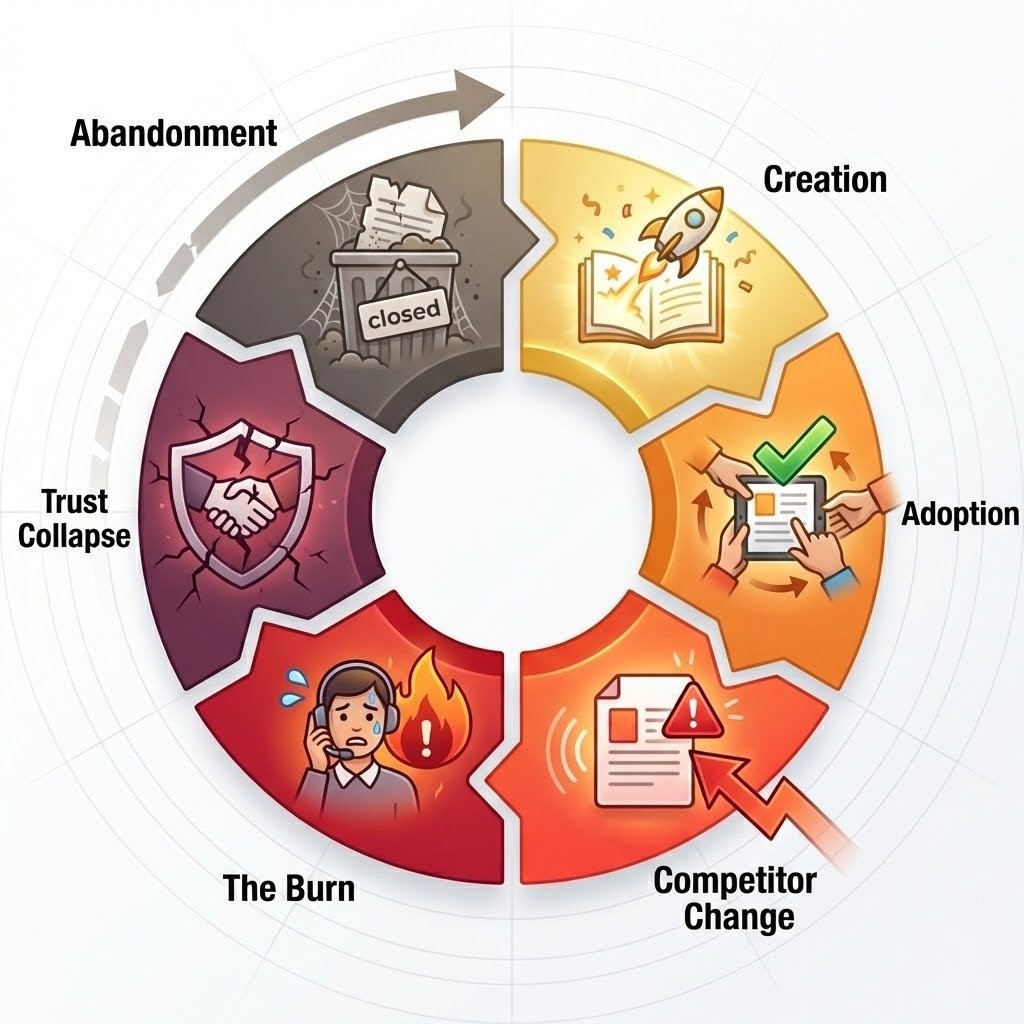

The battlecard decay cycle

Every battlecard we've audited follows the same predictable lifecycle. Understanding each stage matters for breaking the cycle, because the fix differs depending on where decay starts.

Stage 1: Creation (the fanfare)

Product Marketing creates comprehensive battlecards for top competitors. There's a launch meeting, an enablement training video, and a promise from leadership that this is the year competitive enablement gets fixed. Adoption is high in week one.

Stage 2: Initial adoption (the honeymoon)

Reps try the battlecards on a few calls. Some objection responses work. The positioning feels useful. Usage metrics look good in the first month, and everyone declares victory.

Stage 3: First competitor change (the crack)

Competitor X announces a new feature, the exact integration your battlecard claims they lack. Or they update their pricing page. Or they rebrand their enterprise tier.

Your battlecard doesn't change, because it doesn't know anything changed. In B2B SaaS, competitors ship new features weekly, update messaging monthly, and can pivot positioning overnight (Aeravision). Traditional quarterly battlecard reviews capture only static snapshots. The gap between reality and documentation starts growing immediately.

Stage 4: The burn moment (trust destruction)

A rep uses the outdated claim on a call. The prospect pulls up the competitor's website in real-time, right there during the meeting, and shows the feature your battlecard said doesn't exist.

The rep looks either uninformed or dishonest. Neither is accurate, but the damage is done. The deal might survive. The rep's trust in the battlecard won't.

Stage 5: Trust collapse (the spread)

That rep tells their teammates: "Don't trust the Competitor X battlecard, it's wrong about SSO." Word spreads through Slack, through hallway conversations, through the informal channels where real information flows. Within weeks, the entire team knows the battlecards can't be trusted.

Stage 6: Abandonment (the graveyard)

Battlecard usage drops to near zero. Reps develop workarounds: asking top performers, checking competitor websites themselves, improvising based on what they remember. The investment in creating the content yields nothing, and the battlecards join the graveyard.

The ownership vacuum

Ask who's responsible for maintaining battlecard accuracy and watch the finger-pointing begin.

Product Marketing created the battlecards. They own competitor positioning. However, they're busy with launches, messaging, and content for the next campaign. Maintenance isn't in their quarterly goals.

Competitive Intelligence monitors competitors. They see the changes. However, pushing updates to every affected document isn't their job: they deliver insights, not document edits.

Sales Enablement owns the platform where battlecards live. They track usage, manage access, and run trainings. However, they don't own content accuracy; that's Product Marketing's domain.

The result is that "everyone" owns battlecard maintenance, which means no one does. Changes happen to competitors. Changes don't happen to battlecards. The gap widens until the content is useless.

According to Crayon's State of CI research, 52% of compete programs lack a sales executive sponsor, and less than one-third engage with sales daily or weekly. When competitive intelligence operates in isolation from the sales team using it, battlecard decay is inevitable.

The real-world consequences

Outdated battlecards don't sit there harmlessly. They actively damage deals and team effectiveness in four specific ways we've seen first-hand across deployments.

Credibility destruction

Your rep claims Competitor X doesn't support the integration the prospect needs. The prospect checks the competitor's website during the call. The integration is featured prominently, launched three months ago.

Your rep looks like they either didn't do their homework or deliberately misled the prospect. Neither interpretation helps you win the deal, and both follow the rep into future calls.

Lost deals to stale differentiation

When competitive positioning is outdated, reps can't effectively differentiate. They're fighting the competitor that existed six months ago, not the competitor that exists today. They emphasize advantages that are no longer unique, and they ignore new weaknesses they don't know about.

The competitor's current sales team, armed with accurate information, has an advantage your team doesn't realize exists.

The hearsay culture

When battlecards can't be trusted, reps develop rational workarounds that create their own problems:

- Slack the top performer: "Hey Sarah, what do you say when Competitor X comes up?"

- Check the website yourself: duplicating research effort across the team

- Wing it: making claims based on what seems right, hoping they're accurate

Sarah becomes a bottleneck. Individual research duplicates effort and misses context. Winging it leads to exactly the credibility problems you're trying to avoid.

Wasted investment

The money spent creating battlecards (agency fees, internal hours, launch training) generates zero return when the content isn't used. The next time Product Marketing proposes creating competitive content, leadership remembers that the last batch sits unused in a folder nobody touches. Budget for competitive enablement gets harder to defend each cycle.

What actually works: continuous validation

The fix isn't better quarterly reviews. The fix is continuous validation: systems that catch contradictions before reps encounter them on calls.

When properly implemented with ongoing maintenance, battlecards can increase competitive win rates by 54% (Crayon). The battlecard concept works; the maintenance model is what breaks.

Why quarterly reviews fail

Traditional battlecard maintenance is reactive: wait until someone reports a problem, then fix it. By the time that happens, multiple reps have already been burned and trust has already collapsed.

We recommend a proactive model built on four capabilities:

- Continuous monitoring: track when competitor materials change, not when a rep notices something is off

- Contradiction detection: flag when your claims conflict with the competitor's current public materials

- Staleness alerts: automatically surface content that hasn't been reviewed within a set number of months

- Cross-document validation: ensure your own materials are consistent about competitors, not contradicting each other

This isn't about working harder at maintenance. It's about building systems that make maintenance visible and unavoidable.
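As a rough illustration of the contradiction-detection capability, here is a minimal Python sketch. It assumes battlecard claims have already been reduced to simple "competitor supports feature: yes/no" records and that someone (or something) maintains a table of currently observed public facts. The `Claim` schema, the `find_contradictions` helper, and the sample data are all hypothetical and are not a description of how Mojar or any other product implements this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One factual statement from a battlecard (hypothetical schema)."""
    card: str
    competitor: str
    feature: str
    supported: bool  # what the battlecard says about the competitor


def find_contradictions(claims, observed):
    """Return claims that disagree with the observed public facts.

    `observed` maps (competitor, feature) -> bool. Features with no
    observation are skipped rather than guessed.
    """
    conflicts = []
    for claim in claims:
        key = (claim.competitor, claim.feature)
        if key in observed and observed[key] != claim.supported:
            conflicts.append(claim)
    return conflicts


# Illustrative data only.
claims = [
    Claim("Competitor X Battlecard v3", "Competitor X", "SSO", False),
    Claim("Competitor X Battlecard v3", "Competitor X", "SCIM", False),
    Claim("Competitor X Battlecard v3", "Competitor X", "Audit logs", True),
]
observed = {
    ("Competitor X", "SSO"): True,  # e.g. now listed on their public site
    ("Competitor X", "Audit logs"): True,
}

for c in find_contradictions(claims, observed):
    print(f"CONFLICT: {c.card} says supported={c.supported} for {c.feature}")
```

The sketch deliberately skips claims it has no observation for (SCIM here), since a missing data point is not a contradiction. Turning free-text battlecards into structured claims is the hard part in practice and is out of scope for this example.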

Continuous vs. quarterly maintenance, side by side

A quick reference for what changes when you move from calendar-based audits to continuous validation:

| Capability | Quarterly review model | Continuous validation model |
|---|---|---|
| Competitor monitoring | Manual scan once per quarter | Ongoing tracking of public materials |
| Contradiction detection | When a rep gets burned on a call | When the system flags the conflict |
| Staleness awareness | Age of the file | Last-reviewed date + automated alerts |
| Ownership trigger | Ambiguous, often no one | System routes the alert to an owner |
| Rep-level trust | Decays across the quarter | Restored as alerts resolve |
| Cost of a miss | Lost deal, rep credibility | Internal ticket, before the call |

Every enterprise team we've onboarded has started in the left column and migrated toward the right. The migration isn't technical; it's organizational, and the tooling is the forcing function.

Where RAG and AI change the equation

This is where Mojar fits, and where our approach differs from traditional enablement platforms.

Contradiction detection across your content

Mojar doesn't just store battlecards. Our platform analyzes claims across documents and flags when they conflict with each other, or when they conflict with what competitors now say publicly.

"Your battlecard says Competitor X doesn't support SSO, but their website now features it prominently."

This surfaces the problem before a rep uses the outdated claim on a call. The contradiction shows up as a system alert, not as a failed customer meeting.

This capability is part of how Mojar approaches detecting conflicting sales messaging across your entire content library.

Automated staleness alerts

When a battlecard hasn't been reviewed in three months, it gets flagged automatically. When it references a competitor's old product name, deprecated features, or pricing from two years ago, the system catches it. No one has to remember to audit. The system surfaces what needs attention, and maintenance becomes visible instead of invisible.
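One piece of this, catching references to retired product names or features, can be approximated with a plain text scan. The Python sketch below assumes a hand-maintained list of deprecated terms per competitor. The term list, the `find_deprecated_references` helper, and the sample card text are invented for illustration and do not reflect any real product's behavior.

```python
import re

# Hypothetical retired names and features for one competitor. In practice this
# list would be maintained by whoever owns that competitor's battlecard.
DEPRECATED_TERMS = {
    "Competitor X": ["Starter Plus", "Legacy Connector"],
}


def find_deprecated_references(card_text, competitor, deprecated=DEPRECATED_TERMS):
    """Return the deprecated terms that appear in a battlecard's text."""
    hits = []
    for term in deprecated.get(competitor, []):
        # Whole-phrase, case-insensitive match so "starter plus" is caught too.
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, card_text, flags=re.IGNORECASE):
            hits.append(term)
    return hits


card = (
    "Competitor X sells three tiers. Their starter plus tier lacks SSO, "
    "and the Legacy Connector is the only way to sync CRM data."
)
print(find_deprecated_references(card, "Competitor X"))
```

A keyword scan like this only catches terms someone has already marked as deprecated, so it complements a review-date check and does not replace it.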

Cross-document consistency

"Your sales deck says we're 2x faster than Competitor X. Your battlecard says 3x. Your website says 'significantly faster' with no number."

These inconsistencies exist in most content libraries. They're invisible until a prospect notices them, or until a system surfaces them proactively. Mojar's analysis spans your entire document set, catching when materials disagree about competitive positioning, product claims, or feature comparisons.
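The "2x vs. 3x vs. no number" example above can be reproduced with a few lines of Python. This is a minimal sketch under a narrow assumption: speed claims are phrased as "Nx faster". The regular expression, the helper names, and the sample documents are hypothetical, and a production system would need far more robust claim extraction than a single pattern.

```python
import re

# Matches multipliers such as "2x faster" or "2.5x faster".
SPEED_CLAIM = re.compile(r"(\d+(?:\.\d+)?)\s*x\s+faster", re.IGNORECASE)


def extract_speed_claims(documents):
    """Map each document name to the numeric speed multipliers it states."""
    return {
        name: [float(m) for m in SPEED_CLAIM.findall(text)]
        for name, text in documents.items()
    }


def check_consistency(documents):
    """Return (distinct multipliers, docs that claim speed without a number)."""
    claims = extract_speed_claims(documents)
    distinct = sorted({value for values in claims.values() for value in values})
    unquantified = sorted(
        name for name, text in documents.items()
        if not claims[name] and "faster" in text.lower()
    )
    return distinct, unquantified


# Illustrative documents mirroring the example in the text.
documents = {
    "sales_deck": "We are 2x faster than Competitor X on import jobs.",
    "battlecard": "Lead with speed: 3x faster than Competitor X.",
    "website": "Significantly faster than Competitor X.",
}

distinct, unquantified = check_consistency(documents)
if len(distinct) > 1:
    print(f"Inconsistent multipliers across documents: {distinct}")
if unquantified:
    print(f"Speed claim without a number: {unquantified}")
```

More than one distinct multiplier signals a conflict to reconcile. An unquantified claim is not a contradiction by itself, but it is worth reviewing alongside the numeric ones.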

We've seen the same pattern cause chaos in sales deck version control and in RFP responses under deadline pressure.

What a contradiction alert actually looks like

When a rep asks Mojar a competitive question, the response is grounded in specific documents and timestamps, with conflicts surfaced inline. The output below is an example alert from a real battlecard query during a recent deployment (names and numbers abstracted):

    Query: "Does Competitor X support SSO for enterprise customers?"

    Answer: According to our internal materials, Competitor X does not
    support SSO. However, a conflict was detected with public sources.

    Sources:
    → Competitor X Battlecard v3 (Last reviewed: Aug 12, 2024)
    → Competitive Deep Dive, Q3 2024 section (Last reviewed: Sep 2024)

    ⚠️ Conflict detected:
    → Competitor X security page (public) references "SAML SSO + SCIM"
      as of a crawl timestamp in the current quarter.
    → Review action routed to Product Marketing owner.
    → Battlecard flagged as "do not use" until reconciled.

That "do not use" flag is the step traditional search can't replicate. The rep doesn't just get an answer; they get proof it's current, or a warning that it isn't.

Real-time monitoring (vision)

We're building toward live competitive monitoring, connecting battlecard claims with ongoing competitor intelligence. The goal is battlecards that surface maintenance needs when competitors announce changes, not months later when a rep discovers the problem on a call. This capability is in development. We mention it because it's the direction the category needs to go, not because it ships today.

What we've learned deploying with revenue teams

When we built Mojar's cross-document analysis pipeline, our initial assumption was that most version conflicts would live in sales decks. We were wrong. The worst contradictions lived in competitive battlecards and technical spec sheets, content types that change frequently and have the broadest distribution across teams.

In one deployment with a B2B SaaS company, Mojar flagged 14 pricing contradictions, 8 deprecated feature references, and 3 instances of completely conflicting competitive positioning within the first week. The VP of Sales told us they had been aware of "some version issues" but had no idea of the scale. Their newest reps had been using a playbook that referenced a product tier discontinued nine months earlier.

Our data from more recent enterprise rollouts is consistent: the first 7 days of a Mojar deployment typically surface 10-25 battlecard-level contradictions that the team didn't know existed. The ratio of "we knew about this" to "we had no idea" is roughly 1 to 4.

What we're honest about

We're not a replacement for full sales enablement suites (Highspot, Seismic) if you need content engagement analytics and training delivery. We're also not a replacement for comprehensive competitive intelligence platforms (Crayon, Klue) if you need win/loss analysis and full CI program management.

RAG also doesn't fix the approval bottleneck. If updating a battlecard requires sign-off from Product, Legal, and Marketing, that's still a people process. What our platform does is make the bottleneck visible: the system flags stale content, and humans decide when and how to update it.

What we do well, and what traditional tools can't do, is catch when your existing content contradicts itself, surface staleness before reps encounter it, and ensure your competitive claims are consistent across documents. If your battlecards exist but can't be trusted, that's the problem we solve.

A short checklist for breaking the decay cycle

If you're not ready for new tooling yet, here's a lightweight template you can run with any team this quarter:

- Inventory: list every battlecard, where it lives, and the last time it was reviewed.

- Flag the oldest: anything unreviewed for more than 90 days is presumed stale.

- Cross-check three claims per card: pick claims about features, pricing, and integrations. Verify each against the competitor's public site.

- Assign one owner per competitor: a single named person, not a team.

- Set a weekly 15-minute review: short, recurring, and calendared. Not quarterly.

- Log every contradiction found: a simple spreadsheet is fine. The point is to make the decay visible.

This is a manual version of what a RAG-powered system automates. Running it once is a useful audit. Running it every week is usually where teams decide they need a system to take over.
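If the inventory lives in a spreadsheet, the first, second, and fourth checklist items can be scripted in a few lines. The Python sketch below uses the 90-day threshold from the checklist. The inventory rows, owner names, and the `audit` helper are hypothetical placeholders for whatever your export actually contains.

```python
from datetime import date

STALE_AFTER_DAYS = 90  # threshold taken from the checklist

# Hypothetical inventory rows: (card name, named owner, last review date).
inventory = [
    ("Competitor X Battlecard", "dana", date(2024, 8, 12)),
    ("Competitor Y Battlecard", "lee", date(2024, 11, 20)),
    ("Competitor Z Battlecard", None, date(2024, 3, 1)),
]


def audit(inventory, today):
    """Return stale cards (oldest first) and cards with no named owner."""
    stale = sorted(
        (
            ((today - reviewed).days, name)
            for name, _owner, reviewed in inventory
            if (today - reviewed).days > STALE_AFTER_DAYS
        ),
        reverse=True,
    )
    unowned = [name for name, owner, _reviewed in inventory if owner is None]
    return stale, unowned


stale, unowned = audit(inventory, today=date(2024, 12, 1))
for age_days, name in stale:
    print(f"STALE ({age_days} days since review): {name}")
for name in unowned:
    print(f"NO OWNER: {name}")
```

Passing `today` explicitly keeps the audit reproducible; in a weekly run you would pass `date.today()` instead.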

Breaking the cycle

Your battlecards aren't useless because your team doesn't care about competitive intelligence. They're useless because the maintenance model doesn't match the pace of competitive change. Competitors ship weekly. Battlecard reviews happen quarterly. The math doesn't work.

The fix requires systems that make maintenance continuous, automatic, and visible. Systems that catch contradictions before reps encounter them. Systems that surface staleness before it burns deals.

Your reps want to use competitive intelligence. They just want intelligence they can trust.

Next steps

- See the broader pattern: read our guide on catching conflicting sales messaging before prospects do for how contradiction detection works across your content library.

- See the same problem in a different content type: our breakdown of sales deck version control applies the same pattern to revenue-team decks.

- Learn the full RAG landscape: our complete guide to RAG for marketing and sales explains how retrieval-augmented generation works and where it fits.

Ready to find your contradictions? Book a demo with your actual battlecards, and we'll show you what's contradicting itself, what's stale, and what claims might not survive a prospect's Google search. If you'd rather explore the technology first, you can try Mojar with your own documents and see what surfaces in the first hour.

Frequently Asked Questions

How do you keep battlecards up to date?

Shift from periodic manual audits to continuous monitoring. Use systems that automatically flag when competitor websites contradict your battlecard claims, alert you when content hasn't been reviewed in set timeframes, and detect when your own documents conflict about competitor positioning. Manual quarterly updates can't keep pace with competitors who ship weekly.

Why do sales reps stop trusting battlecards?

Reps stop trusting battlecards after getting burned on a call: they use an outdated claim, and the prospect corrects them with the competitor's own website. Once a rep looks uninformed in front of a buyer, they will not trust that battlecard again. Word spreads fast ("Don't use the Competitor X card, it's wrong"), and trust collapses across the team.

Who is responsible for keeping battlecards accurate?

This is precisely why battlecards decay: ownership is unclear. Product Marketing typically creates them, Competitive Intelligence monitors competitors, and Sales Enablement owns the delivery platform. When everyone owns maintenance, no one does. Successful programs assign explicit ownership with defined review cadences and automated staleness alerts.

How quickly do battlecards go out of date?

In B2B SaaS, competitors ship new features weekly, update messaging monthly, and can pivot positioning overnight. Traditional quarterly battlecard reviews capture only static snapshots, creating strategic blind spots. By the time your quarterly review happens, your battlecards may already be several product releases behind.

What happens when a rep uses an outdated battlecard on a call?

The immediate impact is credibility destruction: the prospect corrects your rep using the competitor's own website. The rep looks unprepared at best, dishonest at worst. Beyond that deal, the rep tells teammates the battlecard is wrong, trust collapses across the team, and battlecard usage drops to near zero.

Can AI help keep battlecards current?

Yes. AI-powered systems can continuously monitor for contradictions between your battlecard claims and competitors' public materials, flag content that hasn't been reviewed within set timeframes, and detect when your own documents conflict about competitor positioning. This shifts maintenance from reactive (finding problems after deals are lost) to proactive (catching issues before reps encounter them).